Device can test multiple cancer drugs in tumors

Image courtesy of Presage Biosciences

A device that tests multiple cancer drugs in living tumor tissue could guide treatment selection in patients with lymphoma and other cancers, according to researchers.

They also believe the device, called CIVO, could help speed up drug development by testing the efficacy of candidate drugs in very small doses while sparing patients side effects.

CIVO is a handheld microinjection platform that can deliver small doses of up to 8 drugs or combinations of drugs into a tumor.

The device proved effective for testing multiple cancer drugs in xenograft mouse models, dogs, and humans with lymphoma.

Richard Klinghoffer, PhD, of Presage Biosciences in Seattle, Washington, and his colleagues described their research with CIVO in Science Translational Medicine. The research was funded by Presage Biosciences, the National Institutes of Health, and Seattle Children’s Hospital Neuro-Oncology Fund.

About CIVO

CIVO is designed for tumors near the skin surface, such as lymphoma or skin and breast cancer.

The technology enables the placement of multiple columns of drugs directly into the tumor along the needle axis, spanning the full depth of the tumor. This makes it possible to assess drug effects with multiple biomarkers and in multiple regions along the injection axis to capture the heterogeneity of response within the tumor.

Later (typically 24 to 72 hours after injection), the tumor is resected for subsequent analysis, and responses are measured with multiple immunohistochemistry-based assays and high-resolution scanning.

Results in mice

In xenograft lymphoma models, CIVO microinjection of well-characterized anticancer agents (vincristine, doxorubicin, mafosfamide, and prednisolone) induced spatially defined cellular changes around sites of drug exposure, specific to the known mechanisms of each drug. And the observed localized responses predicted responses to the same drugs systemically delivered in animals.

In pair-matched drug-resistant and drug-sensitive lymphoma models, CIVO correctly demonstrated tumor resistance to doxorubicin and vincristine.

The researchers also identified an unexpected enhanced sensitivity to the active form of cyclophosphamide in multidrug-resistant lymphomas compared with chemotherapy-naïve lymphomas.

And a CIVO-enabled in vivo screen of oncology agents revealed that a novel mTOR pathway inhibitor exhibits significantly increased tumor-killing activity in the drug-resistant setting compared with chemotherapy-naïve tumors.

Results in dogs and humans

Dogs with lymphoma showed no toxicity when injected with drugs via CIVO. And the researchers said they observed robust, easily tracked, drug-specific responses in the animals.

For lymphoma patients, the researchers used CIVO to inject microdoses of vincristine into the tumors in patients’ lymph nodes. Cells surrounding the injections died, and there were no serious adverse events, although patients did report mild discomfort.

“This analysis creates a comprehensive portrait of drug response that has never been seen before this early in the drug development process,” Dr Klinghoffer said. “Using this technology, we can assess how drugs, both as single agents and in combinations, impact the biology of tumor cells in the context of the native tumor microenvironment.”

“[T]ranslation of CIVO to the clinical setting has enabled assessment on all aspects of tumor biology, including drug effects on tumor-infiltrating immune cells. This sets the stage for a new type of pre-phase 1 clinical study in which multiple drugs or drug combinations can be tested simultaneously, directly in a patient’s own tumor, without toxicity associated with systemic drug delivery.”

CCSs more likely to claim social security support

Photo courtesy of Huntsman Cancer Institute

A new study indicates that childhood cancer survivors (CCSs) are more likely than individuals without a cancer history to enroll in federal programs that provide disability benefits.

CCSs diagnosed between 1970 and 1986 were about 2 to 5 times as likely as control subjects to utilize such a program.

“The long-term impact of cancer can affect other issues besides health outcomes,” said study author Anne Kirchhoff, PhD, of the Huntsman Cancer Institute at the University of Utah.

“We need to do a better job of helping people function throughout their lives, not just when they’re finishing their cancer therapy.”

Dr Kirchhoff and her colleagues conducted this research and detailed the results in the Journal of the National Cancer Institute.

The researchers looked at health insurance surveys completed in 2011 and 2012 by a random sample of 698 CCSs who were diagnosed between the ages of 0 and 20 years. Today, they range in age from their 20s to their early 60s.

The patients are part of a National Cancer Institute initiative, called the Childhood Cancer Survivor Study, which since 1994 has followed more than 14,000 people who were diagnosed with cancer as children or adolescents and survived at least 5 years after diagnosis. A comparison group of 210 siblings without cancer also responded to the survey and served as controls.

Dr Kirchhoff and her colleagues looked at current or former enrollment in 2 federal disability programs:

- Supplemental security income (SSI), which is for people with limited income who have no prior work history

- Social security disability insurance (SSDI), which pays disability benefits to adults ages 18 years and older who have worked and paid social security taxes.

In all, 13.5% of CCSs reported being enrolled in SSI in the past or present, and 10% of survivors reported being enrolled in SSDI at some point. These rates were substantially higher than in the comparison group, in which 2.6% of siblings reported SSI enrollment and 5.4% reported SSDI enrollment.

In addition, CCSs reported current enrollment in SSI more frequently than the US population, at rates of 7.3% and 2.5%, respectively.
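To see how the reported percentages relate to the "about 2 to 5 times as likely" figure, the short sketch below divides each survivor rate by its comparison rate. It is only illustrative arithmetic on the numbers quoted in this article: the function name `enrollment_ratio` and the data layout are assumptions made here, and the crude ratios are not the adjusted estimates from the published analysis.

```python
# Percentages as quoted in the article (ever enrolled, past or present).
reported = {
    "SSI": {"survivors": 13.5, "siblings": 2.6},
    "SSDI": {"survivors": 10.0, "siblings": 5.4},
}


def enrollment_ratio(survivor_pct, comparison_pct):
    """Crude ratio of two enrollment percentages (hypothetical helper, no adjustment)."""
    return survivor_pct / comparison_pct


# Survivors versus siblings, rounded to one decimal place.
ratios = {
    program: round(enrollment_ratio(rates["survivors"], rates["siblings"]), 1)
    for program, rates in reported.items()
}

# Current SSI enrollment: survivors (7.3%) versus the US population (2.5%).
current_ssi_ratio = round(enrollment_ratio(7.3, 2.5), 1)

print(ratios)             # {'SSI': 5.2, 'SSDI': 1.9}
print(current_ssi_ratio)  # 2.9
```

The crude ratios of roughly 1.9 for SSDI and 5.2 for SSI are in line with the 2-to-5-fold range cited above.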

Dr Kirchhoff and her colleagues also identified survivor socio-demographic and treatment characteristics that were associated with a higher rate of enrollment in federal support programs.

“Survivors that were younger at diagnosis, age 4 or under, were about 7 times more likely to be on SSI than we see with survivors that were diagnosed in their adolescence,” she said.

SSI enrollment was more likely for female CCSs and for survivors with a history of cranial radiation treatment as well.

Dr Kirchhoff noted that, over the years, research on CCSs has caused hospitals to rethink how to better care for cancer survivors.

“There’s really a growing strategy to support survivors in the long-term,” she said. “For example, here at Huntsman Cancer Institute, we have a pediatric cancer late-effects clinic, which helps manage issues that might come up with childhood cancer survivors in the long term, including health-management support, health-behavior support, and access to providers to help them with other issues.”

Photo courtesy of

Huntsman Cancer Institute

A new study indicates that childhood cancer survivors (CCSs) are more likely than individuals without a cancer history to enroll on federal programs that provide disability benefits.

CCSs diagnosed between 1970 and 1986 were about 2 to 5 times as likely as control subjects to utilize such a program.

“The long-term impact of cancer can affect other issues besides health outcomes,” said study author Anne Kirchhoff, PhD, of the Huntsman Cancer Institute at the University of Utah.

“We need to do a better job of helping people function throughout their lives, not just when they’re finishing their cancer therapy.”

Dr Kirchhoff and her colleagues conducted this research and detailed the results in the Journal of the National Cancer Institute.

The researchers looked at health insurance surveys completed in 2011 and 2012 by a random sample of 698 CCSs who were diagnosed between the ages of 0 and 20 years. Today, they range in age from 20s to early 60s.

The patients are part of a National Cancer Institute initiative, called the Childhood Cancer Survivor Study, which has followed more than 14,000 children and adolescents since 1994 who were diagnosed with cancer and survived at least 5 years after diagnosis. A comparison group of 210 siblings without cancer also responded to the survey and were used as controls.

Dr Kirchhoff and her colleagues looked at current or former enrollment on 2 federal disability programs:

- Supplemental security income (SSI), which is for people with limited income who have no prior work history

- Social security disability insurance (SSDI), which pays disability benefits to adults ages 18 years and older who have worked and paid social security taxes.

In all, 13.5% of CCSs reported being enrolled on SSI in the past or present, and 10% of survivors reported being enrolled on SSDI at some point. This was substantially higher than for the comparison group, in which 2.6% of patients reported SSI enrollment and 5.4% reported SSDI enrollment.

In addition, CCSs reported current enrollment in SSI more frequently than the US population, at rates of 7.3% and 2.5%, respectively.

Dr Kirchoff and her colleagues also identified survivor socio-demographic and treatment characteristics that were associated with a higher rate of enrollment in federal support programs.

“Survivors that were younger at diagnosis, age 4 or under, were about 7 times more likely to be on SSI than we see with survivors that were diagnosed in their adolescence,” she said.

SSI enrollment was more likely for female CCSs and for survivors with a history of cranial radiation treatment as well.

Dr Kirchhoff noted that, over the years, research on CCSs has caused hospitals to rethink how to better care for cancer survivors.

“There’s really a growing strategy to support survivors in the long-term,” she said. “For example, here at Huntsman Cancer Institute, we have a pediatric cancer late-effects clinic, which helps manage issues that might come up with childhood cancer survivors in the long term, including health-management support, health-behavior support, and access to providers to help them with other issues.” ![]()

Photo courtesy of

Huntsman Cancer Institute

A new study indicates that childhood cancer survivors (CCSs) are more likely than individuals without a cancer history to enroll on federal programs that provide disability benefits.

CCSs diagnosed between 1970 and 1986 were about 2 to 5 times as likely as control subjects to utilize such a program.

“The long-term impact of cancer can affect other issues besides health outcomes,” said study author Anne Kirchhoff, PhD, of the Huntsman Cancer Institute at the University of Utah.

“We need to do a better job of helping people function throughout their lives, not just when they’re finishing their cancer therapy.”

Dr Kirchhoff and her colleagues conducted this research and detailed the results in the Journal of the National Cancer Institute.

The researchers looked at health insurance surveys completed in 2011 and 2012 by a random sample of 698 CCSs who were diagnosed between the ages of 0 and 20 years. Today, they range in age from 20s to early 60s.

The patients are part of a National Cancer Institute initiative, called the Childhood Cancer Survivor Study, which has followed more than 14,000 children and adolescents since 1994 who were diagnosed with cancer and survived at least 5 years after diagnosis. A comparison group of 210 siblings without cancer also responded to the survey and were used as controls.

Dr Kirchhoff and her colleagues looked at current or former enrollment on 2 federal disability programs:

- Supplemental security income (SSI), which is for people with limited income who have no prior work history

- Social security disability insurance (SSDI), which pays disability benefits to adults ages 18 years and older who have worked and paid social security taxes.

In all, 13.5% of CCSs reported being enrolled on SSI in the past or present, and 10% of survivors reported being enrolled on SSDI at some point. This was substantially higher than for the comparison group, in which 2.6% of patients reported SSI enrollment and 5.4% reported SSDI enrollment.

In addition, CCSs reported current enrollment in SSI more frequently than the US population, at rates of 7.3% and 2.5%, respectively.

Dr Kirchoff and her colleagues also identified survivor socio-demographic and treatment characteristics that were associated with a higher rate of enrollment in federal support programs.

“Survivors that were younger at diagnosis, age 4 or under, were about 7 times more likely to be on SSI than we see with survivors that were diagnosed in their adolescence,” she said.

SSI enrollment was more likely for female CCSs and for survivors with a history of cranial radiation treatment as well.

Dr Kirchhoff noted that, over the years, research on CCSs has caused hospitals to rethink how to better care for cancer survivors.

“There’s really a growing strategy to support survivors in the long-term,” she said. “For example, here at Huntsman Cancer Institute, we have a pediatric cancer late-effects clinic, which helps manage issues that might come up with childhood cancer survivors in the long term, including health-management support, health-behavior support, and access to providers to help them with other issues.” ![]()

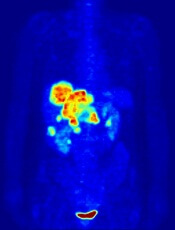

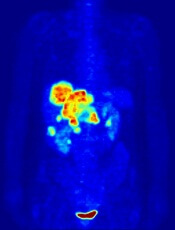

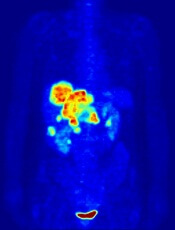

Team images immune response

Photo by Aaron Logan

Researchers say they have devised an approach that allows for real-time imaging of the immune system’s response to tumors, without the need for blood draws or biopsies.

The method harnesses PET to identify areas of immune cell activity associated with inflammation or tumor development.

The researchers believe the approach offers a potential breakthrough in diagnostics and the ability to monitor the efficacy of cancer therapies.

The team described their method in PNAS.

Study author Hidde Ploegh, PhD, of the Whitehead Institute for Biomedical Research in Cambridge, Massachusetts, said that every experimental immunologist wants to monitor an ongoing immune response, but options are limited.

“One can look at blood, but blood is a vehicle of transport for immune cells and is not where immune responses occur,” he said. “Surgical biopsies are invasive and non-random, so, for example, a fine-needle aspirate of a tumor could miss a significant feature of that condition.”

In search of a better monitoring approach, Dr Ploegh and his colleagues leveraged two research tools that have become staples in his lab in recent years.

The first exploits single-domain antibodies known as VHHs, derived from the heavy chain-only antibodies made by the immune systems of animals in the camelid family. Dr Ploegh’s lab immunizes alpacas—his camelid of choice—to generate VHHs specific to immune cells of interest.

The second tool, sortagging, labels the VHHs in a site-specific fashion so that researchers can track the VHHs and their targets in a living animal.

Knowing that the tissue around tumors often contains immune cells such as neutrophils and macrophages, Dr Ploegh and his colleagues hypothesized that appropriately labeled VHHs might allow them to pinpoint tumor locations by finding the tumor-associated immune cells.

Dr Ploegh noted that VHHs’ extremely small size (approximately one-tenth that of conventional antibodies) is likely responsible for their superior tissue penetration and thus makes them particularly well suited for such use.

So the researchers generated VHHs that recognize mouse immune cells, then labeled these VHHs with radioisotopes and injected them into tumor-bearing mice. Subsequent PET imaging detected the location of immune cells around the tumor quickly and accurately.

“We were able to image tumors as small as 1 mm in size and within just a few days of their starting to grow,” said Mohammad Rashidian, PhD, a researcher in Dr Ploegh’s lab.

“We’re very excited about this because it’s a powerful approach to pick up inflammation in and around the tumor.”

Drs Rashidian and Ploegh believe that, with further refinement, the method could be used to monitor response to—and perhaps modify—cancer immunotherapy.

“To succeed with immunotherapy, we need more information about the tumor microenvironment,” Dr Rashidian said. “With this method, you could perhaps start immunotherapy and then, a few weeks later, image with VHHs to figure out progress and success of treatment.”

“PET imaging should allow a much more comprehensive look at the entire tumor in its environment,” Dr Ploegh added. “Then we can ask, ‘Did the tumor grow? Did immune cells invade? What has happened to the tumor?’ And to be able to see this without going in invasively is a significant achievement.”

PET scans could prevent unnecessary RT in HL

Image by Jens Langner

Performing PET scans immediately after chemotherapy may reveal which Hodgkin lymphoma (HL) patients need radiotherapy (RT).

A study published in NEJM showed similar rates of progression-free survival in HL patients who were PET-negative after chemotherapy, whether they received subsequent RT or not.

However, the investigators said longer follow-up is needed to determine if eliminating RT in PET-negative patients will lead to fewer late effects and improved overall survival.

The 602 patients who agreed to take part in this trial, known as RAPID, had a PET scan performed after chemotherapy. Patients who tested positive received RT.

Those who tested negative were divided into 2 groups. One group of 211 patients received no further treatment, and the other group of 209 patients had the standard RT.

At a median of 60 months of follow-up, the proportion of patients who were alive and disease-free was 94.6% in the RT group and 90.8% in the group that hadn’t received further treatment.

Eight patients in the RT group progressed, and 8 died (3 with disease progression, 1 of whom died from HL). Five of the deaths occurred in patients who did not ultimately receive RT.

In the untreated group, 20 patients progressed, and 4 patients died (2 with disease progression and none from HL).

“This research is an important step forward,” said study author John Radford, of The University of Manchester and The Christie NHS Foundation Trust in the UK.

“The results of RAPID show that, in early stage Hodgkin lymphoma, radiotherapy after initial chemotherapy marginally reduces the recurrence rate, but this is bought at the expense of exposing to radiation all patients with negative PET findings, most of whom are already cured.”

Decreased RBC clearance predicts ill health

New research suggests that increased red blood cell distribution width (RDW) is caused by reduced clearance of aging red blood cells (RBCs) from the bloodstream.

And previous studies showed that elevations in RDW predict the development, progression, and risk of death from many conditions.

“It appears that the human body slightly slows down the production and destruction of red blood cells in just about every major disease,” said John Higgins, MD, of Massachusetts General Hospital in Boston.

“If we can accurately measure the production or destruction rates, we might be able to identify many of these diseases in their earlier stages when they are most treatable. Existing measures of the production rate are far too imprecise to detect these subtle changes, but this paper shows how the destruction rate can be estimated using existing blood count data and a mathematical model.”

The paper has been published in the American Journal of Hematology.

Healthy adults generate RBCs at a rate of more than 2 million per second, and the cells circulate in the bloodstream for 100 to 120 days, during which their size decreases by around 30%. Aged RBCs are then cleared at about the same rate of 2 million per second.
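As a rough consistency check on these figures, the number of cells in circulation at steady state is the production rate multiplied by the lifespan. The sketch below assumes a flat rate of exactly 2 million cells per second and a fixed lifespan. The function name is illustrative and does not come from the paper.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def steady_state_rbc_count(production_per_second: float, lifespan_days: float) -> float:
    """Cells in circulation when production and clearance are balanced.

    Assumes every cell lives exactly `lifespan_days`, a simplification.
    """
    return production_per_second * lifespan_days * SECONDS_PER_DAY


if __name__ == "__main__":
    # Lifespan range quoted in the article: 100 to 120 days.
    for lifespan in (100, 120):
        count = steady_state_rbc_count(2e6, lifespan)
        print(f"lifespan {lifespan} days -> about {count:.2e} cells")
```

Under these assumptions, the circulating population comes out on the order of tens of trillions of cells.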

Prior to the reports linking elevated RDW with an increased risk for many diseases, that measure had only been used to help distinguish between different forms of anemia.

To understand the correlation between RDW and disease prognosis, Dr Higgins and his colleagues analyzed raw data from more than 60,000 randomly selected blood samples. They used a mathematical model to replicate how RBC populations behave differently in health and in disease.

The researchers measured the extent to which RBCs in different phases of their life cycle contribute to increased RDW and found that the variance in size was strongly determined by mild increases in the numbers of the smallest and oldest cells.
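To illustrate why a few extra small, old cells can move RDW, the sketch below treats RDW as a coefficient of variation (standard deviation of cell volume divided by mean volume, times 100), which is how RDW is commonly reported. The cell volumes are made-up illustrative values, not data from the study, and this is not the researchers' mathematical model.

```python
import statistics


def rdw_cv(volumes_fl):
    """RDW as a coefficient of variation: 100 * SD / mean of cell volumes."""
    return 100 * statistics.pstdev(volumes_fl) / statistics.mean(volumes_fl)


# Hypothetical baseline: 101 cells spread evenly from 70 to 110 fL.
baseline = [70 + 0.4 * i for i in range(101)]

# Same population plus a few small, old cells that were not cleared on time.
delayed_clearance = baseline + [65.0] * 5

if __name__ == "__main__":
    print(f"baseline RDW: {rdw_cv(baseline):.1f}%")
    print(f"delayed-clearance RDW: {rdw_cv(delayed_clearance):.1f}%")
```

Because the added cells sit at the small end of the size range, they widen the distribution and lower the mean at the same time, so both effects push the ratio upward.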

Since the most important mechanism controlling the number of the oldest cells is the rate at which they are cleared from the bloodstream, the team made several predictions, which were validated by applying their model to clinical data from the blood samples and to data from several published studies.

The researchers found that increased RDW was associated with both delayed RBC clearance and increased average age of RBCs.

Delayed RBC clearance was as strongly associated with overall risk of death as increased RDW was, and it was also associated with the presence of early signs of hidden diseases that are associated with increased RDW.

Patients with delayed RBC clearance had a greater risk of developing signs of those diseases in the future than patients with a typical clearance rate. In healthy patients, the rate of RBC clearance varied less than any other traditional blood-count characteristic.

Dr Higgins said there are many potential clinical applications of these findings, which need to be validated by future studies.

“Finding a reduced clearance rate in an apparently healthy person would likely mean that an underlying disease process was developing—such as the early stages of iron deficiency, kidney disease, colon cancer, or congestive heart failure—and would warrant further diagnostic evaluation,” Dr Higgins said.

“Based on this analysis of routine blood tests, a primary care physician could immediately consider appropriate follow-up diagnostic testing, instead of waiting for other signs and symptoms to appear as the condition progresses. In a patient with established disease, a reduced clearance rate could mean progression of disease or treatment failure, and imminent complications could be avoided or reduced by adjusting treatment right away or at least by more frequent monitoring.”

“In addition to confirming our findings in prospective studies that would follow a group of patients over time, we hope to identify the diseases for which an early warning provided by delayed clearance would lead to the most significant improvements in patient outcomes. We’d also like to understand more about the processes controlling red blood cell clearance and are actively developing similar models for populations of white blood cells and platelets.”

Gene therapy appears effective against WAS

Photo by Chad McNeeley

Results of a small study suggest gene therapy can lead to clinical improvements in children and teens with Wiskott-Aldrich syndrome (WAS).

The gene therapy—autologous, gene-corrected hematopoietic stem cells (HSCs) given along with chemotherapy—improved infectious complications, severe eczema, and symptoms of autoimmunity in 6 of the 7 patients studied.

The therapy also reduced patients’ use of blood products and the amount of time they spent in the hospital.

Marina Cavazzana, MD, PhD, of Necker Children’s Hospital in Paris, France, and colleagues reported these results in JAMA. The study was sponsored by Genethon.

The researchers noted that WAS is caused by loss-of-function mutations in the WAS gene. The condition is characterized by thrombocytopenia, eczema, and recurring infections. In the absence of definitive treatment, patients with classic WAS generally do not survive beyond their second or third decade of life.

Partially HLA-matched, allogeneic HSC transplant is often curative, but it is associated with a high incidence of complications. Dr Cavazzana and colleagues speculated that transplanting autologous, gene-corrected HSCs may be an effective and potentially safer alternative.

So the team assessed the outcomes and safety of autologous HSC gene therapy in 7 patients (age range, 0.8-15.5 years) with severe WAS who lacked HLA antigen-matched related or unrelated HSC donors.

Patients were enrolled in France and England and treated between December 2010 and January 2014. Follow-up ranged from 9 months to 42 months.

The treatment involved collecting mutated HSCs from patients and correcting the cells in the lab by introducing a healthy WAS gene using a lentiviral vector developed and produced by Genethon. The corrected cells were reinjected into patients who, in parallel, received chemotherapy to suppress their defective stem cells and autoimmune cells to make room for new, corrected cells.

Six of the 7 patients saw clinical improvements after this treatment. One patient died of pre-existing, treatment-refractory infectious disease.

In the 6 surviving patients, infectious complications resolved after gene therapy, and prophylactic antibiotic therapy was successfully discontinued in 3 cases. Severe eczema resolved in all affected patients, as did signs and symptoms of autoimmunity.

There were no severe bleeding episodes after treatment. And, at last follow-up, none of the 6 surviving patients required blood product support.

The median number of hospitalization days decreased from 25 during the 2 years before treatment to 0 during the 2 years after treatment.

“[T]he patients showed a significant clinical improvement due to the re-expression of the protein WASp in the cells of the immune system,” Dr Cavazzana said.

However, the researchers also noted that the interpretation of these results is constrained by the small number of patients studied. So the team said they could not draw conclusions on long-term outcomes and safety without further follow-up and additional trials.

Interventions reduce bloodstream infections

A bundled intervention can considerably reduce central line-associated bloodstream infections (CLABSIs), according to research published in Infection Control & Hospital Epidemiology.

The intervention focused on evidence-based infection prevention practices, safety culture and teamwork, and scheduled measurement of infection rates.

By implementing these measures, intensive care units (ICUs) in Abu Dhabi achieved an overall 38% reduction in CLABSIs.

“These hospitals were able to show significant improvements in infection rates and have been able to sustain the improvements a year after we finished the project,” said study author Asad Latif, MBBS, MD, of the Johns Hopkins University School of Medicine in Baltimore, Maryland.

“Our results suggest that ICUs in disparate settings around the world could use this program and achieve similar results, significantly reducing the global morbidity, mortality, and excess costs associated with CLABSIs. In addition, this collaborative could serve as a model for future efforts to reduce other types of preventable medical harms in the Middle East and around the world.”

This study was a collaborative effort by the Armstrong Institute, Johns Hopkins Medicine International, and the Abu Dhabi Health Services Company (SEHA), which operates the government healthcare system in Abu Dhabi.

For the study, ICUs were instructed to assemble a comprehensive unit-based safety program (CUSP) team comprising local physician and nursing leaders, a senior executive, frontline healthcare providers, an infection control provider, and hospital quality and safety leaders.

The ICUs included 10 adult, 5 neonatal, and 3 pediatric ICUs, accounting for 77% of the adult, 74% of the neonatal, and 100% of the pediatric ICU beds in Abu Dhabi.

Starting in May 2012, the SEHA corporate quality team and ICU CUSP teams attended 14 weekly live webinars on CLABSI prevention conducted by Armstrong Institute faculty, followed by content and coaching webinars every 2 weeks for 24 months. The webinars were recorded by SEHA and posted on a local, shared computer drive, along with educational and training materials.

Armstrong faculty also conducted 4 site visits in Abu Dhabi at the beginning of the study, visiting each ICU to meet the CUSP team and tour the units. A year later, they conducted a 3-day patient safety workshop for participating hospitals.

CUSP teams implemented 3 interventions as part of the program: an effort to prevent CLABSIs that targeted clinicians’ use of evidence-based infection prevention recommendations from the Centers for Disease Control and Prevention, a CUSP process to improve safety culture and teamwork, and measurement of monthly CLABSI data and feedback to safety teams, senior leaders, and ICU staff.

The overall mean crude CLABSI rate for participating ICUs decreased from 2.56 infections per 1000 catheter days to 1.79 per 1000 catheter days by the end of the study, corresponding to a 30% reduction.
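The arithmetic behind the crude figures can be sketched as follows. The helper names are illustrative, and the calculation uses only the two crude rates quoted above. It does not reproduce the 38% overall reduction cited earlier in the article, which presumably comes from a different estimate that the article does not detail.

```python
def rate_per_1000_catheter_days(infections: int, catheter_days: int) -> float:
    """Infection rate expressed per 1000 catheter days."""
    return 1000 * infections / catheter_days


def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after`."""
    return (before - after) / before


if __name__ == "__main__":
    # Crude rates quoted in the article (infections per 1000 catheter days).
    reduction = relative_reduction(2.56, 1.79)
    print(f"crude relative reduction: {reduction:.1%}")
```

The counts passed to `rate_per_1000_catheter_days` would come from a unit's own surveillance data. The article reports only the resulting rates.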

By unit type, CLABSI rates decreased by 16% among adult ICUs, 48% among pediatric ICUs, and 47% in neonatal ICUs. The percentage of ICUs that achieved a quarterly CLABSI rate of less than 1 infection per 1000 catheter days increased from 44% to 61% after the interventions.

“Despite growing awareness, many hospitals around the world continue to struggle in their efforts to meaningfully reduce their CLABSI rates in a sustained manner,” said Sean M. Berenholtz, MD, of the Johns Hopkins University School of Medicine.

“In addition, hospitals and healthcare systems in the Middle East have unique barriers to implementing quality improvement programs, such as challenges with staff recruitment and retention, and personnel fearful of punitive repercussions from speaking up regarding patient safety concerns. In our study, bringing all stakeholders to the same table allowed everyone to share their concerns and ensure their voices were heard.”

A bundled intervention can considerably reduce central line-associated bloodstream infections (CLABSIs), according to research published in Infection Control & Hospital Epidemiology.

The intervention focused on evidence-based infection prevention practices, safety culture and teamwork, and scheduled measurement of infection rates.

By implementing these measures, intensive care units (ICUs) in Abu Dhabi achieved an overall 38% reduction in CLABSIs.

“These hospitals were able to show significant improvements in infection rates and have been able to sustain the improvements a year after we finished the project,” said study author Asad Latif, MBBS, MD, of the Johns Hopkins University School of Medicine in Baltimore, Maryland.

“Our results suggest that ICUs in disparate settings around the world could use this program and achieve similar results, significantly reducing the global morbidity, mortality, and excess costs associated with CLABSIs. In addition, this collaborative could serve as a model for future efforts to reduce other types of preventable medical harms in the Middle East and around the world.”

This study was a collaborative effort by the Armstrong Institute, Johns Hopkins Medicine International, and the Abu Dhabi Health Services Company (SEHA), which operates the government healthcare system in Abu Dhabi.

For the study, ICUs were instructed to assemble a comprehensive unit-based safety program (CUSP) team comprising local physician and nursing leaders, a senior executive, frontline healthcare providers, an infection control provider, and hospital quality and safety leaders.

The ICUs included 10 adult, 5 neonatal, and 3 pediatric ICUs, accounting for 77% of the adult, 74% of the neonatal, and 100% of the pediatric ICU beds in Abu Dhabi.

Starting in May 2012, the SEHA corporate quality team and ICU CUSP teams attended 14 weekly live webinars on CLABSI prevention conducted by Armstrong Institute faculty, followed by content and coaching webinars every 2 weeks for 24 months. The webinars were recorded by SEHA and posted on a local, shared computer drive, along with educational and training materials.

Armstrong faculty also conducted 4 site visits in Abu Dhabi at the beginning of the study, visiting each ICU to meet the CUSP team and tour the units. A year later, they conducted a 3-day patient safety workshop for participating hospitals.

CUSP teams implemented 3 interventions as part of the program: an effort to prevent CLABSIs that targeted clinicians’ use of evidence-based infection prevention recommendations from the Centers for Disease Control and Prevention, a CUSP process to improve safety culture and teamwork, and measurement of monthly CLABSI data and feedback to safety teams, senior leaders, and ICU staff.

The overall mean crude CLABSI rate for participating ICUs decreased from 2.56 infections per 1000 catheter days to 1.79 per 1000 catheter days by the end of the study, corresponding to a 30% reduction.
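As a quick check of the arithmetic, the crude reduction can be reproduced from the two reported rates. The sketch below is illustrative only: the function names are hypothetical, and the infection and catheter-day counts in the rate example are invented for demonstration, not taken from the study.

```python
def clabsi_rate(infections: int, catheter_days: int) -> float:
    """Return infections per 1000 catheter days."""
    return infections * 1000 / catheter_days


def relative_reduction(baseline: float, final: float) -> float:
    """Return the percentage decrease from baseline to final."""
    return (baseline - final) / baseline * 100


# Rates reported in the article (infections per 1000 catheter days).
baseline_rate = 2.56
final_rate = 1.79
reduction = relative_reduction(baseline_rate, final_rate)
print(f"Crude reduction: {reduction:.0f}%")

# Hypothetical counts, only to show how a rate is formed.
example_rate = clabsi_rate(infections=5, catheter_days=2000)
print(f"Example rate: {example_rate:.2f} per 1000 catheter days")
```

The calculation covers only the crude rate comparison; it does not account for any statistical adjustment the researchers may have applied.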

By unit type, CLABSI rates decreased by 16% among adult ICUs, 48% among pediatric ICUs, and 47% in neonatal ICUs. The percentage of ICUs that achieved a quarterly CLABSI rate of less than 1 infection per 1000 catheter days increased from 44% to 61% after the interventions.

“Despite growing awareness, many hospitals around the world continue to struggle in their efforts to meaningfully reduce their CLABSI rates in a sustained manner,” said Sean M. Berenholtz, MD, of the Johns Hopkins University School of Medicine.

“In addition, hospitals and healthcare systems in the Middle East have unique barriers to implementing quality improvement programs, such as challenges with staff recruitment and retention, and personnel fearful of punitive repercussions from speaking up regarding patient safety concerns. In our study, bringing all stakeholders to the same table allowed everyone to share their concerns and ensure their voices were heard.”

Discovery could aid treatment of leukemia, lymphoma

Photo courtesy of IRCM

Researchers say they have uncovered a mechanism that could aid the development of therapies for lymphomas and leukemias.

The group’s research shed new light on a mechanism affecting activation-induced deaminase (AID), an enzyme that has proven crucial for immune response.

Javier Di Noia, PhD, of Institut de Recherches Cliniques de Montreal (IRCM) in Quebec, Canada, and his colleagues described this mechanism in The Journal of Experimental Medicine.

Dr Di Noia noted that, although AID is crucial for an efficient antibody response, high levels of the enzyme can have harmful effects and lead to cancer-causing mutations.

“The objective is to find the perfect level of AID activity to maximize the protection it provides to the body while reducing the risk of damage it can cause to cells,” he said.

Dr Di Noia and his colleagues previously found that heat-shock protein 90 (Hsp90) maintains the levels of AID by stabilizing it while it is still immature. In fact, they discovered that inhibiting Hsp90 significantly reduces the levels of AID in the cell.

“Through this new study, we identified another mechanism, controlled by the protein eEF1a [eukaryotic elongation factor 1 α], that has the opposite effect,” said Stephen P. Methot, a PhD student in Dr Di Noia’s lab.

“The protein eEF1a retains AID in the cell’s cytoplasm, away from the genome. However, unlike Hsp90, it maintains AID in a ready-to-act state. We discovered that blocking the interaction between AID and eEF1a helps AID access the cell nucleus and thereby boosts AID activity. As a result, this could increase immune response and help fight infections, for instance.”

“We found the eEF1a mechanism is necessary to restrict AID activity in the cell. It acts as a buffer by allowing the cell to accumulate enough AID to be efficient but limits its activity to prevent the oncogenic or toxic effects that could result if too much AID is in continuous contact with the genome.”

The researchers also identified 2 existing drugs that can act on the eEF1a mechanism to release AID into the cell. The team said these drugs could potentially be used to boost AID activity and, thus, immune responses.

“With this discovery, we now understand mechanisms that can both reduce and increase the activity of AID by targeting different proteins,” Dr Di Noia said.

“This knowledge could eventually lead to new treatments to boost the immune system and help our aging population fight influenza, for example, as AID activity in our cells decreases with age. On the other hand, therapies could also be developed to lower toxic levels of AID in certain cancers such as B-cell lymphoma and leukemia.”
