Abdominal distention and pain

Given the patient's symptomatology, laboratory studies, and the histopathology and immunophenotyping of the polypoid lesions in the transverse colon, this patient is diagnosed with advanced mantle cell lymphoma (MCL). The gastroenterologist shares the findings with the patient, and over the next several days, a multidisciplinary team forms to guide the patient through potential next steps and treatment options.

MCL is a type of B-cell neoplasm that, with advancements in the understanding of non-Hodgkin lymphoma (NHL) in the past 30 years, has been defined as its own clinicopathologic entity by the Revised European-American Lymphoma and World Health Organization classifications. Up to 10% of all non-Hodgkin lymphomas are MCL. Clinical presentation includes advanced disease with B symptoms (eg, night sweats, fever, weight loss), generalized lymphadenopathy, abdominal distention associated with hepatosplenomegaly, and fatigue. One of the most frequent areas for extra-nodal MCL presentation is the gastrointestinal tract. Men are more likely to present with MCL than are women by a ratio of 3:1. Median age at presentation is 67 years.

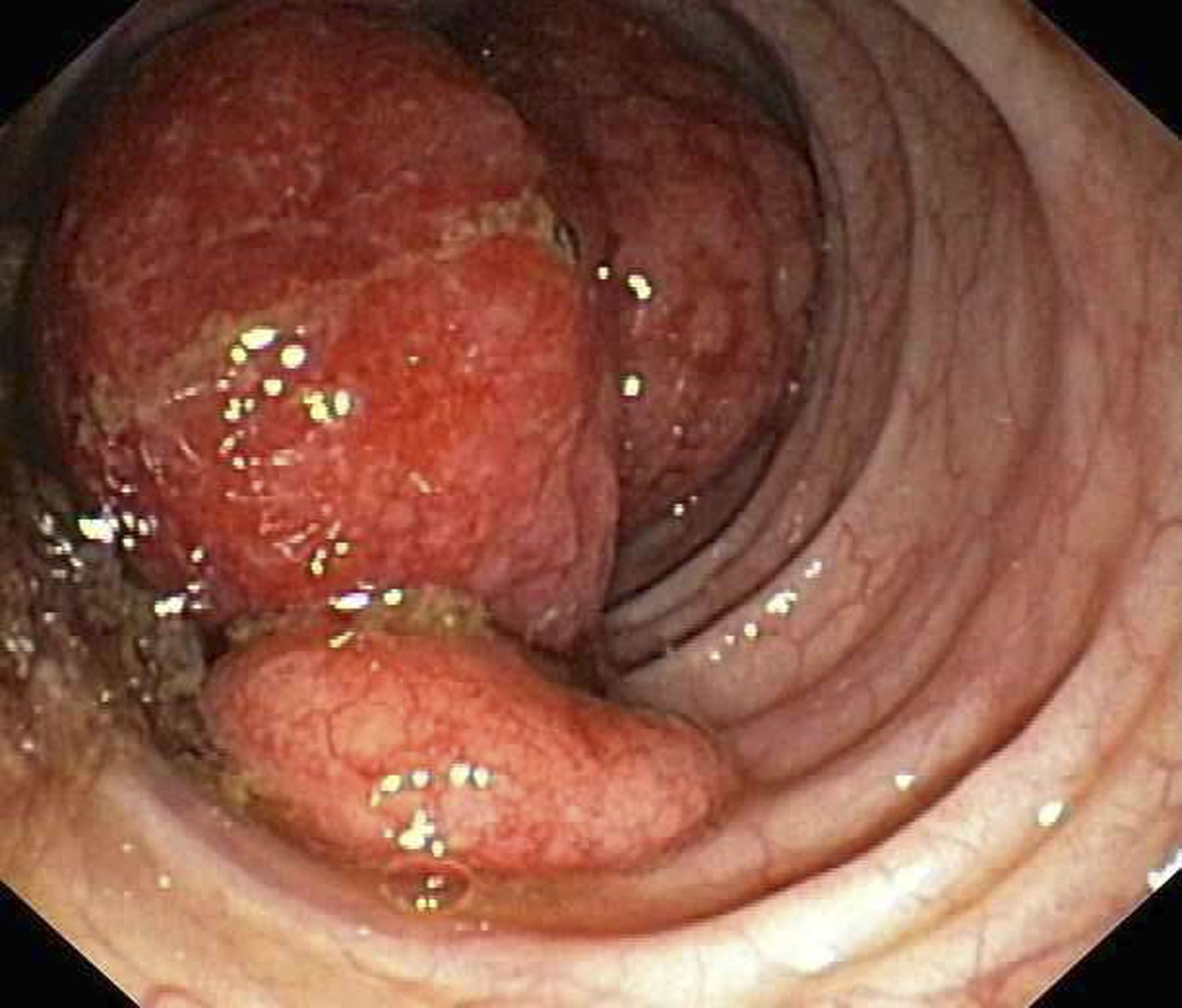

Diagnosing MCL requires a multipronged approach. Physical examination may reveal lymphadenopathy and hepatosplenomegaly. Lymph node biopsy and aspiration with immunophenotyping in MCL reveal monoclonal B cells expressing surface immunoglobulin (Ig), IgM, or IgD, which are characteristically CD5+ and pan B-cell antigen–positive (eg, CD19, CD20, CD22) but lack expression of CD10 and CD23 and overexpress cyclin D1. Bone marrow aspirate and biopsy are used more for staging than for diagnosis. Blood study findings, including anemia and cytopenias secondary to bone marrow infiltration (with up to 40% of cases showing lymphocytosis > 4000/μL), abnormal liver function tests, and a negative Coombs test, also help diagnose MCL. Gastrointestinal involvement of MCL typically presents as lymphoid polyposis on colonoscopy and can appear in the colon, ileum, stomach, and duodenum.

Pathogenesis of MCL involves disordered lymphoproliferation in a subset of naive pregerminal center cells in primary follicles or in the mantle region of secondary follicles. Most cases are linked with a translocation between chromosomes 11 and 14, t(11;14), which induces overexpression of the protein cyclin D1. Viral infection (Epstein-Barr virus, HIV, human T-lymphotropic virus type 1, human herpesvirus 6), environmental factors, and primary and secondary immunodeficiency are also associated with the development of NHL.

Patient education should include detailed information about clinical trials, available treatment options and associated adverse events, as well as psychosocial and nutrition counseling.

Chemoimmunotherapy is the standard initial treatment for MCL, but relapse is expected. Chemotherapy-free regimens with biologic targets, first used in second-line treatment, are increasingly becoming an important first-line option given their efficacy in the relapsed/refractory setting. Chimeric antigen receptor T-cell therapy is also a second-line treatment option. In patients with MCL and a TP53 mutation, clinical trial participation is encouraged because of poor prognosis.

Karl J. D'Silva, MD, Clinical Assistant Professor, Department of Medicine, Tufts University School of Medicine, Boston; Medical Director, Department of Oncology and Hematology, Lahey Hospital and Medical Center, Peabody, Massachusetts.

Karl J. D'Silva, MD, has disclosed no relevant financial relationships.

Image Quizzes are fictional or fictionalized clinical scenarios intended to provide evidence-based educational takeaways.

A 60-year-old man presents to his primary care physician with weight loss, constipation, and abdominal distention and pain as well as fatigue and night sweats that have lasted for several months. The physician orders a complete blood count with differential and an ultrasound of the abdomen. Lab studies reveal anemia and cytopenias; ultrasound reveals hepatosplenomegaly and abdominal lymphadenopathy. The physician refers the patient to gastroenterology; he undergoes a colonoscopy. Multiple polypoid lesions are found throughout the transverse colon. Immunophenotyping shows CD5 and CD20 expression but a lack of CD23 and CD10 expression; cyclin D1 is overexpressed. Additional blood studies show lymphocytosis > 4000/μL, elevated lactate dehydrogenase levels, abnormal liver function tests, and a negative result on Coombs test.

SCD mortality rates improved for Black patients in 2010s

But the news is not all positive. Mortality rates still jumped markedly as patients transitioned from pediatric to adult care, lead author Kristine A. Karkoska, MD, a pediatric hematologist/oncologist with the University of Cincinnati College of Medicine, said at the annual meeting of the American Society of Hematology.

“This reflects that young adults are getting lost to care, and then they’re presenting with acute, life-threatening complications,” she said. “We still need more emphasis on comprehensive lifetime sickle-cell care and the transition to adult clinics to improve mortality in young adults.”

According to Dr. Karkoska, researchers launched the analysis of sickle-cell mortality rates to update previously available data up to the year 2009, which showed improvements as current standard-of-care treatments were introduced. Updated numbers, she said, would reflect the influence of a rise in dedicated SCD clinics and a 2014 National Heart, Lung, and Blood Institute recommendation that all children with SCD be treated with hydroxyurea starting at 9 months.

For the study, Dr. Karkoska and colleagues analyzed mortality statistics from the period of 1979-2020 via a CDC database. They found that 5272 Black patients died of SCD from 2010 to 2020. The crude mortality rate was 1.1 per 100,000 Black people, lower than the 1.2 per 100,000 rate of 1999-2009 (P < .0001).
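The crude rate quoted above is deaths divided by the population at risk, scaled to 100,000. The short sketch below illustrates that arithmetic. The article does not report the population denominator, so the person-years figure here is back-calculated from the published numbers purely for illustration, and the function names are hypothetical.

```python
def crude_rate_per_100k(deaths: int, person_years: float) -> float:
    """Crude mortality rate: deaths per 100,000 person-years at risk."""
    return deaths / person_years * 100_000


def implied_person_years(deaths: int, rate_per_100k: float) -> float:
    """Back-calculate the denominator implied by a reported crude rate."""
    return deaths / rate_per_100k * 100_000


# Figures reported in the article for 2010-2020.
deaths_2010_2020 = 5272
reported_rate = 1.1  # per 100,000 Black people

# ASSUMPTION: the denominator is not given in the article; this is only
# the value implied by the two reported figures, not a census count.
person_years = implied_person_years(deaths_2010_2020, reported_rate)

# Recomputing the rate from that denominator recovers the reported value.
recomputed = crude_rate_per_100k(deaths_2010_2020, person_years)
print(f"Implied person-years: {person_years:,.0f}")
print(f"Recomputed crude rate: {recomputed:.1f} per 100,000")
```

Because the reported rate is rounded to one decimal place, the implied denominator is only approximate.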

The researchers also found that from 2010 to 2020, the mortality rate jumped between the 15-19 and 20-24 age groups: It rose from 0.9 per 100,000 to 1.4 per 100,000 (P < .0001).

The researchers also examined contributors to death other than SCD. In 39% of cases, underlying causes were noted: cardiovascular disease (28%), accidents (7%), cerebrovascular disease (7%), malignancy (6%), septicemia (4.8%), and renal disease (3.8%). The population of people with SCD is “getting older, and they’re developing a combination of both sickle-related chronic organ damage as well as non-sickle-related chronic disease,” Dr. Karkoska said.

She noted that limitations include a reliance on data that can be incomplete or inaccurate. She also mentioned that the study focuses only on Black patients, who make up the vast majority of those with SCD.

How good is the news about improved mortality numbers? One member of the audience at the ASH presentation was disappointed that they hadn’t gotten even better. “I was hoping to come here to be cheered up,” he said, “and I’m not.”

Three physicians who didn’t take part in the research but are familiar with the new study spoke in interviews about the findings.

Michael Bender, MD, PhD, director of the Odessa Brown Comprehensive Sickle Cell Clinic in Seattle, pointed out that mortality rates improve slowly over time, as new treatments enter the picture. When new therapies come along, he said, “it’s tough if someone’s already 40 years old and their body has gone through a lot. They’re not going to have as much benefit as someone who started [on therapy] when they were 5 years old, and they grew up with that improvement.”

Sickle cell specialist Asmaa Ferdjallah, MD, MPH, of the Mayo Clinic in Rochester, Minnesota, said that the data showing a spike in mortality rates during the pediatric-adult transition are not surprising but still “really hard to digest.”

“It is a testament to the fact that we are not meeting patients where they are,” she said. “We struggle immensely with the transition period. This is something that is difficult across all providers all over the country,” she said. “There are different ways to ensure a successful transition from the pediatric side to the adult side. Here at Mayo Clinic, we use a slow transition, and we rotate appointments with peds and adults until age 30.”

Sophie Miriam Lanzkron, MD, MHS, director of the Sickle Cell Center for Adults at Johns Hopkins Hospital, Baltimore, said increases in mortality in the post-pediatric period appear to be due in part to “lack of access to high-quality sickle cell care for adults because there aren’t enough hematologists.” Worsening disease due to aging is another factor, she said, and “there might also be some behavioral changes. Young people think they will live forever. Sometimes they choose not to adhere to medical recommendations, which for this population is very risky.”

Dr. Lanzkron said her team is developing a long-term patient registry that should provide more insight.

No study funding was reported. Dr. Karkoska had no disclosures. The other coauthor disclosed research funding and safety advisory board relationships with Novartis. Dr. Ferdjallah, Dr. Lanzkron, and Dr. Bender reported no disclosures.

FROM ASH 2023

More evidence that modified Atkins diet lowers seizures in adults

ORLANDO —

The results of the small new review and meta-analysis suggest that “the MAD may be an effective adjuvant therapy for older patients who have failed anti-seizure medications,” study investigator Aiswarya Raj, MBBS, Aster Malabar Institute of Medical Sciences, Kerala, India, said in an interview.

The findings were presented at the annual meeting of the American Epilepsy Society.

Paucity of Adult Data

The MAD is a less restrictive hybrid of the ketogenic diet that limits carbohydrate intake and encourages fat consumption. It does not restrict fluids, calories, or proteins and does not require fats to be weighed or measured.

The diet includes fewer carbohydrates than the traditional Atkins diet and places more emphasis on fat intake. Dr. Raj said that the research suggests that the MAD “is a promising therapy in pediatric populations, but there’s not a lot of data in adults.”

Dr. Raj noted that this diet type has not been that popular in patients who clinicians believe might be better treated with drug therapy, possibly because of concern about the cardiac impact of consuming high-fat foods.

After conducting a systematic literature review assessing the efficacy of MAD in adults, the researchers included three randomized controlled trials and four observational studies published from January 2000 to May 2023 in the analysis.

The randomized controlled trials in the review assessed the primary outcome, a greater than 50% seizure reduction, at the end of 2 months, 3 months, and 6 months. In the MAD group, 32.5% of participants had more than a 50% seizure reduction vs 3% in the control group (odds ratio [OR], 12.62; 95% CI, 4.05-39.29; P < .0001).
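For readers less familiar with odds ratios, the sketch below shows how an unadjusted odds ratio follows from two response proportions. It is an illustration only: the pooled OR of 12.62 reported above comes from a meta-analysis that weights each trial separately, so a crude calculation on the overall percentages is not expected to reproduce it. The function names are hypothetical.

```python
def odds(proportion: float) -> float:
    """Convert a proportion of responders into odds."""
    if not 0 < proportion < 1:
        raise ValueError("proportion must be strictly between 0 and 1")
    return proportion / (1 - proportion)


def odds_ratio(p_treatment: float, p_control: float) -> float:
    """Unadjusted odds ratio comparing treatment with control."""
    return odds(p_treatment) / odds(p_control)


# Responder proportions reported in the article (>50% seizure reduction).
p_mad = 0.325      # modified Atkins diet group
p_control = 0.03   # control group

# ASSUMPTION: treating the pooled percentages as if they came from one
# trial; the article's OR is a weighted pooled estimate, so it differs.
crude_or = odds_ratio(p_mad, p_control)
print(f"Crude odds ratio: {crude_or:.1f}")
```

The crude figure comes out at roughly 15.6, somewhat above the pooled estimate, which shows why per-study weighting matters when results are combined.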

Four participants who followed the diet achieved complete seizure-freedom compared with no participants in the control group (OR, 16.20; 95% CI, 0.82-318.82; P = .07).

The prospective studies examined this outcome at the end of 1 month or 3 months. In these studies, 41.9% of individuals experienced more than a 50% seizure reduction after 1 month of following the MAD, and 34.2% experienced this reduction after 3 months (OR, 1.41; 95% CI, 0.79-2.52; P = .24), with zero heterogeneity across studies.

It’s difficult to interpret the difference in seizure reduction between 1 and 3 months of therapy, Dr. Raj noted, because “there’s always the issue of compliance when you put a patient on a long-term diet.”

Positive results for MAD in adults were shown in another recent systematic review and meta-analysis published in Seizure: European Journal of Epilepsy.

That analysis included six studies with 575 patients who were randomly assigned to MAD or usual diet (UD) plus standard drug therapy. After an average follow-up of 12 weeks, MAD was associated with a higher rate of 50% or greater reduction in seizure frequency (relative risk [RR], 6.28; 95% CI, 3.52-10.50; P < .001), both in adults with drug-resistant epilepsy (RR, 6.14; 95% CI, 1.15-32.66; P = .033) and children (RR, 6.28; 95% CI, 3.43-11.49; P < .001).

MAD was also associated with a higher seizure freedom rate compared with UD (RR, 5.94; 95% CI, 1.93-18.31; P = .002).

Cholesterol Concern

In Dr. Raj’s analysis, there was an increase in total blood cholesterol level after 3 months of MAD (standardized mean difference, -0.82; 95% CI, -1.23 to -0.40; P = .0001).

Concern about elevated blood cholesterol affecting coronary artery disease risk may explain why doctors sometimes shy away from recommending the MAD to their adult patients. “Some may not want to take that risk; you don’t want patients to succumb to coronary artery disease,” said Dr. Raj.

She noted that 3 months “is a very short time period,” and studies looking at cholesterol levels at the end of at least 1 year are needed to determine whether levels return to normal.

“We’re seeing a lot of literature now that suggests dietary intake does not really have a link with cholesterol levels,” she said. If this can be proven, “then this is definitely a great therapy.”

The evidence of cardiovascular safety of the MAD includes a study of 37 patients that showed that although total cholesterol and low-density lipoprotein (LDL) cholesterol increased over the first 3 months of MAD treatment, these values normalized within 1 year of treatment, including in patients treated with MAD for more than 3 years.

Primary Diet Recommendation

This news organization asked one of the authors of that study, Mackenzie C. Cervenka, MD, professor of neurology and medical director of the Adult Epilepsy Diet Center, Johns Hopkins Hospital, Baltimore, Maryland, to comment on the new research.

She said that she was “thrilled” to see more evidence showing that this diet therapy can be as effective for adults as for children. “This is a really important message to get out there.”

At her adult epilepsy diet center, the MAD is the “primary” diet recommended for patients who are resistant to seizure medication, are not tube fed, and are keen to try diet therapy, said Dr. Cervenka.

In her experience, the likelihood of having a 50% or greater seizure reduction is about 40% among medication-resistant patients, “so very similar to what they reported in that review,” she said.

However, she noted that she emphasizes to patients that “diet therapy is not meant to be monotherapy.”

Dr. Cervenka’s team is examining LDL cholesterol levels as well as LDL particle size in adults who have been on the MAD for 2 years. LDL particle size, she noted, is a better predictor of long-term cardiovascular health.

No conflicts of interest were reported.

A version of this article appeared on Medscape.com.

FROM AES 2023

What is the link between cellphones and male fertility?

Infertility affects approximately one in six couples worldwide. More than half the time, it is the man’s low sperm quality that is to blame. Over the last three decades, sperm quality seems to have declined for no clearly identifiable reason. Theories are running rampant without anyone having the proof to back them up.

Potential Causes

The environment, lifestyle, excess weight or obesity, smoking, alcohol consumption, and psychological stress have all been alternately offered up as potential causes, following low-quality epidemiological studies. Cellphones are not exempt from this list, due to their emission of high-frequency (800-2200 MHz) electromagnetic waves that can be absorbed by the body.

Experiments conducted in rats or mice suggest that these waves can affect sperm quality and lead to histological changes in the testicles, although the conditions in these experiments are very far from our day-to-day exposure to electromagnetic waves, which comes mostly from our cellphones.

The same caveat applies to experiments conducted on human sperm in vitro, and the changes attributed to electromagnetic waves in those experiments remain in doubt. Observational studies are rare, carried out in small cohorts, and marred by largely conflicting results. Publication bias plays a major role, as does the abundance of potential confounding factors.

Swiss Observational Study

An observational study carried out in Switzerland had the benefit of involving a large cohort of 2886 young men who were representative of the general population. The participants completed an online questionnaire describing their relationship with their cellphone in detail and in qualitative and quantitative terms.

The study was launched in 2005, before cellphone use became so widespread, and this timeline was considered when looking for a link between cellphone exposure and sperm quality. In addition, multiple adjustments were made in the multivariate analyses to account for as many potential confounding factors as possible.

The participants, aged between 18 and 22 years, were recruited during a 3-day period to assess their suitability for military service. Each year, this cohort makes up 97% of the male population in Switzerland in this age range, with the remaining 3% being excluded from the selection process due to disability or chronic illness.

Regardless of the review board’s decision, subjects wishing to take part in the study were given a detailed description of what it involved, a consent form, and two questionnaires. The first focused on the individual directly, asking questions about his health and lifestyle. The second, intended for his parents, dealt with the period before conception.

This recruitment, which took place between September 2005 and November 2018, involved the researchers contacting 106,924 men. Ultimately, only 5.3% of subjects contacted returned the completed documentation. In the end, the study involved 2886 participants (3.1%) who provided all the necessary information, especially the laboratory testing (including a sperm analysis) needed to meet the study objectives. The number of hours spent on a smartphone and how it was used were routinely considered, as was sperm quality (volume, concentration, and total sperm count, as well as sperm motility and morphology).

Significant Associations

An analysis using an adjusted linear model revealed a significant association between frequent phone use (> 20 times per day) and lower sperm concentration per mL (adjusted β, -0.152; 95% CI, -0.316 to 0.011). The same was found for total concentration in the ejaculate (adjusted β, -0.271; 95% CI, -0.515 to -0.027).
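As a reading aid for the intervals quoted above: a 95% CI for a regression coefficient that lies entirely on one side of zero is conventionally read as significant at the 5% level. The following is a minimal Python sketch of that check, using only the values reported in this article; the function name and dictionary labels are illustrative assumptions and are not taken from the study's own analysis.

```python
def interval_excludes_zero(lower: float, upper: float) -> bool:
    """Return True when a confidence interval lies entirely on one side of zero."""
    return (lower > 0 and upper > 0) or (lower < 0 and upper < 0)


# 95% CIs for the adjusted beta coefficients, as quoted in the article.
reported = {
    "sperm concentration per mL": (-0.316, 0.011),
    "total concentration in ejaculate": (-0.515, -0.027),
}

# Apply the check to each reported interval.
results = {
    outcome: interval_excludes_zero(lower, upper)
    for outcome, (lower, upper) in reported.items()
}

for outcome, excludes in results.items():
    print(f"{outcome}: 95% CI excludes zero = {excludes}")
```

As quoted here, the interval for concentration per mL extends just above zero, whereas the interval for total concentration does not. The original publication should be consulted for the exact model and P values.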

An adjusted logistic regression analysis estimated that the risk for subnormal male fertility levels, as defined by the World Health Organization (WHO), was increased by at most 30% when referring to the concentration of sperm per mL (21% in terms of total concentration). This inverse link was shown to be more pronounced during the first phase of the study (2005-2007) than during the other two phases (2008-2011 and 2012-2018). Yet no links involving sperm motility or morphology were found, and carrying a cellphone in a trouser pocket had no impact on the results.

This study certainly involves a large cohort of nearly 3000 young men. It is, nonetheless, retrospective, and its methodology, despite being better than that of previous studies, is still open to criticism. Its results can only fuel hypotheses, nothing more. Only prospective cohort studies will allow conclusions to be drawn and, in the meantime,

This article was translated from JIM, which is part of the Medscape professional network. A version of this article appeared on Medscape.com.

1 in 3 women have lasting health problems after giving birth: Study

Those problems include pain during sexual intercourse (35%), low back pain (32%), urinary incontinence (8% to 31%), anxiety (9% to 24%), anal incontinence (19%), depression (11% to 17%), fear of childbirth (6% to 15%), perineal pain (11%), and secondary infertility (11%).

Other problems included pelvic organ prolapse, posttraumatic stress disorder, thyroid dysfunction, mastitis, HIV seroconversion (when the body begins to produce detectable levels of HIV antibodies), nerve injury, and psychosis.

The study says most women see a doctor 6 to 12 weeks after birth and then rarely talk to doctors about these nagging health problems. Many of the problems don’t show up until 6 or more weeks after birth.

“To comprehensively address these conditions, broader and more comprehensive health service opportunities are needed, which should extend beyond 6 weeks postpartum and embrace multidisciplinary models of care,” the study says. “This approach can ensure that these conditions are promptly identified and given the attention that they deserve.”

The study is part of a series organized by the United Nations’ Special Program on Human Reproduction, the World Health Organization, and the U.S. Agency for International Development. The authors said most of the data came from high-income nations. There were few data from low-income and middle-income countries except for postpartum depression, anxiety, and psychosis.

“Many postpartum conditions cause considerable suffering in women’s daily life long after birth, both emotionally and physically, and yet they are largely underappreciated, underrecognized, and underreported,” Pascale Allotey, MD, director of Sexual and Reproductive Health and Research at WHO, said in a statement.

“Throughout their lives, and beyond motherhood, women need access to a range of services from health-care providers who listen to their concerns and meet their needs — so they not only survive childbirth but can enjoy good health and quality of life.”

A version of this article appeared on WebMD.com.

FROM THE LANCET GLOBAL HEALTH

MRD status predicts transplant benefit in NPM1-mutated AML

This survival benefit did not extend to patients who were MRD-negative after their second induction therapy, Jad Othman, MBBS, reported at the American Society of Hematology annual meeting.

The findings confirm the value of assessing MRD after induction chemotherapy to help identify patients with NPM1-mutated AML in first complete remission who are more likely to benefit from allogeneic transplant, said Dr. Othman, of King’s College London and Guy’s and St Thomas’ NHS Foundation Trust, London, and the University of Sydney, Australia.

Recently updated European LeukemiaNet recommendations, which stratify patients with AML by favorable, intermediate, and adverse prognoses, include a revised genetic-risk classification. This classification generally considers NPM1-mutated AML favorable risk. However, having a co-mutation with FLT3-ITD raises the risk to intermediate.

Despite this increased granularity in risk stratification, “it’s still not really clear who should have transplant in first remission with NPM1-mutated AML,” Dr. Othman said. “And there is still significant variation in practice, not just worldwide but even center to center.”

Although accumulating evidence suggests that MRD-negative patients with intermediate-risk AML are unlikely to benefit from allogeneic transplant in first complete remission, the presence of a FLT3-ITD mutation is often considered an indication for transplant, Dr. Othman explained. However, most studies supporting this view were conducted before sensitive molecular MRD measurement techniques were developed.

The latest findings, from two sequential prospective randomized trials of intensive chemotherapy in adults aged 18-60 years with newly diagnosed AML, may help clarify who will probably benefit from transplant and who won’t, based on MRD status and relevant molecular features.

The first study (AML17), conducted from 2009 to 2014, selected patients for transplant in first complete remission using a validated risk score that incorporated features including age, sex, and response after therapy. The other (AML19), conducted from 2015 to 2020, selected patients with NPM1-mutated AML for transplant only if they tested positive for MRD in peripheral blood after their second course of treatment, regardless of FLT3-ITD status or other baseline risk factors.

Overall, the current analysis included the 737 patients with NPM1-mutated AML, 348 from AML17 and 389 from AML19, who were in complete remission after two courses of treatment and had an MRD sample at that point.

In AML17, 27% of MRD-positive patients (16 of 60) and 18% of MRD-negative patients (52 of 288) underwent transplant in first complete remission compared with 60% (50 of 83) and 16% (49 of 306), respectively, in AML19.
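As a quick arithmetic check, the transplant rates quoted above can be recomputed from the reported counts. The sketch below is illustrative only; the variable and function names are assumptions introduced here, not part of the study.

```python
# Counts reported in the article: (patients transplanted in first
# complete remission, patients in that MRD group).
counts = {
    ("AML17", "MRD-positive"): (16, 60),
    ("AML17", "MRD-negative"): (52, 288),
    ("AML19", "MRD-positive"): (50, 83),
    ("AML19", "MRD-negative"): (49, 306),
}


def transplant_rate(transplanted: int, total: int) -> int:
    """Return the transplant rate as a whole-number percentage."""
    return round(100 * transplanted / total)


# Percentage of each group that underwent transplant.
rates = {group: transplant_rate(*pair) for group, pair in counts.items()}

# Number of analyzed patients per trial (sum of both MRD groups).
trial_totals = {}
for (trial, _status), (_transplanted, total) in counts.items():
    trial_totals[trial] = trial_totals.get(trial, 0) + total

for group, rate in rates.items():
    print(group, f"{rate}%")
print(trial_totals, "overall:", sum(trial_totals.values()))
```

The group sizes add up to the 348 and 389 patients reported for AML17 and AML19, and to the 737 patients in the combined analysis.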

Among all 737 patients, Dr. Othman and colleagues did not observe an overall survival benefit among those who underwent transplant vs those who did not (hazard ratio [HR], 1.01) or among patients who were MRD-negative (HR, 0.82).

However, patients who were MRD-positive did have a significant survival advantage after transplant (HR, 0.39). In these patients, 3-year overall survival was 61% among those who underwent transplant vs 24% among those who did not.

In MRD-negative patients, transplant in first complete remission did not improve overall survival despite improved relapse-free survival (HR, 0.50). This outcome, Dr. Othman explained, probably occurred because most patients who did not undergo transplant and who relapsed were salvaged, with about two thirds undergoing a transplant during their second complete remission.

Results in patients with NPM1 and FLT3-ITD co-mutation mirrored those in the overall population: MRD-positive patients in first complete remission who underwent transplant demonstrated improved overall survival compared with those without transplant (HR, 0.52), but the overall survival benefit did not extend to MRD-negative patients (HR, 0.80).

The findings show that molecular MRD after induction chemotherapy can identify patients with NPM1-mutated AML who are more likely to benefit from transplant in first remission, Dr. Othman concluded. However, he noted, because only 16% of patients overall were older than 60 years, the results may not be generalizable to older patients.

A version of this article appeared on Medscape.com.

FROM ASH 2023

Federal program offers free COVID, flu at-home tests, treatments

The U.S. government has expanded a program offering free COVID-19 and flu tests and treatment.

The Home Test to Treat program is virtual and offers at-home rapid tests, telehealth sessions, and at-home treatments to people nationwide. The program is a collaboration among the National Institutes of Health, the Administration for Strategic Preparedness and Response, and the CDC. It began as a pilot program in some locations this year.

“With its expansion, the Home Test to Treat program will now offer free testing, telehealth and treatment for both COVID-19 and for influenza (flu) A and B,” the NIH said in a press release. “It is the first public health program that includes home testing technology at such a scale for both COVID-19 and flu.”

The news release says that anyone 18 or over with a current positive test for COVID-19 or flu can get free telehealth care and medicine delivered to their home.

Adults who don’t have COVID-19 or the flu can get free tests if they are uninsured or are enrolled in Medicare, Medicaid, the Veterans Affairs health care system, or Indian Health Services. If they test positive later, they can get free telehealth care and, if prescribed, treatment.

“I think that these [telehealth] delivery mechanisms are going to be absolutely crucial to unburden the in-person offices and the lines that we have and wait times,” Michael Mina, MD, chief science officer at eMed, the company that helped implement the new Home Test to Treat program, told ABC News.

ABC notes that COVID tests can also be ordered at covidtests.gov – four tests per household or eight for those who have yet to order any this fall.

A version of this article appeared on WebMD.com.
