Return on investment slipping in biomedical research

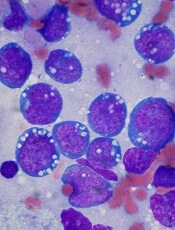

Photo by Rhoda Baer

As more and more money has been spent on biomedical research in the US over the past 50 years, there has been diminished return on investment in terms of life expectancy gains and new drug approvals, according to a report published in PNAS.

Investigators found that the number of scientists in the US has increased about 9-fold since 1965, and the National Institutes of Health (NIH) budget has increased about 4-fold.

But the number of new drugs approved by the Food and Drug Administration has only increased about 2-fold, and life expectancy gains have remained constant, at roughly 2 months per year.
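A back-of-the-envelope way to read these fold-changes is to divide the growth in output by the growth in each input. The short sketch below does only that arithmetic, using the rounded figures quoted above. The variable names are illustrative, and this is not the analysis performed in the PNAS report.

```python
# Rounded fold-changes since 1965, as quoted in the article.
scientists_fold = 9.0       # number of US scientists
nih_budget_fold = 4.0       # NIH budget
drug_approvals_fold = 2.0   # new FDA drug approvals

# Output growth relative to input growth (1.0 would mean output kept pace).
approvals_per_budget = drug_approvals_fold / nih_budget_fold
approvals_per_scientist = drug_approvals_fold / scientists_fold

print(f"Approvals relative to budget: {approvals_per_budget:.2f}")        # 0.50
print(f"Approvals relative to scientists: {approvals_per_scientist:.2f}")  # 0.22
```

On these rounded numbers, approvals grew about half as fast as the budget and under a quarter as fast as the scientific workforce.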

“The idea of public support for biomedical research is to make lives better, but there is increasing friction in the system,” said study author Arturo Casadevall, MD, PhD, of the Johns Hopkins Bloomberg School of Public Health in Baltimore, Maryland.

“We are spending more money now just to get the same results we always have, and this is going to keep happening if we don’t fix things.”

“There is something wrong in the process, but there are no simple answers,” said study author Anthony Bowen, an MD/PhD student at Albert Einstein College of Medicine in the Bronx, New York.

“It may be a confluence of factors that are causing us not to be getting more bang for our buck.”

Bowen and Dr Casadevall said one such factor may be that increased regulations have added to the non-scientific burdens on scientists who could otherwise spend more time at the bench.

Another potential explanation is that the “easy” cures for various conditions have already been found, and tackling cancers, Alzheimer’s disease, and autoimmune diseases, for example, is inherently more complex.

Dr Casadevall and Bowen also cited “perverse” incentives for researchers to cut corners or oversimplify their studies to gain acceptance into top-tier medical journals. The pair said this has led to an “epidemic” of retractions and findings that cannot be reproduced and are therefore worthless.

“The medical literature isn’t as good as it used to be,” Dr Casadevall said. “The culture of science appears to be changing. Less important work is being hyped, when the quality of work may not be clear until decades later when someone builds on your success to find a cure.”

In one recent study, researchers estimated that more than $28 billion, from both public and private sources, is spent each year in the US on preclinical research that can’t be reproduced, and the prevalence of these studies in the literature is 50%.

“We have more journals and more papers than ever,” Bowen said. “But the number of biomedical publications has dramatically outpaced the production of new drugs, which are key to improving people’s lives, especially in areas for which we have no good treatments.”

Dr Casadevall said he doesn’t doubt that more cures for diseases are out there to be found, and a more efficient system of biomedical research could help push along scientific discovery.

“Scientists, regulators, and citizens need to take a hard look at the scientific enterprise and see which [problems] can be resolved,” he said. “We need a system with rigor, reproducibility, and integrity, and we need to find a way to get there as soon as we can.”

FDA approves new formulation of pain patch for cancer patients

receiving treatment

Photo by Rhoda Baer

The US Food and Drug Administration (FDA) has approved a new formulation of fentanyl buccal soluble film CII (Onsolis), a patch used to manage breakthrough pain in adult cancer patients who are opioid-tolerant.

This decision allows BioDelivery Sciences International, Inc. (BDSI), the company developing Onsolis, to bring the product back to the US marketplace.

However, the company said this will not happen before 2016.

Onsolis is an opioid agonist indicated for the management of breakthrough pain in cancer patients 18 years of age and older who are already receiving and are tolerant to opioid therapy for their underlying persistent cancer pain.

Onsolis utilizes BioErodible MucoAdhesive drug delivery technology, which consists of a small, bioerodible polymer film that is applied to the inner lining of the cheek. Onsolis is the only differentiated fentanyl-containing product for this indication that provides buccal administration.

Onsolis off the US market

Onsolis was originally approved by the FDA in July 2009, but BDSI stopped manufacturing the product in March 2012, after the FDA uncovered 2 issues with Onsolis.

The FDA found that, during Onsolis’s 24-month shelf-life, microscopic crystals formed on the product, and the color faded slightly. BDSI said these changes did not affect the product’s underlying integrity or safety, but the FDA thought the fading color in particular might cause patients to question the product’s efficacy.

So the FDA required that Onsolis be modified before additional product could be manufactured and distributed. Supplies of Onsolis that were already on the market remained on the market.

An analysis by BDSI showed that the changes in Onsolis were related to an excipient used in the manufacturing process that could be removed to resolve the problem.

The excipient was specific to the manufacture of Onsolis in the US. Therefore, it did not impact the launch of Breakyl, which is the brand name for Onsolis in the European Union.

Return to market

After BDSI reformulated Onsolis to prevent the aforementioned changes in the product’s appearance, the FDA approved the product’s return to market.

“We are pleased to have obtained FDA approval of our [supplemental new drug application] and to now be in a position to move toward returning Onsolis to the US marketplace,” said Mark A. Sirgo, PharmD, President and Chief Executive Officer of BDSI.

“Although we have options for Onsolis, including commercializing it on our own, our current plan is to determine the value we can secure in a partnership with a company that has access to the target physician audience. We have been engaged with a number of potential partners, and, with this approval, we can now proceed forward with those discussions in earnest. We will provide more definitive timing in the near future about the reintroduction, but this would not be prior to 2016.”

Once Onsolis does return to the market, it will only be available via the Transmucosal Immediate Release Fentanyl (TIRF) Risk Evaluation and Mitigation Strategy (REMS) program. This is an FDA-required program designed to mitigate the risk of misuse, abuse, addiction, overdose, and serious complications due to medication errors with the use of TIRF medicines.

Outpatients, healthcare professionals who prescribe to outpatients, pharmacies, and distributors must enroll in the program to receive Onsolis. Further information is available at www.TIRFREMSAccess.com.

How malaria increases the risk of Burkitt lymphoma

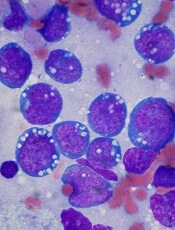

Image by Ed Uthman

A link between malaria and Burkitt lymphoma was first described more than 50 years ago, but how the parasitic infection promotes lymphomagenesis has remained a mystery.

Now, research in mice has revealed that B-cell DNA becomes vulnerable to cancer-causing mutations during prolonged combat against the malaria parasite.

Davide Robbiani, MD, PhD, of The Rockefeller University in New York, New York, and his colleagues described this research in Cell.

The team infected mice with the malaria parasite Plasmodium chabaudi and, immediately, the mice experienced an increase in germinal center (GC) B lymphocytes, which can give rise to Burkitt lymphoma.

“In malaria-infected mice, these cells divide very rapidly over the course of months,” Dr Robbiani said.

As the GC B lymphocytes proliferate, they also express high levels of activation-induced cytidine deaminase (AID), which induces mutations in their DNA. As a result, these cells can diversify to generate a wide range of antibodies.

But in addition to beneficial mutations in antibody genes, AID can cause off-target damage and shuffling of cancer-causing genes.

“In mice infected with the malaria parasite, these so-called chromosomal rearrangements occur very frequently in GC lymphocytes,” Dr Robbiani said. “And at least some of the changes are due to AID.”

To further investigate this phenomenon, the researchers bred mice lacking the p53 gene, which is known to protect cells from Burkitt lymphoma. All of the mice that expressed AID but not p53 ultimately developed lymphoma.

And when these mice were infected with the malaria parasite, they developed lymphomas specifically in mature B cells, similar to what happens in Burkitt lymphoma.

“This finding sheds new light on a long-standing mystery of why two seemingly different diseases are associated with each other,” Dr Robbiani said.

Researchers are now attempting to determine how AID causes its off-target damage to DNA, which could lead to new treatments.

“If we could somehow limit this collateral damage to cancer-causing genes without reducing the infection-fighting powers of B cells, that could be very useful,” Dr Robbiani said. “But first, we have to find out how the collateral DNA damage occurs in the first place.”

Dr Robbiani noted that hepatitis C virus and Helicobacter pylori infections, as well as some autoimmune diseases, are also linked with chronic B lymphocyte activation and an increased risk of lymphoma.

Therefore, strategies aimed at reducing unintended DNA damage caused by AID might also help reduce the risk of lymphoma in patients with these conditions.

“It’s possible that AID also plays a role in the association between these other infections and cancer,” Dr Robbiani said. “This is purely a speculation at this point, though highly suggestive.”

Algorithm can enhance clustering, aid trial design

Chenyue Wendy Hu

Photo courtesy of Jeff Fitlow and Rice University

A newly developed algorithm for “big data” could have a significant impact on clinical trials, according to researchers.

The algorithm, called progeny clustering, was the only method to successfully reveal “clinically meaningful” groupings of proteomic data from patients with acute myeloid leukemia.

And the algorithm is currently being used in a hospital study to identify optimal treatment for children with leukemia.

Details on progeny clustering have been published in Scientific Reports.

The authors noted that clustering is important for its ability to reveal information in complex sets of data like medical records.

“Doctors who design clinical trials need to know how to group patients so they receive the most appropriate treatment,” said author Amina Qutub, PhD, of Rice University in Houston, Texas. “First, they need to estimate the optimal number of clusters in their data.”

The more accurate the clusters, the more personalized the treatment can be, Dr Qutub said. She added that separating groups by a single data point would be easy, but when separating patients by the types of proteins in their bloodstreams, for example, it becomes more difficult.

“That’s the kind of data that’s become prevalent everywhere in biology, and it’s good to have,” Dr Qutub said. “We want to know hundreds of features about a single person. The problem is identifying how to use all that data.”

Progeny clustering provides a way to ensure the number of clusters is as accurate as possible, Dr Qutub said. The algorithm extracts characteristics about patients from a data set, mixing and matching them randomly to create artificial populations—the “progeny” of the parent data. The characteristics appear in roughly the same ratios in the progeny as they do among the parents.

These characteristics, called dimensions, can be anything: as simple as hair color or place of birth, or as detailed as blood cell count or the proteins expressed by tumor cells. For even a small population, each individual may have hundreds or thousands of dimensions.

By creating progeny with the same dimensions of features, the algorithm increases the size of the data set. With this additional data, the distinct patterns become more apparent, allowing the algorithm to optimize the number of clusters that warrant attention from doctors and researchers.
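The “mix and match” step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors’ implementation. The function name `make_progeny` and the toy data are hypothetical, each progeny value is assumed to be drawn independently per dimension from the parent group, and the scoring used to pick the number of clusters is not shown because the article does not describe it.

```python
import random

def make_progeny(parents, n_progeny, rng):
    """Create artificial samples from a group of parent samples.

    Each progeny takes, for every dimension, the value of a randomly
    chosen parent, so values recur in roughly the same ratios as in
    the parent group (an assumed reading of "mixing and matching").
    """
    n_dims = len(parents[0])
    progeny = []
    for _ in range(n_progeny):
        # Pick a (possibly different) parent for each dimension.
        child = [rng.choice(parents)[d] for d in range(n_dims)]
        progeny.append(child)
    return progeny

# Hypothetical toy group: 4 patients described by 3 dimensions.
parents = [
    [1.0, 10.0, 100.0],
    [2.0, 20.0, 200.0],
    [3.0, 30.0, 300.0],
    [4.0, 40.0, 400.0],
]

rng = random.Random(0)  # fixed seed so the run is repeatable
progeny = make_progeny(parents, n_progeny=8, rng=rng)
print(len(progeny), len(progeny[0]))  # 8 3
```

Every value in a progeny column comes from the same column of some parent, so the artificial population enlarges the data set without introducing values that were never observed.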

Dr Qutub said this technique is just as reliable as state-of-the-art clustering evaluation algorithms, but at a fraction of the computational cost. In lab tests, progeny clustering compared favorably to other popular methods.

And it was the only method to provide clinically meaningful groupings in an acute myeloid leukemia reverse-phase protein array data set.

Progeny clustering also allows researchers to determine the ideal number of clusters in small populations, Dr Qutub noted.

The algorithm was used to design an ongoing trial involving leukemia patients at Texas Children’s Hospital.

“Progeny clustering allowed them to design a robust clinical trial, even though that trial did not involve a large number of children,” Dr Qutub said. “It meant they didn’t have to wait to enroll more.”

Dr Qutub added that the algorithm could apply to any data set.

“We could just as easily use it for a population of voters to see who should get campaign materials from a candidate,” she said. “Progeny clustering has a lot of possible applications.”

Dr Qutub and her colleagues plan to make the algorithm available for free on her lab’s website.

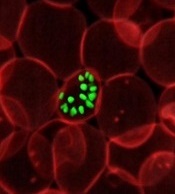

Nanoparticle-based vaccine could prevent EBV

Photo by Sakura Midori

Researchers say they have developed a nanoparticle-based vaccine against Epstein-Barr virus (EBV) that can induce potent neutralizing antibodies in mice and monkeys.

These results suggest that using a structure-based vaccine design and self-assembling nanoparticles to deliver a viral protein that prompts an immune response could be a promising approach for developing an EBV vaccine for humans.

Most efforts to develop a preventive EBV vaccine have focused on glycoprotein 350 (gp350), a molecule on the surface of EBV that helps the virus attach to B cells. EBV gp350 is thought to be a key target for antibodies capable of preventing viral infection.

Previously, researchers showed that vaccinating monkeys with gp350 protected the animals from developing lymphomas after exposure to a high dose of EBV.

However, in the only large human trial of an experimental EBV vaccine conducted to date, the EBV gp350 vaccine did not prevent EBV infection, although it did reduce the rate of infectious mononucleosis by 78%.

With this in mind, Masaru Kanekiyo, DVM, PhD, of the National Institutes of Health in Bethesda, Maryland, and his colleagues set out to create a better vaccine.

They described their work in a paper published in Cell.

The team designed a nanoparticle-based vaccine that expressed the cell-binding portion of gp350. In tests, the experimental vaccine induced potent neutralizing antibodies in both mice and cynomolgus macaques (Macaca fascicularis).

In fact, compared with soluble gp350, the nanoparticle-based vaccine induced 10- to 100-fold higher levels of neutralizing antibodies in mice.

The researchers believe the nanoparticle vaccine design could be used to create or redesign vaccines against other pathogens as well.

Platform simplifies data analysis, team says

Photo by Rhoda Baer

Researchers say they have developed a user-friendly platform for analyzing transcriptomic and epigenomic sequencing data.

This platform, BioWardrobe, was designed to help biomedical researchers analyze data that might answer questions about diseases and basic biology.

“Although biologists can perform experiments and obtain the data, they often lack the programming expertise required to perform computational data analysis,” said Artem Barski, PhD, of the University of Cincinnati in Ohio.

“BioWardrobe aims to empower researchers by bridging this gap between data and knowledge.”

Dr Barski and Andrey Kartashov, also of the University of Cincinnati, described BioWardrobe in Genome Biology.

The pair said the recent proliferation of sequencing-based methods for analysis of gene expression, chromatin structure, and protein-DNA interactions has widened our horizons, but the volume of data obtained from sequencing requires computational data analysis.

Unfortunately, the bioinformatics and programming expertise required for this analysis may be absent in biomedical laboratories. And this can result in data inaccessibility or delays in applying modern sequencing-based technologies to pressing questions in basic and health-related research.

Dr Barski and Kartashov believe BioWardrobe can solve those problems by providing a “biologist-friendly” web interface.

BioWardrobe users can download data from institutional facilities or public databases, map reads, and visualize results on a genome browser. The platform also allows for differential gene expression and binding analysis, and it can create average tag-density profiles and heatmaps.

Dr Barski and Kartashov plan to continue improving BioWardrobe and continue using the platform in their own research on epigenetic regulation in the immune system, as well as in collaborative projects with other investigators.

Method can predict genes likely to cause diseases

Photo by Darren Baker

A new computational method improves the detection of genes linked to complex diseases and biological traits, according to researchers.

The method, PrediXcan, estimates gene expression levels across the whole genome and integrates this data with genome-wide association study (GWAS) data.

Researchers say PrediXcan has the potential to identify gene targets for therapeutic applications faster and with greater accuracy than traditional methods.

“PrediXcan tells us which genes are more likely to affect a disease or trait by learning the relationship between genotype, gene expression levels from large-scale transcriptome studies, and disease associations from GWAS studies,” said Hae Kyung Im, PhD, of the University of Chicago in Illinois.

“This is the first method that accounts for the mechanisms of gene regulation and can be applied to any heritable disease or phenotype.”

Dr Im and her colleagues described the method in Nature Genetics.

They said PrediXcan uses computational algorithms to learn how genome sequence influences gene expression, based on large-scale transcriptome datasets. This can then be used to create computational estimates of gene expression levels from any whole-genome sequence or chip dataset.

Genomes that have been sequenced as part of a GWAS can be run through PrediXcan to generate a gene expression level profile, which is then analyzed to determine the association between gene expression levels and the disease states or the trait of interest being studied.

The researchers said this method can reveal potentially causal genes and determine directionality—whether high or low levels of expression might cause the disease or trait.
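The two steps described above (estimating expression from genotype, then testing its association with a trait) can be sketched as follows. This is an illustration only, assuming a simple additive model in which predicted expression is a weighted sum of allele dosages; the variant names, weights, genotypes, and trait values are all hypothetical, and the code is not the PrediXcan software.

```python
from math import sqrt

# Hypothetical per-variant weights for one gene, assumed to have been
# learned beforehand from a reference transcriptome dataset.
weights = {"rs_a": 0.5, "rs_b": -0.3}

def predict_expression(genotype, weights):
    """Weighted sum of allele dosages (0, 1, or 2) for one individual."""
    return sum(w * genotype.get(snp, 0) for snp, w in weights.items())

def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Hypothetical GWAS participants: genotype dosages and a measured trait.
genotypes = [
    {"rs_a": 0, "rs_b": 2},
    {"rs_a": 1, "rs_b": 1},
    {"rs_a": 2, "rs_b": 0},
    {"rs_a": 2, "rs_b": 1},
]
trait = [1.0, 2.1, 3.9, 3.2]

# Step 1: estimate expression from DNA alone (no physical measurement).
predicted = [predict_expression(g, weights) for g in genotypes]
# Step 2: associate the predicted expression with the trait.
r = pearson(predicted, trait)
```

In this toy data the correlation is positive, which would correspond to higher predicted expression accompanying higher trait values; a negative value would point in the opposite direction.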

As calculations are based on DNA sequence data and not physical measurements, PrediXcan can tease apart the genetically determined component of gene expression from the effects of the trait itself (avoiding reverse causality) and other factors such as environment.

The researchers said that, with PrediXcan, validation studies need to test a few thousand genes, instead of millions of potential single mutations. In addition, the method can be used to re-analyze existing genomic datasets, with a focus on mechanism, in a high-throughput manner.

“This integrates what we know about consequences of genetic variation in the transcriptome in order to discover genes, instead of just looking at mutations,” Dr Im said. “In a way, we’re modeling one mechanism through which genes affect disease or traits, which is the regulation of gene expression level.”

Dr Im noted that, because PrediXcan creates estimates based on genome sequence data, it is most accurate for strongly heritable traits. However, almost every complex trait or disease has a genetic component. The method can be used to predict the influence of that component, reducing the complexity of follow-up studies.

Dr Im is now working to improve the prediction of PrediXcan and applying it to mental health disorders. In addition, she is working to expand it beyond gene expression levels, to predict the links between diseases or traits and protein levels, epigenetics, and other measurements that can be estimated based on genomic data.

“GWAS studies have been incredibly successful at finding genetic links to disease, but they have been unable to account for mechanism,” Dr Im said. “We now have a computational method that allows us to understand the consequences of GWAS studies.”

How religion affects well-being in cancer patients

Photo by Petr Kratochvil

Three meta-analyses shed new light on the role religion and spirituality play in cancer patients’ mental, social, and physical well-being.

The analyses, published in Cancer, indicate that religion and spirituality have significant associations with patients’ health.

But investigators observed wide variability among studies with regard to how different dimensions of religion and spirituality relate to different aspects of health.

In the first analysis, the investigators focused on physical health. Patients reporting greater overall religiousness and spirituality reported better physical health, greater ability to perform their usual daily tasks, and fewer physical symptoms of cancer and treatment.

“These relationships were particularly strong in patients who experienced greater emotional aspects of religion and spirituality, including a sense of meaning and purpose in life as well as a connection to a source larger than oneself,” said study author Heather Jim, PhD, of the Moffitt Cancer Center in Tampa, Florida.

Dr Jim noted that patients who reported greater cognitive aspects of religion and spirituality, such as the ability to integrate the cancer into their religious or spiritual beliefs, also reported better physical health. However, physical health was not related to behavioral aspects of religion and spirituality, such as church attendance, prayer, or meditation.

In the second analysis, the investigators examined patients’ mental health. The team discovered that emotional aspects of religion and spirituality were more strongly associated with positive mental health than behavioral or cognitive aspects of religion and spirituality.

“Spiritual well-being was, unsurprisingly, associated with less anxiety, depression, or distress,” said study author John Salsman, PhD, of Wake Forest School of Medicine in Winston-Salem, North Carolina.

“Also, greater levels of spiritual distress and a sense of disconnectedness with God or a religious community was associated with greater psychological distress or poorer emotional well-being.”

The third analysis pertained to social health, or patients’ capacity to retain social roles and relationships in the face of illness. Religion and spirituality, as well as each of their dimensions, had modest but reliable links with social health.

“When we took a closer look, we found that patients with stronger spiritual well-being, more benign images of God (such as perceptions of a benevolent God rather than an angry or distant God), or stronger beliefs (such as convictions that a personal God can be called upon for assistance) reported better social health,” said study author Allen Sherman, PhD, of the University of Arkansas for Medical Sciences in Little Rock. “In contrast, those who struggled with their faith fared more poorly.”

The investigators believe future research should focus on how relationships between religious or spiritual involvement and health change over time and whether support services designed to enhance particular aspects of religion and spirituality in interested patients might help improve their well-being.

“In addition, some patients struggle with the religious or spiritual significance of their cancer, which is normal,” Dr Jim said. “How they resolve their struggle may impact their health, but more research is needed to better understand and support these patients.”

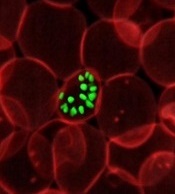

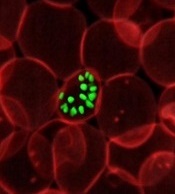

Compound could aid fight against malaria

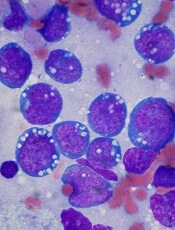

infecting an RBC

Image courtesy of St. Jude Children’s Research Hospital

Luminol, the compound detectives spray at crime scenes to find trace amounts of blood, can help kill malaria parasites, according to preclinical research published in eLife.

Luminol glows blue when it encounters the hemoglobin in red blood cells (RBCs), and researchers have found they can trick malaria-infected RBCs into building up a volatile chemical stockpile that can be set off by luminol’s glow.

To achieve this, the researchers exposed infected RBCs to an amino acid known as 5-aminolevulinic acid (ALA), luminol, and 4-iodophenol (a small molecule that enhances the intensity and duration of luminol chemiluminescence).

This triggered a buildup of the chemical protoporphyrin IX (PPIX), which effectively killed the parasites. When the team substituted artemisinin for 4-iodophenol, they observed similar results.

“The light that luminol emits is enhanced by the antimalarial drug artemisinin,” said study author Daniel Goldberg, MD, PhD, of the Washington University School of Medicine in St Louis, Missouri.

“We think these agents could be combined to form an innovative treatment for malaria.”

The researchers believe this type of therapy would have an advantage over current malaria treatments, which have become less effective as the parasite mutates. That is because the new approach targets proteins made by human RBCs, which the parasite can’t mutate.

To uncover this approach, Dr Goldberg and his colleagues worked with human RBCs infected with Plasmodium falciparum. The team wanted to better understand how the parasite gets hold of heme, which is essential to the parasite’s survival.

They found that P falciparum opens an unnatural channel on the surface of RBCs. When the researchers put ALA (an ingredient of heme) into a solution containing infected RBCs, ALA entered the cells through the channel and started the heme-making process.

This led to a buildup of PPIX. When exposed to luminol and 4-iodophenol, PPIX emitted free radicals. This potently inhibited parasite growth, according to the researchers. And microscopic examination revealed widespread parasite death.

The ALA/luminol/4-iodophenol combination also worked in a parasite line that was resistant to antifolate and quinolone antibiotics, as well as one with a kelch-13 protein mutation, which confers artemisinin tolerance.

The researchers then wanted to determine if artemisinin would enhance their strategy. So they incubated malaria-infected RBCs with ALA, luminol, and/or sub-therapeutic doses of dihydroartemisinin.

Each of the components alone or 2 of them together had little effect, but all 3 in combination successfully ablated parasite growth.

The researchers are now planning to test this treatment approach in vivo.

“All of these agents—the amino acid, the luminol, and artemisinin—have been cleared for use in humans individually, so we are optimistic that they won’t present any safety problems together,” Dr Goldberg said. “This could be a promising new treatment for a devastating disease.”
