
Ergonomic Strain in Minimally Invasive Surgery: Addressing the Strain Epidemic

From the Division of Reproductive Endocrinology and Infertility, Department of Obstetrics, Gynecology and Reproductive Science, Robert Wood Johnson Medical School, Rutgers University, New Brunswick, NJ (Dr. Franasiak), and the Division of Gynecologic Oncology, Department of Obstetrics and Gynecology, University of North Carolina, Chapel Hill, NC (Dr. Gehrig).

Abstract

- Background: Minimally invasive surgery (MIS) benefits both patients and society, and its use has increased markedly over the past 3 decades. Its introduction presented surgeons with new mental and physical challenges, raising concerns about operative ergonomics. Applied ergonomics has been used to study and improve operative techniques and technologies as they apply to MIS.

- Objective: To review the ergonomic challenges presented by both traditional and robot-assisted MIS and to discuss how ergonomic science has evolved to address these issues.

- Methods: Review of the literature involving MIS and applied ergonomics.

- Results: Surgeon strain related to MIS was historically thought to occur in only approximately 15% of MIS surgeons. More recent data suggest this number is much higher: rates of strain have been reported to be as high as 88% among traditional MIS surgeons and 45% among robot-assisted MIS surgeons. Strain results from a number of factors, including instrument design and use, optics placement and resolution, patient and surgeon positioning, and the drive to implement surgical technologies that aim to further minimize the invasiveness of surgical procedures.

- Conclusion: Improvements in applied ergonomics in MIS have resulted in improved optics, more sophisticated and ergonomic instruments, and methods of optimizing positioning. However, despite these advancements, ergonomic strain rates amongst surgeons remain alarmingly high. With the ever-increasing demand for MIS, more research and development as well as MIS surgeon training are needed to improve the safety of surgeons and ensure the career longevity required to meet the patient and societal demand for MIS.

Since its introduction to North America in the 1980s, minimally invasive surgery (MIS) has become widely accepted and practiced across surgical disciplines including general surgery, gynecologic surgery, oncology, and thoracic surgery [1]. For procedures once performed through large incisions, such as cholecystectomy, approaches using 2 or 3 small punctures have become the gold standard.

The demand for MIS has been rising and is driven by both providers and patients. Minimally invasive approaches have been shown to decrease recovery time, postoperative pain, blood loss, and other surgical complications [2,3], allowing patients and their support networks to return to baseline function more quickly [4]. In this way, both individual patients and society as a whole benefit from MIS through shorter recovery times and a faster return to productivity.

Despite the clear benefits to patients and society, there is increasing evidence of an unanticipated side effect of MIS: surgeon ergonomic strain and injury [5]. Although the same procedure is ultimately performed whether open or minimally invasive techniques are used, surgeons have reported increased physical stress and mental strain when using minimally invasive technologies [6,7]. The phenomenon was first noted during the laparoscopic surgery boom of the early 1990s and has been revisited more recently in the setting of both traditional and robot-assisted MIS techniques [5,8,9].

These problems arise because surgeons are, by definition, operating with reduced access to the patient. This limits the degrees of freedom of movement, requires specialized and often awkward or cumbersome instruments, and necessitates an intracorporeal camera that projects the surgical field onto a screen, which increases mental strain due to perceptual challenges as well as visual strain [7,10–13].

The initial large survey studies characterizing surgeon strain during MIS found rates of strain and discomfort in the 12% to 18% range, with many respondents reporting that strain persisted beyond operative time [11,14]. These early estimates focused in part on very experienced surgeons and thus may have underestimated the rates of strain. Subsequent studies reported rates of strain in the 40% to 60% range [15,16]; these studies included a larger and more heterogeneous group of MIS surgeons, which may explain the higher rates. Most recently, amid an ever-growing demand for MIS, large survey studies have found rates of surgeon strain as high as 87% and 88% among traditional MIS surgeons and 45% among robot-assisted MIS surgeons, with 26% reporting strain that persisted beyond robotic console time [5,9,17]. This prolonged strain can affect productivity, with 14% of surgeons limiting the number of cases they perform per day, and quality of life, with 29% needing to seek treatment for MIS-related strain [9].

Here we review the 2 major forms of MIS, traditional and robotic-assisted surgery. The unique features of each type of MIS that predispose to surgeon strain are discussed along with the techniques and technologies that have been employed to improve the ergonomics of MIS and reduce surgeon strain.

Traditional MIS

Traditional MIS, developed in the 1980s, uses a surgeon-manipulated intracorporeal video camera to view the surgical field. Instruments are placed through fixed ports, called trocars, inserted through the body wall. In most MIS suites, 1 or more surgeons stand beside the patient holding the camera and surgical instruments and view the surgical field on monitors placed around the patient.

The field and its technology have evolved greatly since their inception. However, a number of factors continue to create ergonomic strain during traditional MIS.

Instruments

MIS instruments are limited by several factors. They must have long, thin shafts that can be inserted and removed through fixed trocars, the majority of which are 5 to 10 mm in diameter. Because the trocar acts as a fixed pivot point, instrument motion is inverted within the operative cavity, which requires mental adjustment and scaling. Additionally, MIS instruments are limited to 5 degrees of freedom, allowing less dexterity than open surgery, in which large incisions accommodate the surgeon's hands and permit more degrees of freedom [18]. These limitations have historically yielded instruments that are not ergonomically sound. Indeed, over 80% of minimally invasive surgeons identify MIS instruments as an ergonomic problem [19].

Additionally, because the surgeon's hands are often occupied with the camera and an operative tool, activating suction and electrocautery devices often requires foot pedals. Keeping the instrument and camera optimally positioned and relatively stationary while simultaneously activating a foot pedal can exacerbate poor posture and back strain as the surgeon balances on one foot. Foot pedals placed around the operating table also further limit the space available for proper surgeon positioning [19].

Ergonomic engineering has focused on instrument handles. To accommodate the varied sizes of surgeons' hands, many manufacturers have altered handle sizes to allow a more comfortable grip. To address the poor posture induced by foot pedals, many instruments now have finger-trigger or thumb-activated buttons on the handle itself, eliminating the need to reposition to activate a foot pedal. However, many of these handle designs may not accommodate smaller hands.

Optics

A major limitation of MIS is the restricted visual field. The video monitor is positioned outside the sterile operative field, often requiring the surgeon to look in one direction while operating in another, which places strain in both the axial (rotational) and frontal (flexion/extension) planes [20]. The surgeon does not have immediate visual access to the entire surgical field but must instead rely on movement of the camera, which can at times force unnatural and uncomfortable positions to place the camera optimally [19]. Additionally, eye strain can result from continuously viewing the operative monitor throughout the surgery. Finally, until recently, optical systems required operating in 2 dimensions, without the depth perception available during traditional open surgery.

To address neck and upper body strain as well as visual strain, operative monitor positioning has received significant emphasis. The original MIS video monitors were small, had poor resolution, and were fixed in position. Over time, monitors have increased in size and resolution, allowing easier viewing and decreased visual strain. Additionally, in the 1990s the MIS operative suite concept placed monitors on swing arms that can be moved easily around the operating room. Subsequently, systems employing multiple monitors placed around the patient at different angles, with independent height and inclination adjustment, allowed comfortable positioning for all members of the surgical team, particularly when 2 or more surgeons are operating simultaneously [21]. These monitor systems not only decrease ergonomic strain but have also been shown to improve intraoperative speed and surgical accuracy when performing standardized tasks [22,23].

The most recent advance in surgical optics has been the introduction of 3-dimensional (3D) imaging systems [21,24,25]. At present, most of these systems are cost prohibitive and have poorer resolution than traditional 2-dimensional (2D) monitors, a limitation that may in fact increase visual strain. The modern high-definition 2D monitor systems in current use have done much to decrease visual strain, and further refinement of 3D technology may mitigate this strain even further.

Operative Posture

MIS often involves assuming unnatural postures to manipulate instruments and view the operative monitors. When a non-neutral posture is maintained, muscles must expend more energy to generate the same contractile force, and the force required to stabilize the joints increases [20]. Maintaining these static positions for long periods leads to rapid fatigue, muscle pain, cramping, and strain that can persist after the operation is complete [19].

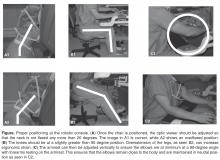

Attention to ideal posture is paramount during MIS. The surgeon should stand upright next to the patient with the head slightly bent forward, ideally shifting the position of the neck from time to time throughout the surgery to avoid prolonged static positioning [11]. The arms should rest with the elbows at the sides, bent 90 to 120 degrees to accommodate instrument manipulation. This angle can be tolerated for long periods, unlike angles that require the elbow to be held away from the body [19]. Whenever possible, the forearm should rest in the neutral rotational position, midway between pronation and supination, with the wrist slightly extended and the fingers slightly bent [26]. This neutral position allows rapid and simple changes in grip.

Adjusting table height or using operative foot platforms is crucial to keeping the arms in a neutral position. Because the patient is often positioned in steep Trendelenburg or reverse Trendelenburg for MIS, standard operating tables may not reach a height that allows the surgeon to operate in a relaxed, neutral posture [27,28]. In these circumstances, rather than operating with the arms and shoulders elevated, a position that produces rapid upper extremity fatigue, surgeons should raise themselves using an operative platform or step.

Single Incision Laparoscopic Surgery

Most recently, single-port laparoscopic surgery (SPLS), also called single-incision laparoscopic surgery (SILS), has been introduced. This technique uses a single, slightly larger incision for one port that accommodates several instruments and the operative camera. This amplifies several of the challenges posed by traditional MIS, namely maintaining exposure of the operative field, sustaining pneumatic pressure in the operative space, avoiding instrument collisions both intra- and extracorporeally, and avoiding instrument interference with the optics [29].

A number of techniques have been employed to minimize these issues. For example, percutaneous sutures may be placed intraoperatively in order to assist with retraction and improve visualization. The most important technological advances have come in the form of coaxial, flexible, and articulating instruments to avoid collisions [29]. While there is a learning curve with these technologies in terms of instrument triangulation, they can be successfully employed to improve operative efficiency and ergonomics.

Robot-Assisted MIS

Robot-assisted MIS uses intracorporeal instruments attached to robotic arms that are docked to trocars. The surgeon controls these arms from a computer console, and a video monitor is available for the surgical assistants. Many robot-assisted surgeries also involve an assistant who uses traditional MIS instruments and trocars; the ergonomic issues discussed above apply to this assistant surgeon. Here, we focus on the ergonomic challenges unique to the operating surgeon at the robotic console.

Several robotic systems have been developed for use in surgery. At present, only the da Vinci surgical system (Intuitive Surgical, Sunnyvale, CA) is in use in the United States. Several features of robotic systems offer potential ergonomic advantages over traditional MIS. First, the primary surgeon is seated at a robotic console rather than standing next to the patient. Second, the camera and instruments are held intracorporeally by the robotic system and controlled by the surgeon at the console. Third, the mechanical design of the robotic instruments allows many more degrees of freedom in range of motion, permitting techniques that more closely mimic open surgery.

The robotic surgical system has experienced rapid acceptance and growth over the past decade [30–33]. One small case comparison study and 1 large prospective analysis of over 200 procedures have suggested that robotic surgery is more ergonomically favorable and potentially less mentally stressful than conventional minimally invasive surgery [34,35]. However, although robotic surgery is often thought of as a tool to alleviate MIS-related strain [36,37], strain remains common in robotic surgery, with some survey data indicating rates as high as 45% [8,9]. As with traditional MIS, effective interventions are clearly needed to prevent and reduce strain and thereby avert work-related injury in robot-assisted MIS.

A primary cause of ergonomic strain during robot-assisted MIS is surgeons' lack of knowledge and training regarding proper ergonomic technique at seated console workstations, with as few as 16% of surgeons reporting any formal training [5,9,17,38]. Furthermore, compared with traditional MIS, there is little literature specific to robotic surgery ergonomics. Much of the available guidance has been extrapolated from the U.S. Department of Labor Occupational Safety and Health Administration (OSHA) guidelines for workstation working positions and from the literature on the ergonomics of microscopy, which, because of the somewhat similar positioning, has been adapted for robot-assisted MIS [39].

The Robotic Console

Applied ergonomics in robot-assisted MIS focuses primarily on robotic console set-up and surgeon positioning. Key elements are a comfortable headrest and adjustable ocular height, which relieve neck, shoulder, and upper back strain, and proper adjustment of the armrests and finger controls to minimize arm and upper back strain due to static load forces [40].

The most common pitfall during robot-assisted MIS occurs when the arms leave the armrest and the elbows flare out from the operator's sides. This departure from the neutral position, common when reaching for structures at the edge of the surgical field, causes significant tension and strain and often goes uncorrected for long periods. Frequent use of the robotic clutch, which freezes the intracorporeal arms while allowing the console controls to move freely, to bring the body back to a neutral position is of paramount importance [17].

Conclusion

The field of applied ergonomics in surgery has never been more important. Both traditional and robot-assisted MIS are growing rapidly, and demand for these technologies will only increase as outcomes continue to improve. Because the pool of surgeons is not growing fast enough to meet this demand, each surgeon will handle a greater volume of MIS cases.

With ergonomic strain now reported by as many as 88% of surgeons in some surveys, much of it persisting beyond operative time, surgeons may compensate by decreasing their operative volume and may retire earlier than they otherwise would have. This could create a health care supply problem as demand for MIS increases while the pool of surgeons available to perform it stays constant or shrinks.

To date, there has been relatively little research into this issue. The epidemiologic data on surgeon strain come primarily from survey research conducted within various MIS subspecialties. Some data are based on objective measures of strain using validated strain indicators, but more work in this area is needed. Standardized methods of reporting strain will help clarify the epidemiology and standardize assessment of the response to interventions. Studies that aim to reduce the reported rates of strain are also needed. Much of the early work focused on operating room set-up and resulted in great improvements; more work is needed to assess optimal ergonomic positioning and formal surgeon training.

The solution will involve a combination of engineering advances in operating room set-up and equipment design along with a renewed focus on teaching ergonomic techniques and principles to MIS surgeons. While early data are promising and show that ergonomic training sessions are easy to implement, acceptable to surgeons, and effective, more data are needed to develop optimal training sessions and modules for traditional and robot-assisted MIS ergonomics [17]. As ergonomic studies specifically designed for this population accrue, more data-driven guidelines can be developed and implemented.

Corresponding author: Jason M. Franasiak, MD, 140 Allen Rd., Basking Ridge, NJ 07920.

Financial disclosures: None

1. Cuschieri A. Laparoscopic surgery: current status, issues and future developments. Surgeon 2005;3:125–30, 132–3, 135–8.

2. Gehrig PA, Cantrell LA, Shafer A, et al. What is the optimal minimally invasive surgical procedure for endometrial cancer staging in the obese and morbidly obese woman? Gynecol Oncol 2008;111:41–5.

3. Walker JL, Piedmonte MR, Spirtos NM, et al. Laparoscopy compared with laparotomy for comprehensive surgical staging of uterine cancer: Gynecologic Oncology Group Study LAP2. J Clin Oncol 2009;27:5331–6.

4. Bell MC, Torgerson J, Seshadri-Kreaden U, et al. Comparison of outcomes and cost for endometrial cancer staging via traditional laparotomy, standard laparoscopy and robotic techniques. Gynecol Oncol 2008;111:407–11.

5. Park A, Lee G, Seagull FJ, et al. Patients benefit while surgeons suffer: an impending epidemic. J Am Coll Surg 2010;210:306–13.

6. Kant IJ, de Jong LC, van Rijssen-Moll M, Borm PJ. A survey of static and dynamic work postures of operating room staff. Int Arch Occup Environ Health 1992;63:423–8.

7. Patkin M, Isabel L. Ergonomics, engineering and surgery of endosurgical dissection. J R Coll Surg Edinb 1995;40:120–32.

8. Craven R, Franasiak J, Mosaly P, Gehrig PA. Ergonomic deficits in robotic gynecologic oncology surgery: a need for intervention. J Minim Invasive Gynecol 2013;20:648–55.

9. Franasiak J, Ko EM, Kidd J, et al. Physical strain and urgent need for ergonomic training among gynecologic oncologists who perform minimally invasive surgery. Gynecol Oncol 2012;126:437–42.

10. Berguer R. Surgical technology and the ergonomics of laparoscopic instruments. Surg Endosc 1998;12:458–62.

11. Berguer R. Surgery and ergonomics. Arch Surg 1999;134:1011–6.

12. Berguer R, Forkey DL, Smith WD. The effect of laparoscopic instrument working angle on surgeons’ upper extremity workload. Surg Endosc 2001;15:1027–9.

13. Lawson EH, Curet MJ, Sanchez BR, et al. Postural ergonomics during robotic and laparoscopic gastric bypass surgery: a pilot project. J Robot Surg 2007;1:61–7.

14. Van Veelen MA, Meijer DW. Ergonomics and design of laparoscopic instruments: results of a survey among laparoscopic surgeons. J Laparoendosc Adv Surg Tech A 1999;9:481–9.

15. Van Veelen MA, Nederlof EA, Goossens RHM, et al. Ergonomic problems encountered by the medical team related to products used for minimally invasive surgery. Surg Endosc 2003;17:1077–81.

16. Lawther RE, Kirk GR, Regan MC. Laparoscopic procedures are associated with a significant risk of digital nerve injury for general surgeons. Ann R Coll Surg Engl 2002;84:443.

17. Franasiak J, Craven R, Mosaly P, Gehrig PA. Feasibility and acceptance of a robotic surgery ergonomic training program. JSLS 2014;18(4).

18. Ballantyne GH. The pitfalls of laparoscopic surgery: challenges for robotics and telerobotic surgery. Surg Laparosc Endosc Percutan Tech 2002;12:1–5.

19. Matern U. Ergonomic deficiencies in the operating room: examples from minimally invasive surgery. Work 2009;33:165–8.

20. Van Det MJ, Meijerink WJHJ, Hoff C, et al. Optimal ergonomics for laparoscopic surgery in minimally invasive surgery suites: a review and guidelines. Surg Endosc 2009;23:1279–85.

21. Van Veelen MA, Jakimowicz JJ, Goossens RHM, et al. Evaluation of the usability of two types of image display systems, during laparoscopy. Surg Endosc 2002;16:674–8.

22. Haveran LA, Novitsky YW, Czerniach DR, et al. Optimizing laparoscopic task efficiency: the role of camera and monitor positions. Surg Endosc 2007;21:980–4.

23. Hanna GB, Shimi SM, Cuschieri A. Task performance in endoscopic surgery is influenced by location of the image display. Ann Surg 1998;227:481–4.

24. Ballantyne GH. Robotic surgery, telerobotic surgery, telepresence, and telementoring. Review of early clinical results. Surg Endosc 2002;16:1389–402.

25. Boppart SA, Deutsch TF, Rattner DW. Optical imaging technology in minimally invasive surgery. Current status and future directions. Surg Endosc 1999;13:718–22.

26. Matern U, Waller P. Instruments for minimally invasive surgery: principles of ergonomic handles. Surg Endosc 1999;13:174–82.

27. Matern U, Waller P, Giebmeyer C, et al. Ergonomics: requirements for adjusting the height of laparoscopic operating tables. JSLS 2001;5:7–12.

28. Van Veelen MA, Kazemier G, Koopman J, et al. Assessment of the ergonomically optimal operating surface height for laparoscopic surgery. J Laparoendosc Adv Surg Tech A 2002;12:47–52.

29. Tang B, Hou S, Cuschieri SA. Ergonomics of and technologies for single-port laparoscopic surgery. Minim Invasive Ther Allied Technol 2012;21:46–54.

30. Wexner SD, Bergamaschi R, Lacy A, et al. The current status of robotic pelvic surgery: results of a multinational interdisciplinary consensus conference. Surg Endosc 2009;23:438–43.

31. Challacombe BJ, Khan MS, Murphy D, Dasgupta P. The history of robotics in urology. World J Urol 2006;24:120–7.

32. Ballantyne GH, Moll F. The da Vinci telerobotic surgical system: the virtual operative field and telepresence surgery. Surg Clin North Am 2003;83:1293–304, vii.

33. Ruurda JP, van Vroonhoven TJ, Broeders IA. Robot-assisted surgical systems: a new era in laparoscopic surgery. Ann R Coll Surg Engl 2002;84:223–6.

34. Mohr CJ, Nadzam GS, Curet MJ. Totally robotic Roux-en-Y gastric bypass. Arch Surg 2005;140:779–86.

35. Talamini MA, Chapman S, Horgan S, Melvin WS; Academic Robotics Group. A prospective analysis of 211 robotic-assisted surgical procedures. Surg Endosc 2003;17:1521–4.

36. Mucksavage P, Kerbl DC, Lee JY. The da Vinci Surgical System overcomes innate hand dominance. J Endourol 2011;25:1385–8.

37. Schreuder HW, Verheijen RH. Robotic surgery. BJOG 2009;116:198–213.

38. Stone R, McCloy R. Ergonomics in medicine and surgery. BMJ 2004;328:1115–8.

39. Lux MM, Marshall M, Erturk E, Joseph JV. Ergonomic evaluation and guidelines for use of the daVinci Robot system. J Endourol 2010;24:371–5.

40. Sillanpaa J, Nyberg M, Laippala P. A new table for work with a microscope, a solution to ergonomic problems. Appl Ergon 2003;34:621–8.

From the Division of Reproductive Endocrinology and Infertility, Department of Obstetrics, Gynecology and Reproductive Science, Robert Wood Johnson Medical School, Rutgers University, New Brunswick, NJ (Dr. Fransasiak), and the Division of Gynecologic Oncology, Department of Obstetrics and Gynecology, University of North Carolina, Chapel Hill, NC (Dr. Gehrig).

Abstract

- Background: Minimally invasive surgery (MIS) has benefits to both patients and society and its use has increased markedly over the past 3 decades. With its introduction, new mental and physical challenges were presented to the surgeons, leading to concerns regarding operative ergonomics. Applied ergonomics has been used to study and improve operative techniques and technologies as they apply to MIS.

- Objective: To review the ergonomic challenges presented by both traditional MIS as well as robot-assisted MIS and discuss how ergonomic science has evolved to address these issues.

- Methods: Review of the literature involving MIS and applied ergonomics

- Results: Surgeon strain as it relates to MIS has historically been thought to occur in only approximately 15% of MIS surgeons. More recent data suggests this number is much higher. Rates of strain have been reported to be as high as 88% among traditional MIS surgeons and 45% among robotic-assisted MIS surgeons. Strain results from a number of factors, including instrument design and use, optics placement and resolution, patient and surgeon positioning, and the drive to implement surgical technologies which aim to further minimize the invasiveness of surgical procedures.

- Conclusion: Improvements in applied ergonomics in MIS have resulted in improved optics, more sophisticated and ergonomic instruments, and methods of optimizing positioning. However, despite these advancements, ergonomic strain rates amongst surgeons remain alarmingly high. With the ever-increasing demand for MIS, more research and development as well as MIS surgeon training are needed to improve the safety of surgeons and ensure the career longevity required to meet the patient and societal demand for MIS.

Since its introduction to North America in the 1980s, minimally invasive surgery (MIS) has become widely accepted and practiced across surgical disciplines including general surgery, gynecologic surgery, oncology, and thoracic surgery [1]. Procedures once done through large incisions, such as cholecystectomies, have been supplanted by those utilizing 2 or 3 small punctures as the gold standard.

The demand for MIS has been rising and is driven by both providers and patients. The minimally invasive approaches have been shown to decrease recovery time, result in less postoperative pain, and decrease blood loss and other surgical complications [2,3], allowing for patients and their supports to return to baseline function more quickly [4]. In this way, both individual patients and society as a whole derives benefits from MIS through decreased recovery times and return to productivity.

Despite the clear benefits to patients and society, there has been increasing evidence of an unanticipated side effect of MIS: surgeon ergonomic strain and injury [5].Although the same ultimate procedure is performed when open techniques are employed as when MIS is utilized, surgeons have reported increased physical stress and mental strain when utilizing minimally invasive technologies [6,7]. The phenomenon was first noted during the laparoscopic surgery boom of the early 1990s and has been revisited more recently in the setting of both traditional and robotic-assisted MIS techniques [5,8,9].

The source of the issues arises from the fact the surgeons are, by definition, operating with reduced access to the patient. This requires limiting the degrees of freedom in movements, employing specialized and often awkward or cumbersome instruments, and requiring use of an intracorporeal camera that projects the surgical field onto a screen, which causes increased mental strain due to perceptual challenges as well as visual strain [7,10–13].

The initial large survey studies characterizing surgeon strain during MIS revealed rates of strain and discomfort in the 12% to 18% range, with many reporting that strain was persistent and not simply limited to operative time [11,14]. In part, these early estimates focused on very experienced surgeons and thus may have underreported the rates of strain. Subsequently, other studies have quoted rates of strain in the 40% to 60% range [15,16]. These studies focused on a larger and more hetero-geneous group of MIS surgeons, which may explain the higher rates of strain. Most recently, in the setting of an ever growing demand for MIS, large survey studies have revealed rates of surgeon strain to be as high as 87% and 88% among traditional MIS surgeons and 45% among robotic-assisted MIS surgeons, with 26% reporting persistent strain beyond the robotic console time [5,9,17]. This prolonged strain can impact productivity, with 14% of surgeons limiting the number of surgical cases they do per day, and may impact quality of life, with 29% needing to seek treatment for strain related to MIS [9].

Here we review the 2 major forms of MIS, traditional and robotic-assisted surgery. The unique features of each type of MIS that predispose to surgeon strain are discussed along with the techniques and technologies that have been employed to improve the ergonomics of MIS and reduce surgeon strain.

Traditional MIS

Traditional MIS, developed in the 1980s, involves use of a surgeon-manipulated intracorporeal video camera to view the surgical field. Instruments are placed through fixed ports inserted through the body wall called trocars. Most MIS surgical suites involve 1 or more surgeons standing aside a patient holding the camera and surgical instruments and viewing the surgical field on monitors placed around the patient.

The field and technology have evolved greatly since its inception. However, there are a number of factors that persist in creating ergonomic strain during traditional MIS.

Instruments

MIS instruments are limited by several factors. They must have long, thin shafts that can be placed and removed through fixed trocars. The majority of trocars commonly used are 5 to 10 mm in diameter. Given this fixed point, the instrument motion is inverted in the operative cavity, which requires mental adjustment and scaling. Additionally, the range of motion of MIS instruments is limited to 5 degrees of freedom, which allows for less dexterity than is commonly enjoyed during open surgery through large incisions, which accommodate the surgeons hands and allows for more degrees of freedom and dexterity [18]. These limitations have historically yielded instruments that have not been ergonomically sound. Indeed, MIS instrument are identified as an ergonomic problem by over 80% of minimally invasive surgeons [19].

Additionally, because the surgeon’s hands are often occupied with the camera and an operative tool, suction and electrocautery devices are often activated with foot pedals. Keeping the instrument and camera optimally positioned and relatively stationary while simultaneously pressing a foot pedal can exacerbate poor posture and back strain as the surgeon balances on one foot. Foot pedals placed around the operative table also further limit the space available for proper surgeon positioning [19].

Ergonomic engineering has focused on instrument handles. To accommodate the varied hand sizes of surgeons, many manufacturers have altered handle sizes to allow a more comfortable grip. To address the poor posture induced by foot pedals, many instruments now have trigger-finger or thumb-activated buttons on the handle itself, eliminating the need to reposition to reach a foot pedal. However, many of these designs may not suit surgeons with smaller hands.

Optics

A major limitation of MIS is the restricted visual field. The video monitor is positioned outside the sterile operative field, often requiring the surgeon to look in one direction while operating in another, which places strain in both the axial (rotational) and sagittal (flexion/extension) planes [20]. The surgeon does not have immediate visual access to the entire surgical field and must instead rely on moving the camera, which can force unnatural and uncomfortable postures to position it optimally [19]. Eye strain can also result from continuously viewing the operative monitor throughout surgery. Finally, until recently, optical systems required operating in 2 dimensions, without the depth perception available in traditional open surgery.
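To make the two components of neck strain concrete, the sketch below computes, for a monitor at a given position relative to the surgeon’s eyes, how far the head must rotate away from the operative line and how far the gaze must tilt up or down (flexion/extension). The coordinate convention, function name, and example positions are hypothetical, and the model ignores eye movement and trunk rotation, which also contribute to real viewing posture.

```python
import math


def viewing_angles(forward_m: float, lateral_m: float, vertical_m: float) -> tuple[float, float]:
    """Return (rotation_deg, vertical_gaze_deg) needed to face a monitor.

    Simplified model (an assumption): the surgeon initially faces straight along the
    operative line. Coordinates are measured from the eyes: forward along the
    operative line, lateral to the side (positive = surgeon's left), and vertical
    relative to eye height (negative = below the eyes).
    """
    # Rotation: horizontal angle between the operative line and the monitor.
    rotation = math.degrees(math.atan2(lateral_m, forward_m))
    # Flexion/extension: elevation of the monitor above or below eye level.
    horizontal_distance = math.hypot(forward_m, lateral_m)
    vertical = math.degrees(math.atan2(vertical_m, horizontal_distance))
    return rotation, vertical


# Hypothetical placements: a monitor in line with the operative field and slightly
# below eye level, versus one off to the side and mounted high.
placements = {"in line, low": (1.5, 0.0, -0.2), "to the side, high": (1.0, 1.0, 0.5)}
for label, position in placements.items():
    rotation, vertical = viewing_angles(*position)
    print(f"{label}: rotate {rotation:.0f} deg, tilt {vertical:+.0f} deg")
```

Under these assumptions, a monitor aligned with the operative line needs no head rotation, while an off-axis, high-mounted monitor demands both rotation and neck extension at once.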

To address neck, upper body, and optic strain, operative monitor positioning has received significant emphasis. The original MIS video monitors were small, had poor resolution, and were fixed in position. Over time, monitors have increased in size and resolution, allowing easier viewing and decreased optic strain. In the 1990s, the MIS operative suite concept introduced monitors mounted on swing arms that can easily be moved about the operating room. Subsequently, systems employing multiple monitors placed around the patient at different angles, with independent height and inclination adjustment, allowed comfortable positioning for all members of the surgical team, particularly when 2 or more surgeons operate simultaneously [21]. These monitor systems not only decrease ergonomic strain but have also been shown to improve intraoperative speed and surgical accuracy on standardized tasks [22,23].

The most recent advance in surgical optics has been the introduction of 3-dimensional (3D) imaging systems [21,24,25]. At present, most of these systems are cost-prohibitive and have poorer resolution than traditional 2-dimensional (2D) monitors, which may in fact increase optic strain. The modern high-definition 2D monitor systems in current use have done much to decrease optic strain, and further refinement of 3D technology may reduce this strain even further.

Operative Posture

MIS often requires unnatural postures to manipulate instruments and view the operative monitors. When a non-neutral posture is maintained, muscles must expend more energy to generate the same contractile force, and greater contractile force is needed to stabilize the joints [20]. Holding these static positions for long periods leads to rapid fatigue, muscle pain, cramping, and strain that can persist after the operation is complete [19].

Attention to ideal posture is paramount during MIS. The surgeon should stand upright next to the patient with the head bent slightly forward, ideally shifting the position of the neck periodically throughout the surgery to avoid prolonged static positioning [11]. The arms should rest with the elbows at the sides, bent 90 to 120 degrees to accommodate instrument manipulation. This angle can be tolerated for long periods, unlike positions that require the elbows to be held away from the body [19]. Whenever possible, the forearm should rest in neutral rotation, midway between pronation and supination, with the wrist slightly extended and the fingers slightly bent [26]. This neutral position allows rapid and simple changes in grip.
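The posture recommendations above can be written as a simple self-check. The sketch encodes only the criteria the text states (elbows at the sides, a 90- to 120-degree elbow bend, a neutral forearm, and a slightly extended wrist). The data structure and field names are hypothetical, and measuring the elbow as the included angle between upper arm and forearm is an assumption, since the text does not specify the convention.

```python
from dataclasses import dataclass


@dataclass
class ArmPosture:
    """Observed arm posture (hypothetical fields for illustration)."""
    elbow_angle_deg: float         # assumed: included angle at the elbow, 180 = straight arm
    elbow_at_side: bool            # False if the elbow is held away from the trunk
    forearm_neutral: bool          # midway between pronation and supination
    wrist_slightly_extended: bool


def posture_issues(p: ArmPosture) -> list[str]:
    """List departures from the neutral posture described in the text."""
    issues = []
    # The text recommends a 90- to 120-degree elbow bend.
    if not 90 <= p.elbow_angle_deg <= 120:
        issues.append(f"elbow angle {p.elbow_angle_deg:.0f} deg is outside 90-120 deg")
    if not p.elbow_at_side:
        issues.append("elbow is away from the side of the body")
    if not p.forearm_neutral:
        issues.append("forearm is not in neutral rotation")
    if not p.wrist_slightly_extended:
        issues.append("wrist is not slightly extended")
    return issues


# Hypothetical observation: arm nearly straight with the elbow abducted.
print(posture_issues(ArmPosture(150, elbow_at_side=False,
                                forearm_neutral=True, wrist_slightly_extended=True)))
```

The example flags both the elbow angle and the abducted elbow, the two departures the text singles out as poorly tolerated over long periods.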

Adjusting table height or using operative foot platforms is crucial to keeping the arms in a neutral position. Because the patient is often placed in steep Trendelenburg or reverse Trendelenburg position for MIS, standard operating tables may not be at a height that allows the surgeon to operate in a relaxed, neutral posture [27,28]. In these circumstances, rather than operating with the arms and shoulders elevated, a position that produces rapid upper extremity fatigue, surgeons should raise themselves using an operative platform or step.
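Choosing a platform becomes simple arithmetic once a rule relating instrument handle height to the surgeon’s elbow height is adopted. The sketch below assumes, purely for illustration, that the handles should sit no higher than standing elbow height (the text advises only against operating with the arms and shoulders elevated) and that platforms come in fixed increments. Both the rule and the 10 cm step size are hypothetical parameters that a surgical team would replace with its own.

```python
import math


def platform_height_cm(handle_height_cm: float, elbow_height_cm: float,
                       step_cm: float = 10.0, max_offset_cm: float = 0.0) -> float:
    """Smallest platform height, in whole steps, that brings the elbows up to the handles.

    Assumed rule (hypothetical): handles should sit no more than `max_offset_cm`
    above the surgeon's standing elbow height. Returns 0.0 if no platform is needed.
    """
    if step_cm <= 0:
        raise ValueError("step_cm must be positive")
    excess = handle_height_cm - (elbow_height_cm + max_offset_cm)
    if excess <= 0:
        return 0.0
    # Round up to the next available step so the elbows end at or above handle height.
    return math.ceil(excess / step_cm) * step_cm


# Hypothetical example: handles at 118 cm for a surgeon whose standing elbow
# height is 105 cm, with platforms available in 10 cm steps.
print(platform_height_cm(118.0, 105.0))  # 20.0
```

Rounding up rather than down reflects the text’s emphasis: a slightly high stance is preferable to operating with the arms and shoulders elevated.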

Single Incision Laparoscopic Surgery

Most recently, single-port laparoscopic surgery (SPLS), also called single-incision laparoscopic surgery (SILS), has been introduced. This technique uses a single, slightly larger incision through which one port accommodates several instruments and the operative camera. This approach magnifies several challenges of traditional MIS: maintaining exposure of the operative field, sustaining insufflation pressure in the operative space, avoiding instrument collisions both intra- and extracorporeally, and preventing instruments from interfering with the optics [29].

Several techniques have been employed to minimize these issues. For example, percutaneous sutures may be placed intraoperatively to assist with retraction and improve visualization. The most important technological advances have been coaxial, flexible, and articulating instruments that avoid collisions [29]. Although these technologies involve a learning curve in instrument triangulation, they can be used successfully to improve operative efficiency and ergonomics.
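The loss of triangulation in single-port surgery can be illustrated with basic trigonometry: the angle at which two instruments converge on a target depends on how far apart they enter the body relative to the depth of the target. The sketch below is a simplified planar model (straight, rigid instruments converging symmetrically on one point), and the port separations and working depth in the example are hypothetical values chosen only to contrast a multiport layout with a single-port layout.

```python
import math


def triangulation_angle_deg(port_separation_cm: float, target_depth_cm: float) -> float:
    """Angle between two straight instruments that converge on one target.

    Planar, symmetric model (an assumption): the two entry points lie on a line,
    and the target sits midway between them at the given depth below the body wall.
    """
    if target_depth_cm <= 0:
        raise ValueError("target depth must be positive")
    half_angle = math.atan((port_separation_cm / 2) / target_depth_cm)
    return math.degrees(2 * half_angle)


# Hypothetical comparison at a 15 cm working depth.
for layout, separation_cm in {"multiport": 10.0, "single port": 2.0}.items():
    print(f"{layout}: {triangulation_angle_deg(separation_cm, 15.0):.0f} deg")
```

In this model, instruments entering through a single port converge at a much shallower angle and run nearly parallel, which is the crowding that articulating and flexible instruments are intended to mitigate.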

Robot-Assisted MIS

Robot-assisted MIS uses intracorporeal instruments attached to robotic arms that are docked to trocars. The surgeon controls these arms from a computer console, and a video monitor is available for the surgical assistants. Many robot-assisted procedures involve an assistant who uses traditional MIS instruments and trocars; the ergonomic issues discussed above apply to this assistant surgeon. Here, we focus on the ergonomic challenges unique to the operating surgeon at the robotic console.

Several robotic systems have been developed for use in surgery. At present, only the da Vinci surgical system (Intuitive Surgical, Sunnyvale, CA) is in use in the United States. Several features of robotic systems offer potential ergonomic advantages over traditional MIS. First, the primary surgeon is seated at a console rather than standing next to the patient. Second, the camera and instruments are held intracorporeally by the robotic system and controlled by the surgeon at the console. Third, the mechanical design of the robotic instruments allows more degrees of freedom in range of motion, permitting techniques that more closely mimic open surgery.

Robotic surgery has seen rapid acceptance and growth over the past decade [30–33]. One small case comparison study and 1 large prospective analysis of over 200 procedures suggested that robotic surgery is more ergonomically favorable and potentially less mentally stressful than conventional minimally invasive surgery [34,35]. However, although robotic surgery is often regarded as a way to alleviate MIS-related strain [36,37], it is still associated with high levels of strain, with some survey data indicating rates as high as 45% [8,9]. As with traditional MIS, effective interventions are needed to prevent and reduce strain, and thereby prevent work-related injury, in robot-assisted MIS.

A primary cause of ergonomic strain during robot-assisted MIS is surgeons’ lack of knowledge and training regarding proper ergonomic technique at seated console workstations; as few as 16% of surgeons report any formal training [5,9,17,38]. Furthermore, compared with traditional MIS, there is little literature specific to robotic surgery ergonomics. Much of the available guidance has been extrapolated from the U.S. Department of Labor’s Occupational Safety and Health Administration (OSHA) guidelines for workstation working positions and from the literature on the ergonomics of microscopy, which, because of somewhat similar positioning, has been adapted for robot-assisted MIS [39].

The Robotic Console

When applying ergonomics to robot-assisted MIS, the primary focus is on console set-up and surgeon positioning: ensuring a comfortable headrest and adjustable ocular height, which relieves neck, shoulder, and upper back strain, and properly adjusting the armrests and finger controls to minimize arm and upper back strain due to static load [40].

The most common pitfall during robot-assisted MIS occurs when the arms leave the armrest and the elbows flare out from the sides. This departure from neutral position, common when reaching for structures at the edge of the surgical field, causes significant tension and strain and often goes uncorrected for long periods. Frequent use of the robotic clutch, which freezes the intracorporeal arms while allowing the console arms to move freely, is therefore of paramount importance for bringing the body back to a neutral position [17].
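The clutch behavior described above can be modeled as a small state machine: while the clutch is engaged, hand-control movements are not transmitted to the instruments, so the surgeon can bring the hands and elbows back to neutral without moving anything inside the patient. The class below is a conceptual, single-axis sketch, not the control software of any commercial system; the class and method names are hypothetical, and the motion-scaling factor is an assumed parameter.

```python
from dataclasses import dataclass


@dataclass
class ClutchedTeleoperation:
    """Conceptual single-axis model of a console clutch (hypothetical)."""
    motion_scale: float = 1.0      # assumed ratio of instrument motion to hand motion
    clutch_engaged: bool = False
    instrument_pos_mm: float = 0.0

    def press_clutch(self) -> None:
        # Instruments are frozen in place; hand controls move freely.
        self.clutch_engaged = True

    def release_clutch(self) -> None:
        # Hand motion is once again transmitted to the instruments.
        self.clutch_engaged = False

    def move_hands(self, delta_mm: float) -> None:
        # Transmit (scaled) hand motion only while the clutch is released.
        if not self.clutch_engaged:
            self.instrument_pos_mm += self.motion_scale * delta_mm


# Hypothetical sequence: reach 60 mm toward the edge of the field, clutch, return
# the hands to neutral, release, and continue working from the new position.
arm = ClutchedTeleoperation(motion_scale=0.5)
arm.move_hands(60.0)    # instrument moves 30 mm
arm.press_clutch()
arm.move_hands(-60.0)   # hands return to neutral; instrument stays put
arm.release_clutch()
arm.move_hands(30.0)    # instrument moves a further 15 mm
print(f"instrument position: {arm.instrument_pos_mm:.1f} mm")  # 45.0 mm
```

The sequence shows why clutching costs no ground: the instrument keeps its reach while the surgeon’s arms return to the armrest.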

Conclusion

The field of applied ergonomics in surgery has never been more important. Both traditional and robot-assisted MIS are growing rapidly, and demand for these technologies will only increase as outcomes continue to improve. Because the pool of surgeons is not growing fast enough to meet this demand, each surgeon will handle a greater volume of cases.

With ergonomic strain now reported by up to nearly 90% of surgeons in some surveys, much of it persisting beyond operative time, surgeons may compensate by decreasing their operative volume or by retiring earlier than they otherwise would have. This could create a health care supply problem as demand for MIS increases while the pool of surgeons available to perform it stays constant or shrinks.

To date, there has been relatively little research into this issue. Epidemiologic data on surgeon strain come primarily from survey research within various MIS subspecialties. Some data are based on objective measures of strain using validated strain indicators, but more work in this area is needed. Standardized methods of reporting strain would help clarify its epidemiology and standardize the assessment of responses to interventions. Studies of interventions aimed at reducing the reported rates of strain are also needed. Much of the early work focused on operating room set-up and resulted in great improvements; more work is needed to assess optimal ergonomic positioning and formal surgeon training.

The solution will involve a combination of engineering advances in operating room set-up and equipment design and a renewed focus on teaching ergonomic techniques and principles to MIS surgeons. Early data are promising, showing that ergonomics training sessions are easy to implement, acceptable to surgeons, and effective, but more data are needed to develop optimal training sessions and modules for traditional and robot-assisted MIS ergonomics [17]. As ergonomic studies designed specifically for this population accrue, more data-driven guidelines can be developed and implemented.

Corresponding author: Jason M. Franasiak, MD, 140 Allen Rd., Basking Ridge, NJ 07920.

Financial disclosures: None

The initial large survey studies characterizing surgeon strain during MIS revealed rates of strain and discomfort in the 12% to 18% range, with many reporting that strain was persistent and not simply limited to operative time [11,14]. In part, these early estimates focused on very experienced surgeons and thus may have underreported the rates of strain. Subsequently, other studies have quoted rates of strain in the 40% to 60% range [15,16]. These studies focused on a larger and more hetero-geneous group of MIS surgeons, which may explain the higher rates of strain. Most recently, in the setting of an ever growing demand for MIS, large survey studies have revealed rates of surgeon strain to be as high as 87% and 88% among traditional MIS surgeons and 45% among robotic-assisted MIS surgeons, with 26% reporting persistent strain beyond the robotic console time [5,9,17]. This prolonged strain can impact productivity, with 14% of surgeons limiting the number of surgical cases they do per day, and may impact quality of life, with 29% needing to seek treatment for strain related to MIS [9].

Here we review the 2 major forms of MIS, traditional and robotic-assisted surgery. The unique features of each type of MIS that predispose to surgeon strain are discussed along with the techniques and technologies that have been employed to improve the ergonomics of MIS and reduce surgeon strain.

Traditional MIS

Traditional MIS, developed in the 1980s, involves use of a surgeon-manipulated intracorporeal video camera to view the surgical field. Instruments are placed through fixed ports inserted through the body wall called trocars. Most MIS surgical suites involve 1 or more surgeons standing aside a patient holding the camera and surgical instruments and viewing the surgical field on monitors placed around the patient.

The field and technology have evolved greatly since its inception. However, there are a number of factors that persist in creating ergonomic strain during traditional MIS.

Instruments

MIS instruments are limited by several factors. They must have long, thin shafts that can be placed and removed through fixed trocars. The majority of trocars commonly used are 5 to 10 mm in diameter. Given this fixed point, the instrument motion is inverted in the operative cavity, which requires mental adjustment and scaling. Additionally, the range of motion of MIS instruments is limited to 5 degrees of freedom, which allows for less dexterity than is commonly enjoyed during open surgery through large incisions, which accommodate the surgeons hands and allows for more degrees of freedom and dexterity [18]. These limitations have historically yielded instruments that have not been ergonomically sound. Indeed, MIS instrument are identified as an ergonomic problem by over 80% of minimally invasive surgeons [19].

Additionally, given that the surgeon’s hands are often occupied with the camera and an operative tool, the activation of suction devices and electrocautery devices often requires use of instrument foot pedals. Requiring that the instrument and camera be optimally positioned and relatively stationary and the foot pedal be activated simultaneously can exacerbate poor posture and back strain as the surgeon balances on one foot. The addition of foot pedals around the operative table also further limits space for proper surgeon

positioning [19].

Ergonomic engineering has focused on instrument handles. To accommodate the varied sizes of surgeon hands, many companies have altered the size of the device handles allowing for a more comfortable grip. To address the issue of poor posture induced by the use of foot pedals, many instruments now have trigger finger or thumb-activated buttons on the handle of the device itself, which alleviates the need for positioning to activate a foot pedal. However, many of these may not be suitable to accommodate smaller hands.

Optics

A major limitation of MIS is the limited visual field. The video monitor is positioned outside of the sterile operative field, often requiring that the surgeon looks in one direction and operates in another direction, placing strain in both the axial or rotational and frontal or flexion/extension planes [20]. The surgeon does not have immediate visual access to the entire surgical field but rather must rely on movement of the camera, which can at times result in unnatural and uncomfortable positions in order to position the camera optimally [19]. Additionally, eye strain can result from constant visualization of the operative monitor throughout the surgery. Finally, until only recently, optic systems required operating in 2 dimensions, without the depth perception enjoyed during traditional open surgery.

To address neck and upper body strain as well as optic strain, operative monitor positioning has received significant emphasis. The original MIS video monitors were small, had poor resolution, and were fixed in their position. Over time, monitors have increased in size and resolution, allowing for easier viewing and decreased optic strain. Additionally, in the 1990s the MIS operative suite concept allowed for placement of monitors on swing arms, which allow for movement about the operating room with ease. Subsequently, use of systems that employed multiple monitors placed around the patient at different angles with independent height and inclination adjustment allowed for comfortable positioning for all members of the surgical team, particularly in cases where 2 or more surgeons are operating simultaneously [21]. The implementation of these monitor systems not only decrease ergonomic strain but have also been shown to improve intraoperative speed and surgical accuracy when performing standardized tasks [22,23].

The most recent advance in surgical optics has been the introduction of 3-dimensional (3D) imaging systems [21,24,25]. At present, most of these systems are cost prohibitive and have poorer resolution than the traditional 2-dimensional monitors which may in fact increase optic strain. The modern high-definition 2D monitor systems in current use have done much to decrease optic strain and further refinement of 3D technology may prove to mitigate this strain even further.

Operative Posture

MIS often involves assuming unnatural postures to manipulate instruments and visualize the operative monitors. When non-neutral posture is maintained, muscles require an increase in energy production in order to maintain the same contractile forces and the contractile forces required to stabilize joints is increased [20]. Maintaining these static positions for long periods of time results in rapid fatigue, muscle pain, and cramping, and strain that can persist after the operation is complete [19].

Attention to ideal posture is paramount during MIS. The surgeon should be upright next to the patient with the head slightly bent forward, ideally employing a shift in position of the neck from time to time throughout the surgery to avoid prolonged static positioning [11]. The arms should rest so that the elbow is at the side with a 90- to 120-degree bend to accommodate instrument manipulation. This angle can be tolerated for long period of time as opposed to angles that require the elbow be taken away from the side of the body [19]. The forearm should rest in the neutral rotating position between pronated and supinated whenever possible with the wrist slightly extended and the fingers slightly bent [26]. This neutral position allows for rapid and simple changes in grip.

Adjustment of table height or use of operative foot platforms is crucial to ensuring the arms remain in neutral position. Given that the patient is often positioned in steep Trendelenburg or reverse Trendelenburg for MIS, the standard operating beds may not be at a height that allows the surgeon to operate in a relaxed, neutral posture [27,28]. In these circumstances, rather than operating with arms and shoulders in an elevated position, a position that produces rapid upper extremity fatigue, surgeons should elevate themselves with the assistance of an operative platform or step.

Single Incision Laparoscopic Surgery

Most recently, single port laparoscopic surgery (SPLS), also called single incision laparoscopic surgery (SILS) has been introduced. This technique involves use of a slightly larger, single incision that allows for a single port, which accommodates several instruments and the operative camera. This enhances some of the challenges posed with traditional MIS, namely maintaining exposure of the operative field, sustaining pneumatic pressure in the operative space, avoiding instrument collision both intra- and extracorporeally, and avoiding instrument interference with optics [29].

A number of techniques have been employed to minimize these issues. For example, percutaneous sutures may be placed intraoperatively in order to assist with retraction and improve visualization. The most important technological advances have come in the form of coaxial, flexible, and articulating instruments to avoid collisions [29]. While there is a learning curve with these technologies in terms of instrument triangulation, they can be successfully employed to improve operative efficiency and ergonomics.

Robot-Assisted MIS

Robot-assisted MIS involves the use of intracorporeal instruments attached to robotic arms that have been docked to trocars. The surgeon controls these robotic arms with a computer console and a video monitor is available for the surgical assistants. Many robot-assisted surgeries involve the use of an assistant, who utilizes traditional MIS instruments and trocars. The same issues in ergonomics discussed above apply to the assistant surgeon. Here, we will focus on the ergonomic challenges unique to operating surgeon at a robotic console.

There have been several robotic systems developed for use during surgery. At present, only the da Vinci surgical system (Intuitive Surgical, Sunnyvale, CA) is in use in the United States. Several components of the robotic systems allow for potential advantages over traditional MIS in terms of ergonomics. First, as discussed, the primary surgeon is seated at a robotic console rather than standing next to the patient. The camera and instruments are held intracorporeally by the robotic system and controlled by the surgeon at the console. The mechanical engineering, which is associated with the instruments of the robotic system, allows for many for degrees of freedom in range of motion, which permits the surgeon to employ techniques which more closely mimic open surgical techniques.

The robotic surgical system has experienced rapid acceptance and growth over the past decade [30–33]. One small case comparison study and 1 large prospective analysis of over 200 procedures have suggested that robotic surgery is more ergonomically favorable and potentially less mentally stressful than conventional minimally invasive surgery [34,35]. However, although robotic surgery is often thought of as a tool to alleviate strain related to MIS [36,37], there are still high levels of strain, with some survey data indicating strain rates as high as 45%, involved with robotic surgery [8,9]. It is clear that, as with traditional MIS, effective interventions are needed to prevent and reduce strain to prevent work-related injury in robot-assisted MIS.

A primary cause of ergonomic strain during robot-assisted MIS is the lack of knowledge and training regard-ing proper ergonomic techniques at seated console work stations amongst surgeons, with as few as 16% of surgeons reporting any formal training [5,9,17,38]. Furthermore, when compared with traditional MIS, there is little available literature specific to robotic surgery ergonomics. Much of the data available has been extrapolated from recommendations from the U.S. Department of Labor’s Occupational Safety and Health Administration’s (OSHA’s) guidelines for working positions at workstations and on the available body of literature on the ergonomics of microscopy, which, due to somewhat similar positioning, have been adapted for robotic-assisted MIS [39].

The Robotic Console

When addressing applied ergonomics in robot-assisted MIS, the primary focuses in on the robotic console set-up and the surgeon positioning. The primary focus is on ensuring a comfortable headrest and adjustable ocular height, which relieves neck, shoulder, and upper back strain, and proper adjustment of armrests and finger controls aimed at minimizing arm and upper back strain due to static load forces [40].

The most common pitfalls during robotic-assisted MIS are when the arms are moved from the arm rest and the elbows flare out from the side of the operator. This departure from neutral position, common when attempting to reach for structures that are on the edge of the surgical field, causes significant tension and strain and is often not corrected for long period of time. Frequent use of the robotic clutch, which freezes the intracorporeal arms and allows for movement of the console arms freely, to bring the body back to neutral position is of paramount importance [17].

Conclusion

The field of applied ergonomics in surgery has never been more important. The fields of both traditional and robot-assisted MIS are growing rapidly and demand for these technologies will only increase as outcomes continue to improve. As the increasing workload of MIS is handled by a pool of surgeons that is not increasing rapidly enough to meet the demand, more volume will be handled by each surgeon.

With ergonomic strain now reported by nearly 90% of surgeons, much of it persistent strain beyond operative time, we will run into situations where surgeons may compensate for this persistent strain by decreasing operative volume and may decide to retire earlier than they otherwise might have. This could result in a health care supply problem as demand for MIS increases and the surgeon pool available to perform it stays constant or decreases.

To date, there has been relatively little research into this issue. The epidemiologic data on surgeon strain comes primarily from survey research done within various MIS subspecialties. There has been some data based on objective measures of strain utilizing validated strain indicators, but more work in this area is needed. Standardized methods of reporting strain will assist in clarifying both the epidemiology and standardize the response to interventions. Studies that aim to address the reported rates of strain are also needed. Much of the early work focused on operating room set-up and resulted in great improvements. More work is needed to assess optimal ergonomic positioning and formal surgeon training.

The solution will involve a combination of engineering advances in operating room set-up and equipment design along with a renewed focus on teaching ergonomic techniques and principles to MIS surgeons. While early data is promising and shows that training sessions in ergonomics are easy, acceptable by surgeons, and effective, more data is needed to develop optimal training session and modules when it comes to traditional and robot-assisted MIS ergonomics [17]. As ergonomic studies specifically designed to address this population accrue, more data driven guidelines can be developed and implemented.

Corresponding author: Jason M. Franasiak, MD, 140 Allen Rd., Basking Ridge, NJ 07920.

Financial disclosures: None

1. Cuschieri A. Laparoscopic surgery: current status, issues and future developments. Surg J R Coll Surg Edinb Irel 2005;3:125–30, 132–3, 135–8.

2. Gehrig PA, Cantrell LA, Shafer A, et al. What is the optimal minimally invasive surgical procedure for endometrial cancer staging in the obese and morbidly obese woman? Gynecol Oncol 2008;111:41–5.

3. Walker JL, Piedmonte MR, Spirtos NM, et al. Laparoscopy compared with laparotomy for comprehensive surgical staging of uterine cancer: Gynecologic Oncology Group Study LAP2. J Clin Oncol 2009;27:5331–6.

4. Bell MC, Torgerson J, Seshadri-Kreaden U, et al. Comparison of outcomes and cost for endometrial cancer staging via traditional laparotomy, standard laparoscopy and robotic techniques. Gynecol Oncol 2008;111:407–11.

5. Park A, Lee G, Seagull FJ, et al. Patients benefit while surgeons suffer: an impending epidemic. J Am Coll Surg 2010;210:306–13.

6. Kant IJ, de Jong LC, van Rijssen-Moll M, Borm PJ. A survey of static and dynamic work postures of operating room staff. Int Arch Occup Environ Health 1992;63:423–8.

7. Patkin M, Isabel L. Ergonomics, engineering and surgery of endosurgical dissection. J R Coll Surg Edinb 1995;40:120–32.

8. Craven R, Franasiak J, Mosaly P, Gehrig PA. Ergonomic deficits in robotic gynecologic oncology surgery: a need for intervention. J Minim Invasive Gynecol 2013;20:648–55.

9. Franasiak J, Ko EM, Kidd J, et al. Physical strain and urgent need for ergonomic training among gynecologic oncologists who perform minimally invasive surgery. Gynecol Oncol 2012;126:437–42.

10. Berguer R. Surgical technology and the ergonomics of laparoscopic instruments. Surg Endosc 1998;12:458–62.

11. Berguer R. Surgery and ergonomics. Arch Surg 1999;134:1011–6.

12. Berguer R, Forkey DL, Smith WD. The effect of laparoscopic instrument working angle on surgeons’ upper extremity workload. Surg Endosc 2001;15:1027–9.

13. Lawson EH, Curet MJ, Sanchez BR, et al. Postural ergonomics during robotic and laparoscopic gastric bypass surgery: a pilot project. J Robot Surg 2007;1:61–7.

14. Van Veelen MA, Meijer DW. Ergonomics and design of laparoscopic instruments: results of a survey among laparoscopic surgeons. J Laparoendosc Adv Surg Tech A 1999;9:481–9.

15. Van Veelen MA, Nederlof EA, Goossens RHM, et al. Ergonomic problems encountered by the medical team related to products used for minimally invasive surgery. Surg Endosc 2003;17:1077–81.

16. Lawther RE, Kirk GR, Regan MC. Laparoscopic procedures are associated with a significant risk of digital nerve injury for general surgeons. Ann R Coll Surg Engl 2002;84:443.

17. Franasiak J, Craven R, Mosaly P, Gehrig PA. Feasibility and acceptance of a robotic surgery ergonomic training program. JSLS 2014;18(4).

18. Ballantyne GH. The pitfalls of laparoscopic surgery: challenges for robotics and telerobotic surgery. Surg Laparosc Endosc Percutan Tech 2002;12:1–5.

19. Matern U. Ergonomic deficiencies in the operating room: examples from minimally invasive surgery. Work 2009;33:165–8.

20. Van Det MJ, Meijerink WJHJ, Hoff C, et al. Optimal ergonomics for laparoscopic surgery in minimally invasive surgery suites: a review and guidelines. Surg Endosc 2009;23:1279–85.

21. Van Veelen MA, Jakimowicz JJ, Goossens RHM, et al. Evaluation of the usability of two types of image display systems, during laparoscopy. Surg Endosc 2002;16:674–8.

22. Haveran LA, Novitsky YW, Czerniach DR, et al. Optimizing laparoscopic task efficiency: the role of camera and monitor positions. Surg Endosc 2007;21:980–4.

23. Hanna GB, Shimi SM, Cuschieri A. Task performance in endoscopic surgery is influenced by location of the image display. Ann Surg 1998;227:481–4.

24. Ballantyne GH. Robotic surgery, telerobotic surgery, telepresence, and telementoring. Review of early clinical results. Surg Endosc 2002;16:1389–402.

25. Boppart SA, Deutsch TF, Rattner DW. Optical imaging technology in minimally invasive surgery. Current status and future directions. Surg Endosc 1999;13:718–22.

26. Matern U, Waller P. Instruments for minimally invasive surgery: principles of ergonomic handles. Surg Endosc 1999;13:174–82.

27. Matern U, Waller P, Giebmeyer C, et al. Ergonomics: requirements for adjusting the height of laparoscopic operating tables. JSLS 2001;5:7–12.

28. Van Veelen MA, Kazemier G, Koopman J, et al. Assessment of the ergonomically optimal operating surface height for laparoscopic surgery. J Laparoendosc Adv Surg Tech A 2002;12:47–52.

29. Tang B, Hou S, Cuschieri SA. Ergonomics of and technologies for single-port laparoscopic surgery. Minim Invasive Ther Allied Technol 2012;21:46–54.

30. Wexner SD, Bergamaschi R, Lacy A, et al. The current status of robotic pelvic surgery: results of a multinational interdisciplinary consensus conference. Surg Endosc 2009;23:438–43.

31. Challacombe BJ, Khan MS, Murphy D, Dasgupta P. The history of robotics in urology. World J Urol 2006;24:120–7.

32. Ballantyne GH, Moll F. The da Vinci telerobotic surgical system: the virtual operative field and telepresence surgery. Surg Clin North Am 2003;83:1293–304, vii.

33. Ruurda JP, van Vroonhoven TJ, Broeders IA. Robot-assisted surgical systems: a new era in laparoscopic surgery. Ann R Coll Surg Engl 2002;84:223–6.

34. Mohr CJ, Nadzam GS, Curet MJ. Totally robotic Roux-en-Y gastric bypass. Arch Surg 2005;140:779–86.

35. Talamini MA, Chapman S, Horgan S, Melvin WS; Academic Robotics Group. A prospective analysis of 211 robotic-assisted surgical procedures. Surg Endosc 2003;17:1521–4.

36. Mucksavage P, Kerbl DC, Lee JY. The da Vinci Surgical System overcomes innate hand dominance. J Endourol 2011;25:1385–8.

37. Schreuder HW, Verheijen RH. Robotic surgery. BJOG 2009;116:198–213.

38. Stone R, McCloy R. Ergonomics in medicine and surgery. BMJ 2004;328:1115–8.

39. Lux MM, Marshall M, Erturk E, Joseph JV. Ergonomic evaluation and guidelines for use of the daVinci Robot system. J Endourol 2010;24:371–5.

40. Sillanpaa J, Nyberg M, Laippala P. A new table for work with a microscope, a solution to ergonomic problems. Appl Ergon 2003;34:621–8.

1. Cuschieri A. Laparoscopic surgery: current status, issues and future developments. Surg J R Coll Surg Edinb Irel 2005;3:125–30, 132–3, 135–8.

2. Gehrig PA, Cantrell LA, Shafer A, et al. What is the optimal minimally invasive surgical procedure for endometrial cancer staging in the obese and morbidly obese woman? Gynecol Oncol 2008;111:41–5.

3. Walker JL, Piedmonte MR, Spirtos NM, et al. Laparoscopy compared with laparotomy for comprehensive surgical staging of uterine cancer: Gynecologic Oncology Group Study LAP2. J Clin Oncol 2009;27:5331–6.

4. Bell MC, Torgerson J, Seshadri-Kreaden U, et al. Comparison of outcomes and cost for endometrial cancer staging via traditional laparotomy, standard laparoscopy and robotic techniques. Gynecol Oncol 2008;111:407–11.

5. Park A, Lee G, Seagull FJ, et al. Patients benefit while surgeons suffer: an impending epidemic. J Am Coll Surg 2010;210:306–13.

6. Kant IJ, de Jong LC, van Rijssen-Moll M, Borm PJ. A survey of static and dynamic work postures of operating room staff. Int Arch Occup Environ Health 1992;63:423–8.

7. Patkin M, Isabel L. Ergonomics, engineering and surgery of endosurgical dissection. J R Coll Surg Edinb 1995;40:120–32.

8. Craven R, Franasiak J, Mosaly P, Gehrig PA. Ergonomic deficits in robotic gynecologic oncology surgery: a need for intervention. J Minim Invasive Gynecol 2013;20:648–55.

9. Franasiak J, Ko EM, Kidd J, et al. Physical strain and urgent need for ergonomic training among gynecologic oncologists who perform minimally invasive surgery. Gynecol Oncol 2012;126:437–42.

10. Berguer R. Surgical technology and the ergonomics of laparoscopic instruments. Surg Endosc 1998;12:458–62.

11. Berguer R. Surgery and ergonomics. Arch Surg 1999;134:1011–6.

12. Berguer R, Forkey DL, Smith WD. The effect of laparoscopic instrument working angle on surgeons’ upper extremity workload. Surg Endosc 2001;15:1027–9.

13. Lawson EH, Curet MJ, Sanchez BR, et al. Postural ergonomics during robotic and laparoscopic gastric bypass surgery: a pilot project. J Robot Surg 2007;1:61–7.

14. Van Veelen MA, Meijer DW. Ergonomics and design of laparoscopic instruments: results of a survey among laparoscopic surgeons. J Laparoendosc Adv Surg Tech A 1999;9:481–9.

15. Van Veelen MA, Nederlof EA, Goossens RHM, et al. Ergonomic problems encountered by the medical team related to products used for minimally invasive surgery. Surg Endosc 2003;17:1077–81.

16. Lawther RE, Kirk GR, Regan MC. Laparoscopic procedures are associated with a significant risk of digital nerve injury for general surgeons. Ann R Coll Surg Engl 2002;84:443.

17. Franasiak J, Craven R, Mosaly P, Gehrig PA. Feasibility and acceptance of a robotic surgery ergonomic training program. JSLS 2014;18(4).

18. Ballantyne GH. The pitfalls of laparoscopic surgery: challenges for robotics and telerobotic surgery. Surg Laparosc Endosc Percutan Tech 2002;12:1–5.

19. Matern U. Ergonomic deficiencies in the operating room: examples from minimally invasive surgery. Work 2009;33:165–8.

20. Van Det MJ, Meijerink WJHJ, Hoff C, et al. Optimal ergonomics for laparoscopic surgery in minimally invasive surgery suites: a review and guidelines. Surg Endosc 2009;23:1279–85.

21. Veelen MA, Jakimowicz JJ, Goossens RHM, et al. Evaluation of the usability of two types of image display systems, during laparoscopy. Surg Endosc 2002;16:674–8.

22. Haveran LA, Novitsky YW, Czerniach DR, et al. Optimizing laparoscopic task efficiency: the role of camera and monitor positions. Surg Endosc 2007;21:980–4.

23. Hanna GB, Shimi SM, Cuschieri A. Task performance in endoscopic surgery is influenced by location of the image display. Ann Surg 1998;227:481–4.

24. Ballantyne GH. Robotic surgery, telerobotic surgery, telepresence, and telementoring. Review of early clinical results. Surg Endosc 2002;16:1389–402.

25. Boppart SA, Deutsch TF, Rattner DW. Optical imaging technology in minimally invasive surgery. Current status and future directions. Surg Endosc 1999;13:718–22.

26. Matern U, Waller P. Instruments for minimally invasive surgery: principles of ergonomic handles. Surg Endosc 1999;13:174–82.

27. Matern U, Waller P, Giebmeyer C, et al. Ergonomics: requirements for adjusting the height of laparoscopic operating tables. JSLS 2001;5:7–12.

28. Van Veelen MA, Kazemier G, Koopman J, et al. Assessment of the ergonomically optimal operating surface height for laparoscopic surgery. J Laparoendosc Adv Surg Tech A 2002;12:47–52.

29. Tang B, Hou S, Cuschieri SA. Ergonomics of and technologies for single-port lapaxroscopic surgery. Minim Invasive Ther Allied Technol 2012;21:46–54.

30. Wexner SD, Bergamaschi R, Lacy A, et al. The current status of robotic pelvic surgery: results of a multinational interdisciplinary consensus conference. Surg Endosc 2009;23:438–43.

31. Challacombe BJ, Khan MS, Murphy D, Dasgupta P. The history of robotics in urology. World J Urol 2006;24:120–7.

32. Ballantyne GH, Moll F. The da Vinci telerobotic surgical system: the virtual operative field and telepresence surgery. Surg Clin North Am 2003;83:1293–304, vii.

33. Ruurda JP, van Vroonhoven TJ, Broeders IA. Robot-assisted surgical systems: a new era in laparoscopic surgery. Ann R Coll Surg Engl 2002;84:223–6.

34. Mohr CJ, Nadzam GS, Curet MJ. Totally robotic Roux-en-Y gastric bypass. Arch Surg 2005;140:779–86.

35. Talamini MA, Chapman S, Horgan S, Melvin WS; Academic Robotics Group. A prospective analysis of 211 robotic-assisted surgical procedures. Surg Endosc 2003;17:1521–4.

36. Mucksavage P, Kerbl DC, Lee JY. The da Vinci Surgical System overcomes innate hand dominance. J Endourol 2011;25:1385–8.

37. Schreuder HW, Verheijen RH. Robotic surgery. BJOG 2009;116:198–213.

38. Stone R, McCloy R. Ergonomics in medicine and surgery. BMJ 2004;328:1115–8.

39. Lux MM, Marshall M, Erturk E, Joseph JV. Ergonomic evaluation and guidelines for use of the daVinci Robot system. J Endourol 2010;24:371–5.

40. Sillanpaa J, Nyberg M, Laippala P. A new table for work with a microscope, a solution to ergonomic problems. Appl Ergon 2003;34:621–8.

Early Parkinsonism: Distinguishing Idiopathic Parkinson’s Disease from Other Syndromes

From the VA Medical Center (Dr. Lehosit) and the Parkinson’s and Movement Disorders Center, Virginia Commonwealth University (Dr. Cloud), Richmond, VA.

Abstract

- Objective: To provide an overview of the importance and challenges of accurate diagnosis of early idiopathic Parkinson’s disease and practical guidelines for clinicians.

- Methods: Review of the relevant literature.