Collaborative care aids seniors’ mild depression

A collaborative care model significantly mitigated mild depression in adults aged 65 and older, compared with usual care in the short term, based on data from 705 patients. The findings were published online Feb. 21.

“There is limited research about older people with mild depressive disorders who have insufficient levels of depressive symptoms to meet diagnostic criteria (called subclinical, subthreshold, or subsyndromal depression) but also reduced quality of life and function,” wrote Simon Gilbody, PhD, of the University of York (England) and colleagues. However, subthreshold depression increases the risk of a severe depressive illness, the researchers added.

Overall, patients in the collaborative care group improved from an average score of 7.8 at baseline to 5.4 after 4 months; the usual care group improved from an average of 7.8 at baseline to 6.7 at 4 months. The difference in scores persisted at 12 months in the secondary analysis (JAMA. 2017;317:728-37. doi: 10.1001/jama.2017.0130). “For populations with case-level depression, a successful treatment outcome has been defined as 5 points on the PHQ-9,” the researchers noted. “This magnitude of benefit was not observed in either group of the trial when comparing scores before and after treatment, although this result would be anticipated given the lower baseline PHQ-9 scores in populations with subthreshold depression.”

The study participants came from 32 primary care practices in northern England; the average age was 77 years, and 58% were women.

The results were limited by several factors, including the absence of a standardized interview to diagnose depression, differences in retention and attrition between groups, and the absence of long-term follow-up, “and further research is needed to assess longer-term efficacy,” the researchers said.

Neither Dr. Gilbody nor his colleagues had financial conflicts to disclose.

The CASPER trial “provides the first evidence that collaborative care may benefit patients with subthreshold depression,” Kurt Kroenke, MD, wrote in an accompanying editorial. In addition to the improvements on the Patient Health Questionnaire and the reduction in risk of progression to threshold level depression, the findings further support the use of behavioral activation, which was the core treatment in the study, he said. “Strong evidence for the effectiveness of behavioral activation was provided by the recent COBRA trial. … and behavioral activation was found to be noninferior to cognitive-behavioral therapy for the outcome of depression,” he wrote. However, more research is needed before clinicians routinely expand treatment beyond major depression to include subthreshold depression, Dr. Kroenke noted. Key factors include the variable rate of progression from subthreshold depression to major depression, the duration and context of subthreshold depression, patient preferences, and the possible role of antidepressants, he noted. However, the CASPER findings show “new evidence that collaborative care improves outcomes for at least some patients with subthreshold depression,” Dr. Kroenke said. “Patients with persistent symptoms, functional impairment, and a desire for treatment may particularly benefit,” he added (JAMA. 2017;317:702-4).

Kurt Kroenke, MD, is affiliated with the VA Health Services Research and Development Service Center for Health Communication and Information, Regenstrief Institute and Indiana University, both in Indianapolis. He had no financial conflicts to disclose.

FROM JAMA

Key clinical point: Collaborative care reduced subthreshold depression in older adults, compared with usual care after 4 months.

Major finding: Older adults with subthreshold depression who received collaborative care had lower depression scores on the Patient Health Questionnaire than those who received usual care after 4 months (average scores 5.4 and 6.7, respectively).

Data source: A randomized trial of 705 adults aged 65 years and older with subthreshold depression.

Disclosures: The researchers had no financial conflicts to disclose.

Hope on the horizon for novel antidepressants

LAS VEGAS – There remains a great unmet need for more effective and rapidly acting treatments for major depressive disorder, and research is revealing that both new and existing drugs may help, according to one expert.

One argument for additional treatment options is that suicide ranks as the 10th leading cause of death in the United States among persons aged 10 years and older, Gerard Sanacora, MD, PhD, said at an annual psychopharmacology update held by the Nevada Psychiatric Association. Another argument for new antidepressants stems from the results of the STAR*D trial, which found that only 37% of patients with major depressive disorder who were treated with citalopram monotherapy had remission with the first treatment.

Dr. Sanacora, who is also director of the Yale Depression Research Program, said that there is a reconceptualization of how clinicians think about the pathophysiology of depression and the path to novel treatment development. A variety of novel pharmacologic and somatic treatments, with new mechanisms of action, are currently undergoing validation for treatment-resistant depression. These include glutamatergic, GABAergic, opioid, and anti‐inflammatory drugs.

Drugs that modulate GABAergic and glutamatergic neurotransmission have anxiolytic and antidepressant activities in rodent models of depression. In addition, the robust, rapid, and relatively sustained antidepressant effects of low-dose ketamine have been observed in double-blind placebo crossover trials in patients with treatment-resistant major depression (Biol Psych. 2000 Feb 15;47[4]:351-4 and Arch Gen Psych. 2006 Aug;63[8]:856-64). Currently, Dr. Sanacora said, more than 80 clinics in the United States provide ketamine therapy, yet clinicians face balancing the potential benefits of the drug with inherent limitations of the ketamine studies to date. These include the fact that the study drug blinding is ineffective; the optimal dose, route, or frequency has not been determined; the duration of effect is unknown; the long-term effectiveness and safety are unclear; and the moderators and mediators of response are unknown.

Results from a National Institute of Mental Health–funded double-blind, placebo-controlled study examining various doses of ketamine in treatment-resistant depression are anticipated sometime this year. In 2013, a trial sponsored by Janssen Research & Development titled a Study to Evaluate the Safety and Efficacy of Intranasal Esketamine in Treatment-Resistant Depression (SYNAPSE) set out to assess the efficacy and dose response of intranasal esketamine (panel A: 28 mg, 56 mg, and 84 mg; panel B: 14 mg and 56 mg), compared with placebo, in improving depressive symptoms in participants with treatment-resistant depression. The researchers found a positive effect of esketamine vs. saline placebo with some evidence of a dose-response curve, suggesting higher doses to be more effective. Some published studies suggest that chronic ketamine use causes impairments in working memory and other cognitive effects (Addiction. 2009 Jan;104[1]:77-87 and Front Psych. 2014 Dec 4;5:149), while others have found that ketamine does not cause memory deficits when given on up to six occasions (Int J Neuropsychopharmacol. 2014 Jun 25;17[11]:1805-13 and J Psychopharmacol. 2014 Apr 3;28[6]:536-44).

Another drug being studied for major depressive disorder is the investigational agent SAGE-547, an allosteric neurosteroid modulator of both synaptic and extrasynaptic GABA receptors. Preliminary results from a double-blind, placebo-controlled phase II trial in 21 patients with postpartum depression showed that the Hamilton Rating Scale for Depression (HAM-D) total score was reduced by SAGE-547, compared with placebo, at 60 hours (P = .008).

Buprenorphine, a partial mu opioid agonist commonly used in addiction treatment, may also play a future role in helping patients with treatment-resistant depression. One randomized study of 88 patients found that those who took very low doses of buprenorphine for 2 or 4 weeks had significantly lower scores on the Beck Suicide Ideation Scale, compared with their counterparts on placebo (Am J Psychiatry. 2016 May 1;173[5]:491-8).

Drugs with anti-inflammatory properties may also have a role. One study of 60 patients found that the tumor necrosis factor–alpha antagonist infliximab may benefit patients with treatment-resistant depression who have high inflammatory biomarkers at baseline (JAMA Psychiatry. 2013 Jan;70[1]:31-41).

“Active participation in clinical research efforts is critical to the advancement of future treatment approaches,” he said.

Dr. Sanacora disclosed having received consulting fees and/or research agreements from numerous industry sources. In addition, free medication was provided to Dr. Sanacora by sanofi‐aventis for a study sponsored by the National Institutes of Health.

EXPERT ANALYSIS FROM THE NPA PSYCHOPHARMACOLOGY UPDATE

Light therapy eases Parkinson’s-related sleep disturbances

Light therapy significantly reduced excessive daytime sleepiness, improved sleep quality, decreased overnight awakenings, shortened sleep latency, enhanced daytime alertness and activity level, and improved motor symptoms in patients with Parkinson’s disease, according to a report published online Feb. 20 in JAMA Neurology.

The noninvasive, nonpharmacologic treatment was well tolerated, and patient adherence was excellent in a small, multicenter, randomized controlled trial. Light therapy is widely available as a treatment for several sleep and psychiatric disorders and is “relatively easy to prescribe and incorporate into a clinical practice,” said Aleksandar Videnovic, MD, of the department of neurology at Massachusetts General Hospital and the division of sleep medicine at Harvard Medical School, both in Boston, and his associates.

To assess the safety and efficacy of light therapy as a novel treatment for PD, they studied 31 adults (age range, 32-77 years) who had a mean disease duration of 6 years. These study participants were randomly assigned to use 1 hour of exposure to 10,000 lux of bright light (16 patients in the intervention group) or 1 hour of exposure to less than 300 lux of dim red light (15 control subjects) every morning and every afternoon for 2 weeks.

The study participants – 13 men and 18 women – also wore actigraphy monitors all day and all night, completed daily sleep diaries, and noted daytime sleepiness in a log every 2 hours, 3 days per week.

Bright light significantly improved excessive daytime sleepiness as measured by the Epworth Sleepiness Scale and self-reported alertness during wake time, as well as several sleep metrics such as overall sleep quality, overnight awakenings, and ease of falling asleep. All the patients in the intervention group reported being more refreshed in the mornings during the study period, as compared with baseline.

Light therapy also improved overall PD severity as measured by the Unified Parkinson’s Disease Rating Scale, particularly in scores related to activities of daily living and motor symptoms. Moreover, this effect persisted during the 2-week washout period after treatment was discontinued, Dr. Videnovic and his associates said (JAMA Neurol. 2017 Feb 20. doi: 10.1001/jamaneurol.2016.5192).

The treatment was well tolerated. In the intervention group, one patient reported headache and another sleepiness, and in the control group one patient reported itchy eyes. The effects resolved spontaneously, and none led to treatment withdrawal.

“Based on these results, the next logical step is to optimize various parameters of light therapy (e.g., intensity, duration, and wavelength) not only for impaired sleep and alertness but also for other motor and nonmotor manifestation of PD,” the investigators wrote.

A major limitation of this study was that exposure to ambient light throughout the day was not measured. Some people in the control group received as much or even more light exposure than those assigned to bright-light therapy. “Future studies may be more strict in controlling such exposures,” Dr. Videnovic and his associates said.

This study was supported by the National Parkinson Foundation and the National Institutes of Health. Dr. Videnovic reported having no relevant financial disclosures. One of his associates reported ties to Merck, Phillips, Eisai, and Teva.

The study by Dr. Videnovic and his associates is important because it introduces a new concept into the much-studied phenomenon of sleep disturbances in Parkinson’s disease.

The authors demonstrated that chronobiological interventions can be used therapeutically in PD. Accounting for circadian physiology also sets a new standard for future studies of sleep, nighttime wakefulness, and daytime function not only in PD but, it is hoped, in other diseases as well.

Birgit Högl, MD, is with the department of neurology at the Medical University of Innsbruck (Austria). She reported receiving honoraria as a speaker, advisory board member, or consultant from UCB, Otsuka, Lundbeck, Lilly, Axovant, AbbVie, Mundipharma, Benevolent Bio, and Janssen Cilag, and travel support from Habel Medizintechnik and Vivisol. Dr. Högl made these remarks in an editorial (JAMA Neurol. 2017 Feb 20. doi: 10.1001/jamaneurol.2016.5519) accompanying the report by Dr. Videnovic and his colleagues.

The study by Dr. Videnovic and his associates is important because it introduces a new concept into the much-studied phenomenon of sleep disturbances in Parkinson’s disease.

The authors demonstrated that chronobiological interventions can be used therapeutically in PD. Accounting for circadian physiology also sets a new standard for future studies of sleep, nighttime wakefulness, and daytime function not only in PD but, it is hoped, in other diseases as well.

Light therapy significantly reduced excessive daytime sleepiness, improved sleep quality, decreased overnight awakenings, shortened sleep latency, enhanced daytime alertness and activity level, and improved motor symptoms in patients with Parkinson’s disease, according to a report published online Feb. 20 in JAMA Neurology.

The noninvasive, nonpharmacologic treatment was well tolerated, and patient adherence was excellent in a small, multicenter, randomized controlled trial. Light therapy is widely available as a treatment for several sleep and psychiatric disorders and is “relatively easy to prescribe and incorporate into a clinical practice,” said Aleksandar Videnovic, MD, of the department of neurology at Massachusetts General Hospital and the division of sleep medicine at Harvard Medical School, both in Boston, and his associates.

To assess the safety and efficacy of light therapy as a novel treatment for PD, they studied 31 adults (age range, 32-77 years) who had a mean disease duration of 6 years. These study participants were randomly assigned to 1 hour of exposure to 10,000 lux of bright light (16 patients in the intervention group) or 1 hour of exposure to less than 300 lux of dim red light (15 control subjects) every morning and every afternoon for 2 weeks.

The study participants – 13 men and 18 women – also wore actigraphy monitors all day and all night, completed daily sleep diaries, and noted daytime sleepiness in a log every 2 hours, 3 days per week.

Bright light significantly improved excessive daytime sleepiness as measured by the Epworth Sleepiness Scale and self-reported alertness during wake time, as well as several sleep metrics such as overall sleep quality, overnight awakenings, and ease of falling asleep. All the patients in the intervention group reported being more refreshed in the mornings during the study period, as compared with baseline.

Light therapy also improved overall PD severity as measured by the Unified Parkinson’s Disease Rating Scale, particularly in scores related to activities of daily living and motor symptoms. Moreover, this effect persisted during the 2-week washout period after treatment was discontinued, Dr. Videnovic and his associates said (JAMA Neurol. 2017 Feb 20. doi: 10.1001/jamaneurol.2016.5192).

The treatment was well tolerated. In the intervention group, one patient reported headache and another sleepiness, and in the control group one patient reported itchy eyes. The effects resolved spontaneously, and none led to treatment withdrawal.

“Based on these results, the next logical step is to optimize various parameters of light therapy (e.g., intensity, duration, and wavelength) not only for impaired sleep and alertness but also for other motor and nonmotor manifestation of PD,” the investigators wrote.

A major limitation of this study was that exposure to ambient light throughout the day was not measured. Some people in the control group received as much or even more light exposure than those assigned to bright-light therapy. “Future studies may be more strict in controlling such exposures,” Dr. Videnovic and his associates said.

This study was supported by the National Parkinson Foundation and the National Institutes of Health. Dr. Videnovic reported having no relevant financial disclosures. One of his associates reported ties to Merck, Phillips, Eisai, and Teva.

FROM JAMA NEUROLOGY

Key clinical point:

Major finding: Compared with a control condition, bright light significantly improved excessive daytime sleepiness as measured by the Epworth Sleepiness Scale and self-reported alertness during wake time.

Data source: A randomized controlled trial involving 31 adults with Parkinson’s disease–related sleep disturbances.

Disclosures: This study was supported by the National Parkinson Foundation and the National Institutes of Health. Dr. Videnovic reported having no relevant financial disclosures. One of his associates reported ties to Merck, Phillips, Eisai, and Teva.

Safety of Superior Labrum Anterior and Posterior (SLAP) Repair Posterior to Biceps Tendon Is Improved With a Percutaneous Approach

Take-Home Points

- Anchors placed posterior to the biceps during SLAP repair are at risk for glenoid vault penetration and/or suprascapular nerve (SSN) injury.

- The risks for vault penetration and SSN injury are reduced by using a Port of Wilmington (PW) portal instead of an anterior portal.

- A percutaneous PW portal is safe and passes through the rotator cuff muscle only.

Since being classified by Snyder and colleagues,1 various arthroscopic techniques have been used to repair superior labrum anterior and posterior (SLAP) tears, particularly type II tears. Despite being commonly performed, repairs of SLAP lesions remain challenging. There is high variability in the rate of good/excellent functional outcomes and athletes’ return to previous level of play after SLAP repairs.2,3 Furthermore, the rate of complications after SLAP repair is as high as 5%.4

One of the most common complications of repair of a type II SLAP tear is nerve injury.4 In particular, suprascapular nerve (SSN) injury has occurred after arthroscopic repair of SLAP tears.5,6 Three cadaveric studies have demonstrated that glenoid vault penetration is common during placement of knotted anchors for SLAP repair and that the SSN is at risk during placement of these anchors.7-9 However, 2 of the 3 studies used only an anterior portal in their evaluation of anchor placement. Safety of anchor placement posterior to the biceps tendon may be improved with a percutaneous approach using a Port of Wilmington (PW) portal.10,11 No studies have evaluated the risk of glenoid vault penetration and SSN injury with shorter knotless anchors.

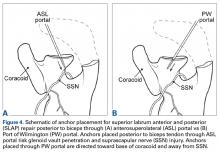

We conducted a study to compare a standard anterosuperolateral (ASL) portal with a percutaneous PW portal for knotless anchors placed posterior to the biceps tendon during repair of SLAP tears. We hypothesized that anchors placed through the PW portal would be less likely to penetrate the glenoid vault and would be farther from the SSN in the event of bone penetration.

Materials and Methods

Six matched pairs of fresh human cadaveric shoulders were used in this study. Each specimen included the scapula, the clavicle, and the humerus. All 6 specimens were male, and their mean age was 41.2 years (range, 23-59 years). Shoulder arthroscopy was performed for placement of SLAP anchors, and open dissection followed.

Anchor Placement

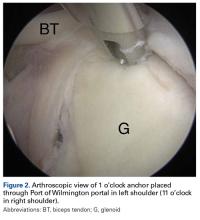

The scapula was clamped and the shoulder placed in the lateral decubitus position with 30° of abduction, 20° of forward flexion, and neutral rotation.10 A standard posterior glenohumeral viewing portal was established and a 30° arthroscope inserted. Within each matched pair, one shoulder was randomly assigned to anchor placement through an ASL portal and the other to anchor placement through a PW portal. Two anchors were placed in the superior glenoid to simulate repair of a posterior SLAP tear.11 Each was a 2.9-mm short (12.5-mm) knotless anchor (BioComposite PushLock; Arthrex) that included a polyetheretherketone (PEEK) eyelet for threading sutures before anchor placement. A drill guide was inserted according to manufacturer guidelines, and a 2.9-mm drill was used to make a bone socket 18 mm deep. The anchor eyelet was loaded with suture tape (Labral Tape; Arthrex), and the anchor and suture were inserted into the socket. The sutures were left uncut to aid in anchor visualization during open dissection. On a right shoulder, the first anchor was placed just posterior to the biceps tendon, at 11 o’clock, and the second anchor about 1 cm posterior to the first, at 10 o’clock. All anchors were placed by an arthroscopy fellowship–trained shoulder surgeon. Before placement, anchor location was confirmed by another arthroscopy fellowship–trained shoulder surgeon.

The ASL portal was created, with an 18-gauge spinal needle and an outside-in technique, about 1 cm lateral to the anterolateral corner of the acromion.

In the opposite shoulder, the PW portal was created, with a percutaneous technique, about 1 cm anterior and 1 cm lateral to the posterolateral corner of the acromion. An 18-gauge spinal needle was inserted to allow a 45° angle of approach to the posterosuperior glenoid.11

Cadaveric Dissection

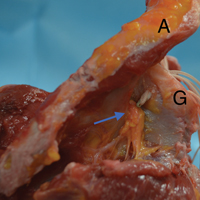

After anchor placement, another shoulder surgeon performed the dissection. Skin, subcutaneous tissue, deltoid, and clavicle were removed. In the percutaneous specimens, PW portal location relative to rotator cuff was recorded before cuff removal. After overlying soft tissues were removed from a specimen, the anchors were examined for glenoid vault penetration. In the setting of vault penetration, digital calipers were used to measure the shortest distance from anchor to SSN.

Results

In the ASL portal group, 8 (66.7%) of 12 anchors (4/6 at 11 o’clock, 4/6 at 10 o’clock) penetrated the medial glenoid vault.

In the PW portal group, 2 (16.7%) of 12 anchors (1/6 at 11 o’clock, 1/6 at 10 o’clock, both from a single specimen) penetrated the medial glenoid vault. In each case, the eyelet rather than the anchor itself penetrated the vault. In these 2 cases, distance to SSN was 20 mm for the 11 o’clock anchor and 8 mm for the 10 o’clock anchor (Table). Of the 6 PW portals, 3 passed through the supraspinatus muscle, 2 through the infraspinatus musculotendinous junction, and 1 through the infraspinatus muscle.
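The penetration rates above follow directly from the reported counts, and the short sketch below recomputes them. It also runs a two-sided Fisher exact test on the resulting 2 × 2 table. That test is not reported in the article and is included here only as an illustration: it assumes the 24 anchors are independent observations, which they are not (2 anchors per shoulder, shoulders matched in pairs), so the p-value should be read as exploratory at most. The function and variable names are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of all tables (with the same
    margins) that are no more likely than the observed one.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    denominator = comb(row1 + row2, col1)

    def weight(k):
        # Unnormalized probability of k events in row 1 (integer arithmetic).
        return comb(row1, k) * comb(row2, col1 - k)

    observed = weight(a)
    k_min = max(0, col1 - row2)
    k_max = min(row1, col1)
    tail = sum(weight(k) for k in range(k_min, k_max + 1) if weight(k) <= observed)
    return tail / denominator


# Counts taken from the Results section.
asl_penetrated, asl_total = 8, 12  # ASL portal group
pw_penetrated, pw_total = 2, 12    # percutaneous PW portal group

asl_rate = asl_penetrated / asl_total
pw_rate = pw_penetrated / pw_total

# Illustrative only: treats anchors as independent, which the design does not guarantee.
p_value = fisher_exact_two_sided(
    asl_penetrated, asl_total - asl_penetrated,
    pw_penetrated, pw_total - pw_penetrated,
)

print(f"ASL: {asl_rate:.1%}, PW: {pw_rate:.1%}, exploratory p = {p_value:.3f}")
```

An analysis that respected the matched-pair design would need the per-specimen data, which are referenced in the Table but not reproduced here.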

Discussion

Our study findings support the hypothesis that SLAP repair anchors placed posterior to the biceps tendon are more likely to remain in bone with use of a percutaneous approach relative to an ASL approach. Our findings also support the growing body of evidence that such anchors placed with an anterior approach increase the risk for SSN injury.

Three other cadaveric studies have evaluated anchor placement for SLAP repair. Chan and colleagues7 evaluated drill penetration during bone socket preparation for SLAP repair in 21 matched pairs of formalin-embalmed cadavers. A 20-mm drill was used for correspondence to a 14.5-mm anchor, though no anchors were inserted, and sockets were created in an open manner. Through a mimicked ASL portal, 1 socket was made anterior to the biceps tendon, at 1 o’clock; then, through a mimicked PW portal, 2 sockets were made posterior to the tendon, at 11 o’clock and 9 to 10 o’clock. Glenoid vault penetration occurred in 29% of the 42 anterior sockets, but in only 1 (2.4%) was the SSN touched. Penetration did not occur with the 11 o’clock sockets. The 9 to 10 o’clock socket was at highest risk for SSN injury (9.5%, 4 cases). The study was limited by lack of anchor placement and open creation of bone sockets in embalmed cadavers.

Koh and colleagues8 evaluated arthroscopic placement of anterior SLAP anchors in 6 matched pairs of fresh-frozen cadavers. Through an ASL portal, each 14.5-mm knotted anchor was placed anterior to the biceps tendon, at 1 o’clock. As in the study by Chan and colleagues,7 drill depth was 20 mm. Notably, anchors were seated 2 mm beyond manufacturer recommendations, and the cadavers were of Asian origin, likely indicating smaller glenoids compared to specimens from North America or Europe. All 12 anchors penetrated the glenoid vault; mean distance to SSN was 3.1 mm.

Morgan and colleagues9 compared anterior and ASL portals created for SLAP repairs in 10 matched-pair cadavers. Anchors were placed at 1 o’clock, 11 o’clock, and 10 o’clock. As in the studies by Chan and colleagues7 and Koh and colleagues,8 14.5-mm knotted anchors were used. One anterior anchor (10%) placed through an ASL portal penetrated the cortex by 1 mm, and 2 anterior anchors (20%) placed through anterior portals penetrated the cortex (1 was completely out of the bone). Overall, 65% of 11 o’clock anchors and 100% of 10 o’clock anchors violated the glenoid vault. With the 11 o’clock anchors, mean distance to SSN was 6 mm for ASL portals and 4.2 mm for anterior portals; with the 10 o’clock anchors, mean distance to SSN was 8 mm for ASL portals and 2.1 mm for anterior portals.

Overall, the results of these 3 studies suggest that, with use of ASL portals, placement of SLAP anchors anterior to the biceps tendon is safe. Using the same portals, however, anchors placed posterior to the tendon are at higher risk for glenoid vault penetration. Supporting these findings are our study’s penetration rates: 66.7% for anchors placed through ASL portals and 16.7% for anchors placed through percutaneous PW portals. The different rates are not surprising given that the coracoid process projects anterior to the glenoid and provides additional bone stock for placement of anchors anteriorly vs posteriorly. Therefore, with percutaneous PW portals, the approach angle directs the anchor toward the bone of the coracoid base. Furthermore, the SSN passes nearest the posterior aspect of the glenoid. In a study by Shishido and Kikuchi,12 the distance from the posterior rim of the glenoid to the SSN was 18 mm, and from the superior rim was 29 mm. Therefore, anchors placed with an anterior approach naturally are directed toward the SSN.

In addition to portal placement and approach angle, anchor length likely affects the risks for glenoid vault penetration and SSN injury.

One limitation of this study was the small number of cadavers, all of which were male. Female cadavers and cadavers of other ethnic origins likely have smaller glenoid vaults, and thus their inclusion would have altered our results. This issue was well described in the studies mentioned in this article. Because our goal was simply to compare ASL portals with percutaneous PW portals, we do not think this limitation changes the finding that the risks for glenoid vault penetration and SSN injury are reduced with use of PW portals for anchors placed posterior to the biceps tendon.

Conclusion

This study was the first to examine glenoid vault penetration and SSN proximity with short anchors for SLAP repair. The risk for glenoid vault penetration during repair of SLAP tears posterior to the biceps tendon was reduced by anchor placement with a percutaneous posterior approach. The percutaneous posterior approach also directs the anchor away from the SSN.

Am J Orthop. 2017;46(1):E60-E64. Copyright Frontline Medical Communications Inc. 2017. All rights reserved.

1. Snyder SJ, Banas MP, Karzel RP. An analysis of 140 injuries to the superior glenoid labrum. J Shoulder Elbow Surg. 1995;4(4):243-248.

2. Denard PJ, Lädermann A, Burkhart SS. Long-term outcome after arthroscopic repair of type II SLAP lesions: results according to age and workers’ compensation status. Arthroscopy. 2012;28(4):451-457.

3. Gorantla K, Gill C, Wright RW. The outcome of type II SLAP repair: a systematic review. Arthroscopy. 2010;26(4):537-545.

4. Weber SC, Martin DF, Seiler JG 3rd, Harrast JJ. Superior labrum anterior and posterior lesions of the shoulder: incidence rates, complications, and outcomes as reported by American Board of Orthopedic Surgery Part II candidates. Am J Sports Med. 2012;40(7):1538-1543.

5. Kim SH, Koh YG, Sung CH, Moon HK, Park YS. Iatrogenic suprascapular nerve injury after repair of type II SLAP lesion. Arthroscopy. 2010;26(7):1005-1008.

6. Yoo JC, Lee YS, Ahn JH, Park JH, Kang HJ, Koh KH. Isolated suprascapular nerve injury below the spinoglenoid notch after SLAP repair. J Shoulder Elbow Surg. 2009;18(4):e27-e29.

7. Chan H, Beaupre LA, Bouliane MJ. Injury of the suprascapular nerve during arthroscopic repair of superior labral tears: an anatomic study. J Shoulder Elbow Surg. 2010;19(5):709-715.

8. Koh KH, Park WH, Lim TK, Yoo JC. Medial perforation of the glenoid neck following SLAP repair places the suprascapular nerve at risk: a cadaveric study. J Shoulder Elbow Surg. 2011;20(2):245-250.

9. Morgan RT, Henn RF 3rd, Paryavi E, Dreese J. Injury to the suprascapular nerve during superior labrum anterior and posterior repair: is a rotator interval portal safer than an anterosuperior portal? Arthroscopy. 2014;30(11):1418-1423.

10. Lo IK, Lind CC, Burkhart SS. Glenohumeral arthroscopy portals established using an outside-in technique: neurovascular anatomy at risk. Arthroscopy. 2004;20(6):596-602.

11. Morgan CD, Burkhart SS, Palmeri M, Gillespie M. Type II SLAP lesions: three subtypes and their relationships to superior instability and rotator cuff tears. Arthroscopy. 1998;14(6):553-565.

12. Shishido H, Kikuchi S. Injury of the suprascapular nerve in shoulder surgery: an anatomic study. J Shoulder Elbow Surg. 2001;10(4):372-376.

13. Uggen C, Wei A, Glousman RE, et al. Biomechanical comparison of knotless anchor repair versus simple suture repair for type II SLAP lesions. Arthroscopy. 2009;25(10):1085-1092.

14. Kim SH, Crater RB, Hargens AR. Movement-induced knot migration after anterior stabilization in the shoulder. Arthroscopy. 2013;29(3):485-490.

Take-Home Points

- Anchors placed posterior to the biceps during SLAP repair are at risk for glenoid vault penetration and/or suprascapular nerve (SSN) injury.

- Vault penetration and SSN injury are avoided by using a Port of Wilmington (PW) portal instead of an anterior portal.

- A percutaneous PW portal is safe and passes through the rotator cuff muscle only.

Since being classified by Snyder and colleagues,1 various arthroscopic techniques have been used to repair superior labrum anterior and posterior (SLAP) tears, particularly type II tears. Despite being commonly performed, repairs of SLAP lesions remain challenging. There is high variability in the rate of good/excellent functional outcomes and athletes’ return to previous level of play after SLAP repairs.2,3 Furthermore, the rate of complications after SLAP repair is as high as 5%.4

One of the most common complications of repair of a type II SLAP tear is nerve injury.4 In particular, suprascapular nerve (SSN) injury has occurred after arthroscopic repair of SLAP tears.5,6 Three cadaveric studies have demonstrated that glenoid vault penetration is common during placement of knotted anchors for SLAP repair and that the SSN is at risk during placement of these anchors.7-9 However, 2 of the 3 studies used only an anterior portal in their evaluation of anchor placement. Safety of anchor placement posterior to the biceps tendon may be improved with a percutaneous approach using a Port of Wilmington (PW) portal.10,11 No studies have evaluated the risk of glenoid vault penetration and SSN injury with shorter knotless anchors.

We conducted a study to compare a standard anterosuperolateral (ASL) portal with a percutaneous PW portal for knotless anchors placed posterior to the biceps tendon during repair of SLAP tears. We hypothesized that anchors placed through the PW portal would be less likely to penetrate the glenoid vault and would be farther from the SSN in the event of bone penetration.

Materials and Methods

Six matched pairs of fresh human cadaveric shoulders were used in this study. Each specimen included the scapula, the clavicle, and the humerus. All 6 specimens were male, and their mean age was 41.2 years (range, 23-59 years). Shoulder arthroscopy was performed for placement of SLAP anchors, and open dissection followed.

Anchor Placement

The scapula was clamped and the shoulder placed in the lateral decubitus position with 30° of abduction, 20° of forward flexion, and neutral rotation.10 A standard posterior glenohumeral viewing portal was established and a 30° arthroscope inserted. Both shoulders of each matched pair were randomly assigned to anchor placement through either an ASL portal or a PW portal. Two anchors were placed in the superior glenoid to simulate repair of a posterior SLAP tear.11 Each was a 2.9-mm short (12.5-mm) knotless anchor (BioComposite PushLock; Arthrex) that included a polyetheretherketone (PEEK) eyelet for threading sutures before anchor placement. A drill guide was inserted according to manufacturer guidelines, and a 2.9-mm drill was used to make a bone socket 18 mm deep. The anchor eyelet was loaded with suture tape (Labral Tape; Arthrex), and the anchor and suture were inserted into the socket. The sutures were left uncut to aid in anchor visualization during open dissection. On a right shoulder, the first anchor was placed just posterior to the biceps tendon, at 11 o’clock, and the second anchor about 1 cm posterior to the first, at 10 o’clock. All anchors were placed by an arthroscopy fellowship–trained shoulder surgeon. Before placement, anchor location was confirmed by another arthroscopy fellowship–trained shoulder surgeon.

The ASL portal was created, with an 18-gauge spinal needle and an outside-in technique, about 1 cm lateral to the anterolateral corner of the acromion.

In the opposite shoulder, the PW portal was created, with a percutaneous technique, about 1 cm anterior and 1 cm lateral to the posterolateral corner of the acromion. An 18-gauge spinal needle was inserted to allow a 45° angle of approach to the posterosuperior glenoid.11

Cadaveric Dissection

After anchor placement, another shoulder surgeon performed the dissection. Skin, subcutaneous tissue, deltoid, and clavicle were removed. In the percutaneous specimens, PW portal location relative to rotator cuff was recorded before cuff removal. After overlying soft tissues were removed from a specimen, the anchors were examined for glenoid vault penetration. In the setting of vault penetration, digital calipers were used to measure the shortest distance from anchor to SSN.

Results

In the ASL portal group, 8 (66.7%) of 12 anchors (4/6 at 11 o’clock, 4/6 at 10 o’clock) penetrated the medial glenoid vault.

In the PW portal group, 2 (16.7%) of 12 anchors (1/6 at 11 o’clock, 1/6 at 10 o’clock, both from a single specimen) penetrated the medial glenoid vault. Actually, in each case the eyelet and not the anchor penetrated the vault. In the penetration cases, distance to SSN was 20 mm for the 11 o’clock anchor and 8 mm for the 10 o’clock anchor (Table). Of the 6 portals, 3 passed through the supraspinatus muscle, 2 through the infraspinatus musculotendinous junction, and 1 through the infraspinatus muscle.

Discussion

Our study findings support the hypothesis that SLAP repair anchors placed posterior to the biceps tendon are more likely to remain in bone with use of a percutaneous approach relative to an ASL approach. Our findings also support the growing body of evidence that such anchors placed with an anterior approach increase the risk for SSN injury.

Three other cadaveric studies have evaluated anchor placement for SLAP repair. Chan and colleagues7 evaluated drill penetration during bone socket preparation for SLAP repair in 21 matched pairs of formalin-embalmed cadavers. A 20-mm drill was used for correspondence to a 14.5-mm anchor, though no anchors were inserted, and sockets were created in an open manner. Through a mimicked ASL portal, 1 socket was made anterior to the biceps tendon, at 1 o’clock; then, through a mimicked PW portal, 2 sockets were made posterior to the tendon, at 11 o’clock and 9 to 10 o’clock. Glenoid vault penetration occurred in 29% of the 42 anterior sockets, but only 1 anchor (2.4%) touched the SSN. Penetration did not occur with the 11 o’clock anchors. The 9 to 10 o’clock anchor was at highest risk for SSN injury (9.5%, 4 cases). The study was limited by lack of anchor placement and open creation of bone sockets in embalmed cadavers.

Koh and colleagues8 evaluated arthroscopic placement of anterior SLAP anchors in 6 matched pairs of fresh-frozen cadavers. Through an ASL portal, each 14.5-mm knotted anchor was placed anterior to the biceps tendon, at 1 o’clock. As in the study by Chan and colleagues,7 drill depth was 20 mm. Notably, anchors were seated 2 mm beyond manufacturer recommendations, and the cadavers were of Asian origin, likely indicating smaller glenoids compared to specimens from North America or Europe. All 12 anchors penetrated the glenoid vault; mean distance to SSN was 3.1 mm.

Morgan and colleagues9 compared anterior and ASL portals created for SLAP repairs in 10 matched-pair cadavers. Anchors were placed at 1 o’clock, 11 o’clock, and 10 o’clock. As in the studies by Chan and colleagues7 and Koh and colleagues,8 14.5-mm knotted anchors were used. One anterior anchor (10%) placed through an ASL portal penetrated the cortex by 1 mm, and 2 anterior anchors (20%) placed through anterior portals penetrated the cortex (1 was completely out of the bone). Overall, 65% of 11 o’clock anchors and 100% of 10 o’clock anchors violated the glenoid vault. With the 11 o’clock anchors, mean distance to SSN was 6 mm for ASL portals and 4.2 mm for anterior portals; with the 10 o’clock anchors, mean distance to SSN was 8 mm for ASL portals and 2.1 mm for anterior portals.

Overall, the results of these 3 studies suggest that, with use of ASL portals, placement of SLAP anchors anterior to the biceps tendon is safe. Using the same portals, however, anchors placed posterior to the tendon are at higher risk for glenoid vault penetration. Supporting these findings are our study’s penetration rates: 66.7% for anchors placed through ASL portals and 16.7% for anchors placed through percutaneous PW portals. The different rates are not surprising given that the coracoid process projects anterior to the glenoid and provides additional bone stock for placement of anchors anteriorly vs posteriorly. Therefore, with percutaneous PW portals, the approach angle directs the anchor toward the bone of the coracoid base. Furthermore, the SSN passes nearest the posterior aspect of the glenoid. In a study by Shishido and Kikuchi,12 the distance from the posterior rim of the glenoid to the SSN was 18 mm, and from the superior rim was 29 mm. Therefore, anchors placed with an anterior approach naturally are directed toward the SSN.

In addition to portal placement and approach angle, anchor length likely affects the risks for glenoid vault penetration and SSN injury.

One limitation of this study was the small number of cadavers, all of which were male. Female cadavers and cadavers of other ethnic origins likely have smaller glenoid vaults, and thus their inclusion would have altered our results. This issue was well described in studies mentioned in this article, and our goal was simply to compare ASL portals with percutaneous PW portals, so we think it does not change the fact that the risks for glenoid vault penetration and SSN injury are reduced with use of PW portals for anchors placed posterior to the biceps tendon.

Conclusion

This study was the first to examine glenoid vault penetration and SSN proximity with short anchors for SLAP repair. The risk for glenoid vault penetration during repair of SLAP tears posterior to the biceps tendon was reduced by anchor placement with a percutaneous posterior approach. The percutaneous posterior approach also directs the anchor away from the SSN.

Am J Orthop. 2017;46(1):E60-E64. Copyright Frontline Medical Communications Inc. 2017. All rights reserved.

Take-Home Points

- Anchors placed posterior to the biceps during SLAP repair are at risk for glenoid vault penetration and/or suprascapular nerve (SSN) injury.

- Vault penetration and SSN injury are avoided by using a Port of Wilmington (PW) portal instead of an anterior portal.

- A percutaneous PW portal is safe and passes through the rotator cuff muscle only.

Since being classified by Snyder and colleagues,1 various arthroscopic techniques have been used to repair superior labrum anterior and posterior (SLAP) tears, particularly type II tears. Despite being commonly performed, repairs of SLAP lesions remain challenging. There is high variability in the rate of good/excellent functional outcomes and athletes’ return to previous level of play after SLAP repairs.2,3 Furthermore, the rate of complications after SLAP repair is as high as 5%.4

One of the most common complications of repair of a type II SLAP tear is nerve injury.4 In particular, suprascapular nerve (SSN) injury has occurred after arthroscopic repair of SLAP tears.5,6 Three cadaveric studies have demonstrated that glenoid vault penetration is common during placement of knotted anchors for SLAP repair and that the SSN is at risk during placement of these anchors.7-9 However, 2 of the 3 studies used only an anterior portal in their evaluation of anchor placement. Safety of anchor placement posterior to the biceps tendon may be improved with a percutaneous approach using a Port of Wilmington (PW) portal.10,11 No studies have evaluated the risk of glenoid vault penetration and SSN injury with shorter knotless anchors.

We conducted a study to compare a standard anterosuperolateral (ASL) portal with a percutaneous PW portal for knotless anchors placed posterior to the biceps tendon during repair of SLAP tears. We hypothesized that anchors placed through the PW portal would be less likely to penetrate the glenoid vault and would be farther from the SSN in the event of bone penetration.

Materials and Methods

Six matched pairs of fresh human cadaveric shoulders were used in this study. Each specimen included the scapula, the clavicle, and the humerus. All 6 specimens were male, and their mean age was 41.2 years (range, 23-59 years). Shoulder arthroscopy was performed for placement of SLAP anchors, and open dissection followed.

Anchor Placement

The scapula was clamped and the shoulder placed in the lateral decubitus position with 30° of abduction, 20° of forward flexion, and neutral rotation.10 A standard posterior glenohumeral viewing portal was established and a 30° arthroscope inserted. Within each matched pair, one shoulder was randomly assigned to anchor placement through an ASL portal and the other to placement through a PW portal. Two anchors were placed in the superior glenoid to simulate repair of a posterior SLAP tear.11 Each was a 2.9-mm short (12.5-mm) knotless anchor (BioComposite PushLock; Arthrex) that included a polyetheretherketone (PEEK) eyelet for threading sutures before anchor placement. A drill guide was inserted according to manufacturer guidelines, and a 2.9-mm drill was used to make a bone socket 18 mm deep. The anchor eyelet was loaded with suture tape (Labral Tape; Arthrex), and the anchor and suture were inserted into the socket. The sutures were left uncut to aid in anchor visualization during open dissection. On a right shoulder, the first anchor was placed just posterior to the biceps tendon, at 11 o’clock, and the second anchor about 1 cm posterior to the first, at 10 o’clock. All anchors were placed by an arthroscopy fellowship–trained shoulder surgeon. Before placement, anchor location was confirmed by another arthroscopy fellowship–trained shoulder surgeon.

The ASL portal was created, with an 18-gauge spinal needle and an outside-in technique, about 1 cm lateral to the anterolateral corner of the acromion.

In the opposite shoulder, the PW portal was created, with a percutaneous technique, about 1 cm anterior and 1 cm lateral to the posterolateral corner of the acromion. An 18-gauge spinal needle was inserted to allow a 45° angle of approach to the posterosuperior glenoid.11

Cadaveric Dissection

After anchor placement, another shoulder surgeon performed the dissection. Skin, subcutaneous tissue, deltoid, and clavicle were removed. In the percutaneous specimens, PW portal location relative to rotator cuff was recorded before cuff removal. After overlying soft tissues were removed from a specimen, the anchors were examined for glenoid vault penetration. In the setting of vault penetration, digital calipers were used to measure the shortest distance from anchor to SSN.

Results

In the ASL portal group, 8 (66.7%) of 12 anchors (4/6 at 11 o’clock, 4/6 at 10 o’clock) penetrated the medial glenoid vault.

In the PW portal group, 2 (16.7%) of 12 anchors (1/6 at 11 o’clock, 1/6 at 10 o’clock, both from a single specimen) penetrated the medial glenoid vault. In each of these cases, the eyelet rather than the anchor body penetrated the vault. Distance to the SSN was 20 mm for the 11 o’clock anchor and 8 mm for the 10 o’clock anchor (Table). Of the 6 PW portals, 3 passed through the supraspinatus muscle, 2 through the infraspinatus musculotendinous junction, and 1 through the infraspinatus muscle.
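The penetration rates above follow directly from the reported counts. The short Python sketch below reproduces that arithmetic and, purely as an illustration, adds a two-sided Fisher exact test on the resulting 2×2 table. The test is not part of the reported analysis, and the function names (`rate`, `fisher_exact_two_sided`) are hypothetical. It also assumes that the 24 anchors are independent observations, which is only an approximation here because the shoulders were matched pairs and each shoulder received 2 anchors.

```python
from math import comb

# Counts taken from the Results section: anchors that penetrated the
# medial glenoid vault, out of 12 anchors per portal group.
asl_penetrated, asl_total = 8, 12
pw_penetrated, pw_total = 2, 12


def rate(events, total):
    """Proportion of anchors with the event (vault penetration)."""
    return events / total


def fisher_exact_two_sided(a, n1, c, n2):
    """Two-sided Fisher exact p-value for a 2x2 table.

    a of n1 events in group 1, c of n2 events in group 2.
    Assumes independent observations (an approximation for this design).
    """
    events = a + c

    def weight(k):
        # Number of tables with k events in group 1, margins fixed.
        return comb(n1, k) * comb(n2, events - k)

    observed = weight(a)
    k_min = max(0, events - n2)
    k_max = min(n1, events)
    # Sum every table at least as unlikely as the observed one.
    # Integer weights avoid floating-point ties.
    extreme = sum(
        weight(k) for k in range(k_min, k_max + 1) if weight(k) <= observed
    )
    return extreme / comb(n1 + n2, events)


asl_rate = rate(asl_penetrated, asl_total)
pw_rate = rate(pw_penetrated, pw_total)
risk_difference = asl_rate - pw_rate
relative_risk = pw_rate / asl_rate
p_value = fisher_exact_two_sided(asl_penetrated, asl_total, pw_penetrated, pw_total)

print(f"ASL penetration rate: {asl_rate:.1%}")
print(f"PW penetration rate:  {pw_rate:.1%}")
print(f"Absolute difference:  {risk_difference:.1%}")
print(f"Relative risk (PW vs ASL): {relative_risk:.2f}")
print(f"Illustrative Fisher exact p-value: {p_value:.3f}")
```

Because the anchors are clustered within specimens, any p-value from this sketch should be read as a rough guide rather than a formal result.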

Discussion

Our study findings support the hypothesis that SLAP repair anchors placed posterior to the biceps tendon are more likely to remain in bone with use of a percutaneous approach relative to an ASL approach. Our findings also support the growing body of evidence that such anchors placed with an anterior approach increase the risk for SSN injury.

Three other cadaveric studies have evaluated anchor placement for SLAP repair. Chan and colleagues7 evaluated drill penetration during bone socket preparation for SLAP repair in 21 matched pairs of formalin-embalmed cadavers. A 20-mm drill was used for correspondence to a 14.5-mm anchor, though no anchors were inserted, and sockets were created in an open manner. Through a mimicked ASL portal, 1 socket was made anterior to the biceps tendon, at 1 o’clock; then, through a mimicked PW portal, 2 sockets were made posterior to the tendon, at 11 o’clock and 9 to 10 o’clock. Glenoid vault penetration occurred in 29% of the 42 anterior sockets, but only 1 (2.4%) touched the SSN. Penetration did not occur with the 11 o’clock sockets. The 9 to 10 o’clock socket was at highest risk for SSN injury (9.5%, 4 cases). The study was limited by lack of anchor placement and open creation of bone sockets in embalmed cadavers.

Koh and colleagues8 evaluated arthroscopic placement of anterior SLAP anchors in 6 matched pairs of fresh-frozen cadavers. Through an ASL portal, each 14.5-mm knotted anchor was placed anterior to the biceps tendon, at 1 o’clock. As in the study by Chan and colleagues,7 drill depth was 20 mm. Notably, anchors were seated 2 mm beyond manufacturer recommendations, and the cadavers were of Asian origin, likely indicating smaller glenoids compared with specimens from North America or Europe. All 12 anchors penetrated the glenoid vault; mean distance to SSN was 3.1 mm.

Morgan and colleagues9 compared anterior and ASL portals created for SLAP repairs in 10 matched-pair cadavers. Anchors were placed at 1 o’clock, 11 o’clock, and 10 o’clock. As in the studies by Chan and colleagues7 and Koh and colleagues,8 14.5-mm knotted anchors were used. One anterior anchor (10%) placed through an ASL portal penetrated the cortex by 1 mm, and 2 anterior anchors (20%) placed through anterior portals penetrated the cortex (1 was completely out of the bone). Overall, 65% of 11 o’clock anchors and 100% of 10 o’clock anchors violated the glenoid vault. With the 11 o’clock anchors, mean distance to SSN was 6 mm for ASL portals and 4.2 mm for anterior portals; with the 10 o’clock anchors, mean distance to SSN was 8 mm for ASL portals and 2.1 mm for anterior portals.

Overall, the results of these 3 studies suggest that, with use of ASL portals, placement of SLAP anchors anterior to the biceps tendon is safe. Using the same portals, however, anchors placed posterior to the tendon are at higher risk for glenoid vault penetration. Supporting these findings are our study’s penetration rates: 66.7% for anchors placed through ASL portals and 16.7% for anchors placed through percutaneous PW portals. The different rates are not surprising given that the coracoid process projects anterior to the glenoid and provides additional bone stock for placement of anchors anteriorly vs posteriorly. With percutaneous PW portals, the approach angle therefore directs the anchor toward the bone of the coracoid base. Furthermore, the SSN passes nearest the posterior aspect of the glenoid. In a study by Shishido and Kikuchi,12 the distance to the SSN was 18 mm from the posterior rim of the glenoid and 29 mm from the superior rim. Anchors placed with an anterior approach are thus naturally directed toward the SSN.

In addition to portal placement and approach angle, anchor length likely affects the risks for glenoid vault penetration and SSN injury.

One limitation of this study was the small number of cadavers, all of which were male. Female cadavers and cadavers of other ethnic origins likely have smaller glenoid vaults, and their inclusion might have altered our results. This issue was well described in the studies mentioned in this article. Because our goal was simply to compare ASL portals with percutaneous PW portals, we think this limitation does not change our finding that the risks for glenoid vault penetration and SSN injury are reduced with use of PW portals for anchors placed posterior to the biceps tendon.

Conclusion

This study was the first to examine glenoid vault penetration and SSN proximity with short anchors for SLAP repair. The risk for glenoid vault penetration during repair of SLAP tears posterior to the biceps tendon was reduced by anchor placement with a percutaneous posterior approach. The percutaneous posterior approach also directs the anchor away from the SSN.

1. Snyder SJ, Banas MP, Karzel RP. An analysis of 140 injuries to the superior glenoid labrum. J Shoulder Elbow Surg. 1995;4(4):243-248.

2. Denard PJ, Lädermann A, Burkhart SS. Long-term outcome after arthroscopic repair of type II SLAP lesions: results according to age and workers’ compensation status. Arthroscopy. 2012;28(4):451-457.

3. Gorantla K, Gill C, Wright RW. The outcome of type II SLAP repair: a systematic review. Arthroscopy. 2010;26(4):537-545.

4. Weber SC, Martin DF, Seiler JG 3rd, Harrast JJ. Superior labrum anterior and posterior lesions of the shoulder: incidence rates, complications, and outcomes as reported by American Board of Orthopedic Surgery part II candidates. Am J Sports Med. 2012;40(7):1538-1543.

5. Kim SH, Koh YG, Sung CH, Moon HK, Park YS. Iatrogenic suprascapular nerve injury after repair of type II SLAP lesion. Arthroscopy. 2010;26(7):1005-1008.

6. Yoo JC, Lee YS, Ahn JH, Park JH, Kang HJ, Koh KH. Isolated suprascapular nerve injury below the spinoglenoid notch after SLAP repair. J Shoulder Elbow Surg. 2009;18(4):e27-e29.

7. Chan H, Beaupre LA, Bouliane MJ. Injury of the suprascapular nerve during arthroscopic repair of superior labral tears: an anatomic study. J Shoulder Elbow Surg. 2010;19(5):709-715.

8. Koh KH, Park WH, Lim TK, Yoo JC. Medial perforation of the glenoid neck following SLAP repair places the suprascapular nerve at risk: a cadaveric study. J Shoulder Elbow Surg. 2011;20(2):245-250.

9. Morgan RT, Henn RF 3rd, Paryavi E, Dreese J. Injury to the suprascapular nerve during superior labrum anterior and posterior repair: is a rotator interval portal safer than an anterosuperior portal? Arthroscopy. 2014;30(11):1418-1423.

10. Lo IK, Lind CC, Burkhart SS. Glenohumeral arthroscopy portals established using an outside-in technique: neurovascular anatomy at risk. Arthroscopy. 2004;20(6):596-602.

11. Morgan CD, Burkhart SS, Palmeri M, Gillespie M. Type II SLAP lesions: three subtypes and their relationships to superior instability and rotator cuff tears. Arthroscopy. 1998;14(6):553-565.

12. Shishido H, Kikuchi S. Injury of the suprascapular nerve in shoulder surgery: an anatomic study. J Shoulder Elbow Surg. 2001;10(4):372-376.

13. Uggen C, Wei A, Glousman RE, et al. Biomechanical comparison of knotless anchor repair versus simple suture repair for type II SLAP lesions. Arthroscopy. 2009;25(10):1085-1092.

14. Kim SH, Crater RB, Hargens AR. Movement-induced knot migration after anterior stabilization in the shoulder. Arthroscopy. 2013;29(3):485-490.

Medication for life

Some areas of psychiatry would benefit from more controversy. One of them is the prescription of antidepressants to young people dealing with romantic disappointments.

I have seen many young men and women given an antidepressant for the very painful, but ordinary, romantic break-ups characteristic of this phase of life, who then become habituated to the drug. They take the medication indefinitely, their brains accommodate neurophysiologically to the presence of the chemical, and they become unable to discontinue it without intolerable withdrawal symptoms that look like an underlying illness. A parallel phenomenon occurs not infrequently with the use of amphetamines (and other stimulants) for attention-deficit hyperactivity disorder that is at times mistakenly diagnosed in this age group.