Study Finds Isotretinoin Effective for Acne in Transgender Patients on Hormone Rx

TOPLINE:

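Isotretinoin appears to be an effective treatment for acne in transgender and gender-diverse individuals receiving masculinizing hormone therapy, but more information is needed on dosing and barriers to treatment.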

METHODOLOGY:

- Acne can be a side effect of masculinizing hormone therapy for transmasculine individuals. While isotretinoin is an effective treatment option for acne, its effectiveness and safety in transgender and gender-diverse individuals are not well understood.

- This retrospective case series included 55 patients (mean age, 25.4 years) at four medical centers who were undergoing masculinizing hormone therapy and were prescribed isotretinoin for acne associated with that therapy.

- Isotretinoin treatment was started a median of 22.1 months after hormone therapy was initiated and continued for a median of 6 months with a median cumulative dose of 132.7 mg/kg.

- Researchers assessed acne improvement, clearance, recurrence, adverse effects, and reasons for treatment discontinuation.

TAKEAWAY:

- Overall, 48 patients (87.3%) experienced improvement, and 26 (47.3%) achieved clearance during treatment. Patients who received a cumulative dose of ≥ 120 mg/kg were more likely than those who received < 120 mg/kg to experience improvement (97% vs 72.7%) and to achieve clearance (63.6% vs 22.7%).

- Among the 20 patients who achieved clearance and had any subsequent health care encounters (mean follow-up, 734.3 days), the risk for recurrence was 20% (four patients).

- Common adverse effects included dryness (80%), joint pain (14.5%), and headaches (10.9%). Other adverse effects included nosebleeds (9.1%) and depression (5.5%).

- Of the 22 patients with a cumulative dose < 120 mg/kg, 14 (63.6%) were lost to follow-up. Among those not lost to follow-up, 2 discontinued treatment because of transfer of care, 1 because of adverse effects, and 1 because of gender-affirming surgery and concerns about wound healing.
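The 120 mg/kg cut point refers to cumulative dose: the total milligrams of isotretinoin taken over the whole course, divided by body weight in kilograms. The Python sketch below shows that arithmetic. The function name, the 70 kg weight, and the daily doses are hypothetical values chosen only to illustrate the calculation, not figures from the study, and body weight is assumed constant over the course.

```python
def cumulative_dose_mg_per_kg(segments, weight_kg):
    """Return the cumulative isotretinoin dose in mg/kg (illustrative sketch).

    segments: iterable of (dose_mg_per_day, number_of_days) pairs, so that
    dose changes during a course can be represented.
    weight_kg: body weight, assumed constant over the course.
    """
    if weight_kg <= 0:
        raise ValueError("weight_kg must be positive")
    # Total milligrams taken across all dosing segments
    total_mg = sum(dose * days for dose, days in segments)
    return total_mg / weight_kg


# Hypothetical course: 70 kg patient, 40 mg/day for 30 days,
# then 60 mg/day for 150 days (about 6 months in total).
course = [(40, 30), (60, 150)]
cumulative = cumulative_dose_mg_per_kg(course, weight_kg=70)
print(f"{cumulative:.1f} mg/kg")                          # 145.7 mg/kg
print("meets 120 mg/kg threshold:", cumulative >= 120)    # True
```

Splitting the course into (dose, days) segments lets dose escalations or reductions be summed directly; a real record would also need to account for missed doses and weight changes, which this sketch ignores.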

IN PRACTICE:

“Although isotretinoin appears to be an effective treatment option for acne among individuals undergoing masculinizing hormone therapy, further efforts are needed to understand optimal dosing and treatment barriers to improve outcomes in transgender and gender-diverse individuals receiving testosterone,” the authors concluded.

SOURCE:

The study, led by James Choe, BS, Department of Dermatology, Brigham and Women’s Hospital, Harvard Medical School, Boston, was published online in JAMA Dermatology.

LIMITATIONS:

The study population was limited to four centers, and variability in clinician- and patient-reported acne outcomes and missing information could affect the reliability of the data. Because of the small sample size, the association of masculinizing hormone therapy regimens with outcomes could not be evaluated.

DISCLOSURES:

One author is supported by the National Institute of Arthritis and Musculoskeletal and Skin Diseases. Three authors reported receiving grants or personal fees from various sources. The other authors declared no conflicts of interest.

A version of this article first appeared on Medscape.com.

Hidradenitis Suppurativa: Clinical Outcomes for Bimekizumab Positive in Phase 3 Studies

TOPLINE:

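Bimekizumab was effective and well tolerated in patients with moderate to severe hidradenitis suppurativa (HS) in two phase 3 studies.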

METHODOLOGY:

- To assess the efficacy and safety of bimekizumab 320 mg, an interleukin (IL)-17A and IL-17F antagonist, in HS, researchers conducted two 48-week phase 3 trials, BE HEARD I (n = 505) and BE HEARD II (n = 509), which enrolled patients with moderate to severe HS and a history of inadequate response to systemic antibiotics.

- Patients were randomly assigned to one of four groups: bimekizumab every 2 weeks; bimekizumab every 2 weeks for 16 weeks, then every 4 weeks; bimekizumab every 4 weeks; or placebo for 16 weeks followed by bimekizumab every 2 weeks.

- The primary outcome was an HS clinical response of at least 50% (HiSCR50) at week 16, defined as at least a 50% reduction in total abscess and inflammatory nodule count with no increase from baseline in abscess or draining tunnel count.
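HiSCR is a responder definition applied to lesion counts at baseline and at the assessment visit. As conventionally defined, a patient achieves HiSCR50 with at least a 50% reduction in the combined abscess and inflammatory nodule (AN) count and no increase from baseline in abscess or draining tunnel count; HiSCR75 uses a 75% threshold. The Python sketch below encodes that rule. The class and function names are hypothetical, and the sketch does not model protocol-specific handling of missing data or rescue antibiotics.

```python
from dataclasses import dataclass


@dataclass
class LesionCounts:
    """Lesion counts recorded at one visit (hypothetical record layout)."""
    abscesses: int
    inflammatory_nodules: int
    draining_tunnels: int

    @property
    def an_count(self) -> int:
        # Total abscess and inflammatory nodule (AN) count
        return self.abscesses + self.inflammatory_nodules


def is_hiscr_responder(baseline: LesionCounts, visit: LesionCounts,
                       threshold: float = 0.50) -> bool:
    """Return True if the visit meets HiSCR at the given reduction threshold.

    threshold=0.50 corresponds to HiSCR50; threshold=0.75 to HiSCR75.
    """
    if baseline.an_count == 0:
        raise ValueError("baseline AN count must be positive")
    # Fractional reduction in AN count relative to baseline
    reduction = (baseline.an_count - visit.an_count) / baseline.an_count
    return (
        reduction >= threshold
        and visit.abscesses <= baseline.abscesses              # no new abscesses
        and visit.draining_tunnels <= baseline.draining_tunnels  # no new tunnels
    )


baseline = LesionCounts(abscesses=2, inflammatory_nodules=10, draining_tunnels=3)
week16 = LesionCounts(abscesses=1, inflammatory_nodules=5, draining_tunnels=3)
print(is_hiscr_responder(baseline, week16))                  # True (AN 12 -> 6, a 50% reduction)
print(is_hiscr_responder(baseline, week16, threshold=0.75))  # False
```

Note that a large AN reduction alone is not sufficient: a visit with fewer total lesions but more abscesses than at baseline is classed as a nonresponse.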

TAKEAWAY:

- A higher proportion of patients receiving bimekizumab every 2 weeks vs placebo achieved an HiSCR50 response at week 16 in the BE HEARD I (48% vs 29%; odds ratio [OR], 2.23; P = .006) and BE HEARD II (52% vs 32%; OR, 2.29; P = .0032) trials.

- Patients receiving bimekizumab every 4 weeks also had a higher HiSCR50 response rate at week 16 vs placebo in the BE HEARD II trial (54% vs 32%; OR, 2.42; P = .0038).

- At week 16, a higher proportion of patients receiving bimekizumab every 2 weeks vs placebo achieved at least a 75% HiSCR (HiSCR75) in both trials, and a higher proportion of those receiving bimekizumab every 4 weeks achieved HiSCR75 in the BE HEARD II trial.

- At week 48, 45%-68% of patients achieved HiSCR50 in both trials.

- Patients who received bimekizumab vs placebo for the initial 16 weeks had greater improvements in patient-reported outcomes, and bimekizumab was well tolerated with a low number of serious or severe treatment-emergent adverse events.

IN PRACTICE:

“Bimekizumab was well tolerated by patients with hidradenitis suppurativa and produced rapid and deep clinically meaningful responses that were maintained up to 48 weeks,” the authors wrote. “These data support the use of bimekizumab as a promising new therapeutic option for patients with moderate to severe hidradenitis suppurativa.”

SOURCE:

Alexa B. Kimball, MD, MPH, from Beth Israel Deaconess Medical Center and Harvard Medical School, Boston, led this study, which was published online in The Lancet.

LIMITATIONS:

The placebo-controlled period of these trials was relatively short (16 weeks), which may affect the interpretation of later efficacy data. The trials also lacked an active comparator group, and treatment efficacy was evaluated in the presence of rescue treatment with systemic antibiotics.

DISCLOSURES:

The studies were funded by bimekizumab manufacturer UCB Pharma. Seven authors disclosed being current or former employees of UCB Pharma. Other authors reported ties with multiple companies, including UCB Pharma.

A version of this article first appeared on Medscape.com.

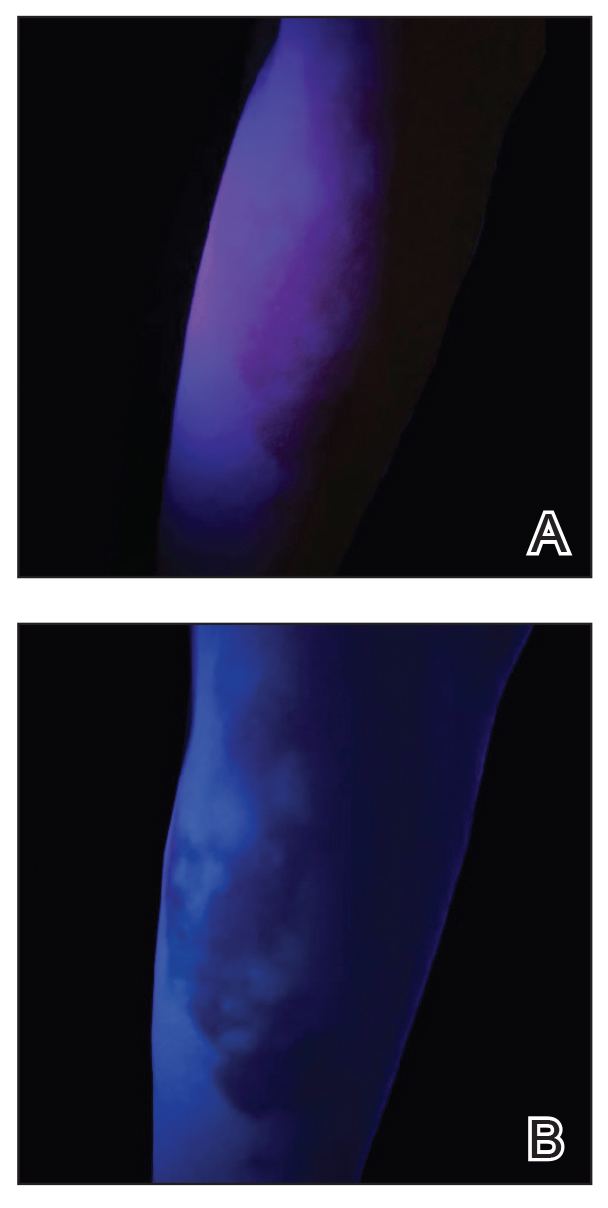

Erythematous Flaky Rash on the Toe

The Diagnosis: Necrolytic Migratory Erythema

Necrolytic migratory erythema (NME) is a waxing and waning rash associated with rare pancreatic neuroendocrine tumors called glucagonomas. It is characterized by pruritic and painful, well-demarcated, erythematous plaques that manifest in the intertriginous areas and on the perineum and buttocks.1 Because the rash evolves over time, the histopathologic findings in NME vary depending on the stage of the cutaneous lesions at the time of biopsy.2 Multiple dyskeratotic keratinocytes spanning all epidermal layers may be a diagnostic clue in early lesions of NME.3 Typical features of long-standing lesions include confluent parakeratosis, psoriasiform hyperplasia with mild or absent spongiosis, and upper epidermal necrosis with keratinocyte vacuolization and pallor.4 Morphologic features that are present before epidermal vacuolation and necrosis develop frequently are misattributed to psoriasis or eczema. Long-standing lesions also may develop a neutrophilic infiltrate with subcorneal and intraepidermal pustules.2 Other common features include a discrete perivascular lymphocytic infiltrate and an erosive or encrusted epidermis.5 Although direct immunofluorescence typically is negative, nonspecific findings can be seen, including apoptotic keratinocytes labeling with fibrinogen and C3.6 Biopsies also have shown scattered, clumped, IgM-positive cytoid bodies at the dermal-epidermal junction (DEJ).5,6

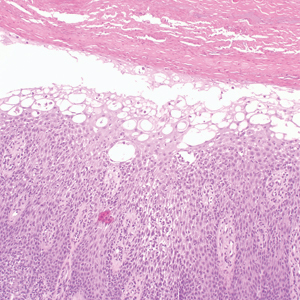

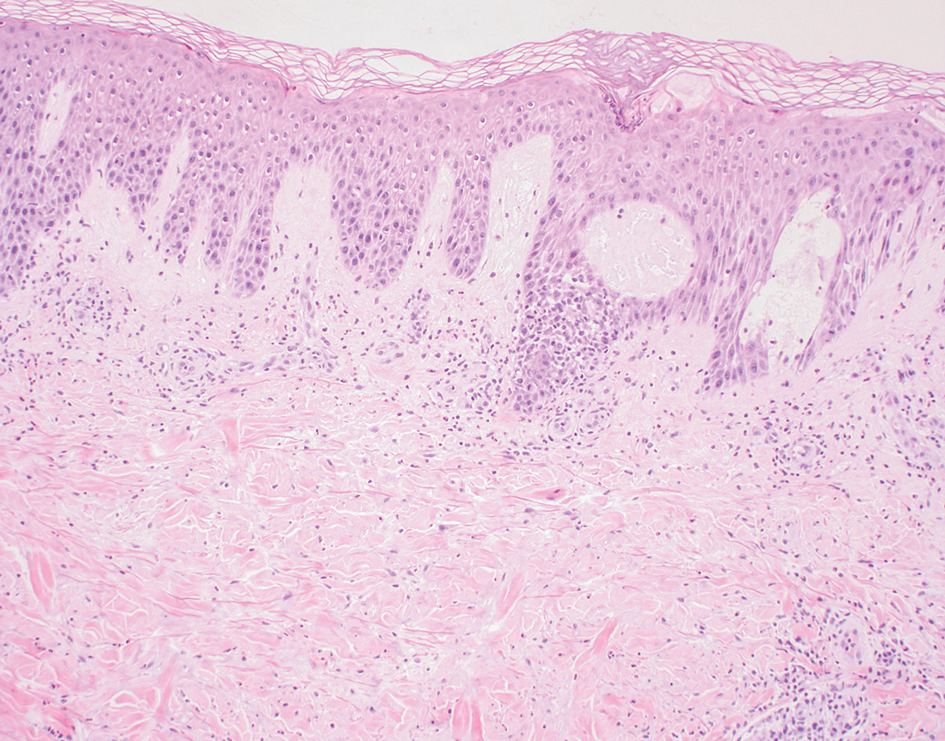

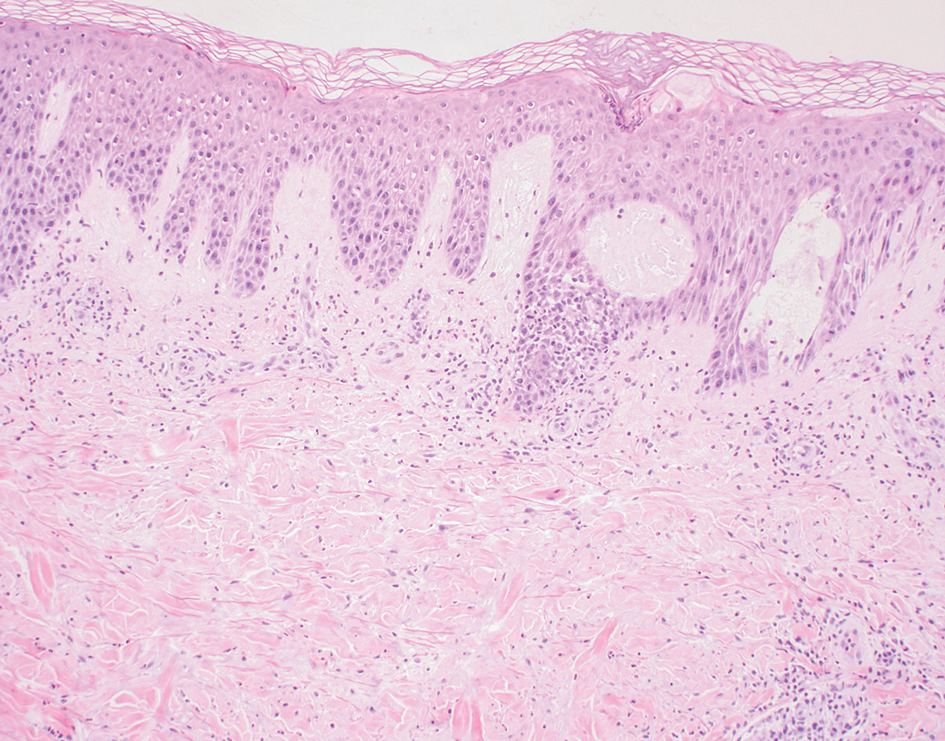

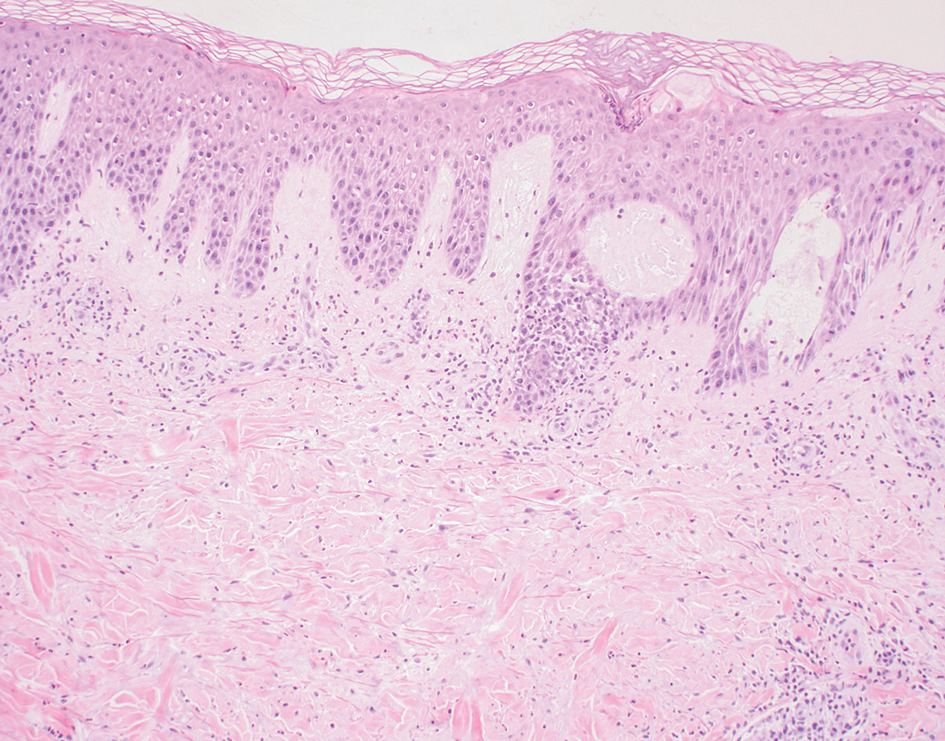

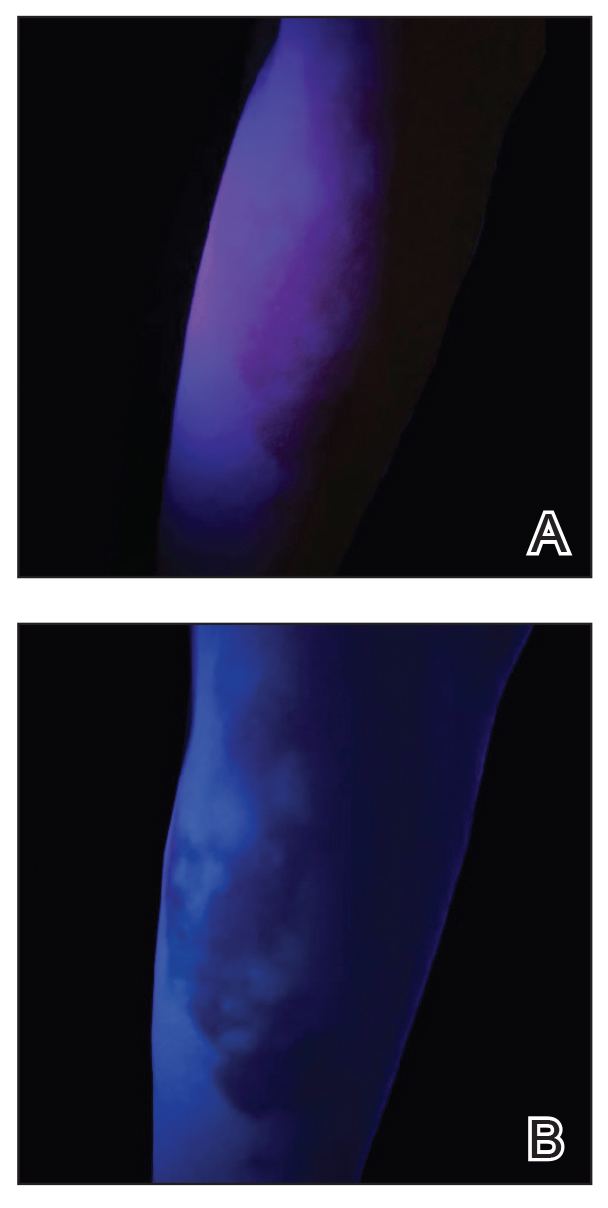

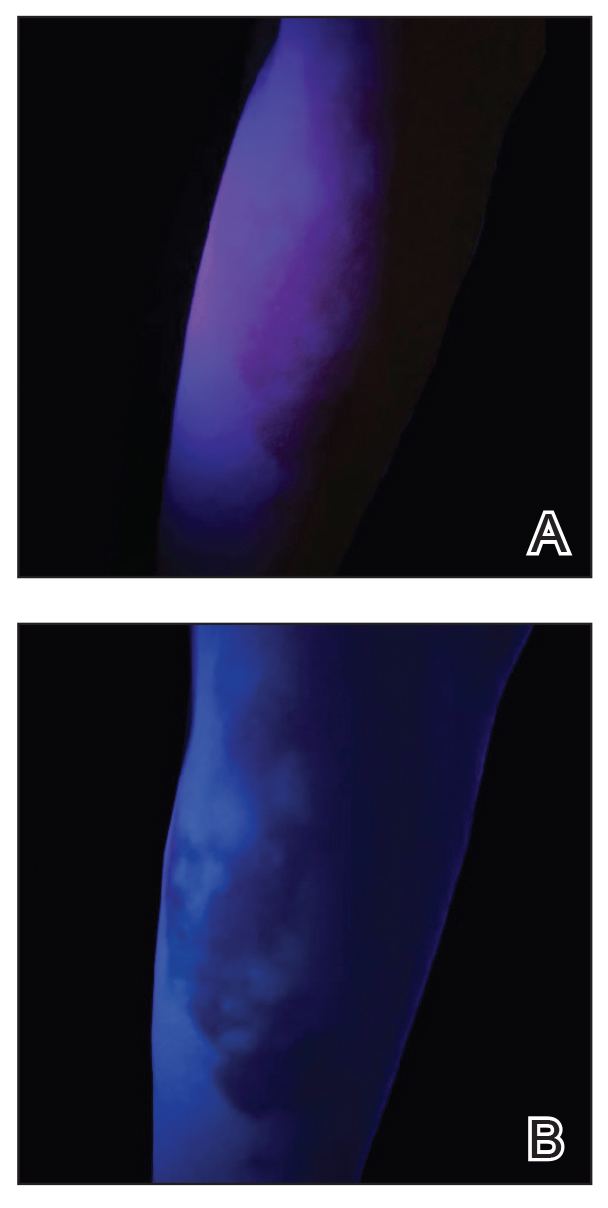

Psoriasis is a chronic relapsing papulosquamous disorder characterized by scaly erythematous plaques often overlying the extensor surfaces of the extremities. Histopathology shows a psoriasiform pattern of inflammation with thinning of the suprapapillary plates and elongation of the rete ridges. Further diagnostic clues of psoriasis include regular acanthosis, characteristic Munro microabscesses with neutrophils in a hyperkeratotic stratum corneum (Figure 1), hypogranulosis, and neutrophilic spongiform pustules of Kogoj in the stratum spinosum. The epidermal necrosis seen in NME generally is absent.7,8

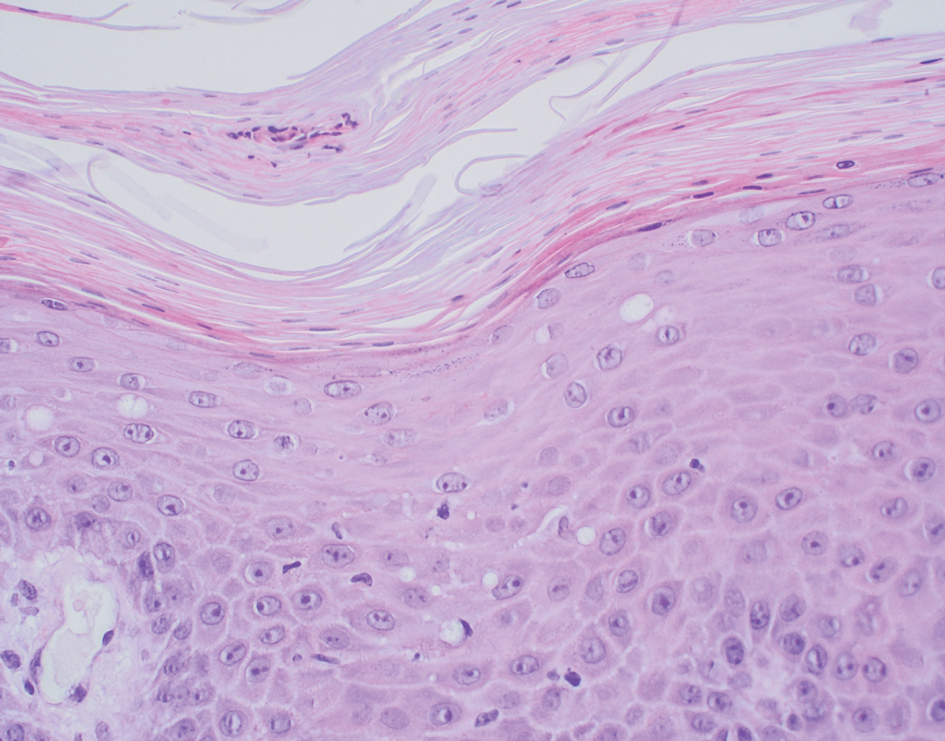

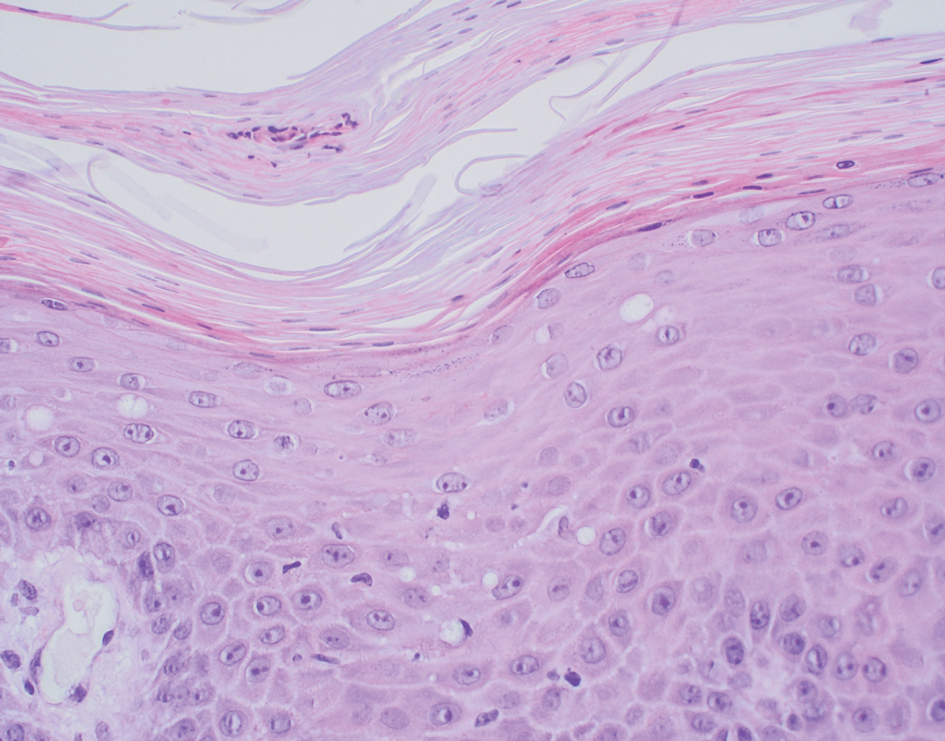

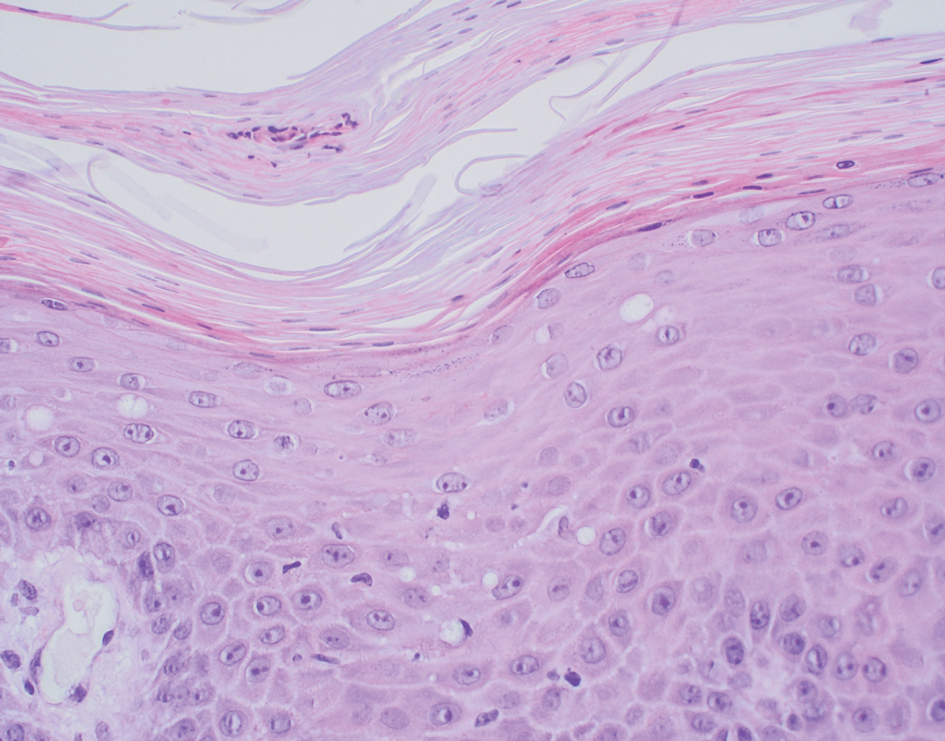

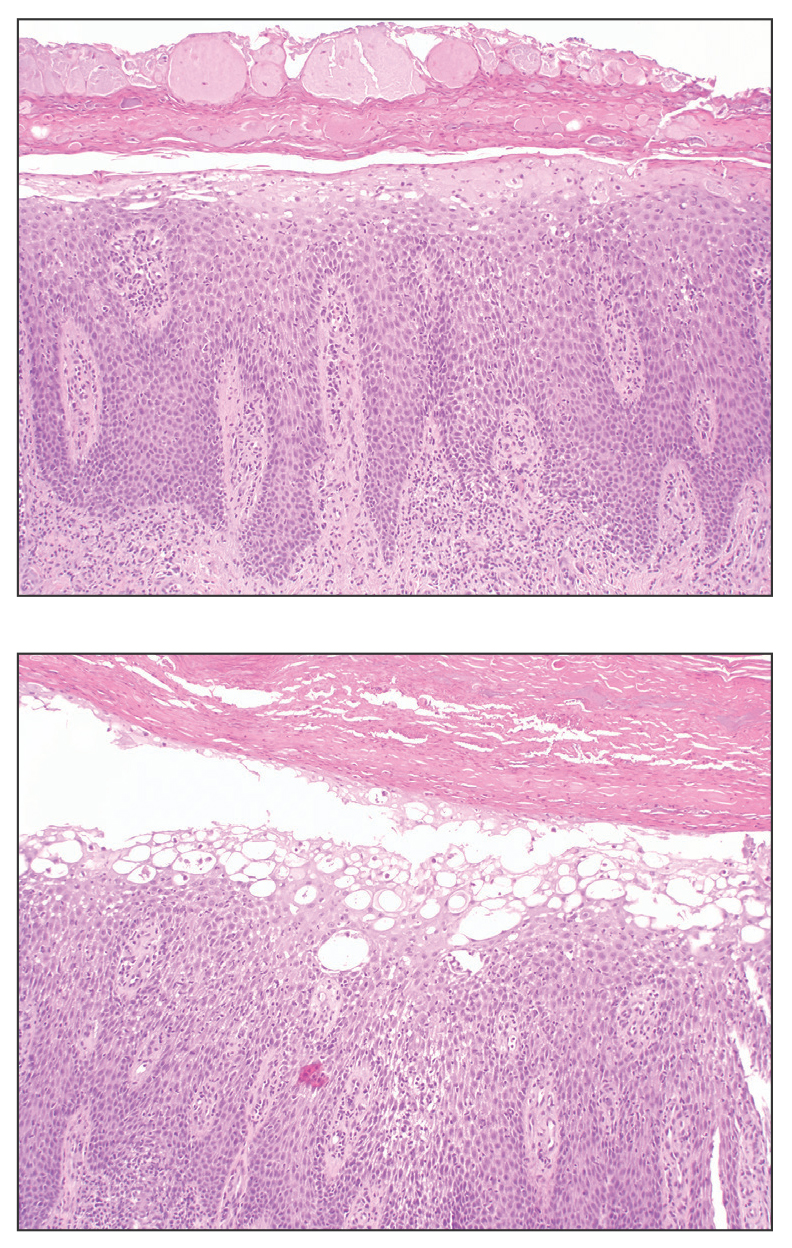

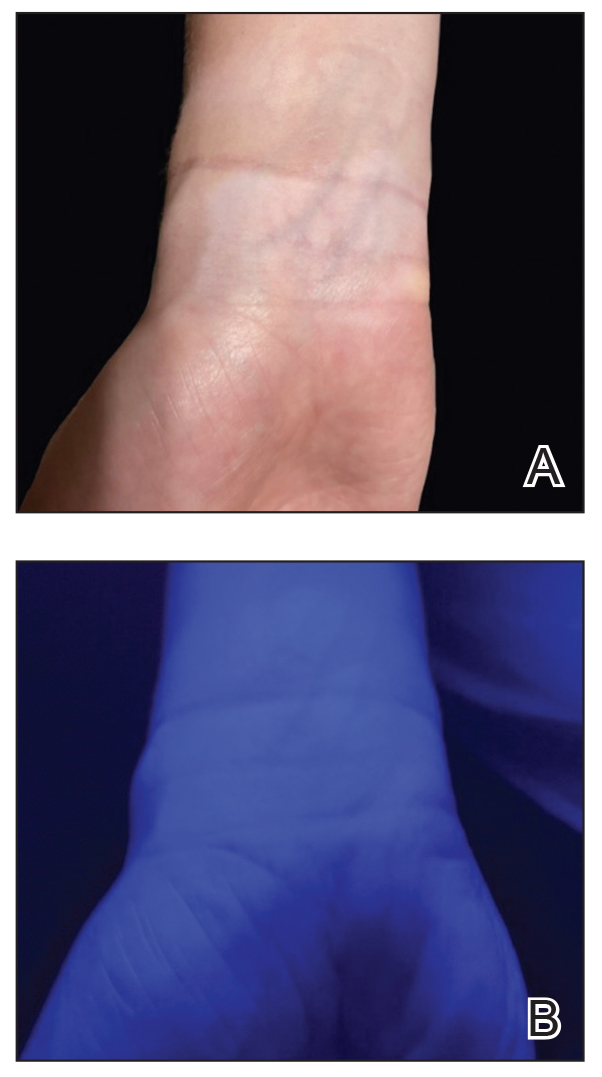

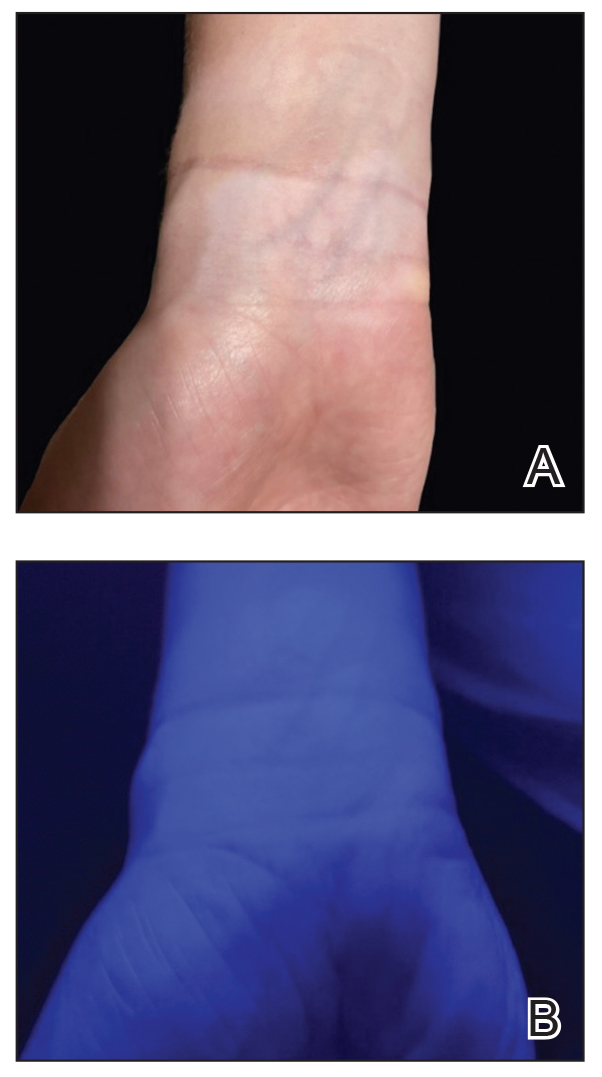

Lichen simplex chronicus manifests as pruritic, often hyperpigmented, well-defined, lichenified plaques with excoriation following repetitive mechanical trauma, commonly on the lower lateral legs, posterior neck, and flexural areas.9 Well-developed lesions show compact orthokeratosis, hypergranulosis, irregularly elongated rete ridges (ie, irregular acanthosis), and papillary dermal fibrosis with vertical streaking of collagen (Figure 2).9,10

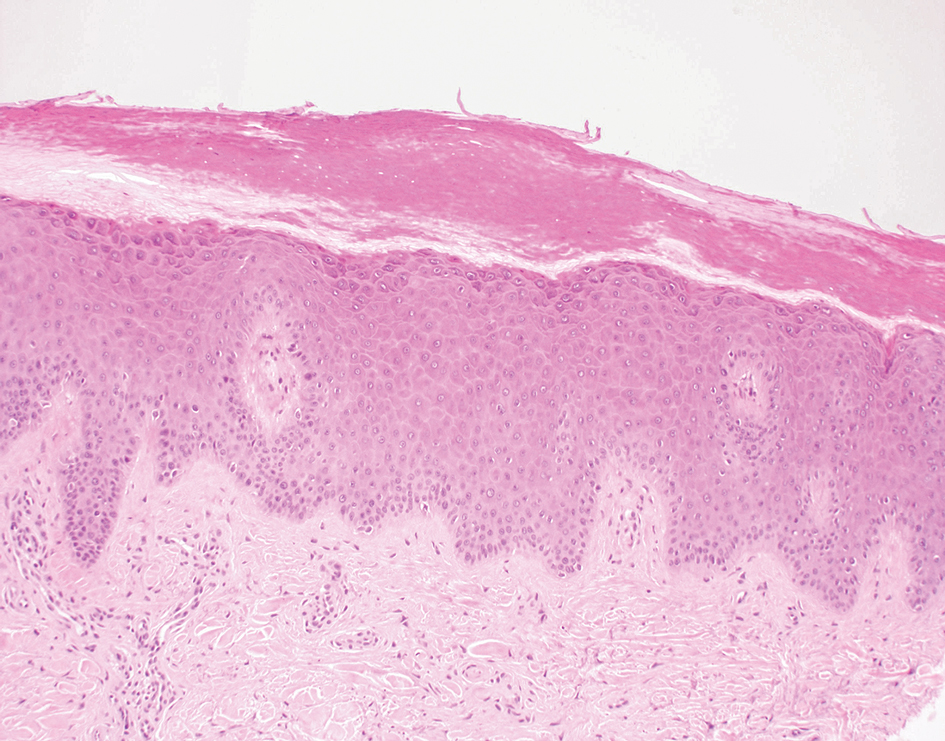

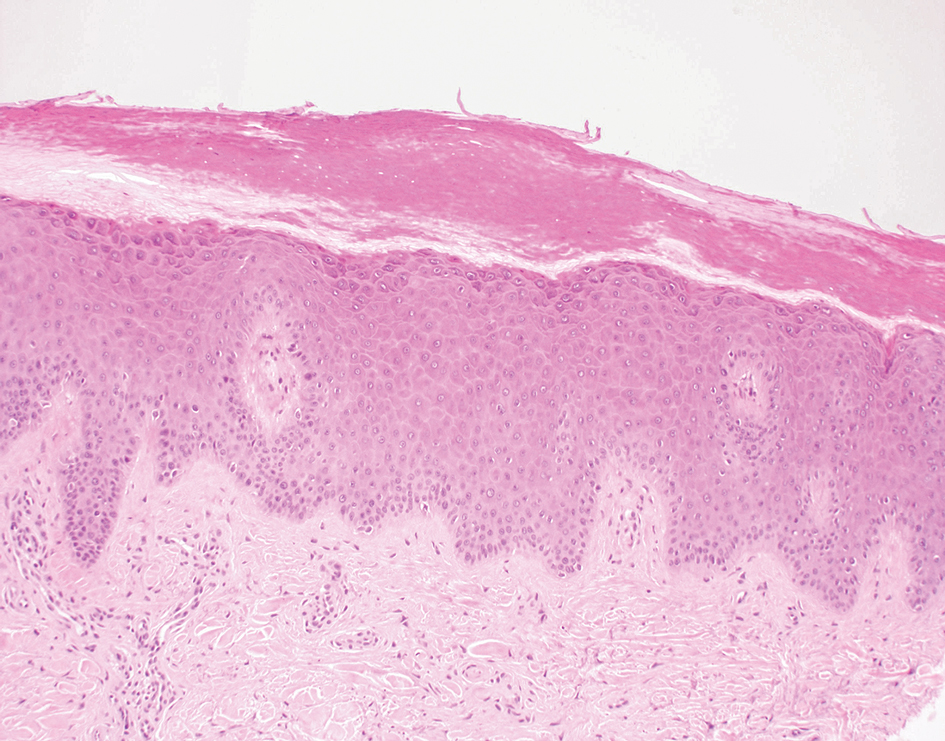

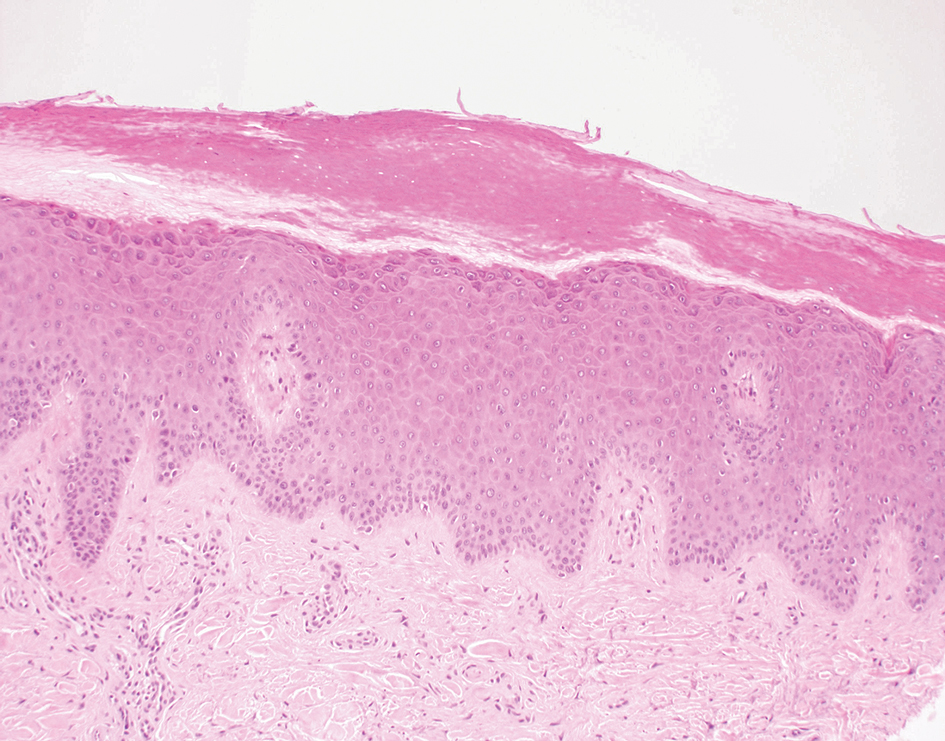

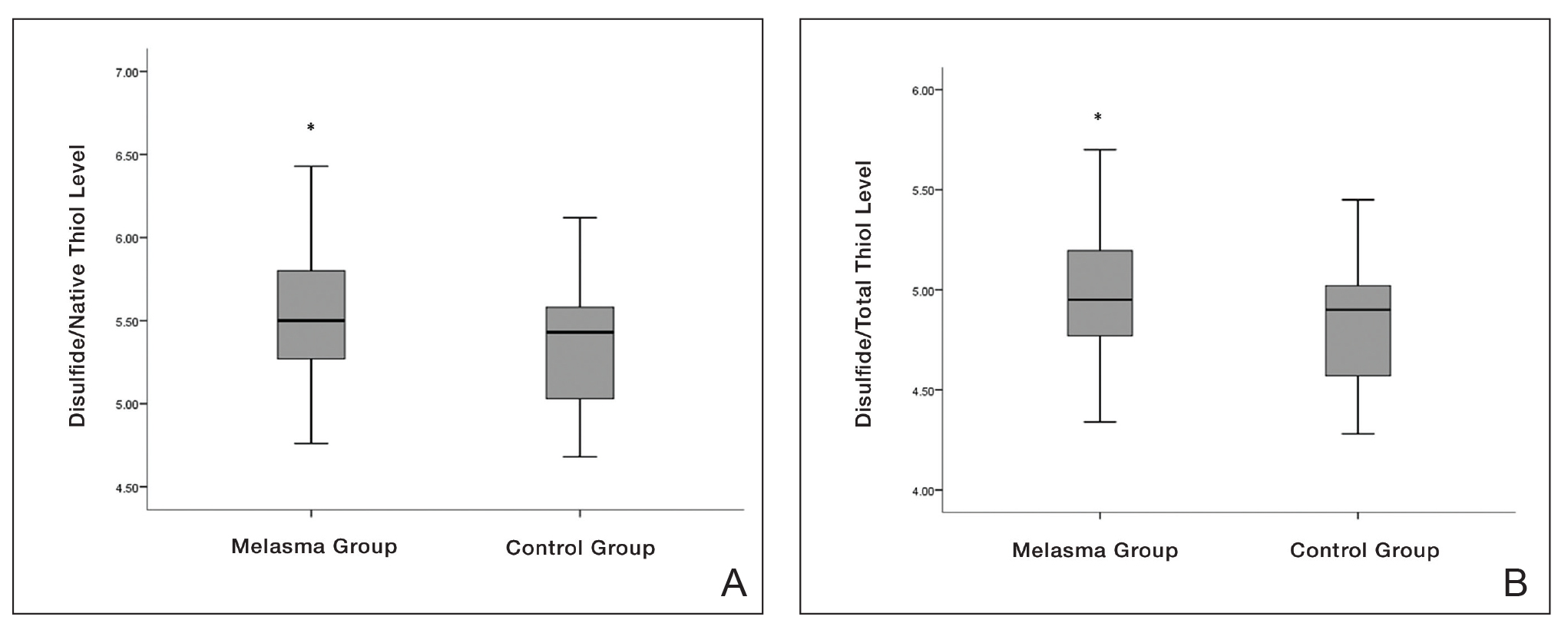

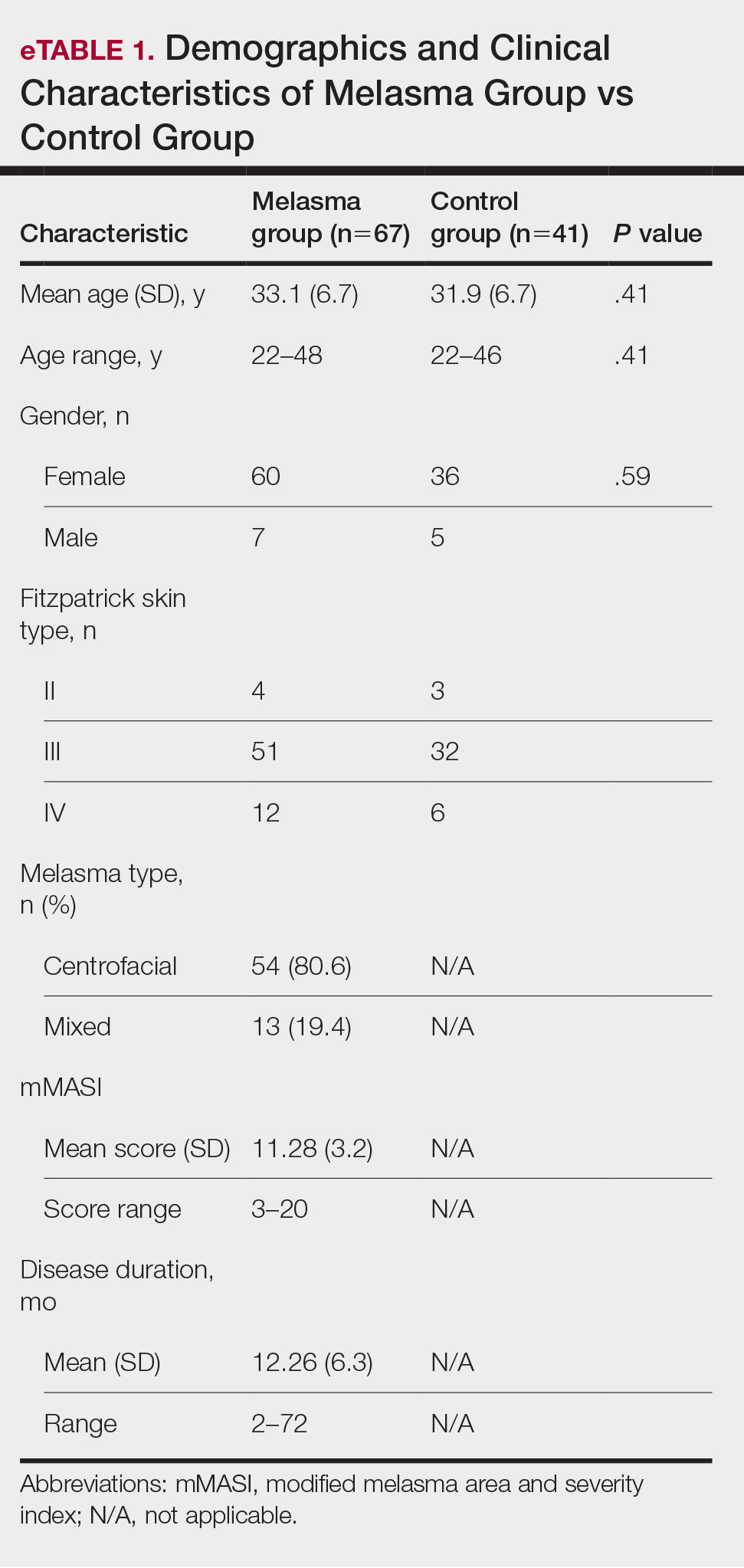

Subacute cutaneous lupus erythematosus (SCLE) is recognized clinically by scaly/psoriasiform and annular lesions with mild or absent systemic involvement. Common histopathologic findings include epidermal atrophy, vacuolar interface dermatitis with hydropic degeneration of the basal layer, a subepidermal lymphocytic infiltrate, and a periadnexal and perivascular infiltrate (Figure 3).11 Upper dermal edema, spotty necrosis of individual cells in the epidermis, dermal-epidermal separation caused by prominent basal cell degeneration, and accumulation of acid mucopolysaccharides (mucin) are other histologic features associated with SCLE.12,13

The immunofluorescence pattern in SCLE features dustlike particles of IgG deposition in the epidermis, subepidermal region, and dermal cellular infiltrate. Lesions also may show granular deposition of immunoreactants at the DEJ.11,13

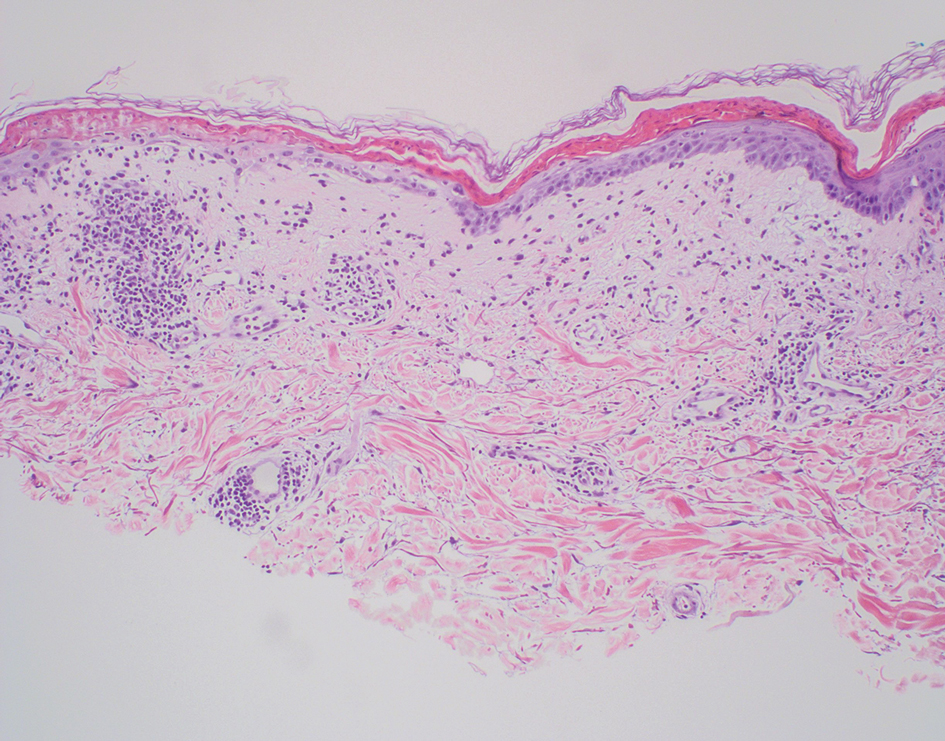

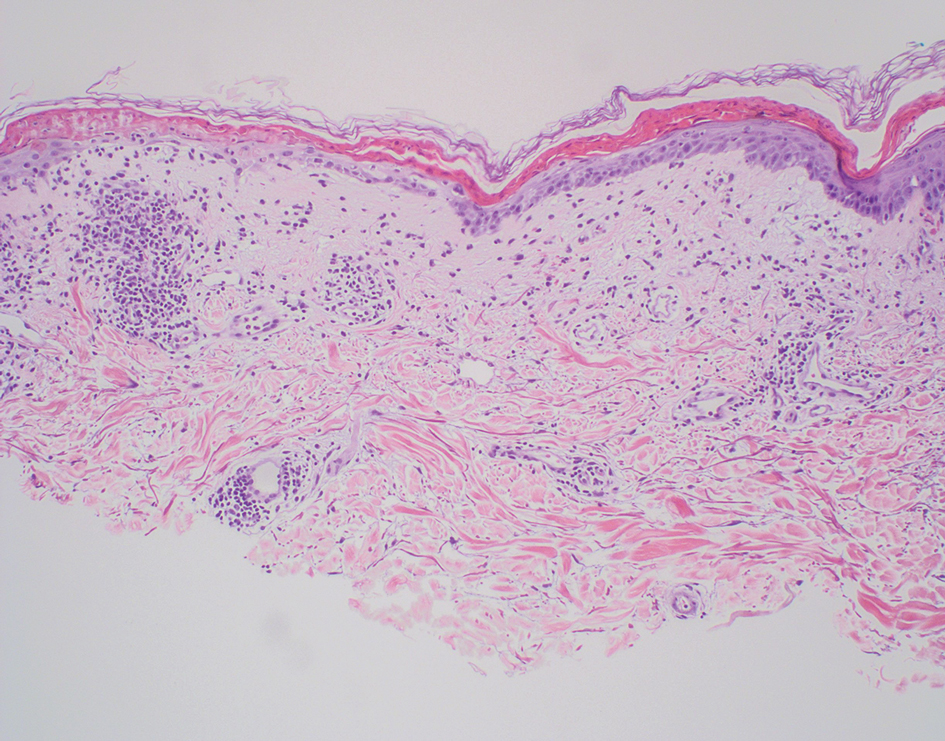

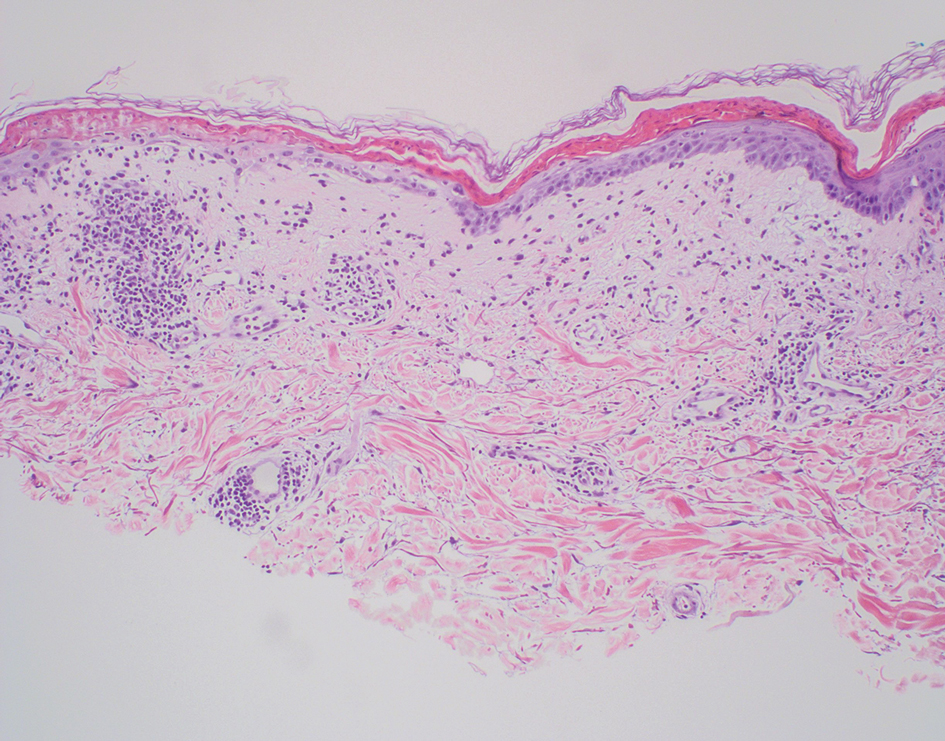

The clinical presentation of drug reaction with eosinophilia and systemic symptoms (DRESS) syndrome (also known as drug-induced hypersensitivity syndrome) is variable and may include a morbilliform rash that spreads from the face to the entire body, urticaria, atypical target lesions, purpuriform lesions, lymphadenopathy, and exfoliative dermatitis.14 These nonspecific cutaneous lesions correspond to variable histologic features, which include focal interface changes with vacuolar alteration of the basal layer; atypical lymphocytes with hyperchromic nuclei; and a superficial, inconsistently dense, perivascular lymphocytic infiltrate. Other relatively common histopathologic patterns include dilated blood vessels in the upper dermis, spongiosis with exocytosis of lymphocytes (Figure 4), and necrotic keratinocytes. Although peripheral eosinophilia is an important diagnostic criterion and is observed consistently, eosinophils are variably present on skin biopsy.15,16 Given the histopathologic variability and nonspecific findings, clinical correlation is required when diagnosing DRESS syndrome.

- Halvorson SA, Gilbert E, Hopkins RS, et al. Putting the pieces together: necrolytic migratory erythema and the glucagonoma syndrome. J Gen Intern Med. 2013;28:1525-1529. doi:10.1007/s11606-013-2490-5

- Toberer F, Hartschuh W, Wiedemeyer K. Glucagonoma-associated necrolytic migratory erythema: the broad spectrum of the clinical and histopathological findings and clues to the diagnosis. Am J Dermatopathol. 2019;41:E29-E32. doi:10.1097/DAD.0000000000001219

- Hunt SJ, Narus VT, Abell E. Necrolytic migratory erythema: dyskeratotic dermatitis, a clue to early diagnosis. J Am Acad Dermatol. 1991;24:473-477. doi:10.1016/0190-9622(91)70076-e

- van Beek AP, de Haas ER, van Vloten WA, et al. The glucagonoma syndrome and necrolytic migratory erythema: a clinical review. Eur J Endocrinol. 2004;151:531-537. doi:10.1530/eje.0.1510531

- Pujol RM, Wang C-Y E, el-Azhary RA, et al. Necrolytic migratory erythema: clinicopathologic study of 13 cases. Int J Dermatol. 2004;43:12-18. doi:10.1111/j.1365-4632.2004.01844.x

- Johnson SM, Smoller BR, Lamps LW, et al. Necrolytic migratory erythema as the only presenting sign of a glucagonoma. J Am Acad Dermatol. 2003;49:325-328. doi:10.1067/s0190-9622(02)61774-8

- De Rosa G, Mignogna C. The histopathology of psoriasis. Reumatismo. 2007;59(suppl 1):46-48. doi:10.4081/reumatismo.2007.1s.46

- Kimmel GW, Lebwohl M. Psoriasis: overview and diagnosis. In: Bhutani T, Liao W, Nakamura M, eds. Evidence-Based Psoriasis. Springer; 2018:1-16. doi:10.1007/978-3-319-90107-7_1

- Balan R, Grigoraș A, Popovici D, et al. The histopathological landscape of the major psoriasiform dermatoses. Arch Clin Cases. 2021;6:59-68. doi:10.22551/2019.24.0603.10155

- O’Keefe RJ, Scurry JP, Dennerstein G, et al. Audit of 114 nonneoplastic vulvar biopsies. Br J Obstet Gynaecol. 1995;102:780-786. doi:10.1111/j.1471-0528.1995.tb10842.x

- Parodi A, Caproni M, Cardinali C, et al. Clinical, histological and immunopathological features of 58 patients with subacute cutaneous lupus erythematosus. Dermatology. 2000;200:6-10. doi:10.1159/000018307

- Lyon CC, Blewitt R, Harrison PV. Subacute cutaneous lupus erythematosus: two cases of delayed diagnosis. Acta Derm Venereol. 1998;78:57-59. doi:10.1080/00015559850135869

- David-Bajar KM. Subacute cutaneous lupus erythematosus. J Invest Dermatol. 1993;100:2S-8S. doi:10.1111/1523-1747.ep12355164

- Paulmann M, Mockenhaupt M. Severe drug-induced skin reactions: clinical features, diagnosis, etiology, and therapy. J Dtsch Dermatol Ges. 2015;13:625-643. doi:10.1111/ddg.12747

- Borroni G, Torti S, Pezzini C, et al. Histopathologic spectrum of drug reaction with eosinophilia and systemic symptoms (DRESS): a diagnosis that needs clinico-pathological correlation. G Ital Dermatol Venereol. 2014;149:291-300.

- Ortonne N, Valeyrie-Allanore L, Bastuji-Garin S, et al. Histopathology of drug rash with eosinophilia and systemic symptoms syndrome: a morphological and phenotypical study. Br J Dermatol. 2015;173:50-58. doi:10.1111/bjd.13683

The Diagnosis: Necrolytic Migratory Erythema

Necrolytic migratory erythema (NME) is a waxing and waning rash associated with rare pancreatic neuroendocrine tumors called glucagonomas. It is characterized by pruritic and painful, well-demarcated, erythematous plaques that manifest in the intertriginous areas and on the perineum and buttocks.1 Due to the evolving nature of the rash, the histopathologic findings in NME vary depending on the stage of the cutaneous lesions at the time of biopsy.2 Multiple dyskeratotic keratinocytes spanning all epidermal layers may be a diagnostic clue in early lesions of NME.3 Typical features of longstanding lesions include confluent parakeratosis, psoriasiform hyperplasia with mild or absent spongiosis, and upper epidermal necrosis with keratinocyte vacuolization and pallor.4 Morphologic features that are present prior to the development of epidermal vacuolation and necrosis frequently are misattributed to psoriasis or eczema. Long-standing lesions also may develop a neutrophilic infiltrate with subcorneal and intraepidermal pustules.2 Other common features include a discrete perivascular lymphocytic infiltrate and an erosive or encrusted epidermis.5 Although direct immunofluorescence typically is negative, nonspecific findings can be seen, including apoptotic keratinocytes labeling with fibrinogen and C3, as well as scattered, clumped, IgM-positive cytoid bodies present at the dermal-epidermal junction (DEJ).6 Biopsies also have shown scattered, clumped, IgM-positive cytoid bodies present at the DEJ.5

Psoriasis is a chronic relapsing papulosquamous disorder characterized by scaly erythematous plaques often overlying the extensor surfaces of the extremities. Histopathology shows a psoriasiform pattern of inflammation with thinning of the suprapapillary plates and elongation of the rete ridges. Further diagnostic clues of psoriasis include regular acanthosis, characteristic Munro microabscesses with neutrophils in a hyperkeratotic stratum corneum (Figure 1), hypogranulosis, and neutrophilic spongiform pustules of Kogoj in the stratum spinosum. Generally, there is a lack of the epidermal necrosis seen with NME.7,8

Lichen simplex chronicus manifests as pruritic, often hyperpigmented, well-defined, lichenified plaques with excoriation following repetitive mechanical trauma, commonly on the lower lateral legs, posterior neck, and flexural areas.9 The histologic landscape is marked by well-developed lesions evolving to show compact orthokeratosis, hypergranulosis, irregularly elongated rete ridges (ie, irregular acanthosis), and papillary dermal fibrosis with vertical streaking of collagen (Figure 2).9,10

Subacute cutaneous lupus erythematosus (SCLE) is recognized clinically by scaly/psoriasiform and annular lesions with mild or absent systemic involvement. Common histopathologic findings include epidermal atrophy, vacuolar interface dermatitis with hydropic degeneration of the basal layer, a subepidermal lymphocytic infiltrate, and a periadnexal and perivascular infiltrate (Figure 3).11 Upper dermal edema, spotty necrosis of individual cells in the epidermis, dermal-epidermal separation caused by prominent basal cell degeneration, and accumulation of acid mucopolysaccharides (mucin) are other histologic features associated with SCLE.12,13

The immunofluorescence pattern in SCLE features dustlike particles of IgG deposition in the epidermis, subepidermal region, and dermal cellular infiltrate. Lesions also may have granular deposition of immunoreactions at the DEJ.11,13

The manifestation of drug reaction with eosinophilia and systemic symptoms (DRESS) syndrome (also known as drug-induced hypersensitivity syndrome) is variable, with a morbilliform rash that spreads from the face to the entire body, urticaria, atypical target lesions, purpuriform lesions, lymphadenopathy, and exfoliative dermatitis.14 The nonspecific morphologic features of DRESS syndrome lesions are associated with variable histologic features, which include focal interface changes with vacuolar alteration of the basal layer; atypical lymphocytes with hyperchromic nuclei; and a superficial, inconsistently dense, perivascular lymphocytic infiltrate. Other relatively common histopathologic patterns include an upper dermis with dilated blood vessels, spongiosis with exocytosis of lymphocytes (Figure 4), and necrotic keratinocytes. Although peripheral eosinophilia is an important diagnostic criterion and is observed consistently, eosinophils are variably present on skin biopsy.15,16 Given the histopathologic variability and nonspecific findings, clinical correlation is required when diagnosing DRESS syndrome.

The Diagnosis: Necrolytic Migratory Erythema

Necrolytic migratory erythema (NME) is a waxing and waning rash associated with rare pancreatic neuroendocrine tumors called glucagonomas. It is characterized by pruritic and painful, well-demarcated, erythematous plaques that manifest in the intertriginous areas and on the perineum and buttocks.1 Due to the evolving nature of the rash, the histopathologic findings in NME vary depending on the stage of the cutaneous lesions at the time of biopsy.2 Multiple dyskeratotic keratinocytes spanning all epidermal layers may be a diagnostic clue in early lesions of NME.3 Typical features of longstanding lesions include confluent parakeratosis, psoriasiform hyperplasia with mild or absent spongiosis, and upper epidermal necrosis with keratinocyte vacuolization and pallor.4 Morphologic features that are present prior to the development of epidermal vacuolation and necrosis frequently are misattributed to psoriasis or eczema. Long-standing lesions also may develop a neutrophilic infiltrate with subcorneal and intraepidermal pustules.2 Other common features include a discrete perivascular lymphocytic infiltrate and an erosive or encrusted epidermis.5 Although direct immunofluorescence typically is negative, nonspecific findings can be seen, including apoptotic keratinocytes labeling with fibrinogen and C3, as well as scattered, clumped, IgM-positive cytoid bodies present at the dermal-epidermal junction (DEJ).6 Biopsies also have shown scattered, clumped, IgM-positive cytoid bodies present at the DEJ.5

Psoriasis is a chronic relapsing papulosquamous disorder characterized by scaly erythematous plaques, often on the extensor surfaces of the extremities. Histopathology shows a psoriasiform pattern of inflammation with thinning of the suprapapillary plates and elongation of the rete ridges. Further diagnostic clues include regular acanthosis, characteristic Munro microabscesses with neutrophils in a hyperkeratotic stratum corneum (Figure 1), hypogranulosis, and neutrophilic spongiform pustules of Kogoj in the stratum spinosum. The epidermal necrosis seen in NME generally is absent.7,8

Lichen simplex chronicus manifests as pruritic, often hyperpigmented, well-defined, lichenified plaques with excoriation that develop after repetitive mechanical trauma, commonly on the lower lateral legs, posterior neck, and flexural areas.9 Well-developed lesions show compact orthokeratosis, hypergranulosis, irregularly elongated rete ridges (ie, irregular acanthosis), and papillary dermal fibrosis with vertical streaking of collagen (Figure 2).9,10

Subacute cutaneous lupus erythematosus (SCLE) is recognized clinically by scaly psoriasiform or annular lesions with mild or absent systemic involvement. Common histopathologic findings include epidermal atrophy; vacuolar interface dermatitis with hydropic degeneration of the basal layer; a subepidermal lymphocytic infiltrate; and a periadnexal and perivascular infiltrate (Figure 3).11 Other histologic features associated with SCLE include upper dermal edema, spotty necrosis of individual epidermal cells, dermal-epidermal separation caused by prominent basal cell degeneration, and accumulation of acid mucopolysaccharides (mucin).12,13

The immunofluorescence pattern in SCLE features dustlike particles of IgG deposited in the epidermis, subepidermal region, and dermal cellular infiltrate. Lesions also may show granular deposition of immunoreactants at the DEJ.11,13

The manifestation of drug reaction with eosinophilia and systemic symptoms (DRESS) syndrome (also known as drug-induced hypersensitivity syndrome) is variable and includes a morbilliform rash that spreads from the face to the entire body, urticaria, atypical target lesions, purpuriform lesions, lymphadenopathy, and exfoliative dermatitis.14 The nonspecific morphologic features of DRESS syndrome lesions are associated with variable histologic features, including focal interface changes with vacuolar alteration of the basal layer; atypical lymphocytes with hyperchromatic nuclei; and a superficial, inconsistently dense, perivascular lymphocytic infiltrate. Other relatively common histopathologic patterns include dilated blood vessels in the upper dermis, spongiosis with exocytosis of lymphocytes (Figure 4), and necrotic keratinocytes. Although peripheral eosinophilia is an important diagnostic criterion and consistently is observed, eosinophils are variably present on skin biopsy.15,16 Given the histopathologic variability and nonspecific findings, clinical correlation is required when diagnosing DRESS syndrome.

- Halvorson SA, Gilbert E, Hopkins RS, et al. Putting the pieces together: necrolytic migratory erythema and the glucagonoma syndrome. J Gen Intern Med. 2013;28:1525-1529. doi:10.1007/s11606-013-2490-5

- Toberer F, Hartschuh W, Wiedemeyer K. Glucagonoma-associated necrolytic migratory erythema: the broad spectrum of the clinical and histopathological findings and clues to the diagnosis. Am J Dermatopathol. 2019;41:E29-E32. doi:10.1097/DAD.0000000000001219

- Hunt SJ, Narus VT, Abell E. Necrolytic migratory erythema: dyskeratotic dermatitis, a clue to early diagnosis. J Am Acad Dermatol. 1991;24:473-477. doi:10.1016/0190-9622(91)70076-e

- van Beek AP, de Haas ER, van Vloten WA, et al. The glucagonoma syndrome and necrolytic migratory erythema: a clinical review. Eur J Endocrinol. 2004;151:531-537. doi:10.1530/eje.0.1510531

- Pujol RM, Wang C-Y E, el-Azhary RA, et al. Necrolytic migratory erythema: clinicopathologic study of 13 cases. Int J Dermatol. 2004;43:12-18. doi:10.1111/j.1365-4632.2004.01844.x

- Johnson SM, Smoller BR, Lamps LW, et al. Necrolytic migratory erythema as the only presenting sign of a glucagonoma. J Am Acad Dermatol. 2003;49:325-328. doi:10.1067/s0190-9622(02)61774-8

- De Rosa G, Mignogna C. The histopathology of psoriasis. Reumatismo. 2007;59(suppl 1):46-48. doi:10.4081/reumatismo.2007.1s.46

- Kimmel GW, Lebwohl M. Psoriasis: overview and diagnosis. In: Bhutani T, Liao W, Nakamura M, eds. Evidence-Based Psoriasis. Springer; 2018:1-16. doi:10.1007/978-3-319-90107-7_1

- Balan R, Grigoraş A, Popovici D, et al. The histopathological landscape of the major psoriasiform dermatoses. Arch Clin Cases. 2021;6:59-68. doi:10.22551/2019.24.0603.10155

- O’Keefe RJ, Scurry JP, Dennerstein G, et al. Audit of 114 nonneoplastic vulvar biopsies. Br J Obstet Gynaecol. 1995;102:780-786. doi:10.1111/j.1471-0528.1995.tb10842.x

- Parodi A, Caproni M, Cardinali C, et al. Clinical, histological and immunopathological features of 58 patients with subacute cutaneous lupus erythematosus. Dermatology. 2000;200:6-10. doi:10.1159/000018307

- Lyon CC, Blewitt R, Harrison PV. Subacute cutaneous lupus erythematosus: two cases of delayed diagnosis. Acta Derm Venereol. 1998;78:57-59. doi:10.1080/00015559850135869

- David-Bajar KM. Subacute cutaneous lupus erythematosus. J Invest Dermatol. 1993;100:2S-8S. doi:10.1111/1523-1747.ep12355164

- Paulmann M, Mockenhaupt M. Severe drug-induced skin reactions: clinical features, diagnosis, etiology, and therapy. J Dtsch Dermatol Ges. 2015;13:625-643. doi:10.1111/ddg.12747

- Borroni G, Torti S, Pezzini C, et al. Histopathologic spectrum of drug reaction with eosinophilia and systemic symptoms (DRESS): a diagnosis that needs clinico-pathological correlation. G Ital Dermatol Venereol. 2014;149:291-300.

- Ortonne N, Valeyrie-Allanore L, Bastuji-Garin S, et al. Histopathology of drug rash with eosinophilia and systemic symptoms syndrome: a morphological and phenotypical study. Br J Dermatol. 2015;173:50-58. doi:10.1111/bjd.13683

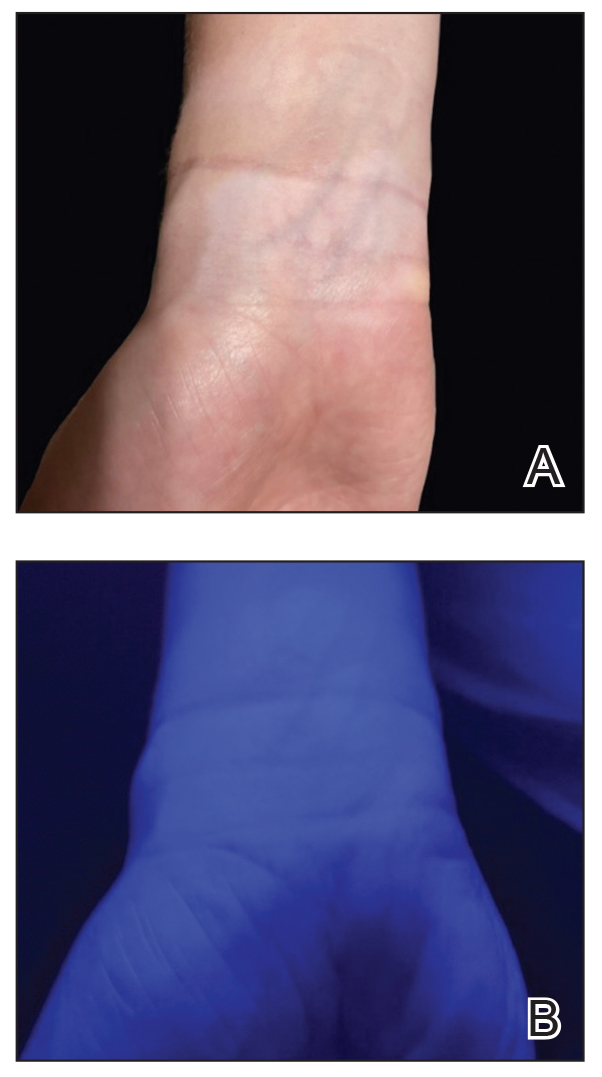

A 62-year-old man presented with an erythematous flaky rash associated with burning pain on the right medial second toe that persisted for several months. Prior treatment with econazole, ciclopirox, and oral amoxicillin had failed. A shave biopsy was performed.

Latest Breakthroughs in Molluscum Contagiosum Therapy

Molluscum contagiosum (ie, molluscum) is a ubiquitous infection caused by the poxvirus molluscum contagiosum virus (MCV). Although confined to the skin, molluscum shares many features with more virulent members of the Poxviridae family. Moisture and trauma can release viral material from the pearly papules through a small opening, which also allows bacteria and medications to enter the lesion. MCV is transmitted by direct skin contact or via fomites.1

Molluscum can affect children of any age, with MCV type 1 infection peaking in toddlers and school-aged children and MCV type 2 infection peaking after sexual debut. The prevalence of molluscum has increased since the 1980s. The infection is stressful for children and caregivers and poses challenges in schools as well as in sports such as swimming, wrestling, and karate.1,2

For the first time, we have US Food and Drug Administration (FDA)–approved products to treat MCV infections; previously, only off-label agents were available. With approved options now in hand, it is worth reconsidering why treatment matters to our patients.

What type of care is required for molluscum?

Counseling is the first, and the only mandatory, component of care and consists of 3 parts: natural history, risk factors for spread, and options for therapy. In children, the natural history of molluscum includes early spread, contagion to oneself and others (as high as 60% among sibling co-bathers3), triggering of dermatitis, onset of the beginning-of-the-end (BOTE) sign, and eventual clearance. The natural history in adults is poorly understood.

Early clearance is uncommon; reports suggest that 45.6% to 48.4% of affected patients are clear at 1 year and 69.5% to 72.6% at 1.5 years.4 For many children, especially those with atopic dermatitis (AD), lesions linger and often spread, and many experience disease for 3 to 4 years. Fomites such as towels, washcloths, and sponges can transfer the virus and spread lesions; therefore, I advise patients to gently pat their skin dry, wash towels frequently, and avoid sharing bathing equipment.1,3,5 Children and adults with immunosuppression, including those with HIV or with DOCK8 or CARD11 mutations, may have more lesions and a more prolonged disease course.6 The American Academy of Pediatrics (AAP) emphasizes that children should not be excluded from child care, school, or public swimming pools, but that lesions should be covered.6 Lesions, especially those in the antecubital region, can trigger new-onset AD or AD flares.3 Gentle skin care, including fragrance-free cleansers and periodic application of moisturizers, may help ward off AD; for treating the dermatitis itself, topical corticosteroids are preferred.

Dermatitis associated with MCV infection is a great mimicker and can resemble erythema multiforme, Gianotti-Crosti syndrome, impetigo, and AD.1 Superinfection recently has been reported; however, in a retrospective analysis of 56 patients with inflamed lesions secondary to molluscum infection, only 7 had positive bacterial cultures, suggesting that the swelling and redness of inflammation often mimic infection.7 When true infection does occur, tender, swollen, pus-filled lesions should be lanced and cultured.1,7,8

When should we consider therapy?

The decision to treat depends heavily on the child, the caregiver, and the social circumstances.1 More than 80% of parents are anxious about molluscum, and many children are embarrassed or ashamed.1 Ultimately, an unhappy child merits care. The AAP cites the following reasons to treat: “(1) alleviate discomfort, including itching; (2) reduce autoinoculation; (3) limit transmission of the virus to close contacts; (4) reduce cosmetic concerns; and (5) prevent secondary infection.”6 In adults, additional considerations include limitations on intimacy and the need to reduce the risk for sexual transmission.6

Treatment decisions also can be based on the number of lesions. With only a few lesions (<3), therapy is worthwhile if they are unsightly; appear on exposed skin, causing embarrassment; and/or are itchy, uncomfortable, or large. In a report of 300 children with molluscum treated with cantharidin, most patients choosing therapy had 10 to 20 lesions, but this was over multiple visits.8 In a 2018 data set of 50 patients (all-comers) with molluscum,3 the mean number of lesions was 10 (median, 7); 3 lesions were 1 SD below the mean, while 14, 17, and 45 lesions were 1, 2, and 3 SDs above it, respectively. These data show that patients can develop more lesions rapidly and that most children have many visible lesions (N.B. Silverberg, MD, unpublished data).

Because each lesion contains infectious viral particles and patients scratch, more lesions mean greater autoinoculation and contagion. In addition to the AAP criteria, treatment can be considered for households with immunocompromised individuals, for children at risk for new-onset AD, and for patients with AD who are at risk for flare. For patients with 45 or more lesions (3 SDs above the mean), clearance is harder to achieve with 2 sessions of in-office therapy, and combining methods or adding immunomodulatory therapeutics should be considered.

Do we have to clear every lesion?

New molluscum lesions may arise until a patient achieves immunity and may appear more than a month after inoculation, making it difficult to keep up with their spread. The latency between exposure and lesion development usually is 2 to 7 weeks but may be as long as 6 months, which further complicates efforts to prevent spread.6 Therefore, when we treat, we should not promise full clearance to patients and parents; rather, we should explain that new lesions may develop later and that therapy is effective only on visible lesions. In a recent study, 50% lesion clearance was the threshold at which parents were satisfied, demonstrating that satisfaction is possible with partial clearance.9

What is new in therapeutics for molluscum?

Molluscum therapies are either destructive, immunomodulatory, or antiviral. Two agents now are approved by the FDA for the treatment of molluscum infections.

Berdazimer gel 10.3% is approved for patients 1 year or older, but it is not yet available. This agent has both immunomodulatory and antiviral properties.10 It is a home therapy: the gel is mixed on a small palette and then painted on by the patient or parent once daily for 12 weeks. Study outcomes demonstrated more than 50% lesional clearance,11,12 and complete clearance was achieved in at least 30% of patients.12

A proprietary topical formulation of cantharidin 0.7% in flexible collodion is now FDA approved for patients 2 years and older. This vesicant is designed to create blisters overlying molluscum lesions. It is conceptually similar to older cantharidin preparations but has some enhanced features.5,13,14 In clinical trials, it was applied every 3 weeks for up to 4 sessions. Safety was similar across all treated body sites (nonmucosal skin not near mucosal surfaces), but the product is not intended for the mucosa, mid face, or eyelids.13 Complete lesion clearance was 46.3% to 54%, significantly greater than with placebo (P<.001).14

Both agents are well tolerated in children with AD; adverse effects include blistering with cantharidin and dermatitislike symptoms with berdazimer.15,16 These therapies also have the advantage of being easy to use.

Final Thoughts

We have entered an era of high-quality molluscum therapy. Patient care involves developing a good knowledge of the agents, incorporating shared decision-making with patients and caregivers, and addressing therapy in the context of comorbid diseases such as AD.

- Silverberg NB. Pediatric molluscum: an update. Cutis. 2019;104:301-305, E1-E2.

- Thompson AJ, Matinpour K, Hardin J, et al. Molluscum gladiatorum. Dermatol Online J. 2014;20:13030/qt0nj121n1.

- Silverberg NB. Molluscum contagiosum virus infection can trigger atopic dermatitis disease onset or flare. Cutis. 2018;102:191-194.

- Basdag H, Rainer BM, Cohen BA. Molluscum contagiosum: to treat or not to treat? experience with 170 children in an outpatient clinic setting in the northeastern United States. Pediatr Dermatol. 2015;32:353-357. doi:10.1111/pde.12504

- Silverberg NB. Warts and molluscum in children. Adv Dermatol. 2004;20:23-73.

- Molluscum contagiosum. In: Kimberlin DW, Lynfield R, Barnett ED, et al, eds. Red Book: 2021–2024 Report of the Committee on Infectious Diseases. 32nd ed. American Academy of Pediatrics; May 26, 2021. Accessed May 20, 2024. https://publications.aap.org/redbook/book/347/chapter/5754264/Molluscum-Contagiosum

- Gross I, Ben Nachum N, Molho-Pessach V, et al. The molluscum contagiosum BOTE sign—infected or inflamed? Pediatr Dermatol. 2020;37:476-479. doi:10.1111/pde.14124

- Silverberg NB, Sidbury R, Mancini AJ. Childhood molluscum contagiosum: experience with cantharidin therapy in 300 patients. J Am Acad Dermatol. 2000;43:503-507. doi:10.1067/mjd.2000.106370

- Maeda-Chubachi T, McLeod L, Enloe C, et al. Defining clinically meaningful improvement in molluscum contagiosum. J Am Acad Dermatol. 2024;90:443-445. doi:10.1016/j.jaad.2023.10.033

- Guttman-Yassky E, Gallo RL, Pavel AB, et al. A nitric oxide-releasing topical medication as a potential treatment option for atopic dermatitis through antimicrobial and anti-inflammatory activity. J Invest Dermatol. 2020;140:2531-2535.e2. doi:10.1016/j.jid.2020.04.013

- Browning JC, Cartwright M, Thorla I Jr, et al. A patient-centered perspective of molluscum contagiosum as reported by B-SIMPLE4 Clinical Trial patients and caregivers: Global Impression of Change and Exit Interview substudy results. Am J Clin Dermatol. 2023;24:119-133. doi:10.1007/s40257-022-00733-9

- Sugarman JL, Hebert A, Browning JC, et al. Berdazimer gel for molluscum contagiosum: an integrated analysis of 3 randomized controlled trials. J Am Acad Dermatol. 2024;90:299-308. doi:10.1016/j.jaad.2023.09.066

- Eichenfield LF, Kwong P, Gonzalez ME, et al. Safety and efficacy of VP-102 (cantharidin, 0.7% w/v) in molluscum contagiosum by body region: post hoc pooled analyses from two phase III randomized trials. J Clin Aesthet Dermatol. 2021;14:42-47.

- Eichenfield LF, McFalda W, Brabec B, et al. Safety and efficacy of VP-102, a proprietary, drug-device combination product containing cantharidin, 0.7% (w/v), in children and adults with molluscum contagiosum: two phase 3 randomized clinical trials. JAMA Dermatol. 2020;156:1315-1323. doi:10.1001/jamadermatol.2020.3238

- Paller AS, Green LJ, Silverberg N, et al. Berdazimer gel for molluscum contagiosum in patients with atopic dermatitis. Pediatr Dermatol. Published online February 27, 2024. doi:10.1111/pde.15575

- Eichenfield L, Hebert A, Mancini A, et al. Therapeutic approaches and special considerations for treating molluscum contagiosum. J Drugs Dermatol. 2021;20:1185-1190. doi:10.36849/jdd.6383

Molluscum contagiosum (ie, molluscum) is a ubiquitous infection caused by the poxvirus molluscum contagiosum virus (MCV). Although skin deep, molluscum shares many factors with the more virulent poxviridae. Moisture and trauma can cause viral material to be released from the pearly papules through a small opening, which also allows entry of bacteria and medications into the lesion. The MCV is transmitted by direct contact with skin or via fomites.1

Molluscum can affect children of any age, with MCV type 1 peaking in toddlers and school-aged children and MCV type 2 after the sexual debut. The prevalence of molluscum has increased since the 1980s. It is stressful for children and caregivers and poses challenges in schools as well as sports such as swimming, wrestling, and karate.1,2

For the first time, we have US Food and Drug Administration (FDA)–approved products to treat MCV infections. Previously, only off-label agents were used. Therefore, we have to contemplate why treatment is important to our patients.

What type of care is required for molluscum?

Counseling is the first and only mandatory treatment, which consists of 3 parts: natural history, risk factors for spread, and options for therapy. The natural history of molluscum in children is early spread, contagion to oneself and others (as high as 60% of sibling co-bathers3), triggering of dermatitis, eventual onset of the beginning-of-the-end (BOTE) sign, and eventually clearance. The natural history in adults is poorly understood.

Early clearance is uncommon; reports have suggested 45.6% to 48.4% of affected patients are clear at 1 year and 69.5% to 72.6% at 1.5 years.4 For many children, especially those with atopic dermatitis (AD), lesions linger and often spread, with many experiencing disease for 3 to 4 years. Fomites such as towels, washcloths, and sponges can transfer the virus and spread lesions; therefore, I advise patients to gently pat their skin dry, wash towels frequently, and avoid sharing bathing equipment.1,3,5 Children and adults with immunosuppression may have a greater number of lesions and more prolonged course of disease, including those with HIV as well as DOC8 and CARD11 mutations.6 The American Academy of Pediatrics (AAP) emphasizes that children should not be excluded from attending child care/school or from swimming in public pools but lesions should be covered.6 Lesions, especially those in the antecubital region, can trigger new-onset AD or AD flares.3 In response, gentle skin care including fragrance-free cleansers and periodic application of moisturizers may ward off AD. Topical corticosteroids are preferred.

Dermatitis in MCV is a great mimicker and can resemble erythema multiforme, Gianotti-Crosti syndrome, impetigo, and AD.1 Superinfection recently has been reported; however, in a retrospective analysis of 56 patients with inflamed lesions secondary to molluscum infection, only 7 had positive bacterial cultures, which supports the idea of the swelling and redness of inflammation as a mimic for infection.7 When true infection does occur, tender, swollen, pus-filled lesions should be lanced and cultured.1,7,8

When should we consider therapy?

Therapy is highly dependent on the child, the caregiver, and the social circumstances.1 More than 80% of parents are anxious about molluscum, and countless children are embarrassed or ashamed.1 Ultimately, an unhappy child merits care. The AAP cites the following as reasons to treat: “(1) alleviate discomfort, including itching; (2) reduce autoinoculation; (3) limit transmission of the virus to close contacts; (4) reduce cosmetic concerns; and (5) prevent secondary infection.”6 For adults, we should consider limitations to intimacy and reduction of sexual transmission risk.6

Treatment can be based on the number of lesions. With a few lesions (<3), therapy is worthwhile if they are unsightly; appear on exposed skin causing embarrassment; and/or are itchy, uncomfortable, or large. In a report of 300 children with molluscum treated with cantharidin, most patients choosing therapy had 10 to 20 lesions, but this was over multiple visits.8 Looking at a 2018 data set of 50 patients (all-comers) with molluscum,3 the mean number of lesions was 10 (median, 7); 3 lesions were 1 SD below, while 14, 17, and 45 were 1, 2, and 3 SDs above, respectively. This data set shows that patients can develop more lesions rapidly, and most children have many visible lesions (N.B. Silverberg, MD, unpublished data).

Because each lesion contains infectious viral particles and patients scratch, more lesions are equated to greater autoinoculation and contagion. In addition to the AAP criteria, treatment can be considered for households with immunocompromised individuals, children at risk for new-onset AD, or those with AD at risk for flare. For patients with 45 lesions or more (3 SDs), clearance is harder to achieve with 2 sessions of in-office therapy, and multiple methods or the addition of immunomodulatory therapeutics should be considered.

Do we have to clear every lesion?

New molluscum lesions may arise until a patient achieves immunity, and they may appear more than a month after inoculation, making it difficult to keep up with the rapid spread. Latency between exposure and lesion development usually is 2 to 7 weeks but may be as long as 6 months, making it difficult to prevent spread.6 Therefore, when we treat, we should not promise full clearance to patients and parents. Rather, we should inform them that new lesions may develop later, and therapy is only effective on visible lesions. In a recent study, a 50% clearance of lesions was the satisfactory threshold for parents, demonstrating that satisfaction is possible with partial clearance.9

What is new in therapeutics for molluscum?

Molluscum therapies are either destructive, immunomodulatory, or antiviral. Two agents now are approved by the FDA for the treatment of molluscum infections.

Berdazimer gel 10.3% is approved for patients 1 year or older, but it is not yet available. This agent has both immunomodulatory and antiviral properties.10 It features a home therapy that is mixed on a small palette, then painted on by the patient or parent once daily for 12 weeks. Study outcomes demonstrated more than 50% lesional clearance.11,12 Complete clearance was achieved in at least 30% of patients.12A proprietary topical version of cantharidin 0.7% in flexible collodion is now FDA approved for patients 2 years and older. This vesicant-triggering iatrogenic is targeted at creating blisters overlying molluscum lesions. It is conceptually similar to older versions but with some enhanced features.5,13,14 This version was used for therapy every 3 weeks for up to 4 sessions in clinical trials. Safety is similar across all body sites treated (nonmucosal and not near the mucosal surfaces) but not for mucosa, the mid face, or eyelids.13 Complete lesion clearance was 46.3% to 54% and statistically greater than placebo (P<.001).14Both agents are well tolerated in children with AD; adverse effects include blistering with cantharidin and dermatitislike symptoms with berdazimer.15,16 These therapies have the advantage of being easy to use.

Final Thoughts

We have entered an era of high-quality molluscum therapy. Patient care involves developing a good knowledge of the agents, incorporating shared decision-making with patients and caregivers, and addressing therapy in the context of comorbid diseases such as AD.

Molluscum contagiosum (ie, molluscum) is a ubiquitous infection caused by the poxvirus molluscum contagiosum virus (MCV). Although skin deep, molluscum shares many factors with the more virulent poxviridae. Moisture and trauma can cause viral material to be released from the pearly papules through a small opening, which also allows entry of bacteria and medications into the lesion. The MCV is transmitted by direct contact with skin or via fomites.1

Molluscum can affect children of any age, with MCV type 1 peaking in toddlers and school-aged children and MCV type 2 after the sexual debut. The prevalence of molluscum has increased since the 1980s. It is stressful for children and caregivers and poses challenges in schools as well as sports such as swimming, wrestling, and karate.1,2

For the first time, we have US Food and Drug Administration (FDA)–approved products to treat MCV infections. Previously, only off-label agents were used. Therefore, we have to contemplate why treatment is important to our patients.

What type of care is required for molluscum?

Counseling is the first and only mandatory treatment, which consists of 3 parts: natural history, risk factors for spread, and options for therapy. The natural history of molluscum in children is early spread, contagion to oneself and others (as high as 60% of sibling co-bathers3), triggering of dermatitis, eventual onset of the beginning-of-the-end (BOTE) sign, and eventually clearance. The natural history in adults is poorly understood.

Early clearance is uncommon; reports have suggested 45.6% to 48.4% of affected patients are clear at 1 year and 69.5% to 72.6% at 1.5 years.4 For many children, especially those with atopic dermatitis (AD), lesions linger and often spread, with many experiencing disease for 3 to 4 years. Fomites such as towels, washcloths, and sponges can transfer the virus and spread lesions; therefore, I advise patients to gently pat their skin dry, wash towels frequently, and avoid sharing bathing equipment.1,3,5 Children and adults with immunosuppression may have a greater number of lesions and more prolonged course of disease, including those with HIV as well as DOC8 and CARD11 mutations.6 The American Academy of Pediatrics (AAP) emphasizes that children should not be excluded from attending child care/school or from swimming in public pools but lesions should be covered.6 Lesions, especially those in the antecubital region, can trigger new-onset AD or AD flares.3 In response, gentle skin care including fragrance-free cleansers and periodic application of moisturizers may ward off AD. Topical corticosteroids are preferred.

Dermatitis in MCV is a great mimicker and can resemble erythema multiforme, Gianotti-Crosti syndrome, impetigo, and AD.1 Superinfection recently has been reported; however, in a retrospective analysis of 56 patients with inflamed lesions secondary to molluscum infection, only 7 had positive bacterial cultures, which supports the idea of the swelling and redness of inflammation as a mimic for infection.7 When true infection does occur, tender, swollen, pus-filled lesions should be lanced and cultured.1,7,8

When should we consider therapy?

Therapy is highly dependent on the child, the caregiver, and the social circumstances.1 More than 80% of parents are anxious about molluscum, and countless children are embarrassed or ashamed.1 Ultimately, an unhappy child merits care. The AAP cites the following as reasons to treat: “(1) alleviate discomfort, including itching; (2) reduce autoinoculation; (3) limit transmission of the virus to close contacts; (4) reduce cosmetic concerns; and (5) prevent secondary infection.”6 For adults, we should consider limitations to intimacy and reduction of sexual transmission risk.6

Treatment can be based on the number of lesions. With a few lesions (<3), therapy is worthwhile if they are unsightly; appear on exposed skin causing embarrassment; and/or are itchy, uncomfortable, or large. In a report of 300 children with molluscum treated with cantharidin, most patients choosing therapy had 10 to 20 lesions, but this was over multiple visits.8 Looking at a 2018 data set of 50 patients (all-comers) with molluscum,3 the mean number of lesions was 10 (median, 7); 3 lesions were 1 SD below, while 14, 17, and 45 were 1, 2, and 3 SDs above, respectively. This data set shows that patients can develop more lesions rapidly, and most children have many visible lesions (N.B. Silverberg, MD, unpublished data).

Because each lesion contains infectious viral particles and patients scratch, more lesions mean greater autoinoculation and contagion. In addition to the AAP criteria, treatment can be considered for households with immunocompromised individuals, children at risk for new-onset AD, or those with AD at risk for flare. For patients with 45 or more lesions (3 SDs above the mean), clearance is harder to achieve with 2 sessions of in-office therapy, and combining methods or adding immunomodulatory therapeutics should be considered.

Do we have to clear every lesion?

New molluscum lesions may continue to arise until a patient develops immunity. The latency between exposure and lesion development usually is 2 to 7 weeks but may be as long as 6 months, so lesions can appear more than a month after inoculation, making it difficult to keep pace with or prevent spread.6 Therefore, when we treat, we should not promise full clearance to patients and parents. Rather, we should explain that new lesions may develop later and that therapy is effective only on visible lesions. In a recent study, clearance of 50% of lesions was the threshold at which parents were satisfied, demonstrating that satisfaction is possible with partial clearance.9

What is new in therapeutics for molluscum?

Molluscum therapies are either destructive, immunomodulatory, or antiviral. Two agents now are approved by the FDA for the treatment of molluscum infections.

Berdazimer gel 10.3% is approved for patients 1 year or older, but it is not yet available. This agent has both immunomodulatory and antiviral properties.10 It is a home therapy that is mixed on a small palette, then painted on by the patient or parent once daily for 12 weeks. Study outcomes demonstrated lesional clearance of more than 50%.11,12 Complete clearance was achieved in at least 30% of patients.12

A proprietary topical formulation of cantharidin 0.7% in flexible collodion is now FDA approved for patients 2 years and older. This vesicant is designed to raise blisters overlying molluscum lesions. It is conceptually similar to older cantharidin preparations but has some enhanced features.5,13,14 In clinical trials, it was applied every 3 weeks for up to 4 sessions. Safety was similar across all treated body sites, all of which were nonmucosal and not near mucosal surfaces; the same does not apply to mucosa, the mid face, or eyelids.13 Complete lesion clearance was 46.3% to 54% and was statistically greater than with placebo (P<.001).14

Both agents are well tolerated in children with AD; adverse effects include blistering with cantharidin and dermatitislike symptoms with berdazimer.15,16 These therapies have the advantage of being easy to use.

Final Thoughts

We have entered an era of high-quality molluscum therapy. Patient care involves developing a good knowledge of the agents, incorporating shared decision-making with patients and caregivers, and addressing therapy in the context of comorbid diseases such as AD.

- Silverberg NB. Pediatric molluscum: an update. Cutis. 2019;104:301-305, E1-E2.

- Thompson AJ, Matinpour K, Hardin J, et al. Molluscum gladiatorum. Dermatol Online J. 2014;20:13030/qt0nj121n1.

- Silverberg NB. Molluscum contagiosum virus infection can trigger atopic dermatitis disease onset or flare. Cutis. 2018;102:191-194.

- Basdag H, Rainer BM, Cohen BA. Molluscum contagiosum: to treat or not to treat? experience with 170 children in an outpatient clinic setting in the northeastern United States. Pediatr Dermatol. 2015;32:353-357. doi:10.1111/pde.12504

- Silverberg NB. Warts and molluscum in children. Adv Dermatol. 2004;20:23-73.

- Molluscum contagiosum. In: Kimberlin DW, Lynfield R, Barnett ED, et al (eds). Red Book: 2021–2024 Report of the Committee on Infectious Diseases. 32nd edition. American Academy of Pediatrics. May 26, 2021. Accessed May 20, 2024. https://publications.aap.org/redbook/book/347/chapter/5754264/Molluscum-Contagiosum

- Gross I, Ben Nachum N, Molho-Pessach V, et al. The molluscum contagiosum BOTE sign—infected or inflamed? Pediatr Dermatol. 2020;37:476-479. doi:10.1111/pde.14124

- Silverberg NB, Sidbury R, Mancini AJ. Childhood molluscum contagiosum: experience with cantharidin therapy in 300 patients. J Am Acad Dermatol. 2000;43:503-507. doi:10.1067/mjd.2000.106370

- Maeda-Chubachi T, McLeod L, Enloe C, et al. Defining clinically meaningful improvement in molluscum contagiosum. J Am Acad Dermatol. 2024;90:443-445. doi:10.1016/j.jaad.2023.10.033

- Guttman-Yassky E, Gallo RL, Pavel AB, et al. A nitric oxide-releasing topical medication as a potential treatment option for atopic dermatitis through antimicrobial and anti-inflammatory activity. J Invest Dermatol. 2020;140:2531-2535.e2. doi:10.1016/j.jid.2020.04.013

- Browning JC, Cartwright M, Thorla I Jr, et al. A patient-centered perspective of molluscum contagiosum as reported by B-SIMPLE4 Clinical Trial patients and caregivers: Global Impression of Change and Exit Interview substudy results. Am J Clin Dermatol. 2023;24:119-133. doi:10.1007/s40257-022-00733-9

- Sugarman JL, Hebert A, Browning JC, et al. Berdazimer gel for molluscum contagiosum: an integrated analysis of 3 randomized controlled trials. J Am Acad Dermatol. 2024;90:299-308. doi:10.1016/j.jaad.2023.09.066

- Eichenfield LF, Kwong P, Gonzalez ME, et al. Safety and efficacy of VP-102 (cantharidin, 0.7% w/v) in molluscum contagiosum by body region: post hoc pooled analyses from two phase III randomized trials. J Clin Aesthet Dermatol. 2021;14:42-47.

- Eichenfield LF, McFalda W, Brabec B, et al. Safety and efficacy of VP-102, a proprietary, drug-device combination product containing cantharidin, 0.7% (w/v), in children and adults with molluscum contagiosum: two phase 3 randomized clinical trials. JAMA Dermatol. 2020;156:1315-1323. doi:10.1001/jamadermatol.2020.3238

- Paller AS, Green LJ, Silverberg N, et al. Berdazimer gel for molluscum contagiosum in patients with atopic dermatitis. Pediatr Dermatol. Published online February 27, 2024. doi:10.1111/pde.15575

- Eichenfield L, Hebert A, Mancini A, et al. Therapeutic approaches and special considerations for treating molluscum contagiosum. J Drugs Dermatol. 2021;20:1185-1190. doi:10.36849/jdd.6383

Are Children Born Through ART at Higher Risk for Cancer?

The results of a large French study comparing the cancer risk in children conceived through assisted reproductive technology (ART) with that of naturally conceived children were published recently in JAMA Network Open. This study is one of the largest to date on this subject: It included 8,526,306 children born in France between 2010 and 2021, of whom 260,236 (3%) were conceived through ART, and followed them up to a median age of 6.7 years.

Motivations for the Study

ART (including artificial insemination, in vitro fertilization [IVF], or intracytoplasmic sperm injection [ICSI] with fresh or frozen embryo transfer) accounts for about 1 in 30 births in France. However, limited and heterogeneous data have suggested an increased risk for certain health disorders, including cancer, among children conceived through ART. Therefore, a large-scale evaluation of cancer risk in these children is important.

No Overall Increase

In all, 9256 children developed cancer, including 292 who were conceived through ART. Overall, children conceived through ART were not at increased risk for cancer compared with naturally conceived children. Nevertheless, a slight increase in the risk for leukemia was observed in children conceived through IVF or ICSI. The investigators observed approximately one additional case for every 5000 newborns conceived through IVF or ICSI who reached age 10 years.
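As a rough check on the figures reported above, the share of ART births and the crude proportions of children diagnosed with cancer can be worked out directly from the published counts. These are unadjusted proportions that ignore differences in follow-up time and other confounders, so they are only an illustration and not the study's own analysis. The last line simply restates the reported excess of about one leukemia case per 5000 IVF/ICSI newborns as a rate.

```latex
\begin{aligned}
\text{ART share of births} &= \frac{260{,}236}{8{,}526{,}306} \approx 0.0305 \approx 3\% \\
\text{Crude cancer proportion, ART} &= \frac{292}{260{,}236} \approx 0.112\% \\
\text{Crude cancer proportion, non-ART} &= \frac{9256 - 292}{8{,}526{,}306 - 260{,}236} = \frac{8964}{8{,}266{,}070} \approx 0.108\% \\
\text{Excess leukemia risk by age 10 (IVF/ICSI)} &\approx \frac{1}{5000} = 0.02\% = 20 \text{ per } 100{,}000
\end{aligned}
```

The two crude proportions are close, which is consistent with the absence of an overall increase; the leukemia signal is small in absolute terms.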

Epidemiological monitoring should be continued to better evaluate long-term risks and see whether the risk for leukemia is confirmed. If it is, then it will be useful to investigate the mechanisms related to ART techniques or the fertility disorders of parents that could lead to an increased risk for leukemia.

This story was translated from Univadis France, which is part of the Medscape Professional Network, using several editorial tools, including AI, as part of the process. Human editors reviewed this content before publication. A version of this article appeared on Medscape.com.
