FDA clears first mobile rapid test for concussion

, the company has announced.

Eye-Sync is a virtual reality eye-tracking platform that provides objective measurements to aid in the assessment of concussion. It’s the first mobile, rapid test for concussion that has been cleared by the FDA, the company said.

As reported by this news organization, Eye-Sync received breakthrough designation from the FDA for this indication in March 2019.

The FDA initially cleared the Eye-Sync platform for recording, viewing, and analyzing eye movements to help clinicians identify visual tracking impairment.

The Eye-Sync technology uses a series of 60-second eye tracking assessments, neurocognitive batteries, symptom inventories, and standardized patient inventories to identify the type and severity of impairment after concussion.

“The platform generates customizable and interpretive reports that support clinical decision making and offers visual and vestibular therapies to remedy deficits and monitor improvement over time,” the company said.

In support of the application for use in concussion, SyncThink enrolled 1,655 children and adults into a clinical study that collected comprehensive patient and concussion-related data for over 12 months.

The company used these data to develop proprietary algorithms and deep learning models to identify a positive or negative indication of concussion.

The study showed that Eye-Sync had sensitivity greater than 82% and specificity greater than 93%, “thereby providing clinicians with significant and actionable data when evaluating individuals with concussion,” the company said in a news release.

“The outcome of this study very clearly shows the effectiveness of our technology at detecting concussion and definitively demonstrates the clinical utility of Eye-Sync,” SyncThink Chief Clinical Officer Scott Anderson said in the release.

“It also shows that the future of concussion diagnosis is no longer purely symptom-based but that of a technology driven multi-modal approach,” Mr. Anderson said.

A version of this article first appeared on Medscape.com.

Is AFib a stroke cause or innocent bystander? The debate continues

Discovery of substantial atrial fibrillation (AFib) is usually an indication to start oral anticoagulation (OAC) for stroke prevention, but it’s far from settled whether such AFib is actually a direct cause of thromboembolic stroke. And that has implications for whether patients with occasional bouts of the arrhythmia need to be on continuous OAC.

Some patients with infrequent paroxysmal AFib might be able to stay on OAC only about as long as the arrhythmia persists and then go off the drugs, researchers say based on their study. They caution that such a strategy would need the support of prospective trials before it could be considered.

But importantly, in their patients who had been continuously monitored by their cardiac implantable electronic devices (CIEDs) prior to experiencing a stroke, the 30-day risk of that stroke more than tripled if their AFib burden on 1 day reached at least 5-6 hours. The risk jumped especially high within the first few days after accumulating that amount of AFib in a day, but then fell off sharply over the next few days.

Based on the study, “Your risk of stroke goes up acutely when you have an episode of AFib, and it decreases rapidly, back to baseline – certainly by 30 days and it looked like in our data by 5 days,” Daniel E. Singer, MD, of Massachusetts General Hospital, Boston, said in an interview.

Increasingly, he noted, “there’s a widespread belief that AFib is a risk marker, not a causal risk factor.” In that scenario, most embolic strokes are caused by thrombi formed as a result of an atrial myopathy, characterized by fibrosis and inflammation, that also happens to trigger AFib.

But said Dr. Singer, who is lead author on the analysis published online Sept. 29 in JAMA Cardiology.

Some studies have “shown that anticoagulants seem to lower stroke risk even in patients without atrial fib, and even from sources not likely to be coming from the atrium,” Mintu P. Turakhia, MD, of Stanford (Calif.) University, Palo Alto, said in an interview. Collectively they point to “atrial fibrillation as a cause of and a noncausal marker for stroke.”

For example, Dr. Turakhia pointed out in an editorial accompanying the current report that stroke in patients with CIEDs “may occur during prolonged periods of sinus rhythm.”

The current study, he said in an interview, doesn’t preclude atrial myopathy as one direct cause of stroke-associated thrombus, because probably both the myopathy and AFib can be culprits. Still, AFib itself may bear more responsibility for strokes in patients with fewer competing risks for stroke.

In such patients at lower vascular risk, who may have a CHA2DS2-VASc score of only 1 or 2, for example, “AFib can become a more important cause” of ischemic stroke, Dr. Turakhia said. That’s when AFib is more likely to be temporally related to stroke as the likely culprit, the mechanism addressed by Dr. Singer and associates.

“I think we’re all trying to grapple with what the truth is,” Dr. Singer observed. Still, the current study was unusual for primarily looking at the temporal relationship between AFib and stroke, rather than stroke risk. “And once again, as we found in our earlier study, but now a much larger study, it’s a tight relationship.”

Based on the current results, he said, the risk is “high when you have AFib, and it decreases very rapidly after the AFib is over.” And, “it takes multiple hours of AFib to raise stroke risk.” Inclusion in the analysis required accumulation of at least 5.5 hours of AFib on at least 1 day in a month, the cut point at which stroke risk started to climb significantly in an earlier trial.

In the current analysis, however, the 30-day odds ratio for stroke was a nonsignificant 2.75 for an AFib burden of 6-23 hours in a day and jumped to a significant 5.0 for a burden in excess of 23 hours in a day. “That’s a lot of AFib” before the risk actually goes up, and supports AFib as causative, Dr. Singer said. If it were the myopathy itself triggering stroke in these particular patients, the risk would be ongoing and not subject to a threshold of AFib burden.

Implications for noncontinuous OAC

“The hope is that there are people who have very little AFib: They may have several hours, and then they have nothing for 6 months. Do they have to be anticoagulated or not?” Dr. Singer asked.

“If you believe the risk-marker story, you might say they have to be anticoagulated. But if you believe our results, you would certainly think there’s a good chance they don’t have to be anticoagulated,” he said.

“So it is logical to think, if you have the right people and continuous monitoring, that you could have time-delimited anticoagulation.” That is, patients might start right away on a direct OAC once reaching the AFib threshold in a day, Dr. Singer said, “going on and off anticoagulants in parallel with their episodes of AFib.”

The strategy wouldn’t be feasible in patients who often experience AFib, Dr. Singer noted, “but it might work for people who have infrequent paroxysmal AFib.” It certainly would first have to be tested in prospective trials, he said. Such trials would be more practical than ever to carry out given the growing availability of continuous AFib monitoring by wearables.

“We need a trial to make the case whether it’s safe or not,” Dr. Turakhia said of such a rhythm-guided approach to OAC for AFib. The population to start with, he said, would be patients with paroxysmal AFib and low CHA2DS2-VASc scores. “If you think CHA2DS2-VASc as an integrated score of vascular risk, such patients would have a lot fewer reasons to have strokes. And if they do have a stroke, it’s more reasonable to assume that it’s likely caused by atrial fib and not just a marker.”

Importantly, such a strategy could well be safer than continuous OAC for some patients – those at the lowest vascular risk and with the most occasional AFib and lowest AFib burden “who are otherwise doing fine,” Dr. Turakhia said. In such patients on continuous OAC, he proposed, the risks of bleeding and intracranial hemorrhage could potentially exceed the expected degree of protection from ischemic events.

Discordant periods of AFib burden

Dr. Singer and his colleagues linked a national electronic health record database with Medtronic CareLink records covering 10 years to identify 891 patients who experienced an ischemic stroke preceded by at least 120 days of continuous heart-rhythm monitoring.

The patients were then categorized by their pattern of AFib, if any, within each of two prestroke periods: the most recent 30 days, which was the test period, and the preceding 91-120 days, the control period.

The analysis then excluded any patients who reached an AFib-burden threshold of at least 5.5 hours on any day during both the test and control periods, and those who did not attain that threshold in either period.

“The ones who had AFib in both periods mostly had permanent AFib, and ones that didn’t have AFib in either period mostly were in sinus rhythm,” Dr. Singer said. It was “close to 100%” in both cases.

Those exclusions left 66 patients, 7.4% of the total, with “discordant” periods: They reached the AFib-burden threshold on at least 1 day during either the test or control period, but not both. Of these, 52 reached the threshold only in the test period and 14 only in the control period.

Comparing AFib burden at test versus control periods among patients for whom the two periods were discordant yielded an OR for stroke of 3.71 (95% confidence interval, 2.06-6.70).
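The arithmetic behind that figure can be sketched in a few lines. This is an illustration only, not the investigators' code. It assumes that the 52 patients met the AFib-burden threshold only in the test period and the 14 only in the control period, that the odds ratio is the standard matched-pair ratio of discordant counts, and that the interval is a Wald interval on the log scale. The article does not state which method the authors used, and the function name is hypothetical.

```python
import math


def discordant_pair_odds_ratio(test_only, control_only, z=1.96):
    """Matched-pair odds ratio with a Wald interval on the log scale.

    Assumption: in a case-crossover comparison, only patients whose two
    periods disagree carry information, so OR = test_only / control_only.
    """
    odds_ratio = test_only / control_only
    # Standard error of log(OR) for discordant-pair counts.
    se_log_or = math.sqrt(1 / test_only + 1 / control_only)
    log_or = math.log(odds_ratio)
    lower = math.exp(log_or - z * se_log_or)
    upper = math.exp(log_or + z * se_log_or)
    return odds_ratio, lower, upper


# Counts reported in the article.
total_patients = 891
test_only = 52      # assumed: threshold met only in the 30-day test period
control_only = 14   # assumed: threshold met only in the control period

discordant_share = (test_only + control_only) / total_patients
odds_ratio, lower, upper = discordant_pair_odds_ratio(test_only, control_only)

print(f"discordant share: {discordant_share:.1%}")
print(f"OR {odds_ratio:.2f} (95% CI, {lower:.2f}-{upper:.2f})")
```

Under these assumptions the calculation gives 7.4% for the discordant share and an odds ratio of 3.71 with an interval of 2.06-6.70, matching the reported values. It also shows why the concordant patients drop out: they add nothing to either count.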

Stroke risk levels were not evenly spread throughout the 24-hour periods that met the AFib-burden threshold or the 30 days preceding the patients’ strokes. The OR for stroke was 5.00 (95% CI, 2.62-9.55) during days 1-5 following the day in which the AFib-burden threshold was met. And it was 5.00 (95% CI, 2.08-12.01) over 30 days if the AFib burden exceeded 23 hours on any day of the test period.

The study’s case-crossover design, in which each patient served as their own control, is one of its advantages, Dr. Singer observed. Most patient features, including CHA2DS2-VASc score and comorbidities, did not change appreciably from the earliest to the latest 30-day period, which strengthens the comparison of the two because “you don’t have to worry about long-term confounding.”

Dr. Singer was supported by the Eliot B. and Edith C. Shoolman fund of the Massachusetts General Hospital. He discloses receiving grants from Boehringer Ingelheim and Bristol-Myers Squibb; personal fees from Boehringer Ingelheim, Bristol-Myers Squibb, Fitbit, Johnson & Johnson, Merck, and Pfizer; and royalties from UpToDate.

Dr. Turakhia discloses personal fees from Medtronic, Abbott, Sanofi, Pfizer, Myokardia, Johnson & Johnson, Milestone Pharmaceuticals, InCarda Therapeutics, 100Plus, Forward Pharma, and AliveCor; and grants from Bristol-Myers Squibb, the American Heart Association, Apple, and Bayer.

A version of this article first appeared on Medscape.com.

ADHD med may reduce apathy in Alzheimer’s disease

Methylphenidate is safe and effective for treating apathy in patients with Alzheimer’s disease (AD), new research suggests.

Results from a phase 3 randomized trial showed that, after 6 months of treatment, mean score on the Neuropsychiatric Inventory (NPI) apathy subscale decreased by 4.5 points for patients who received methylphenidate vs. a decrease of 3.1 points for those who received placebo.

In addition, the safety profile showed no significant between-group differences.

“Methylphenidate offers a treatment approach providing a modest but potentially clinically significant benefit for patients and caregivers,” said the investigators, led by Jacobo E. Mintzer, MD, MBA, professor of health studies at the Medical University of South Carolina in Charleston.

The findings were published online Sept. 27 in JAMA Neurology.

Common problem

Apathy, which is common among patients with AD, is associated with increased risk for mortality, financial burden, and caregiver burden. No treatment has proved effective for apathy in this population.

Two trials of methylphenidate, a catecholaminergic agent, have provided preliminary evidence of efficacy. Findings from the Apathy in Dementia Methylphenidate trial (ADMET) suggested the drug was associated with improved cognition and few adverse events. However, both trials had small patient populations and short durations.

The current investigators conducted ADMET 2, a 6-month, phase 3 trial, to investigate methylphenidate further. They recruited 200 patients (mean age, 76 years; 66% men; 90% White) at nine clinical centers that specialized in dementia care in the United States and one in Canada.

Eligible patients had a diagnosis of possible or probable AD and a Mini-Mental State Examination (MMSE) score between 10 and 28. They also had clinically significant apathy for at least 4 weeks and an available caregiver who spent more than 10 hours a week with the patient.

The researchers randomly assigned patients to receive methylphenidate (n = 99) or placebo (n = 101). For 3 days, participants in the active group received 10 mg/day of methylphenidate. After that point, they received 20 mg/day of methylphenidate for the rest of the study.

Patients in both treatment groups were given the same number of identical-appearing capsules each day.

In-person follow-up visits took place monthly for 6 months. Participants also were contacted by telephone at days 15, 45, and 75 after treatment assignment.

Participants underwent cognitive testing at baseline and at 2, 4, and 6 months. The battery of tests included the MMSE, Hopkins Verbal Learning Test, and Wechsler Adult Intelligence Scale – Revised Digit Span.

The trial’s two primary outcomes were mean change in NPI apathy score from baseline to 6 months and the odds of an improved rating on the Alzheimer’s Disease Cooperative Study Clinical Global Impression of Change (ADCS-CGIC) between baseline and 6 months.

Significant change on either outcome was to be considered a signal of effective treatment.

Treatment-specific benefit

Ten patients in the methylphenidate group and seven in the placebo group withdrew during the study.

Mean baseline score on the NPI apathy subscale was 8.0 vs. 7.6, respectively.

In an adjusted, longitudinal model, mean between-group difference in change in NPI apathy score at 6 months was –1.25 (P = .002). The mean NPI apathy score decreased by 4.5 points in the methylphenidate group vs. 3.1 points in the placebo group.

The largest change in apathy score occurred during the first 2 months of treatment. At 6 months, 27% of the methylphenidate group vs. 14% of the placebo group had an NPI apathy score of 0.

In addition, 43.8% of the methylphenidate group had improvement on the ADCS-CGIC compared with 35.2% of the placebo group. The odds ratio (OR) for improvement on ADCS-CGIC for methylphenidate vs. placebo was 1.90 (P = .07).

There was also a strong association between score improvement on the NPI apathy subscale and improvement on the ADCS-CGIC subscale (OR, 2.95; P = .002).

“It is important to note that there were no group differences in any of the cognitive measures, suggesting that the effect of the treatment is specific to the treatment of apathy and not a secondary effect of improvement in cognition,” the researchers wrote.

In all, 17 serious adverse events occurred in the methylphenidate group and 10 occurred in the placebo group. However, all events were found to be hospitalizations for events not related to treatment.

‘Enduring effect’

Commenting on the findings, Jeffrey L. Cummings, MD, ScD, professor of brain sciences at the University of Nevada, Las Vegas, noted that the reduction in NPI apathy subscale score of more than 50% was clinically meaningful.
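The "more than 50%" figure can be checked against the group means reported above. This is a simple illustration that assumes the percentage is the mean decrease divided by the mean baseline score; the trial's own calculation may differ, and the function name is hypothetical.

```python
def percent_reduction(baseline_mean, mean_decrease):
    """Mean decrease expressed as a percentage of the baseline mean."""
    return 100 * mean_decrease / baseline_mean


# Means as reported in the article (NPI apathy subscale)
methylphenidate = percent_reduction(baseline_mean=8.0, mean_decrease=4.5)
placebo = percent_reduction(baseline_mean=7.6, mean_decrease=3.1)
print(f"methylphenidate: {methylphenidate:.1f}%, placebo: {placebo:.1f}%")
```

On these assumptions the methylphenidate group's reduction is about 56% of baseline, compared with roughly 41% for placebo.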

A more robust outcome on the ADCS-CGIC would have been desirable, he added, although that instrument is not designed specifically for apathy.

The fact that methylphenidate’s effect on apathy was observed at 2 months and remained stable throughout the study makes it appear to be “an enduring effect, and not something that the patient accommodates to,” said Dr. Cummings, who was not involved with the research. Such a change may manifest itself in a patient’s greater willingness to help voluntarily with housework or to suggest going for a walk, he noted.

“These are not dramatic changes in cognition, of course, but they are changes in initiative and that is very important,” Dr. Cummings said. Decreased apathy also may improve quality of life for the patient’s caregiver, he added.

Overall, the findings raise the question of whether the Food and Drug Administration should recognize apathy as an indication for which drugs can be approved, said Dr. Cummings.

“For me, that would be the next major step in this line of investigation,” he concluded.

The study was funded by the National Institute on Aging. Dr. Mintzer has served as an adviser to Praxis Bioresearch and Cerevel Therapeutics on matters unrelated to this study. Dr. Cummings is the author of the Neuropsychiatric Inventory but does not receive payments for it from academic trials such as ADMET 2.

A version of this article first appeared on Medscape.com.

More than half of U.S. children under 6 years show detectable blood lead levels

Lead poisoning remains a significant threat to the health of young children in the United States, based on data from blood tests of more than 1 million children.

Any level of lead is potentially harmful, although blood lead levels have decreased over the past several decades in part because of the elimination of lead from many consumer products, as well as from gas, paint, and plumbing fixtures, wrote Marissa Hauptman, MD, of Boston Children’s Hospital and colleagues.

However, “numerous environmental sources of legacy lead still exist,” and children living in poverty and in older housing in particular remain at increased risk for lead exposure, they noted.

In a study published in JAMA Pediatrics, the researchers analyzed deidentified results from blood lead tests performed at a single clinical laboratory for 1,141,441 children younger than 6 years between Oct. 1, 2018, and Feb. 29, 2020. The mean age of the children was 2.3 years; approximately half were boys.

Overall, 50.5% of the children tested (576,092 children) had detectable blood lead levels (BLLs), defined as 1.0 mcg/dL or higher, and 1.9% (21,172 children) had elevated BLLs, defined as 5.0 mcg/dL or higher.
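The reported percentages follow directly from the counts. A minimal check, using only the figures given in the article (the helper name is hypothetical):

```python
def percent(count, total):
    """Share of the total, as a percentage."""
    return 100 * count / total


tested = 1_141_441      # children younger than 6 years with a blood lead test
detectable = 576_092    # BLL of 1.0 mcg/dL or higher
elevated = 21_172       # BLL of 5.0 mcg/dL or higher

pct_detectable = percent(detectable, tested)
pct_elevated = percent(elevated, tested)
print(f"{pct_detectable:.1f}% detectable, {pct_elevated:.1f}% elevated")
```

Rounded to one decimal place, these come to 50.5% and 1.9%, matching the article.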

In multivariate analysis, both detectable BLLs and elevated BLLs were significantly more common among children with public insurance (adjusted odds ratios, 2.01 and 1.08, respectively).

Children in the highest vs. lowest quintile of pre-1950s housing had significantly greater odds of both detectable and elevated BLLs (aOR, 1.65 and aOR, 3.06); those in the highest vs. lowest quintiles of poverty showed similarly increased risk of detectable and elevated BLLs (aOR, 1.89 and aOR, 1.99, respectively; P < .001 for all).

When the data were broken out by ZIP code, children in predominantly Black non-Hispanic and non-Latino neighborhoods were more likely than those living in other ZIP codes to have detectable BLLs (aOR, 1.13), but less likely to have elevated BLLs (aOR, 0.83). States with the highest overall proportions of children with detectable BLLs were Nebraska (83%), Missouri (82%), and Michigan (78%).

The study findings were limited by several factors, especially the potential for selection bias because of the use of a single reference laboratory (Quest Diagnostics), which does not perform all lead testing in the United States, the researchers noted. Other limitations included variability in testing at the state level and the use of ZIP code–level data to estimate race, ethnicity, housing, and poverty, they said.

However, the results suggest that lead exposure remains a problem in young children, with significant disparities at the individual and community level, and national efforts must focus on further reductions of lead exposure in areas of highest risk, they concluded.

Step up lead elimination efforts

“The removal of lead from gasoline and new paint produced a precipitous decrease in blood lead levels from a population mean of 17 mcg/dL (all ages) in 1976 to 4 mcg/dL in the early 1990s to less than 2 mcg/dL today,” wrote Philip J. Landrigan, MD, of Boston College and David Bellinger, PhD, of Harvard University, Boston, in an accompanying editorial. However, “The findings from this study underscore the urgent need to eliminate all sources of lead exposure from U.S. children’s environments,” and highlight the persistent disparities in children’s lead exposure, they said.

The authors emphasized the need to remove existing lead paint from U.S. homes, as not only the paint itself, but also the dust that enters the environment as the paint wears over time, continue to account for most detectable and elevated BLLs in children. A comprehensive lead paint removal effort would be an investment that would protect children now and would protect future generations, they emphasized. They proposed “creating a lead paint removal workforce through federally supported partnerships between city governments and major unions,” that would not only protect children from disease and disability, but could potentially provide jobs and vocational programs that would have a significant impact on communities.

Elevated lead levels may be underreported

In fact, the situation of children’s lead exposure in the United States may be more severe than indicated by the study findings, given the variation in testing at the state and local levels, said Karalyn Kinsella, MD, a pediatrician in private practice in Cheshire, Conn.

“There are no available lead test kits in our offices, so I do worry that many elevated lead levels will be missed,” she said.

“The recent case of elevated lead levels in drinking water in Flint, Michigan, was largely detected through pediatric clinic screening and showed that elevated lead levels may remain a major issue in some communities,” said Tim Joos, MD, a clinician in combined internal medicine/pediatrics in Seattle, Wash., in an interview.

“It is important to highlight to what extent baseline and point-source lead contamination still exists, monitor progress towards lowering levels, and identify communities at high risk,” Dr. Joos emphasized. “The exact prevalence of elevated lead levels among the general pediatric populations is hard to estimate from this study because of the methodology, which looked at demographic characteristics of the subset of the pediatric population that had venous samples sent to Quest Lab,” he noted.

“As the authors pointed out, it is hard to know what biases went into deciding whether to screen or not, and whether these were confirmatory tests for elevated point of care testing done earlier in the clinic,” said Dr. Joos. “Nonetheless, it does point to the role of poverty and pre-1950s housing in elevated blood lead levels,” he added. “The study also highlights that, as the CDC considers lowering the level for what is considered an ‘elevated blood lead level’ from 5.0 to perhaps 3.5 mcg/dL, we still have a lot more work to do,” he said.

The study was funded by Quest Diagnostics, and the company provided salaries to several coauthors during the study. Dr. Hauptman disclosed support from the National Institutes of Health/National Institute of Environmental Health Sciences during the current study and support from the Agency for Toxic Substances and Disease Registry and the U.S. Environmental Protection Agency unrelated to the current study. Dr. Landrigan had no financial conflicts to disclose. Dr. Bellinger disclosed fees from attorneys for testimony in cases unrelated to the editorial. Dr. Kinsella had no financial conflicts to disclose, but serves on the Editorial Advisory Board of Pediatric News. Dr. Joos had no financial conflicts to disclose, but serves on the Pediatric News Editorial Advisory Board.

Lead poisoning remains a significant threat to the health of young children in the United States, based on data from blood tests of more than 1 million children.

Any level of lead is potentially harmful, although blood lead levels have decreased over the past several decades, in part because of the elimination of lead from many consumer products, as well as from gasoline, paint, and plumbing fixtures, wrote Marissa Hauptman, MD, of Boston Children’s Hospital, and colleagues.

However, “numerous environmental sources of legacy lead still exist,” and children living in poverty and in older housing in particular remain at increased risk for lead exposure, they noted.

In a study published in JAMA Pediatrics, the researchers analyzed deidentified results from blood lead tests performed at a single clinical laboratory for 1,141,441 children younger than 6 years between Oct. 1, 2018, and Feb. 29, 2020. The mean age of the children was 2.3 years; approximately half were boys.

Overall, 50.5% of the children tested (576,092 children) had detectable blood lead levels (BLLs), defined as 1.0 mcg/dL or higher, and 1.9% (21,172 children) had elevated BLLs, defined as 5.0 mcg/dL or higher.
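
The reported percentages can be checked directly from the counts given above. The following is a minimal sketch in Python; the constant and function names are illustrative and are not taken from the study.

```python
# Counts reported in the article for children younger than 6 years.
TOTAL_TESTED = 1_141_441
DETECTABLE = 576_092   # BLL of 1.0 mcg/dL or higher
ELEVATED = 21_172      # BLL of 5.0 mcg/dL or higher


def share_percent(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


detectable_pct = share_percent(DETECTABLE, TOTAL_TESTED)
elevated_pct = share_percent(ELEVATED, TOTAL_TESTED)
print(f"Detectable: {detectable_pct}%  Elevated: {elevated_pct}%")
```

Rounded to one decimal place, the two shares match the 50.5% and 1.9% quoted in the article.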

In multivariate analysis, both detectable BLLs and elevated BLLs were significantly more common among children with public insurance (adjusted odds ratios, 2.01 and 1.08, respectively).

Children in the highest vs. lowest quintile of pre-1950s housing had significantly greater odds of both detectable and elevated BLLs (aOR, 1.65 and aOR, 3.06); those in the highest vs. lowest quintiles of poverty showed similarly increased risk of detectable and elevated BLLs (aOR, 1.89 and aOR, 1.99, respectively; P < .001 for all).

When the data were broken out by ZIP code, children in predominantly Black non-Hispanic and non-Latino neighborhoods were more likely than those living in other ZIP codes to have detectable BLLs (aOR, 1.13), but less likely to have elevated BLLs (aOR, 0.83). States with the highest overall proportions of children with detectable BLLs were Nebraska (83%), Missouri (82%), and Michigan (78%).

The study findings were limited by several factors, especially the potential for selection bias because of the use of a single reference laboratory (Quest Diagnostics) that does not perform all lead testing in the United States, the researchers noted. Other limitations included variability in testing at the state level and the use of ZIP code–level data to estimate race, ethnicity, housing, and poverty, they said.

However, the results suggest that lead exposure remains a problem in young children, with significant disparities at the individual and community level, and national efforts must focus on further reductions of lead exposure in areas of highest risk, they concluded.

Step up lead elimination efforts

“The removal of lead from gasoline and new paint produced a precipitous decrease in blood lead levels from a population mean of 17 mcg/dL (all ages) in 1976 to 4 mcg/dL in the early 1990s to less than 2 mcg/dL today,” wrote Philip J. Landrigan, MD, of Boston College and David Bellinger, PhD, of Harvard University, Boston, in an accompanying editorial. However, “The findings from this study underscore the urgent need to eliminate all sources of lead exposure from U.S. children’s environments,” and highlight the persistent disparities in children’s lead exposure, they said.

The authors emphasized the need to remove existing lead paint from U.S. homes, because both the paint itself and the dust that enters the environment as the paint wears over time continue to account for most detectable and elevated BLLs in children. A comprehensive lead paint removal effort would be an investment that would protect children now and would protect future generations, they said. They proposed “creating a lead paint removal workforce through federally supported partnerships between city governments and major unions,” which would not only protect children from disease and disability but could potentially provide jobs and vocational programs that would have a significant impact on communities.

Elevated lead levels may be underreported

In fact, the situation of children’s lead exposure in the United States may be more severe than indicated by the study findings, given the variation in testing at the state and local levels, said Karalyn Kinsella, MD, a pediatrician in private practice in Cheshire, Conn.

“There are no available lead test kits in our offices, so I do worry that many elevated lead levels will be missed,” she said.

“The recent case of elevated lead levels in drinking water in Flint, Michigan, was largely detected through pediatric clinic screening and showed that elevated lead levels may remain a major issue in some communities,” said Tim Joos, MD, a clinician in combined internal medicine/pediatrics in Seattle, Wash., in an interview.

“It is important to highlight to what extent baseline and point-source lead contamination still exists, monitor progress towards lowering levels, and identify communities at high risk,” Dr. Joos emphasized. “The exact prevalence of elevated lead levels among the general pediatric populations is hard to estimate from this study because of the methodology, which looked at demographic characteristics of the subset of the pediatric population that had venous samples sent to Quest Lab,” he noted.

“As the authors pointed out, it is hard to know what biases went into deciding whether to screen or not, and whether these were confirmatory tests for elevated point of care testing done earlier in the clinic,” said Dr. Joos. “Nonetheless, it does point to the role of poverty and pre-1950s housing in elevated blood lead levels,” he added. “The study also highlights that, as the CDC considers lowering the level for what is considered an ‘elevated blood lead level’ from 5.0 to perhaps 3.5 mcg/dL, we still have a lot more work to do,” he said.

The study was funded by Quest Diagnostics, and the company provided salaries to several coauthors during the study. Dr. Hauptman disclosed support from the National Institutes of Health/National Institute of Environmental Health Sciences during the current study and support from the Agency for Toxic Substances and Disease Registry and the U.S. Environmental Protection Agency unrelated to the current study. Dr. Landrigan had no financial conflicts to disclose. Dr. Bellinger disclosed fees from attorneys for testimony in cases unrelated to the editorial. Dr. Kinsella had no financial conflicts to disclose, but serves on the Editorial Advisory Board of Pediatric News. Dr. Joos had no financial conflicts to disclose, but serves on the Pediatric News Editorial Advisory Board.

FROM JAMA PEDIATRICS

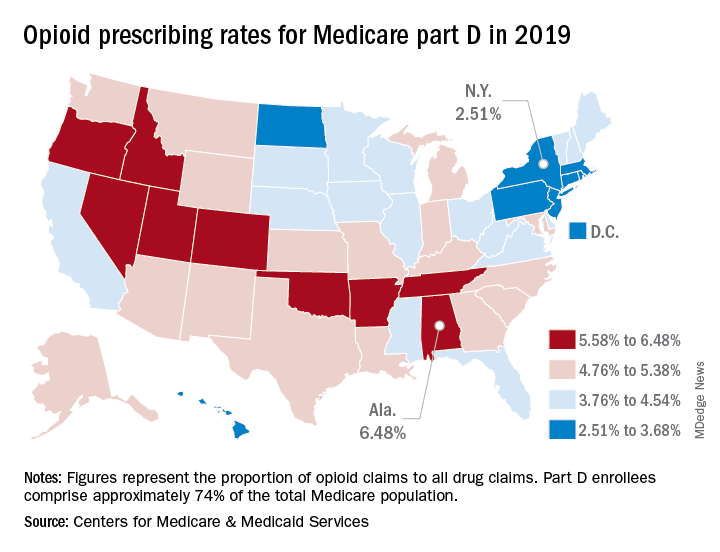

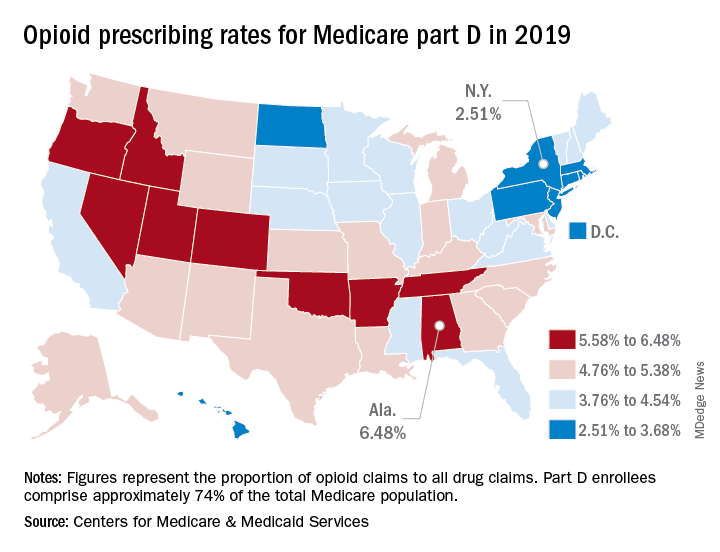

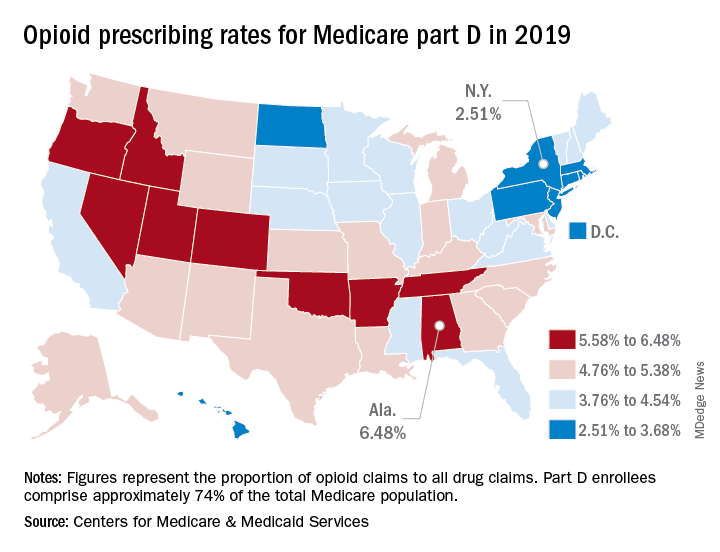

Opioid prescribing mapped: Alabama highest, New York lowest

Medicare beneficiaries in Alabama were more likely to get a prescription for an opioid than in any other state in 2019, based on newly released data.

That year, opioids represented 6.48% of all drug claims for part D enrollees in the state, just ahead of Utah at 6.41%. Idaho, at 6.07%, was the only other state with an opioid prescribing rate over 6%, while Oklahoma came in at an even 6.0%, according to the latest update of the Centers for Medicare & Medicaid Services’ dataset.

The lowest rate in 2019 belonged to New York, where 2.51% of drug claims, including original prescriptions and refills, involved an opioid. Rhode Island was next at 2.87%, followed by New Jersey (3.23%), Massachusetts (3.26%), and North Dakota (3.39%).

Altogether, Medicare part D processed 1.5 billion drug claims in 2019, of which 66.1 million, or 4.41%, involved opioids. Both of the opioid numbers were down from 2018, when opioids represented 4.68% (70.2 million) of the 1.5 billion total claims, and from 2014, when opioids were involved in 5.73% (81,026,831) of the 1.41 billion drug claims, the CMS data show. That works out to 5.77% fewer opioids in 2019, compared with 2014, despite the increase in total volume.
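
The shares quoted above can be approximated from the claim counts in the text. This is a rough sketch in Python that assumes only the rounded figures given in the article (the exact CMS totals are not stated here); the dictionary and function names are illustrative.

```python
# Rounded figures quoted in the article: (opioid claims, total part D claims).
CLAIMS = {
    2014: (81_026_831, 1.41e9),
    2018: (70.2e6, 1.5e9),
    2019: (66.1e6, 1.5e9),
}


def opioid_share(year: int) -> float:
    """Opioid claims as a percentage of all drug claims in the given year."""
    opioid, total = CLAIMS[year]
    return 100 * opioid / total


def relative_change(start_year: int, end_year: int) -> float:
    """Percent change in the number of opioid claims between two years."""
    start = CLAIMS[start_year][0]
    end = CLAIMS[end_year][0]
    return 100 * (end - start) / start


for year in sorted(CLAIMS):
    print(f"{year}: {opioid_share(year):.2f}% of claims involved opioids")
```

Because the totals are rounded, the computed shares can differ slightly from the published percentages. `relative_change` applies the same inputs to compare opioid claim counts between any two of the listed years.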

State opioid prescribing rates declined from 2014 to 2019, with Hawaii showing the smallest decline as it slipped 0.41 percentage points from 3.9% to 3.49%, according to the CMS.

In 2019, part D beneficiaries in Vermont were the most likely to receive a long-acting opioid, which accounted for 20.14% of all opioid prescriptions in the state, while Kentucky had the lowest share of prescriptions written for long-acting forms at 6.41%. The national average was 11.02%, dropping from 11.79% in 2018 and 12.75% in 2014, the CMS reported.

MIND diet preserves cognition, new data show

Adherence to the MIND diet can improve memory and thinking skills of older adults, even in the presence of Alzheimer’s disease pathology, new data from the Rush Memory and Aging Project (MAP) show.

“The MIND diet was associated with better cognitive functions independently of brain pathologies related to Alzheimer’s disease, suggesting that diet may contribute to cognitive resilience, which ultimately indicates that it is never too late for dementia prevention,” lead author Klodian Dhana, MD, PhD, with the Rush Institute for Healthy Aging at Rush University, Chicago, said in an interview.

The study was published online Sept. 14, 2021, in the Journal of Alzheimer’s Disease.

Impact on brain pathology