67% of teens have substantial cardiometabolic risk burden, blood donor survey shows

ORLANDO – Fully two-thirds of nearly 25,000 Dallas-area volunteer blood donors ages 16-19 had elevated or borderline total cholesterol, blood pressure, and/or hemoglobin A1c, Dr. Merlyn H. Sayers reported at the American Heart Association scientific sessions.

“It is startling that such a significant percentage of these young, ostensibly healthy volunteers have abnormal cardiometabolic health metrics,” observed Dr. Sayers, president and chief executive officer of Carter BloodCare of Bedford, Tex., a nonprofit organization that is the largest blood bank in the state.

After all, he noted, longitudinal studies have clearly shown that cardiometabolic risk factors present in adolescence will persist into adulthood and are associated with increased risks of cardiovascular disease and diabetes. Moreover, it’s troubling, albeit not really surprising, that for the most part these adolescents don’t seem to care about their cardiometabolic risk, the hematologist-oncologist added.

“We give all these youngsters an opportunity to go to the Carter BloodCare website and confidentially retrieve their values. But despite all manner of urging on our part that these results are important, at best only about 20% of the individuals actually do so, and that rate varies substantially by race and ethnicity,” according to Dr. Sayers. “Where appropriate, we need to find ways to impose behavior modification on a group that is relatively resistant to guidance and intervention. Even the best kids, as teenagers, really don’t take this sort of advice about their health risk very seriously. They regard themselves as immortal during their teenage years.”

Noting that behavioral change is not a core strength among transfusion medicine specialists, Dr. Sayers appealed to his audience of cardiologists for suggestions as to how to encourage lifestyle modification in this youthful group without browbeating them to the point that they’re driven off from becoming serial blood donors.

It’s not widely appreciated that across the U.S. during the school year, 20% of all unpaid blood donors are high school students. These high school blood drives provide an as-yet untapped opportunity to screen adolescents for cardiometabolic risk at low cost and minimal inconvenience to participants, said Dr. Sayers of the University of Texas, Dallas.

“We need allies to help us to ensure we get the kids’ attention better,” he explained. “I want to leave you with the sense that perhaps you will see these blood drives as an opportunity to find interventions that might address primordial prevention of cardiometabolic risk.”

He presented a study of 24,925 youths aged 16-19 who donated blood to Carter BloodCare during 2011-2012. Since blood is drawn for obligatory infectious diseases screening at each donation, Dr. Sayers and coinvestigators were able to measure nonfasting total cholesterol and HbA1c in every teen donor. Blood pressure is also measured at every donation.

The investigators used widely accepted definitions of elevated blood pressure, cholesterol, and HbA1c: namely, at least 140/80 mm Hg, 200 mg/dL, and 6.5%, respectively.

While the percentage of teen blood donors with borderline or elevated levels of all three cardiometabolic risk factors was in the low single figures, 21% of boys and 15% of girls were positive for two out of the three.

The prevalence of cardiometabolic risk factors varied by ethnicity. Sixteen percent of white adolescents had elevated or borderline levels of two risk factors. So did 24% of African Americans, 22% of Asian Americans, and 18% of Hispanics.

“These are really staggering results,” commented session chair Dr. Seth S. Martin of Johns Hopkins University, Baltimore. “This is a call to action now that you’ve identified all these kids who are on a trajectory that doesn’t look good.”

As to how physicians can help to favorably alter that trajectory, however, audience members admitted to being stumped, especially since many young people stop going to a primary care physician for preventive care during their teenage years.

“The big problem here is how to use this information to initiate lifestyle change,” observed Dr. Lewis H. Kuller, professor and past chair of epidemiology at the University of Pittsburgh.

Dr. Sayers reported having no financial conflicts regarding his study.

AT THE AHA SCIENTIFIC SESSIONS

Key clinical point: Two-thirds of 16- to 19-year-old blood donors in a Dallas-area survey had borderline or frank hypertension, hypercholesterolemia, and/or high blood glucose.

Major finding: Of a very large group of 16- to 19-year-old blood donors, 67% had borderline or elevated total cholesterol, blood pressure, and/or hemoglobin A1c levels.

Data source: A retrospective analysis of total cholesterol, blood pressure, and HbA1c levels in 24,925 Dallas-area blood donors aged 16-19.

Disclosures: The presenter reported having no financial conflicts of interest regarding this study.

Cognitive impairment varies according to mood disorder

The nature and severity of cognitive alterations can vary significantly between different mood disorders, according to Dr. Charles Cotrena and his associates.

The study of 205 Brazilians comprised patients with major depressive disorder (MDD), patients with bipolar disorder I (BDI) and II (BDII), and a healthy control group, all of whom took a battery of neurocognitive tests. MDD patients performed poorly in tests involving attention and timed tasks, compared with the control group, but had less motor inhibition than did patients with BDI. Patients with BDI tended to perform worse across all executive functions, compared with patients with MDD, BDII, and the control group; however, BDII patients were the only ones who performed worse on the Iowa Gambling Task than did the control group, and performed worse on the Stroop Color–Word Test than did BDI patients.

While MDD patients had worse psychological quality of life (QOL) than that of controls, there was no difference in other QOL measures. MDD patients reported better physical health and lower disability rates than did BD patients. BDII patients had worse QOL than did control patients, but had lower disability rates than did BDI patients.

The investigators found that differences in cognitive function and quality of life still existed in patients with mood disorder, even after adjustment for mania and depressive symptoms.

“The importance of a detailed assessment of [executive function] and disability levels within each of these diagnostic categories, while controlling for demographic variables, was especially evident from the current results. Additionally, the comparison of impairment rates between groups – which is not a usual measure in the literature – provided important contributions to current knowledge regarding cognition and mood disorders,” the investigators noted.

Find the study in the Journal of Affective Disorders (doi: 10.1016/j.jad.2015.11.007).

FROM THE JOURNAL OF AFFECTIVE DISORDERS

Webcast: Obesity and contraceptive efficacy and risks

The video associated with this article is no longer available on this site. Please view all of our videos on the MDedge YouTube channel.

8 tips to help patients cope with insomnia

Patients who struggle with insomnia may be better able to cope with their condition if they follow some of the tips listed in this patient handout from Neurology Reviews. Resisting naps, exercising earlier in the day, and avoiding the use of certain electronic devices before bedtime are among the tips offered at: http://cdn4.imng.com/fileadmin/qhi/nr/pdfs/53_TIPS_Insomnia_1115.pdf.

Proportion of HCV patients with cirrhosis is climbing

Despite a decline in the overall prevalence of hepatitis C virus (HCV) in the United States, the number of HCV patients with cirrhosis has risen significantly. Researchers report that the estimated prevalence of cirrhosis in HCV-infected patients has climbed from 6.6% in the early 1990s to more than 17% in the present era. Read more about this study, including the factors contributing to this increase, at Family Practice News. http://www.familypracticenews.com/specialty-focus/infectious-diseases/single-article-page/proportion-of-hcv-patients-in-us-with-cirrhosis-climbing/0a460b7fef6d035236f12b94f8aafc32.html

High-dose vitamin D increases fall risk in study of 200 patients

Older adults treated with high-dose vitamin D as part of a double-blind, randomized clinical trial achieved target 25-hydroxyvitamin D levels at 6 and 12 months, but did not achieve the primary endpoint of improved lower extremity function.

In fact, the highest dose used in the study – 60,000 IU of vitamin D3 monthly – was associated with an increased risk of falls, according to Dr. Heike A. Bischoff-Ferrari of University Hospital Zurich, and her colleagues.

In the 200 home-dwelling adults aged older than 70 years who participated in the 1-year study, monthly doses of 60,000 IU of vitamin D3 and 24,000 IU of vitamin D3 plus 300 mcg of calcifediol were significantly more likely than a 24,000 IU dose of vitamin D3 alone to result in 25-hydroxyvitamin D levels of at least 30 ng/mL at 6 and 12 months, but lower extremity function did not differ among the groups, the investigators reported in a study published online Jan. 4 in JAMA Internal Medicine.

For example, the adjusted changes in Short Physical Performance Battery scores – a measure of walking speed, successive chair stands, and balance – were 0.17, 0.16, and 0.16 at 6 months in the 24,000 IU, 60,000 IU, and 24,000 IU plus calcifediol groups, respectively, and 0.38, 0.10, and 0.11 at 12 months (JAMA Intern Med. 2016 Jan 4. doi: 10.1001/jamainternmed.2015.7148).

However, the incidence of falls was 66.9% in the 60,000 IU group and 66.1% in the 24,000 IU plus calcifediol group, compared with 47.9% in the 24,000 IU group, and the mean number of falls was higher in the 60,000 IU group (1.47) and 24,000 IU plus calcifediol group (1.24) than in the 24,000 IU group (0.94), they said, noting that a similar pattern was observed during study months 0-6 and months 7-12.

Study subjects had a mean age of 78 years, 58% were vitamin D deficient with levels of less than 20 ng/mL at baseline, and 13% were severely deficient (levels less than 10 ng/mL). All had experienced a low-trauma fall in the previous 12 months and were thus considered a high-risk group for vitamin D deficiency and functional decline. Vitamin D supplementation was given via one 5-mL drink solution each month that provided 24,000 IU of vitamin D3 – equivalent to the current recommendation of 800 IU/day – plus three placebo capsules; a 5-mL drink solution that provided 60,000 IU of vitamin D3 – the equivalent of 2,000 IU/day – plus three placebo capsules; or a 5-mL placebo drink, two capsules containing 12,000 IU of vitamin D3 each, and one capsule containing 300 mcg of calcifediol, which is a potent liver metabolite of vitamin D.
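The daily equivalents quoted for the monthly doses can be checked with simple arithmetic. The short Python sketch below is illustrative only: it assumes a 30-day month, and the function and variable names are hypothetical rather than anything taken from the study. It covers only the vitamin D3 component of each regimen, since the report gives no IU equivalent for the 300 mcg of calcifediol.

```python
# Illustrative arithmetic only; a 30-day month is an assumption, not a study detail.
DAYS_PER_MONTH = 30


def daily_equivalent_iu(monthly_iu: float, days: int = DAYS_PER_MONTH) -> float:
    """Spread a monthly bolus dose evenly over the assumed number of days."""
    return monthly_iu / days


# Monthly vitamin D3 content of each study regimen, as described in the article.
regimens = {
    "24,000 IU D3 drink": 24_000,
    "60,000 IU D3 drink": 60_000,
    "calcifediol arm, D3 capsules only (2 x 12,000 IU)": 2 * 12_000,
}

for name, monthly_iu in regimens.items():
    print(f"{name}: {daily_equivalent_iu(monthly_iu):,.0f} IU/day")
```

Under that assumption, the 24,000 IU and 60,000 IU monthly doses work out to 800 IU/day and 2,000 IU/day, matching the figures given above.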

Although vitamin D supplementation has been proposed as a possible strategy for delaying functional decline because of its effects on muscle strength, the current findings, which are consistent with some prior studies, suggest that high doses of vitamin D confer no benefit with respect to functional decline, compared with a standard-of-care dose of 24,000 IU of D3, and that high doses may increase falls in those with a prior fall event. Further research is needed to confirm the findings for daily dosing regimens, as well as to explore the physiology behind a possible detrimental effect of a high monthly bolus dose of vitamin D on muscle function and falls, they concluded.

This study was funded by the Swiss National Science Foundation and the VELUX Foundations, as well as by investigator-initiated funds from Merck Sharp & Dohme AG, WILD, and DSM Nutritional Products. Dr. Bischoff-Ferrari reported receiving speaker fees from and serving on advisory boards for Merck Sharp & Dohme AG, Amgen, WILD, DSM Nutritional Products, Roche Diagnostics, Nestle, Pfizer, and Sanofi.

The vitamin D storyline may be similar to that of antioxidant vitamins, Dr. Steven R. Cummings and his colleagues wrote in an editorial.

“Enthusiasm for the health benefits of vitamin supplements is coupled with the belief that ‘vitamins’ are inherently safe and reinforced by observational studies showing, essentially, that healthy people have higher vitamin levels. Then [randomized, controlled trials] and meta-analyses proved that the supplements in fact increase mortality (beta carotene, vitamin E), or have no health benefits (vitamin A, vitamin C),” they wrote (JAMA Intern Med. 2016 Jan 4. doi: 10.1001/jamainternmed.2015.7568).

The strategy of supplementation with vitamin D to achieve serum levels of at least 30 ng/mL to reduce the risk of falls and fractures has not been established, and according to the findings by Bischoff-Ferrari et al., it may increase the risk of falling.

“Until that approach is supported by randomized trials with updated meta-analyses, it would be prudent to follow recommendation from the Institute of Medicine (IOM) that people 70 years or older have a total daily intake of 800 IU of vitamin D without routine measurement of serum 25(OH)D levels,” they wrote, adding that recommended intakes are best achieved through a balanced diet.

Dr. Cummings is with the California Pacific Medical Center Research Institute, San Francisco, and the University of California, San Francisco. He and his coauthors reported having no disclosures.

Older adults treated with high-dose vitamin D as part of a double-blind, randomized clinical trial achieved target 25-hydroxyvitamin D levels at 6 and 12 months, but did not achieve the primary endpoint of improved lower extremity function.

In fact, the highest dose used in the study – 60,000 IU of vitamin D3 monthly – was associated with an increased risk of falls, according to Dr. Heike A. Bischoff-Ferrari of University Hospital Zurich, and her colleagues.

In the 200 home-dwelling adults aged older than 70 years who participated in the 1-year study, monthly doses of 60,000 IU of vitamin D3 and 24,000 IU of vitamin D3 plus 300 mcg of calcifediol were significantly more likely than a 24,000 IU dose of vitamin D3 alone to result in 25-hydroxyvitamin D levels of at least 30 ng/mL at 6 and 12 months, but lower extremity function did not differ among the groups, the investigators reported in a study published online Jan. 4 in JAMA Internal Medicine.

For example, the adjusted changes in Short Physical Performance Battery scores – a measure of walking speed, successive chair stands, and balance – were 0.17, 0.16, and 0.16 at 6 months in the 24,000 IU, 60,000 IU, and 24,000 IU plus calcifediol groups, respectively, and 0.38, 0.10, and 0.11 at 12 months (JAMA Intern Med. 2016 Jan 4. doi: 10.1001/jamainternmed.2015.7148).

However, the incidence of falls was 66.9% in the 60,000 IU group and 66.1% in the 24,000 IU plus calcifediol group, compared with 47.9% in the 24,000 IU group, and the mean number of falls was also higher in the 60,000 IU group (1.47) and the 24,000 IU plus calcifediol group (1.24) than in the 24,000 IU group (0.94), they said, noting that a similar pattern was observed during study months 0-6 and months 7-12.

Study subjects had a mean age of 78 years, 58% were vitamin D deficient with levels of less than 20 ng/mL at baseline, and 13% were severely deficient (levels less than 10 ng/mL). All had experienced a low-trauma fall in the previous 12 months and were thus considered a high-risk group for vitamin D deficiency and functional decline. Vitamin D supplementation was given via one 5-mL drink solution each month that provided 24,000 IU of vitamin D3 – equivalent to the current recommendation of 800 IU/day – plus three placebo capsules; a 5-mL drink solution that provided 60,000 IU of vitamin D3 – the equivalent of 2,000 IU/day – plus three placebo capsules; or a 5-mL placebo drink, two capsules containing 12,000 IU of vitamin D3 each, and one capsule containing 300 mcg of calcifediol, which is a potent liver metabolite of vitamin D.
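As a rough check on the daily equivalents quoted for the monthly doses, the arithmetic can be sketched as follows. The 30-day month and the helper name `monthly_to_daily_iu` are assumptions made for illustration; they are not taken from the study.

```python
# Assumption: a 30-day month, which reproduces the daily equivalents in the article.
DAYS_PER_MONTH = 30


def monthly_to_daily_iu(monthly_iu: float, days: int = DAYS_PER_MONTH) -> float:
    """Convert a monthly vitamin D3 bolus (IU) to its average daily equivalent (IU/day)."""
    return monthly_iu / days


# The two vitamin D3-only arms described in the article.
for monthly in (24_000, 60_000):
    daily = monthly_to_daily_iu(monthly)
    print(f"{monthly:,} IU/month is about {daily:,.0f} IU/day")
```

Under that assumption, 24,000 IU monthly works out to 800 IU/day and 60,000 IU monthly to 2,000 IU/day, matching the figures in the text.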

Although vitamin D supplementation has been proposed as a possible strategy for delaying functional decline because of its effects on muscle strength, the current findings, which are consistent with some prior studies, suggest that high doses of vitamin D confer no benefit with respect to functional decline, compared with a standard-of-care dose of 24,000 IU of D3, and that high doses may increase falls in those with a prior fall event. Further research is needed to confirm the findings for daily dosing regimens, as well as to explore the physiology behind a possible detrimental effect of a high monthly bolus dose of vitamin D on muscle function and falls, they concluded.

This study was funded by the Swiss National Science Foundation and the VELUX Foundations, as well as by investigator-initiated funds from Merck Sharp & Dohme AG, WILD, and DSM Nutritional Products. Dr. Bischoff-Ferrari reported receiving speaker fees from and serving on advisory boards for Merck Sharp & Dohme AG, Amgen, WILD, DSM Nutritional Products, Roche Diagnostics, Nestle, Pfizer, and Sanofi.

FROM JAMA INTERNAL MEDICINE

Key clinical point: Older adults treated with high-dose vitamin D as part of a double-blind, randomized clinical trial achieved target 25-hydroxyvitamin D levels at 6 and 12 months, but did not achieve the primary endpoint of improved lower extremity function.

Major finding: Fall incidence was 66.9% with 60,000 IU of vitamin D3, 66.1% with 24,000 IU of vitamin D3 plus 300 mcg calcifediol, and 47.9% with 24,000 IU of vitamin D3 alone.

Data source: A double-blind, randomized trial involving 200 patients.

Disclosures: This study was funded by the Swiss National Science Foundation and the VELUX Foundations, as well as by investigator-initiated funds from Merck Sharp & Dohme AG, WILD, and DSM Nutritional Products. Dr. Bischoff-Ferrari disclosed ties with Merck Sharp & Dohme AG, Amgen, WILD, DSM Nutritional Products, Roche Diagnostics, Nestle, Pfizer, and Sanofi.

Cancer drug discovery database goes 3D

Researchers have updated the canSAR database, a tool designed to aid cancer drug discovery, by adding 3D structures of faulty proteins and maps of cancer’s communication networks.

The canSAR database brings together biological, chemical, and pharmacological data.

The goal of the database is to make these data accessible to researchers worldwide to help with hypothesis generation and support drug discovery decisions.

Users can search canSAR using text queries, protein/gene name searches, keyword searches, chemical structure searches, and sequence similarity searches. Users can also explore and filter chemical compound sets, view experimental data, and produce summary plots.

The canSAR database was launched in 2011 with the goal of using Big Data approaches to build a detailed picture of how the majority of known human molecules behave.

The database has already collated billions of experimental measurements, mapping the actions of 1 million drugs and chemicals on human proteins, and it has combined these data with genetic information and results from clinical trials.

The updated version of canSAR uses artificial intelligence to identify nooks and crannies on the surface of faulty cancer-causing molecules as a key step in designing new drugs to block them. It also allows researchers to identify communication lines that can be intercepted within tumor cells, opening up potential new approaches for cancer treatment.

The growing database now holds the 3D structures of almost 3 million cavities on the surface of nearly 110,000 molecules.

“Our database is constantly growing with information and is the largest of its kind, with more than 140,000 users from over 175 countries,” said Bissan Al-Lazikani, PhD, of The Institute of Cancer Research in London, UK.

“And we regularly develop new artificial intelligence technologies that help scientists make predictions and design experiments. Our aim is that cancer scientists will be armed with the data they need to carry out life-saving research into the most exciting drugs of the future.”

“Scientists need to find all the information there is about a faulty gene or protein to understand whether a new drug might work. These data are vast and scattered, but the canSAR database brings them together and adds value by identifying hidden links and presenting the key information easily.”

Details on the updates to canSAR have been published in Nucleic Acids Research. The database is available online at https://cansar.icr.ac.uk/.

Nonalcoholic fatty liver disease will keep rising ‘in near term’

Nonalcoholic fatty liver disease (NAFLD) almost tripled among United States veterans in a recent 9-year period, investigators reported in the February issue of Clinical Gastroenterology and Hepatology.

The trend “was evident in all racial groups, across all age groups, and in both genders,” said Dr. Fasiha Kanwal of the Michael E. DeBakey Veterans Affairs Medical Center and Baylor College of Medicine, both in Houston, and her associates. The increasing prevalence of NAFLD “is likely generalizable to nonveterans,” and will probably persist because of a “fairly steady” 2%-3% overall annual incidence and a steeper rise among younger individuals, they added. “Nonalcoholic fatty liver disease will continue to remain a major public health problem in the United States, at least in the near and intermediate future.”

Although NAFLD is the leading cause of chronic liver failure in the United States, few studies have examined its incidence or prevalence over time, which are key to predicting future disease burden. Therefore, the investigators analyzed data for more than 9.78 million patients who visited the VA at least once between 2003 and 2011. They defined NAFLD as at least two elevated alanine aminotransferase (ALT) values (greater than 40 IU/L) separated by at least 6 months, with no history of positive serology for hepatitis B surface antigen or hepatitis C virus RNA, and no alcohol-related ICD-9 codes or positive AUDIT-C scores within a year of elevated ALT levels (Clin Gastroenterol Hepatol. 2015 Aug 7. doi: 10.1016/j.cgh.2015.08.010).
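The case definition above can be read as a simple rule over a patient's records. The following is a minimal sketch of that rule, not the investigators' actual code: the `Patient` record, its field names, and the approximation of "6 months" as 182 days are all assumptions introduced for illustration.

```python
from dataclasses import dataclass, field
from datetime import date

ALT_THRESHOLD = 40         # "elevated" means an ALT value above 40, as in the article
MIN_GAP_DAYS = 182         # assumption: "at least 6 months" approximated as 182 days
ALCOHOL_WINDOW_DAYS = 365  # alcohol flags count if within a year of an elevated ALT


@dataclass
class Patient:
    """Hypothetical record layout; field names are illustrative only."""
    alt_results: list                       # list of (date, ALT value) pairs
    viral_hepatitis_positive: bool = False  # any positive HBsAg or HCV RNA result
    alcohol_flag_dates: list = field(default_factory=list)  # ICD-9 code or positive AUDIT-C dates


def meets_nafld_definition(p: Patient) -> bool:
    # Exclude anyone with a history of positive hepatitis B or C serology.
    if p.viral_hepatitis_positive:
        return False
    # Require at least two elevated ALT values...
    elevated = sorted(d for d, value in p.alt_results if value > ALT_THRESHOLD)
    if len(elevated) < 2:
        return False
    # ...with some pair at least 6 months apart (earliest vs. latest is the widest gap).
    if (elevated[-1] - elevated[0]).days < MIN_GAP_DAYS:
        return False
    # Exclude alcohol-related flags recorded within a year of any elevated ALT.
    for flag in p.alcohol_flag_dates:
        if any(abs((flag - d).days) <= ALCOHOL_WINDOW_DAYS for d in elevated):
            return False
    return True


example = Patient(alt_results=[(date(2005, 1, 10), 55), (date(2005, 9, 1), 62)])
print(meets_nafld_definition(example))
```

A definition of this kind relies only on laboratory values and exclusion codes, which is why it can be applied to millions of records but may miss patients whose liver enzymes are normal.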

During the study period, more than 1.3 million patients, or 13.6%, met the definition of NAFLD, said the researchers. Age-adjusted incidence rates dropped slightly from 3.16% in 2003 to 2.5% in 2011, ranging between 2.3% and 2.7% in most years. Prevalence, however, rose from 6.3% in 2003 (95% confidence interval, 6.26%-6.3%) to 17.6% in 2011 (95% CI, 17.58%-17.65%), a 2.8-fold increase. Moreover, about one in five patients with NAFLD who visited the VA in 2011 was at risk for advanced fibrosis.
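The reported 2.8-fold increase follows directly from the two prevalence figures. A short sketch of the arithmetic, with the helper name `fold_change` chosen here for illustration:

```python
def fold_change(start: float, end: float) -> float:
    """Ratio of the later value to the earlier one."""
    return end / start


prevalence_2003 = 6.3   # percent, from the article
prevalence_2011 = 17.6  # percent, from the article

ratio = fold_change(prevalence_2003, prevalence_2011)
absolute_rise = prevalence_2011 - prevalence_2003

print(f"Fold increase: {ratio:.1f}")
print(f"Absolute rise: {absolute_rise:.1f} percentage points")
```

The ratio rounds to 2.8, and the same figures correspond to an absolute rise of about 11.3 percentage points, which is the sense in which prevalence "almost tripled."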

Among individuals who were younger than 45 years, the incidence of NAFLD rose from 2.3 to 4.3 cases per 100 persons (annual percentage change, 7.4%; 95% CI, 5.7% to 9.2%), the researchers also found. “Although recent studies show that the rate of increase in both obesity and diabetes, which are both major risk factors for NAFLD, may be slowing down in the U.S., this may not be the case in the VA, where the prevalence of obesity and diabetes is in fact higher than in the U.S. population,” they said.

In general, the findings mirror a recent analysis of the National Health and Nutrition Examination Survey (Aliment Pharmacol Ther. 2015 Jan;41[1]:65-76), according to the investigators. “The VA is the largest integrated health care system in the United States,” they added. “We believe that the sheer size of the veteran cohort, combined with a complete dearth of information regarding the burden of NAFLD in the VA, renders our findings highly significant. Furthermore, the VA is in a unique position to test and implement systemic changes in medical care delivery to improve the health care of NAFLD patients.”

The study was partially supported by the Michael E. DeBakey Veterans Affairs Medical Center. The researchers had no disclosures.

Kanwal and colleagues present an interesting study assessing the trends in the incidence and prevalence of NAFLD in the United States. Findings suggest that the annual incidence of NAFLD has generally been stable (2.2%-3.2%), while the prevalence of NAFLD has increased 2.8-fold (from 6.3% to 17.6%). These findings are consistent with the literature and provide additional evidence supporting the increasing burden of NAFLD. Although this is an important study, its design has some limitations. First, the diagnosis of NAFLD was based solely on elevated liver enzymes, which can underestimate the true incidence and prevalence of NAFLD. In fact, in a recent meta-analysis, NAFLD prevalence based on liver enzymes was 13%, while NAFLD prevalence based on radiologic diagnosis was 25% (Hepatology. 2015 Dec 28. doi: 10.1002/hep.28431. [Epub ahead of print]). Second, the study subjects came from the VA system, which may not be representative of the U.S. population (Patrick AFB, FL: Defense Equal Opportunity Management Institute, 2010). This is important because sex-specific differences in the prevalence of NAFLD have been reported (Hepatology. 2015 Dec 28. doi: 10.1002/hep.28431. [Epub ahead of print]). Nevertheless, these limitations do not minimize the important contribution of this study. There appears to be an alarming increase in the burden of NAFLD within all the racial and age groups in the U.S. Further, this increase in the incidence and prevalence of NAFLD is especially significant among the younger age groups (less than 45 years). This finding contrasts with other reports of a higher prevalence in older subjects (Presented at AASLD 2015. San Francisco. Abstract #534). If confirmed, this younger cohort of patients with NAFLD can fuel the future burden of liver disease for the next few decades (JAMA. 2012;307:491-7). Given the current lack of an effective treatment for NAFLD, a national strategy to deal with this important and rising cause of chronic liver disease is urgently needed.

Dr. Zobair M. Younossi, MPH, FACG, AGAF, FAASLD, is chairman, department of medicine, Inova Fairfax Hospital; vice president for research, Inova Health System; professor of medicine, VCU-Inova Campus and Beatty Center for Integrated Research, Falls Church, Va. He has consulted for Gilead, AbbVie, Intercept, BMS, and GSK.

FROM CLINICAL GASTROENTEROLOGY AND HEPATOLOGY

Key clinical point: The prevalence of nonalcoholic fatty liver disease has risen substantially since 2003, and will probably keep increasing in the near term.

Major finding: Prevalence among veterans rose about 2.8 times between 2003 and 2011, mirroring trends reported in the general population.

Data source: An analysis of data from 9.78 million Veterans Affairs patients.

Disclosures: The study was partially supported by the Michael E. DeBakey Veterans Affairs Medical Center. The researchers had no disclosures.

Is there an increased risk of GI bleeds with SSRIs?

Yes. Selective serotonin reuptake inhibitors (SSRIs) are likely associated with a moderately increased risk of upper gastrointestinal (UGI) bleeding. Use of a nonsteroidal anti-inflammatory drug (NSAID) in combination with an SSRI appears to amplify the risk (strength of recommendation [SOR]: B, meta-analysis of cohort and case-control studies).

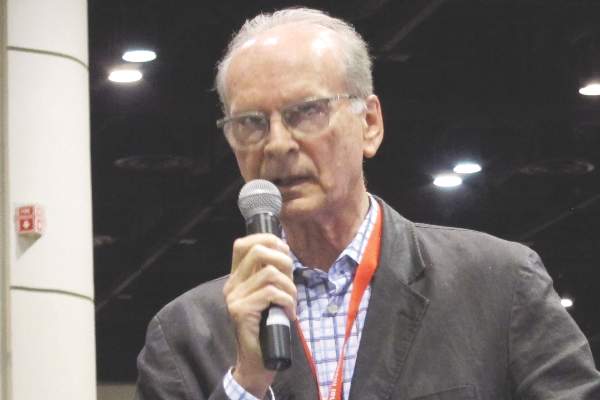

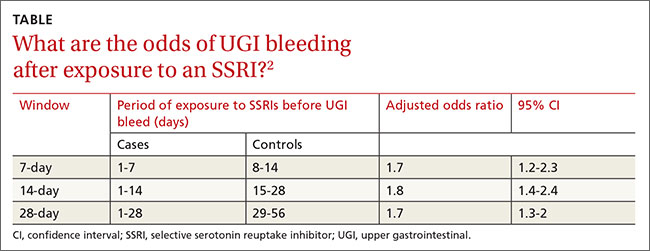

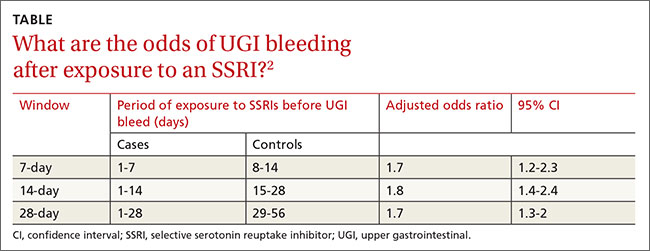

The increased risk from SSRIs occurs within the first 7 to 28 days after exposure (SOR: B, retrospective study).

SSRIs raise bleeding risk; concurrent NSAIDs raise it more

A 2014 systematic review and meta-analysis of 19 case-control and cohort studies with a total of 446,949 patients investigated the risk of UGI bleeding in patients using SSRIs and NSAIDs.1 The studies, which included both inpatients and outpatients, were done in Europe and North America. Patients were at least 16 years old, but pooled demographics were not reported. Investigators compared SSRI use with or without concurrent NSAID use to placebo or no treatment.

SSRI use was associated with an increased risk of UGI bleeding in 15 case-control studies (393,268 patients; odds ratio [OR]=1.7; 95% confidence interval [CI], 1.4-1.9) and 4 cohort studies (53,681 patients; OR=1.7; 95% CI, 1.1-2.5). The simultaneous use of SSRIs and NSAIDs compared to nonuse of both medications was associated with a larger increase in bleeding risk (10 case-control studies, 223,336 patients; OR=4.3; 95% CI, 2.8-6.4).

The meta-analysis is limited by statistically significant heterogeneity in all of the pooled results and high risk of bias in 9 of the case-control studies and all of the cohort studies. There was no evidence of publication bias, however.

Bleeding risk rises 7 to 28 days after SSRI exposure

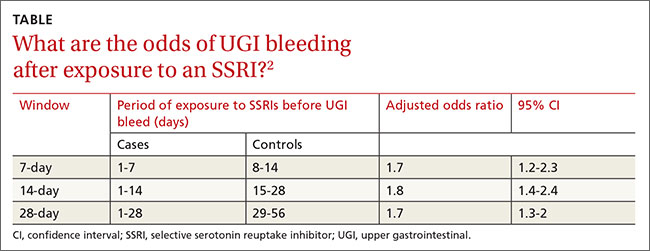

A 2014 case-crossover study of 5377 inpatients in Taiwan with a psychiatric diagnosis evaluated the risk of UGI bleeding within the first 28 days after SSRI exposure (SSRI-mediated inhibition of platelets occurs within the first 7 to 14 days).2 The average age of the patients was 58 years and 75% of the study population was male. Each patient served as his or her own control.

ORs were calculated to compare patients who were exposed to SSRIs only during 7-, 14-, and 21-day windows immediately before a UGI bleed to controls exposed to SSRIs only during the control periods before the 7-, 14-, and 21-day windows. The ORs were adjusted through multivariate analysis to account for 7 potential confounding factors.

SSRI use was associated with an increased risk of UGI bleeding in 7-, 14-, and 21-day windows before the index event (TABLE2). An increased bleeding risk in the 14 days after SSRI initiation was observed in men (OR=2.4; 95% CI, 1.8-3.4) but not women (OR=1.0; 95% CI, 0.6-1.6). Increased bleeding risk in the 14 days after SSRI initiation was also observed in patients younger than 55 years (OR=2.1; 95% CI, 1.5-3.1), patients with a history of upper GI disease (OR=3.1; 95% CI, 1.7-6.0), and patients with no previous exposure to SSRIs (OR=2.6; 95% CI, 1.6-4.2).

This study didn’t account for SSRI indication as a potential confounder, and the study’s inclusion of inpatients, whose illnesses are typically more severe, may limit generalizability.

1. Anglin R, Yuan Y, Moayyedi P, et al. Risk of upper gastrointestinal bleeding with selective serotonin reuptake inhibitors with or without concurrent nonsteroidal anti-inflammatory use: a systematic review and meta-analysis. Am J Gastroenterol. 2014;109:811-819.

2. Wang YP, Chen YT, Tsai C, et al. Short-term use of serotonin reuptake inhibitors and risk of upper gastrointestinal bleeding. Am J Psychiatry. 2014;171:54-61.

Evidence-based answers from the Family Physicians Inquiries Network

A new standard of care for rel/ref MM?

ORLANDO, FL—Adding the oral proteasome inhibitor ixazomib to treatment with lenalidomide and dexamethasone can prolong progression-free survival (PFS) in patients with relapsed and/or refractory multiple myeloma (MM), according to interim results of the phase 3 TOURMALINE-MM1 trial.

It is not yet clear whether the 3-drug combination prolongs overall survival compared with lenalidomide and dexamethasone alone.

However, researchers believe the triplet shows promise and could become a new standard of care for relapsed/refractory MM.

Philippe Moreau, MD, of the University of Nantes in France, discussed this possibility while presenting results from TOURMALINE-MM1 at the 2015 ASH Annual Meeting (abstract 727*). The study was sponsored by Millennium Pharmaceuticals, Inc.

The trial included 722 MM patients enrolled at 147 centers in 26 countries. Patients were randomized to receive ixazomib, lenalidomide, and dexamethasone (IRd, n=360) or placebo, lenalidomide, and dexamethasone (Rd, n=362).

Baseline patient characteristics were similar between the arms. The median age was 66 in both arms (overall range, 30-91), and nearly 60% of patients in both arms were male.

Fifty percent of patients in the IRd arm and 47% in the Rd arm had an ECOG performance status of 0. Forty-three percent and 45%, respectively, had a status of 1, and 5% and 7%, respectively, had a status of 2.

Eighty-seven percent and 88%, respectively, had an ISS stage of I or II. Fifty-five percent of patients in the IRd arm had standard-risk cytogenetics, as did 60% in the Rd arm.

Fifty-nine percent of patients in both arms had received 1 prior line of therapy, and 41% in both arms had 2 or 3 prior lines.

Response and survival

“Ixazomib, when combined with len-dex . . . , was associated with a significant and meaningful improvement in progression-free survival, improved time to progression, and [higher] response rate as well,” Dr Moreau said.

At a median follow-up of about 15 months, the median PFS was 20.6 months in the IRd arm and 14.7 months in the Rd arm. The hazard ratio was 0.742 (P=0.012).
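For readers who want the arithmetic behind these figures, the Python snippet below converts the reported hazard ratio into a relative reduction and computes the difference in median PFS. It assumes the usual reading of a hazard ratio under proportional hazards, where 1 minus the ratio approximates the relative reduction in the rate of progression or death; the variable names are illustrative only.

```python
hazard_ratio = 0.742       # IRd vs Rd, as reported
median_pfs_ird = 20.6      # months, as reported
median_pfs_rd = 14.7       # months, as reported

# Relative reduction in the hazard of progression or death (assumes proportional hazards).
relative_reduction_pct = (1 - hazard_ratio) * 100

# Absolute difference between the two median PFS values.
median_gain_months = median_pfs_ird - median_pfs_rd

print(f"{relative_reduction_pct:.1f}% lower hazard, {median_gain_months:.1f} months longer median PFS")
# prints "25.8% lower hazard, 5.9 months longer median PFS"
```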

Dr Moreau said the PFS benefit was consistent across prespecified subgroups. It was present regardless of age, ISS stage, cytogenetic risk, number of prior therapies, prior exposure to a proteasome inhibitor, prior immunomodulatory therapy, whether the patient was refractory to the last therapy received, and whether the patient had relapsed or refractory disease.

Dr Moreau also pointed out that, in the IRd arm, the median PFS in high-risk patients was similar to that in the overall patient population and in patients with standard-risk cytogenetics. This suggests ixazomib may overcome the negative impact of cytogenetic alterations.