Society of Hospital Medicine’s 2015 Fellows Class Applications Welcome

Don’t wait until the last minute to apply for the Fellow and Senior Fellow in Hospital Medicine designation. Start your application today at www.hospitalmedicine.org/fellows.

The FHM and SFHM designations are open to all hospitalists, not just physicians. Physician assistants, nurse practitioners, and practice administrators are all eligible candidates for Fellow status. All inductees will be recognized at a plenary session at HM15 in National Harbor, Md.

The deadline for the 2015 class of Fellows is Jan. 9, 2015.

Common Coding Mistakes Hospitalists Should Avoid

Medical decision-making (MDM) mistakes are common. Here are the coding and documentation mistakes hospitalists make most often, along with some tips on how to avoid them.

Listing the problem without a plan. Healthcare professionals are able to infer the acuity and severity of a case without superfluous or redundant documentation, but auditors may not have this ability. Adequate documentation for every service date helps to convey patient complexity during a medical record review. Although the problem list may not change dramatically from day to day during a hospitalization, the auditor only reviews the service date in question, not the entire medical record.

Hospitalists should be sure to formulate a complete and accurate description of the patient’s condition with a corresponding plan of care for each encounter. Listing problems without a corresponding plan of care does not corroborate physician management of that problem and could cause a downgrade of complexity. Listing problems with a brief, generalized comment (e.g. “DM, CKD, CHF: Continue current treatment plan”) equally diminishes the complexity and effort put forth by the physician.

Clearly document the plan. The care plan represents problems the physician personally manages, along with those that must also be considered when he or she formulates the management options, even if another physician is primarily managing the problem. For example, the hospitalist can monitor the patient’s diabetic management while the nephrologist oversees the chronic kidney disease (CKD). Since the CKD impacts the hospitalist’s diabetic care plan, the hospitalist may also receive credit for any CKD consideration if the documentation supports a hospitalist-related care plan, or comment about CKD that does not overlap or replicate the nephrologist’s plan. In other words, there must be some “value-added” input by the hospitalist.

Credit is given for the quantity of problems addressed as well as the quality. For inpatient care, an established problem is defined as one in which a care plan has been generated by the physician (or same specialty group practice member) during the current hospitalization. Established problems are less complex than new problems, for which a diagnosis, prognosis, or care plan has not been developed. Severity of the problem also influences complexity. A “worsening” problem is considered more complex than an “improving” problem, since the worsening problem likely requires revisions to the current care plan and, thus, more physician effort. Physician documentation should always:

- Identify all problems managed or addressed during each encounter;

- Identify problems as stable or progressing, when appropriate;

- Indicate differential diagnoses when the problem remains undefined;

- Indicate the management/treatment option(s) for each problem; and

- When the documentation indicates a continuation of current management options (e.g. “continue meds”), note those options somewhere in the progress note for that encounter (e.g. in the medication list).

Considering relevant data. “Data” is organized as pathology/laboratory testing, radiology, and medicine-based diagnostic testing that contributes to diagnosing or managing patient problems. Pertinent orders or results may appear in the medical record, but most of the background interactions and communications involving testing are undetected when reviewing the progress note. To receive credit:

- Specify tests ordered and rationale in the physician’s progress note, or make an entry that refers to another auditor-accessible location for ordered tests and studies; however, this latter option jeopardizes a medical record review due to potential lack of awareness of the need to submit this extraneous information during a payer record request or appeal.

- Document test review by including a brief entry in the progress note (e.g. “elevated glucose levels” or “CXR shows RLL infiltrates”); credit is not given for entries lacking a comment on the findings (e.g. “CXR reviewed”).

- Summarize key points when reviewing old records or obtaining history from someone other than the patient, as necessary; be sure to identify the increased efforts of reviewing the considerable number of old records by stating, “OSH (outside hospital) records reviewed and shows…” or “Records from previous hospitalization(s) reveal….”

- Indicate when images, tracings, or specimens are “personally reviewed,” or the auditor will assume the physician merely reviewed the written report; be sure to include a comment on the findings.

- Summarize any discussions of unexpected or contradictory test results with the physician performing the procedure or diagnostic study.

Data credit may be more substantial during the initial investigative phase of the hospitalization, before diagnoses or treatment options have been confirmed. Routine monitoring of the stabilized patient may not yield as many “points.”

Undervaluing the patient’s complexity. A general lack of understanding of the MDM component of the documentation guidelines often results in physicians undervaluing their services. Some physicians may consider a case “low complexity” simply because of the frequency with which they encounter the case type. The speed with which the care plan is developed should have no bearing on how complex the patient’s condition really is. Hospitalists need to better identify the risk involved for the patient.

Patient risk is categorized as minimal, low, moderate, or high based on pre-assigned items pertaining to the presenting problem, diagnostic procedures ordered, and management options selected. The single highest-rated item detected on the Table of Risk determines the overall patient risk for an encounter [1]. Chronic conditions with exacerbations and invasive procedures carry more patient risk than acute, uncomplicated illnesses or noninvasive procedures. Stable or improving problems are considered “less risky” than progressing problems; conditions that pose a threat to life/bodily function outweigh undiagnosed problems where it is difficult to determine the patient’s prognosis; and medication risk varies with the administration (e.g. oral vs. parenteral), type, and potential for adverse effects. Medication risk for a particular drug stays the same whether the dosage is increased, decreased, or continued without change. Physicians should:

- Provide status for all problems in the plan of care and identify them as stable, worsening, or progressing (mild or severe), when applicable; don’t assume that the auditor can infer this from the documentation details.

- Document all diagnostic or therapeutic procedures considered.

- Identify surgical risk factors involving co-morbid conditions that place the patient at greater risk than the average patient, when appropriate.

- Associate the labs ordered to monitor for medication toxicity with the corresponding medication; don’t assume that the auditor knows which labs are used to check for toxicity.
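The “single highest-rated item” rule for patient risk described above can be sketched in a few lines of Python. This is only an illustration: the function name `overall_risk` and the sample encounter are hypothetical, and the actual items and their ratings come from the Table of Risk in the CMS guidelines, which is not reproduced here.

```python
# Risk levels in ascending order, as named in the article.
RISK_LEVELS = ["minimal", "low", "moderate", "high"]


def overall_risk(item_levels):
    """Return the highest risk level among the items documented for one encounter."""
    levels = list(item_levels)
    if not levels:
        raise ValueError("at least one risk item is required")
    # The position in RISK_LEVELS gives the ranking; an unknown label raises ValueError.
    return max(levels, key=RISK_LEVELS.index)


# Hypothetical encounter: one rating per area of the Table of Risk.
encounter_items = {
    "presenting problem": "moderate",
    "diagnostic procedures ordered": "low",
    "management options selected": "high",
}
print(overall_risk(encounter_items.values()))
```

Because only the single highest item counts, one well-documented high-risk item is enough to set the overall risk for the encounter, which is why the status and procedure details listed above matter.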

Varying levels of complexity. Remember that decision-making is just one of three components in evaluation and management (E&M) services, along with history and exam. MDM is identical in both the 1995 and 1997 guidelines and is rooted in the complexity of the patient’s problem(s) addressed during a given encounter [1,2]. Complexity is categorized as straightforward, low, moderate, or high, and directly correlates to the content of physician documentation.

Each visit level represents a particular level of complexity (see Table 1). Auditors consider only the care plan for a given service date when reviewing MDM. More specifically, the auditor reviews three areas of MDM for each encounter (see Table 2), and the physician receives credit for: a) the number of diagnoses and/or treatment options; b) the amount and/or complexity of data ordered/reviewed; and c) the risk of complications/morbidity/mortality.

To determine MDM complexity, each MDM category is assigned a point level. Complexity correlates to the second-highest MDM category. For example, if the auditor assigns “multiple” diagnoses/treatment options, “minimal” data, and “high” risk, the physician attains moderate complexity decision-making (see Table 3).
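The “second-highest category” rule can be illustrated with a short Python sketch. The label lists below are an assumption based on the commonly used CMS decision-making table, since Tables 2 and 3 are not reproduced here, and the function name `mdm_complexity` is hypothetical.

```python
# Overall MDM complexity levels, lowest to highest, as named in the article.
COMPLEXITY = ["straightforward", "low", "moderate", "high"]

# Assumed labels for each MDM category, lowest to highest. Position i in each
# list is taken to correspond to COMPLEXITY[i].
DIAGNOSES = ["minimal", "limited", "multiple", "extensive"]
DATA = ["minimal", "limited", "moderate", "extensive"]
RISK = ["minimal", "low", "moderate", "high"]


def mdm_complexity(diagnoses, data, risk):
    """Return the complexity that matches the second-highest of the three categories."""
    ranks = sorted([DIAGNOSES.index(diagnoses), DATA.index(data), RISK.index(risk)])
    # With three values sorted in ascending order, the middle one is the second-highest.
    return COMPLEXITY[ranks[1]]


# The article's example: "multiple" diagnoses, "minimal" data, "high" risk.
print(mdm_complexity("multiple", "minimal", "high"))  # moderate
```

In practice this means two of the three categories must meet or exceed a level for the encounter to qualify for it, so strong documentation in a single category cannot raise the overall complexity on its own.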

Carol Pohlig is a billing and coding expert with the University of Pennsylvania Medical Center, Philadelphia. She is also on the faculty of SHM’s inpatient coding course.

References

1. Centers for Medicare and Medicaid Services. 1995 Documentation Guidelines for Evaluation and Management Services. Available at: www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/95Docguidelines.pdf. Accessed July 7, 2014.

2. Centers for Medicare and Medicaid Services. 1997 Documentation Guidelines for Evaluation and Management Services. Available at: http://www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/97Docguidelines.pdf. Accessed July 7, 2014.

3. American Medical Association. Current Procedural Terminology: 2014 Professional Edition. Chicago: American Medical Association; 2013:14-21.

4. Novitas Solutions. Novitas Solutions documentation worksheet. Available at: www.novitas-solutions.com/webcenter/content/conn/UCM_Repository/uuid/dDocName:00004966. Accessed July 7, 2014.

Medical decision-making (MDM) mistakes are common. Here are the coding and documentation mistakes hospitalists make most often, along with some tips on how to avoid them.

Listing the problem without a plan. Healthcare professionals are able to infer the acuity and severity of a case without superfluous or redundant documentation, but auditors may not have this ability. Adequate documentation for every service date helps to convey patient complexity during a medical record review. Although the problem list may not change dramatically from day to day during a hospitalization, the auditor only reviews the service date in question, not the entire medical record.

Hospitalists should be sure to formulate a complete and accurate description of the patient’s condition with an analogous plan of care for each encounter. Listing problems without a corresponding plan of care does not corroborate physician management of that problem and could cause a downgrade of complexity. Listing problems with a brief, generalized comment (e.g. “DM, CKD, CHF: Continue current treatment plan”) equally diminishes the complexity and effort put forth by the physician.

Clearly document the plan. The care plan represents problems the physician personally manages, along with those that must also be considered when he or she formulates the management options, even if another physician is primarily managing the problem. For example, the hospitalist can monitor the patient’s diabetic management while the nephrologist oversees the chronic kidney disease (CKD). Since the CKD impacts the hospitalist’s diabetic care plan, the hospitalist may also receive credit for any CKD consideration if the documentation supports a hospitalist-related care plan, or comment about CKD that does not overlap or replicate the nephrologist’s plan. In other words, there must be some “value-added” input by the hospitalist.

Credit is given for the quantity of problems addressed as well as the quality. For inpatient care, an established problem is defined as one in which a care plan has been generated by the physician (or same specialty group practice member) during the current hospitalization. Established problems are less complex than new problems, for which a diagnosis, prognosis, or care plan has not been developed. Severity of the problem also influences complexity. A “worsening” problem is considered more complex than an “improving” problem, since the worsening problem likely requires revisions to the current care plan and, thus, more physician effort. Physician documentation should always:

- Identify all problems managed or addressed during each encounter;

- Identify problems as stable or progressing, when appropriate;

- Indicate differential diagnoses when the problem remains undefined;

- Indicate the management/treatment option(s) for each problem; and

- Note management options to be continued somewhere in the progress note for that encounter (e.g. medication list) when documentation indicates a continuation of current management options (e.g. “continue meds”).

Considering relevant data. “Data” is organized as pathology/laboratory testing, radiology, and medicine-based diagnostic testing that contributes to diagnosing or managing patient problems. Pertinent orders or results may appear in the medical record, but most of the background interactions and communications involving testing are undetected when reviewing the progress note. To receive credit:

- Specify tests ordered and rationale in the physician’s progress note, or make an entry that refers to another auditor-accessible location for ordered tests and studies; however, this latter option jeopardizes a medical record review due to potential lack of awareness of the need to submit this extraneous information during a payer record request or appeal.

- Document test review by including a brief entry in the progress note (e.g. “elevated glucose levels” or “CXR shows RLL infiltrates”); credit is not given for entries lacking a comment on the findings (e.g. “CXR reviewed”).

- Summarize key points when reviewing old records or obtaining history from someone other than the patient, as necessary; be sure to identify the increased efforts of reviewing the considerable number of old records by stating, “OSH (outside hospital) records reviewed and shows…” or “Records from previous hospitalization(s) reveal….”

- Indicate when images, tracings, or specimens are “personally reviewed,” or the auditor will assume the physician merely reviewed the written report; be sure to include a comment on the findings.

- Summarize any discussions of unexpected or contradictory test results with the physician performing the procedure or diagnostic study.

Data credit may be more substantial during the initial investigative phase of the hospitalization, before diagnoses or treatment options have been confirmed. Routine monitoring of the stabilized patient may not yield as many “points.”

Undervaluing the patient’s complexity. A general lack of understanding of the MDM component of the documentation guidelines often results in physicians undervaluing their services. Some physicians may consider a case “low complexity” simply because of the frequency with which they encounter the case type. The speed with which the care plan is developed should have no bearing on how complex the patient’s condition really is. Hospitalists need to better identify the risk involved for the patient.

Patient risk is categorized as minimal, low, moderate, or high based on pre-assigned items pertaining to the presenting problem, diagnostic procedures ordered, and management options selected. The single highest-rated item detected on the Table of Risk determines the overall patient risk for an encounter.1 Chronic conditions with exacerbations and invasive procedures offer more patient risk than acute, uncomplicated illnesses or noninvasive procedures. Stable or improving problems are considered “less risky” than progressing problems; conditions that pose a threat to life/bodily function outweigh undiagnosed problems where it is difficult to determine the patient’s prognosis; and medication risk varies with the administration (e.g. oral vs. parenteral), type, and potential for adverse effects. Medication risk for a particular drug is invariable whether the dosage is increased, decreased, or continued without change. Physicians should:

- Provide status for all problems in the plan of care and identify them as stable, worsening, or progressing (mild or severe), when applicable; don’t assume that the auditor can infer this from the documentation details.

- Document all diagnostic or therapeutic procedures considered.

- Identify surgical risk factors involving co-morbid conditions that place the patient at greater risk than the average patient, when appropriate.

- Associate the labs ordered to monitor for medication toxicity with the corresponding medication; don’t assume that the auditor knows which labs are used to check for toxicity.

Varying levels of complexity. Remember that decision-making is just one of three components in evaluation and management (E&M) services, along with history and exam. MDM is identical for both the 1995 and 1997 guidelines, rooted in the complexity of the patient’s problem(s) addressed during a given encounter.1,2 Complexity is categorized as straightforward, low, moderate, or high, and directly correlates to the content of physician documentation.

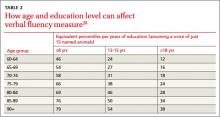

Each visit level represents a particular level of complexity (see Table 1). Auditors only consider the care plan for a given service date when reviewing MDM. More specifically, the auditor reviews three areas of MDM for each encounter (see Table 2), and the physician receives credit for: a) the number of diagnoses and/or treatment options; b) the amount and/or complexity of data ordered/reviewed; c) the risk of complications/morbidity/mortality.

To determine MDM complexity, each MDM category is assigned a point level. Complexity correlates to the second-highest MDM category. For example, if the auditor assigns “multiple” diagnoses/treatment options, “minimal” data, and “high” risk, the physician attains moderate complexity decision-making (see Table 3).

Carol Pohlig is a billing and coding expert with the University of Pennsylvania Medical Center, Philadelphia. She is also on the faculty of SHM’s inpatient coding course.

References

- Centers for Medicare and Medicaid Services. 1995 Documentation Guidelines for Evaluation and Management Services. Available at: www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/95Docguidelines.pdf. Accessed July 7, 2014.

- Centers for Medicare and Medicaid Services. 1997 Documentation Guidelines for Evaluation and Management Services. Available at: http://www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/97Docguidelines.pdf. Accessed July 7, 2014.

- American Medical Association. Current Procedural Terminology: 2014 Professional Edition. Chicago: American Medical Association; 2013:14-21.

- Novitas Solutions. Novitas Solutions documentation worksheet. Available at: www.novitas-solutions.com/webcenter/content/conn/UCM_Repository/uuid/dDocName:00004966. Accessed July 7, 2014.

Society of Hospital Medicine Accepting Pre-Orders for 2014 HM Report

How does your HM group’s productivity stack up against others? How about compensation? These are the questions that can guide major business decisions for a hospital medicine group, and SHM’s State of Hospital Medicine Report, published every two years, can answer them. The 2014 issue is now available for pre-order and delivery in September.

To order, visit www.hospitalmedicine.org/survey.

Society of Hospital Medicine’s New Membership Ambassador Program Perks

Do you know someone who should be a part of the HM movement but hasn’t joined SHM? Now you can both win: Your colleague can enjoy all the benefits of SHM membership, and you can receive credits against your future dues. Plus, you’ll get the chance to win a free registration to HM15.

Now through December 31, all active SHM members can earn dues credits and special recognition for recruiting new physician, allied health, or affiliate members. Active members will be eligible for:

- A $35 credit toward 2015-2016 dues when recruiting one new member;

- A $50 credit toward 2015-2016 dues when recruiting two to four new members;

- A $75 credit toward 2015-2016 dues when recruiting five to nine new members; or

- A $125 credit toward 2015-2016 dues when recruiting 10+ new members.

For EVERY member recruited, individuals will receive one entry into a grand prize drawing to receive complimentary registration to Hospital Medicine 2015 in National Harbor, Md.

For details, visit www.hospitalmedicine.org/membership.

CODE-H Medical Coding Education Program Becomes Interactive

SHM’s coding education program, CODE-H, now has an interactive component through the SHM Learning Portal. CODE-H originally was developed as a series of live and on-demand webinars complemented by online forums; today, CODE-H Interactive brings the same expertise to an interactive platform ideal for new hospitalists learning the nuances of coding, hospital medicine groups assessing the coding skills of their caregivers, or even coders using it as a training tool for conducting audits of hospital medicine groups.

To learn more about CODE-H and CODE-H Interactive, visit www.hospitalmedicine.org/codeh.

Adult Hospital Medicine Boot Camp for Physician Assistants, Nurse Practitioners

Nurse practitioners and physician assistants are a critical part of the hospitalist care team. Together with the American Academy of Physician Assistants, SHM is hosting the annual Adult Hospital Medicine Boot Camp (www.aapa.org/bootcamp) specifically for nurse practitioners (NPs) and physician assistants (PAs).

The four-day program helps PAs and NPs stay up to date on the most common diagnoses, diseases, and treatments for hospitalized patients (27.75 hours Category 1 CME). A pre-course for PAs and NPs new to hospital medicine introduces them to the unique demands of inpatient care (eight hours Category 1 CME).

Adult Hospital Medicine Boot Camp

October 2-5, 2014

The Westin Peachtree Plaza, Atlanta

Hospital Medicine 101

October 1, 2014

The Westin Peachtree Plaza, Atlanta

TeamHealth Hospital Medicine Shares Performance Stats

In February, SHM published the first performance assessment tool for HM groups. Now, HMGs across the country are using the “Key Principles and Characteristics of an Effective Hospital Medicine Group” to better understand their organizations’ strengths and areas needing improvement. Knoxville-based TeamHealth is the first to share its findings with SHM and The Hospitalist.

Before SHM published the assessment tool, there were very few objective attempts to provide guidelines that define an effective HMG. At TeamHealth, we viewed this tool as a way to proactively analyze our HMGs: a starting point, if you will, for measuring our performance against the principles identified in this assessment.

To this end, we allocated an internal analyst to work with our regional leadership teams. We felt it was important to have one person coordinating the analysis in order to ensure consistency with regard to how performance was defined. The analyst, along with the regional medical director and vice president of client services, went through each of the 47 key characteristics and identified the program’s status by evaluating the following statements:

- This characteristic does not apply to our HMG;

- Yes, we fully address the characteristic;

- Yes, we partially address the characteristic; or

- No, we do not materially address the characteristic.

For purposes of scoring, we then assigned a weight to each of the characteristics: three points if “fully addressed”; two points if “partially addressed”; one point if not addressed. We did not find that any of the characteristics fell under the “does not apply to our HMG” category.

A “100% effective” HMG was defined as scoring the highest possible score of 141 (i.e., three points for “fully addressing” each of the 47 characteristics).
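The scoring arithmetic described above can be sketched in a few lines of Python. This is only an illustration, not TeamHealth's actual tooling: the rating labels and the function name `hmg_score` are hypothetical, and expressing a group's total as a percentage of 141 is an assumption drawn from the "100% effective" definition.

```python
# Point weights as described in the article; the label names are illustrative.
POINTS = {"fully": 3, "partially": 2, "not_addressed": 1}
NUM_CHARACTERISTICS = 47
MAX_SCORE = NUM_CHARACTERISTICS * POINTS["fully"]  # 47 x 3 = 141


def hmg_score(ratings):
    """Return (total points, percent of the 141-point maximum) for one HMG.

    `ratings` holds one label per characteristic, 47 in all.
    """
    if len(ratings) != NUM_CHARACTERISTICS:
        raise ValueError(f"expected {NUM_CHARACTERISTICS} ratings")
    total = sum(POINTS[r] for r in ratings)
    return total, 100.0 * total / MAX_SCORE


if __name__ == "__main__":
    # Hypothetical group: 30 fully, 12 partially, 5 not addressed.
    example = ["fully"] * 30 + ["partially"] * 12 + ["not_addressed"] * 5
    total, pct = hmg_score(example)
    print(total, round(pct, 1))  # 119 84.4
```

Because "not addressed" still earns one point, the lowest possible total under this weighting is 47, or about 33% of the maximum.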

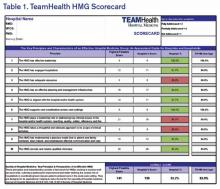

We are currently at the next step in our assessment process. This step involves completion of a scorecard for each individual HMG (see Table 1). Additionally, the individual HMG score will be benchmarked against TeamHealth Hospital Medicine performance overall.

Finally, our regional teams will take the scorecard and meet with their hospital administrators to review the assessment tool, our methodology for completion, and the hospital’s performance.

We fully recognize that some of our hospital partners have measurement standards that differ from those presented by SHM in this assessment; nonetheless, TeamHealth feels the tool in its present state is a significant first step toward quantifying a high-functioning HMG and will ultimately help improve both hospitalist and hospital performance.

Roberta P. Himebaugh is executive vice president of TeamHealth Hospital Medicine.

Are topical nitrates safe and effective for upper extremity tendinopathies?

Topical nitrates provide short-term relief with some side effects, especially headache. Topical nitroglycerin (NTG) patches improve subjective pain scores by about 30% and range of motion over 3 days in patients with acute shoulder tendinopathy (strength of recommendation [SOR]: C, small randomized controlled trial [RCT] with no methodologic flaws).

NTG patches, when combined with tendon rehabilitation, improve subjective pain ratings by about 30% and shoulder strength by about 10% in patients with chronic shoulder tendinopathy over 3 to 6 months, but not in the long term (SOR: C, RCTs with methodologic flaws). They improve pain and strength 15% to 50% for chronic extensor tendinosis of the elbow over a 6-month period (SOR: C, small RCT with methodologic flaws).

NTG patches used without tendon rehabilitation don’t improve pain or strength in chronic lateral epicondylitis over 8 weeks (SOR: C, RCT).

Topical NTG patches commonly produce headaches and rashes (SOR: B, multiple RCTs).

EVIDENCE SUMMARY

A small RCT found that NTG therapy improved short-term pain and joint mobility in patients with acute supraspinatus tendinitis.1 Investigators randomized 10 men and 10 women with acute shoulder tendinitis (fewer than 7 days’ duration) to use either 5-mg NTG patches or placebo patches daily for 3 days. Patients rated pain on a 10-point scale, and investigators measured joint mobility on a 4-point scale.

After 48 hours of treatment, NTG patches significantly reduced pain ratings from baseline (from 7 to 2 points; P<.001), whereas placebo didn’t (6 vs 6 points; P not significant). NTG patches also improved joint mobility from baseline (from 2 points “moderately restricted” to 0.1 points “not restricted”; P<.001), but placebo didn’t (1.2 points “mildly restricted” vs 1.2 points; P not significant). The placebo group had less pain and joint restriction than the NTG group at the start of the study. Two patients reported headache 24 hours after starting treatment.

NTG plus rehabilitation improves chronic shoulder pain, range of motion

A double-blind RCT evaluating NTG patches for 53 patients (57 shoulders) with chronic supraspinatus tendinopathy (shoulder pain lasting longer than 3 months) found that they improved pain, strength, and range of motion at 3 to 6 months.2 Investigators randomized patients to receive one-quarter of a 5-mg 24-hour NTG patch or placebo patch daily and enrolled all patients in a rehabilitation program. They assessed subjective pain (at night and with activity), strength, and external rotation at baseline and at 2, 6, 12, and 24 weeks.

NTG patches improved nighttime pain about 30% (at 12 and 24 weeks), pain with activity about 60% (at 24 weeks), strength about 10% (at 12 and 24 weeks), and range of motion about 20% (at 24 weeks; P<.05 for all comparisons). The placebo group initially had more pain, less strength, and less mobility than the NTG group. Investigators reported no adverse effects.

NTG and rehab improve elbow pain, but with side effects

Another RCT comparing topical NTG patches with placebo in patients with chronic extensor tendinosis of the elbow found that NTG improved most parameters.3 Investigators randomized 86 patients with elbow tendinitis (longer than 3 months) to NTG patches (one-quarter of a 5-mg 24-hour patch) or placebo patches and enrolled all patients in a tendon rehabilitation program. They assessed subjective pain, extensor tendon tenderness, and muscle strength at baseline and at 2, 6, 12, and 24 weeks.

NTG patches improved subjective pain, tendon tenderness, and strength significantly more than placebo at all follow-up points, by 15% to 50% (P<.05 for all comparisons). The study was flawed because the control group started with more pain, tenderness, and weakness than the NTG group. Five patients discontinued NTG because of adverse effects (headache, dermatitis, and facial flushing).

A follow-up study done 5 years after discontinuation of therapy found equal outcomes with NTG and placebo.4 Investigators evaluated, by phone or in person, 58 of the 86 patients in the original study. NTG and placebo therapy produced equivalent reductions in subjective 0 to 4 elbow pain scores over baseline (average pain 2.5 initially, 1.5 at 12 weeks, and 1.0 at 5 years; P<.01 for all comparisons with baseline, no significant difference between nitrates and placebo).

NTG without rehab works no better than placebo

Another RCT that evaluated 3 different doses of NTG patches for 8 weeks in 154 patients with chronic lateral epicondylosis found NTG treatment was no better than placebo for pain or strength.5 Investigators randomized patients with more than 3 months of symptoms to 1 of 3 NTG patch doses (0.72 mg/24 h, 1.44 mg/24 h, or 3.6 mg/24 h) or placebo and evaluated subjective pain (at rest, with activity, and at night), grip strength, and force at baseline and 8 weeks.

The study lacked a formal wrist strengthening rehabilitation program. Patients in the placebo group had lower baseline pain scores than the NTG groups. Seven patients dropped out of the study because of headaches.

RECOMMENDATIONS

We found no authoritative recommendations regarding the use of topical nitrates for upper extremity tendinopathies.

An online reference text doesn’t make a recommendation, but references the studies described previously.6 The authors state that headache is the most common adverse effect of topical nitrates, but it becomes less severe over the course of treatment. They recommend caution in patients with hypotension, pregnancy, or migraines, and those who take diuretics. The authors also note that nitrates are relatively contraindicated in patients with ischemic heart disease, anemia, phosphodiesterase inhibitor therapy (such as sildenafil), angle-closure glaucoma, and allergy to nitrates.

1. Berrazueta JR, Losada A, Poveda J, et al. Successful treatment of shoulder pain syndrome due to supraspinatus tendinitis with transdermal nitroglycerin. A double blind study. Pain. 1996;66:63-67.

2. Paoloni JA, Appleyard RC, Nelson J, et al. Topical glyceryl trinitrate application in the treatment of chronic supraspinatus tendinopathy: a randomized, double-blinded, placebo-controlled clinical trial. Am J Sports Med. 2005;33:806-813.

3. Paoloni JA, Appleyard RC, Nelson J, et al. Topical nitric oxide application in the treatment of chronic extensor tendinosis at the elbow: a randomized, double-blinded, placebo-controlled clinical trial. Am J Sports Med. 2003;31:915-920.

4. McCallum SD, Paoloni JA, Murrell GA, et al. Five-year prospective comparison study of topical glyceryl trinitrate treatment of chronic lateral epicondylosis at the elbow. Br J Sports Med. 2011;45:416-420.

5. Paoloni JA, Murrell GA, Burch RM, et al. Randomised, double-blind, placebo-controlled clinical trial of a new topical glyceryl trinitrate patch for chronic lateral epicondylosis. Br J Sports Med. 2009;43:299-302.

6. Simons SM, Kruse D. Rotator cuff tendinopathy. UpToDate Web site. Available at: www.uptodate.com/contents/rotator-cuff-tendinopathy. Accessed February 19, 2014.

1. Berrazueta JR, Losada A, Poveda J, et al. Successful treatment of shoulder pain syndrome due to supraspinatus tendinitis with transdermal nitroglycerin. A double blind study. Pain. 1996;66:63-67.

2. Paoloni JA, Appleyard RC, Nelson J, et al. Topical glyceryl trinitrate application in the treatment of chronic supraspinatus tendinopathy: a randomized, double-blinded, placebo-controlled clinical trial. Am J Sports Med. 2005;33:806-813.

3. Paoloni JA, Appleyard RC, Nelson J, et al. Topical nitric oxide application in the treatment of chronic extensor tendinosis at the elbow: a randomized, double-blinded, placebo-controlled clinical trial. Am J Sports Med. 2003;31:915-920.

4. McCallum SD, Paoloni JA, Murrell GA, et al. Five-year prospective comparison study of topical glyceryl trinitrate treatment of chronic lateral epicondylosis at the elbow. Br J Sports Med. 2011;45:416-420.

5. Paoloni JA, Murrell GA, Burch RM, et al. Randomised, double-blind, placebo-controlled clinical trial of a new topical glyceryl trinitrate patch for chronic lateral epicondylosis. Br J Sports Med. 2009;43:299-302.

6. Simons SM, Kruse D. Rotator cuff tendinopathy. UpToDate Web site. Available at: www.uptodate.com/contents/rotator-cuff-tendinopathy. Accessed February 19, 2014.


Evidence-based answers from the Family Physicians Inquiries Network

Medical Decision-Making: Avoid These Common Coding & Documentation Mistakes

Medical decision-making (MDM) mistakes are common. Here are the coding and documentation mistakes hospitalists make most often, along with some tips on how to avoid them.

Listing the problem without a plan. Healthcare professionals are able to infer the acuity and severity of a case without superfluous or redundant documentation, but auditors may not have this ability. Adequate documentation for every service date helps to convey patient complexity during a medical record review. Although the problem list may not change dramatically from day to day during a hospitalization, the auditor only reviews the service date in question, not the entire medical record.

Hospitalists should be sure to formulate a complete and accurate description of the patient’s condition with a corresponding plan of care for each encounter. Listing problems without a corresponding plan of care does not corroborate physician management of that problem and could cause a downgrade of complexity. Listing problems with a brief, generalized comment (e.g. “DM, CKD, CHF: Continue current treatment plan”) equally diminishes the complexity and effort put forth by the physician.

Clearly document the plan. The care plan represents problems the physician personally manages, along with those that must also be considered when he or she formulates the management options, even if another physician is primarily managing the problem. For example, the hospitalist can monitor the patient’s diabetic management while the nephrologist oversees the chronic kidney disease (CKD). Since the CKD impacts the hospitalist’s diabetic care plan, the hospitalist may also receive credit for any CKD consideration if the documentation supports a hospitalist-related care plan, or comment about CKD that does not overlap or replicate the nephrologist’s plan. In other words, there must be some “value-added” input by the hospitalist.

Credit is given for the quantity of problems addressed as well as the quality. For inpatient care, an established problem is defined as one in which a care plan has been generated by the physician (or same specialty group practice member) during the current hospitalization. Established problems are less complex than new problems, for which a diagnosis, prognosis, or care plan has not been developed. Severity of the problem also influences complexity. A “worsening” problem is considered more complex than an “improving” problem, since the worsening problem likely requires revisions to the current care plan and, thus, more physician effort. Physician documentation should always:

- Identify all problems managed or addressed during each encounter;

- Identify problems as stable or progressing, when appropriate;

- Indicate differential diagnoses when the problem remains undefined;

- Indicate the management/treatment option(s) for each problem; and

- When documentation indicates a continuation of current management options (e.g. “continue meds”), note the options being continued somewhere in the progress note for that encounter (e.g. medication list).

Considering relevant data. “Data” is organized as pathology/laboratory testing, radiology, and medicine-based diagnostic testing that contributes to diagnosing or managing patient problems. Pertinent orders or results may appear in the medical record, but most of the background interactions and communications involving testing are undetected when reviewing the progress note. To receive credit:

- Specify tests ordered and rationale in the physician’s progress note, or make an entry that refers to another auditor-accessible location for ordered tests and studies; however, the latter option can jeopardize a medical record review, because the need to submit this additional information during a payer record request or appeal may be overlooked.

- Document test review by including a brief entry in the progress note (e.g. “elevated glucose levels” or “CXR shows RLL infiltrates”); credit is not given for entries lacking a comment on the findings (e.g. “CXR reviewed”).

- Summarize key points when reviewing old records or obtaining history from someone other than the patient, as necessary; be sure to identify the increased effort of reviewing a considerable number of old records by stating, “OSH (outside hospital) records reviewed and show…” or “Records from previous hospitalization(s) reveal….”

- Indicate when images, tracings, or specimens are “personally reviewed,” or the auditor will assume the physician merely reviewed the written report; be sure to include a comment on the findings.

- Summarize any discussions of unexpected or contradictory test results with the physician performing the procedure or diagnostic study.

Data credit may be more substantial during the initial investigative phase of the hospitalization, before diagnoses or treatment options have been confirmed. Routine monitoring of the stabilized patient may not yield as many “points.”

Undervaluing the patient’s complexity. A general lack of understanding of the MDM component of the documentation guidelines often results in physicians undervaluing their services. Some physicians may consider a case “low complexity” simply because of the frequency with which they encounter the case type. The speed with which the care plan is developed should have no bearing on how complex the patient’s condition really is. Hospitalists need to better identify the risk involved for the patient.

Patient risk is categorized as minimal, low, moderate, or high based on pre-assigned items pertaining to the presenting problem, diagnostic procedures ordered, and management options selected. The single highest-rated item detected on the Table of Risk determines the overall patient risk for an encounter.1 Chronic conditions with exacerbations and invasive procedures offer more patient risk than acute, uncomplicated illnesses or noninvasive procedures. Stable or improving problems are considered “less risky” than progressing problems; conditions that pose a threat to life/bodily function outweigh undiagnosed problems where it is difficult to determine the patient’s prognosis; and medication risk varies with the administration (e.g. oral vs. parenteral), type, and potential for adverse effects. Medication risk for a particular drug is invariable whether the dosage is increased, decreased, or continued without change. Physicians should:

- Provide status for all problems in the plan of care and identify them as stable, worsening, or progressing (mild or severe), when applicable; don’t assume that the auditor can infer this from the documentation details.

- Document all diagnostic or therapeutic procedures considered.

- Identify surgical risk factors involving co-morbid conditions that place the patient at greater risk than the average patient, when appropriate.

- Associate the labs ordered to monitor for medication toxicity with the corresponding medication; don’t assume that the auditor knows which labs are used to check for toxicity.
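The "single highest-rated item" rule for overall patient risk described above can be sketched in a few lines. This is a minimal illustration, not an official audit tool: the function name `overall_risk` and the sample ratings are hypothetical, and the ratings for individual Table of Risk items would in practice come from the CMS table itself.

```python
# Risk categories named in the text, ordered from lowest to highest.
RISK_ORDER = ["minimal", "low", "moderate", "high"]


def overall_risk(item_ratings):
    """Return the overall patient risk for one encounter.

    item_ratings: risk ratings (strings from RISK_ORDER) assigned to the
    documented presenting problems, diagnostic procedures ordered, and
    management options selected. The single highest-rated item wins.
    """
    if not item_ratings:
        raise ValueError("at least one rated item is required")
    return max(item_ratings, key=RISK_ORDER.index)


# Hypothetical encounter: one low-risk item, one moderate, one high.
print(overall_risk(["low", "moderate", "high"]))
```

Because only the highest-rated item counts, a single well-documented high-risk element is enough to set the encounter's risk level, which is why each problem's status and each medication's monitoring need to be stated explicitly.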

Varying levels of complexity. Remember that decision-making is just one of three components in evaluation and management (E&M) services, along with history and exam. MDM is identical for both the 1995 and 1997 guidelines, rooted in the complexity of the patient’s problem(s) addressed during a given encounter.1,2 Complexity is categorized as straightforward, low, moderate, or high, and directly correlates to the content of physician documentation.

Each visit level represents a particular level of complexity (see Table 1). Auditors only consider the care plan for a given service date when reviewing MDM. More specifically, the auditor reviews three areas of MDM for each encounter (see Table 2), and the physician receives credit for: a) the number of diagnoses and/or treatment options; b) the amount and/or complexity of data ordered/reviewed; c) the risk of complications/morbidity/mortality.

To determine MDM complexity, each MDM category is assigned a point level. Complexity correlates to the second-highest MDM category. For example, if the auditor assigns “multiple” diagnoses/treatment options, “minimal” data, and “high” risk, the physician attains moderate complexity decision-making (see Table 3).
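The "second-highest category" rule can be illustrated with a short sketch. The category labels below follow the wording commonly used in the CMS documentation guidelines and are an assumption here, since Table 3 is not reproduced in this text; the function name `mdm_complexity` is hypothetical.

```python
# Overall MDM complexity levels, lowest to highest.
COMPLEXITY = ["straightforward", "low", "moderate", "high"]

# Assumed labels for each MDM category, mapped to a rank from 0 to 3.
DIAGNOSES = {"minimal": 0, "limited": 1, "multiple": 2, "extensive": 3}
DATA = {"minimal": 0, "limited": 1, "moderate": 2, "extensive": 3}
RISK = {"minimal": 0, "low": 1, "moderate": 2, "high": 3}


def mdm_complexity(diagnoses, data, risk):
    """Return overall MDM complexity as the second-highest of three categories."""
    ranks = sorted([DIAGNOSES[diagnoses], DATA[data], RISK[risk]])
    # With three values sorted ascending, index 1 is the second-highest.
    return COMPLEXITY[ranks[1]]


# The example from the text: "multiple" diagnoses, "minimal" data, "high" risk.
print(mdm_complexity("multiple", "minimal", "high"))  # moderate
```

Taking the middle of the three ranks is equivalent to requiring that two of the three categories meet or exceed the reported complexity level.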

Carol Pohlig is a billing and coding expert with the University of Pennsylvania Medical Center, Philadelphia. She is also on the faculty of SHM’s inpatient coding course.

References

1. Centers for Medicare and Medicaid Services. 1995 Documentation Guidelines for Evaluation and Management Services. Available at: www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/95Docguidelines.pdf. Accessed July 7, 2014.

2. Centers for Medicare and Medicaid Services. 1997 Documentation Guidelines for Evaluation and Management Services. Available at: www.cms.gov/Outreach-and-Education/Medicare-Learning-Network-MLN/MLNEdWebGuide/Downloads/97Docguidelines.pdf. Accessed July 7, 2014.

3. American Medical Association. Current Procedural Terminology: 2014 Professional Edition. Chicago: American Medical Association; 2013:14-21.

4. Novitas Solutions. Novitas Solutions documentation worksheet. Available at: www.novitas-solutions.com/webcenter/content/conn/UCM_Repository/uuid/dDocName:00004966. Accessed July 7, 2014.
